
edge-labeling graph neural network for few-shot learning


Date: 2023-05-03 22:02:29


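"Edge-Labeling Graph Neural Network for Few-shot Learning" describes an edge-labeling graph neural network (EGNN) for few-shot learning. Instead of predicting a label for each node, the network predicts a label for each edge, indicating whether the two connected samples belong to the same class. It targets few-shot problems, where only a handful of labeled examples per class are available and other neural networks tend to struggle, and it aims to improve learning performance and accuracy in that setting.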
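The idea of labeling edges rather than nodes can be illustrated with a minimal NumPy sketch. It is not the paper's implementation: the function names `edge_labels` and `aggregate` are hypothetical, and the aggregation step is a simplified stand-in for a learned update. It only shows how ground-truth edge labels follow from class labels, and how edge values might weight neighbor features.

```python
import numpy as np

def edge_labels(node_labels):
    """Return an N x N 0/1 matrix: 1 where two samples share a class label."""
    y = np.asarray(node_labels)
    return (y[:, None] == y[None, :]).astype(int)

def aggregate(features, edges):
    """Average neighbor features, weighted by row-normalized edge values.

    Simplified illustration only; a real model would learn this update.
    """
    edges = np.asarray(edges, dtype=float)
    weights = edges / edges.sum(axis=1, keepdims=True)
    return weights @ np.asarray(features, dtype=float)

# Three samples: the first two share class 0, the third is class 1.
labels = [0, 0, 1]
E = edge_labels(labels)
X = [[1.0, 0.0], [3.0, 0.0], [0.0, 5.0]]
X_new = aggregate(X, E)
```

Here the first two rows of `X_new` become the mean of the two class-0 samples, while the third sample, which has no same-class neighbor, keeps its own features.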


Related recommendations

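An Analysis of the Edge-Labeling Graph Neural Network Applied to Few-shot Learning

"These notes study the paper 'Edge-Labeling Graph Neural Network for Few-shot Learning', focusing on the mathematical analysis of the model." In the field of few-shot learning, the paper proposes a model called the Edge-Labeling Graph Neural Network...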

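labeling-machine: a brief look at a web labeling tool developed in Python

- **labeling-machine-minimal**: The name suggests that a minimal version of Labeling Machine exists. "Minimal" usually means the version contains only the most basic functionality, which may suit users with strict limits on resource usage, or use in demo or learning environments...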

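Release of the sequence labeling analysis tool sequence-labeling-master

Resource summary: "sequence-labeling-master.zip_WINDOWS_" From the given file information we can extract the following key points: 1. Sequence labeling: a technique commonly used in natural language processing (NLP) that involves...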

Gram-SLD Automatic Self-labeling and Detection for Instance Obje

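In recent years, the remarkable achievements of deep convolutional neural networks (DCNNs) have opened new research directions for instance object detection. However, training a DCNN usually requires a large-scale annotated dataset, and manual annotation is time-consuming and laborious. To address this...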

213401010317-王崇旭-Labeling schemes for weighted dynamic trees-计算机学院英文翻译.zip


Hands-On Neural Network Programming with C#

Neural networks have made a surprise comeback in the last few years and have brought tremendous innovation in the world of artificial intelligence. The goal of this book is to provide C# programmers ...

Citation-Role-labeling

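Citation role labeling. Citation relationships between scientific publications have been used successfully in bibliometrics, information retrieval, and data mining tasks, and citation-based recommendation algorithms are well documented. Although previous studies have examined citation relationships from different angles, most of them share the same assumption, namely that if...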

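excentric-labeling-react

"excentric-labeling-react" is a JavaScript-based open-source project focused on excentric label design in information visualization. Label design is crucial in information visualization because it helps users understand the data and elements in a chart. "Excentric labeling" (Excentric ...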

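data-labeling-system

Data labeling system. Labeled data is a set of samples that have been tagged with one or more labels. A labeling system typically takes a set of unlabeled data and augments each piece of it with informative labels. Once a labeled dataset is available, machine learning models can be applied to the data so that...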

Coronary-labeling


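data-labeling-tool-reactjs

Universal Data Tool. Universal Data Tool is a web/desktop application for editing and annotating images, text, audio, and documents, and for viewing and editing any data defined in an extensible format. Supported data ...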

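Neuronal-labeling-system-based-on-crowdsourcing

Project background. From the perspectives of understanding, protecting, and creating the brain, brain science is generally divided into disciplines such as neurobiology, clinical neuroscience, and computational neuroscience. Brain research is significant for treating brain diseases and for brain-inspired intelligence. As a branch of brain science, brain spatial informatics ...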

Some MATLAB code does not run: Polyphonic-Sound-Event-Detection-with-Weak-Labeling-Datas

This repository contains the code for three experiments in my PhD thesis: sound event detection, with/without ... for the DCASE experiment using default settings `cd ../code/dcase` `python train.py` # Needs to run on

Fluorochrome-labeled monoclonal antibody with characteristic M-shaped spectral peak for optical imaging: Dual-labeling versus mixture of fluorochromes

Dual-labeling of Cetuximab by mixing both fluorochromes before the conjugation step gives spectral results similar to those of mixing of fluorochrome-labeled Cetuximab after the conjugation step (P>...

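dss-plugin-ml-assisted-labeling: Dataiku labeling and active learning solution

ML-assisted labeling plugin. This plugin lets you label text, tabular, image, or audio data efficiently using web apps and active learning recipes. When training a model, not all samples carry the same amount of information. Labeling samples that are very similar to already labeled ones may not ... the model...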

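Scene-Labeling-master (1).zip_Scene Labeling

The archive "Scene-Labeling-master (1).zip" contains in-depth research and practical resources on this technique. The core of scene labeling is converting an image's two-dimensional pixel space into a three-dimensional label space that carries semantic information, a process that involves several key techniques. First, image...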

top-k-pruned-landmark-labeling: an implementation of Top-K PLL (AAAI '15)

$ bin/construct_index sample/example.txt 16 0 sample/index_file #compute an index that answer top 16 shortest distance on an undirected graph specified by sample/example.txt. $ bin/k_distance sample/...

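sequence-labeling-master.zip_WINDOWS_

From the archive "sequence-labeling-master.zip_WINDOWS_" we can infer that it is probably a sequence labeling tool or library designed for Windows. The following discusses the basic concepts and applications of sequence labeling and what the archive may contain. 1. **Sequence labeling...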

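easy-sequence-labeling-box: simplifying sequence labeling tasks in JavaScript

Resource summary: "easy-sequence-labeling-box is a simple plugin written in JavaScript for performing sequence labeling tasks. Sequence labeling is widely used in natural language processing (NLP) and text analysis; it involves assigning a label to each element of a sequence, usually...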
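To make "assigning a label to each element of a sequence" concrete, here is a small Python sketch. It assumes the common BIO tagging scheme (`B-` begins a span, `I-` continues it, `O` is outside any span), which the snippets above do not specify. The helper name `bio_spans` and the sample sentence are hypothetical and unrelated to the tools listed here.

```python
def bio_spans(tokens, tags):
    """Group per-token BIO tags into (type, text) spans."""
    spans = []
    current_tokens, current_type = [], None
    for token, tag in zip(tokens, tags):
        if tag.startswith("B-"):
            # A new span starts; close any span that was open.
            if current_tokens:
                spans.append((current_type, " ".join(current_tokens)))
            current_tokens, current_type = [token], tag[2:]
        elif tag.startswith("I-") and current_type == tag[2:]:
            # Continue the currently open span of the same type.
            current_tokens.append(token)
        else:
            # "O" or an inconsistent "I-" tag: close any open span.
            if current_tokens:
                spans.append((current_type, " ".join(current_tokens)))
            current_tokens, current_type = [], None
    if current_tokens:
        spans.append((current_type, " ".join(current_tokens)))
    return spans

tokens = ["Alan", "Turing", "visited", "Paris"]
tags = ["B-PER", "I-PER", "O", "B-LOC"]
spans = bio_spans(tokens, tags)
```

Each token carries exactly one tag, and spans are recovered by reading the tags left to right.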

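Coronary-labeling: a detailed look at a labeling tool implemented in Python

Resource summary: "Coronary-labeling-master is a project written in Python that implements coronary artery labeling. Coronary artery labeling plays an important role in medical image analysis, especially in the diagnosis and treatment of heart disease." First, we need to understand...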


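Trending

MOOC Engineering Ethics after-class exercise answers (essay + true/false + multiple choice) and final exam answers.docx

MOOC Engineering Ethics course: lecture notes and answers to the after-class multiple-choice, true/false, and essay questions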

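MATLAB simulation of the frequency response of a filter based on the Farrow structure, with a recording of the simulation run

1. Version: MATLAB 2022a, including a screen recording of the simulation; play the recording with Windows Media Player.
2. Field: Farrow filters.
3. Content: MATLAB simulation of the frequency response of a filter based on the Farrow structure.

```matlab
% Compute the frequency response of the Farrow-structure filter
for j = 1:Nfil
    x = (j-1)*xinc + 0.0001;     % avoid evaluating sin(0)/0
    h = C(Np+1,:);               % start from the fitted sub-filter coefficient matrix
    for n = 1:Np
        h = h + x^n*C(Np+1-n,:); % accumulate the sub-filter coefficients
    end
    h = h/sum(h);                % normalize the combined filter coefficients
    H = freqz(h,1,wpi);
    mag(j,:) = abs(H);
end
plot(w,20*log10(abs(H))); grid on;
xlabel('Normalized frequency'); ylabel('Magnitude');
```

4. Note: the current folder shown in MATLAB's left panel must be the folder that contains the program; see the video recording for details.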
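The inner loop above evaluates a polynomial in the fractional delay `x` whose coefficients are the rows of `C`. A minimal NumPy sketch of the same computation follows, using linear interpolation as a toy coefficient matrix. The function names and the example `C` are assumptions for illustration and are not taken from the resource.

```python
import numpy as np

def farrow_taps(C, x):
    """Evaluate h = sum_n x**n * C[Np - n] by Horner's rule.

    C has Np + 1 rows; row 0 multiplies x**Np and the last row is the
    constant term, matching the row order used in the MATLAB loop.
    """
    C = np.asarray(C, dtype=float)
    h = np.zeros(C.shape[1])
    for row in C:
        h = h * x + row
    return h / h.sum()  # normalize to unit DC gain, as the MATLAB code does

def magnitude_response(h, w):
    """Return |H(e^{jw})| for FIR taps h at angular frequencies w (rad/sample)."""
    k = np.arange(len(h))
    return np.abs(np.exp(-1j * np.outer(w, k)) @ h)

# Toy example (assumed): linear interpolation, h(x) = [1 - x, x].
C = [[-1.0, 1.0],   # coefficients of x**1
     [1.0, 0.0]]    # constant term
h = farrow_taps(C, 0.25)
mag = magnitude_response(h, np.array([0.0, np.pi]))
```

For this toy matrix the taps at `x = 0.25` are `[0.75, 0.25]`, so the magnitude is 1 at DC and 0.5 at the Nyquist frequency.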

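Circuit ESD Protection Principles and Design Examples.pdf

Principles and design examples of circuit ESD protection; a good resource and a hardware design reference for learning from one another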

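Master production scheduler: SAP master production scheduling

Master production scheduler

- Compares actual demand with forecast demand and proposes revisions to the forecast and the MPS.
- Converts forecast and order data into the MPS.
- Aligns the MPS with shipment and inventory budgets, marketing plans, and management policies.
- Tracks the use of safety stock for MPS-level products, analyzes the differences between the production quantities of MPS items and FAS consumption, and enters all changes into the MPS file to maintain the MPS.
- Attends MPS meetings, arranges the agenda, anticipates problems, prepares possible solutions, and brings potential conflicts to the table.
- Evaluates proposed MPS revisions.
- Provides and monitors delivery commitments to customers.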

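Information Geometry

Information geometry is a research direction that has emerged in recent years, applied mainly in statistical analysis, control theory, neural networks, quantum mechanics, information theory, and other fields. This book is the English edition and is a classic; reading it requires some English proficiency.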

Latest recommendations

Development board based on the STM32H750VBT6 with a 12-bit AD9226 for signal acquisition and fast Fourier transform (FFT) computation of signal quality; the package includes schematics, debugged source code, and optional PCB files

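Hikvision plugin-free camera web development kit (20200616-20201102163221)

Resource summary: "Hikvision plugin-free development kit"

Key point 1: About the Hikvision brand. Hikvision is a globally known manufacturer and service provider of security surveillance equipment, headquartered in Hangzhou, China. Its products are widely used in public security, intelligent transportation, smart homes, and other fields. They are known for advanced technology, stable and reliable performance, and a good user experience, and hold an important position in the global surveillance equipment market.

Key point 2: Plugin-free technology. Plugin-free technology means that a web page's features, such as playing video, audio, or animation, work without the user installing or running an additional browser plugin. This improves the user experience and removes the tedious plugin installation step; because it avoids the security vulnerabilities that plugins may carry, it also improves system security. Plugin-free technology usually relies on modern web technologies such as HTML5, JavaScript, and WebGL.

Key point 3: Network video surveillance. Network video surveillance connects surveillance cameras over an IP network to enable real-time remote monitoring. Compared with traditional analog surveillance, it offers long transmission distances, simple cabling, remote monitoring, and intelligent analysis. A plugin-free network video surveillance development kit lets developers integrate video surveillance into web pages without relying on browser plugins, which makes viewing and management easier for users.

Key point 4: Camera technology. A camera is a device that converts an optical image into an electronic signal and is widely used in image capture, video communication, security surveillance, and other fields. Modern camera technology includes CCD and CMOS sensors as well as image processing and encoding/compression. As an industry leader, Hikvision offers cameras ranging from HD to 4K and higher resolutions, and continues to innovate in image processing and intelligent analysis.

Key point 5: Using a web development kit. A web development kit usually contains the scripts, interface documentation, APIs, and sample code needed to implement a specific feature. Developers can use these resources to integrate the feature into their own web applications quickly. The "海康web无插件开发包.zip" archive may contain front-end code and API interfaces for plugin-free network video surveillance with Hikvision cameras, allowing developers to display and control video streams and implement related features without installing any plugin.

Key point 6: Compatibility and standardization. Implementing plugin-free technology usually requires following certain technical standards and protocols, such as supporting mainstream web standards and being compatible with multiple browsers. It also has to account for compatibility across operating systems and browsers so that features work properly and the user experience stays consistent.

Key point 7: Security. Compared with traditional plugin technology, plugin-free technology has a clear security advantage. Using fewer external plugins reduces the potential attack surface and the risk of vulnerabilities. The approach is especially favored in security-sensitive areas such as surveillance.

Key point 8: Updating and maintaining the kit. The file name "WEB无插件开发包_20200616_20201102163221" suggests that the kit carries version information and a timestamp, indicating that it is updated and maintained over time. When using such a kit, developers should follow the official release notes and patches and upgrade promptly to get the latest features and security fixes.

In summary, the plugin-free development kit provided by Hikvision is a network video surveillance solution for its camera products. Using modern plugin-free web technology, it gives developers a convenient, secure, and standardized way to integrate network video surveillance.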

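PCNM Spatial Analysis for Beginners: From Basics to Mastery in R

# Abstract This article introduces the PCNM spatial analysis method and its practical application in R. It first presents the theoretical basis and analysis steps of PCNM, giving an in-depth understanding of spatial autocorrelation and the mathematics behind PCNM. It then details the fundamentals of and preparation for spatial data analysis in R, as well as how to carry out PCNM analysis in R and interpret the results...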

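Generate an auto-combat script

Creating an auto-combat game script is usually a customization aimed at a game client or at a specific kind of automation tool such as Roblox Studio or Unity. Such scripts typically exploit logic flaws or APIs inside the game to control the character's actions and simulate player behavior such as moving and attacking monsters. However, this requires a deep understanding of the game's mechanics, and many games have anti-cheat mechanisms, so auto-combat may be treated as cheating and lead to a ban.

Below is a very basic Python script example, assuming a library like PyAutoGUI is used to simulate keyboard input to control the game character:

```python
import pyautogui

# Character and monster positions
player_pos = (0, 0)  # your character's current position
monster
```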

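CarMarker-Animation: a map marker animation and turning library

Resource summary: "CarMarker-Animation is an open-source library that helps developers implement smooth marker animation on Google Maps. With it, a marker can move along a route and turn smoothly along the curves of the road as it moves. This improves the user experience and makes map applications more interactive.

In terms of technical implementation, the CarMarker-Animation library may involve the following points:

1. Map API integration: the library is probably built on the Google Maps API, so developers need experience with that API and should know how to integrate Google Maps into a project.
2. Animation: to achieve smooth animation, developers need to know how to implement CSS or JavaScript animation, including keyframe and transition animation.
3. Route computation: a marker's movement on the map has to follow the actual road network, so developers may need a path planning algorithm, such as Dijkstra's algorithm or A* search, to compute the most suitable route.
4. Path smoothing: computing the route is not enough; the path also needs smoothing so that the marker turns more naturally. This may involve curve fitting, such as Bezier curve fitting.
5. Map interaction design: to make interaction friendlier, developers need to understand user interface and user experience design principles and apply them when developing the animation.
6. Performance optimization: complex animation has to take program performance into account. Developers need to know how to optimize animation performance and reduce stutter to keep the experience smooth.
7. Open-source license compliance: because CarMarker-Animation is an open-source library, developers who use it must comply with its license, use the code appropriately, and follow the contribution guide.

The file name 'CarMarker-Animation-master' indicates that this is the project's main branch, which may contain source files, sample projects, documentation, and other resources. Developers can obtain these by downloading and unpacking the archive, then follow the documentation to install and use the library. It is advisable to read the project's contribution guide and usage notes carefully to ensure the library is integrated and used correctly, and developers can also join the open-source community to help maintain and improve the project."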

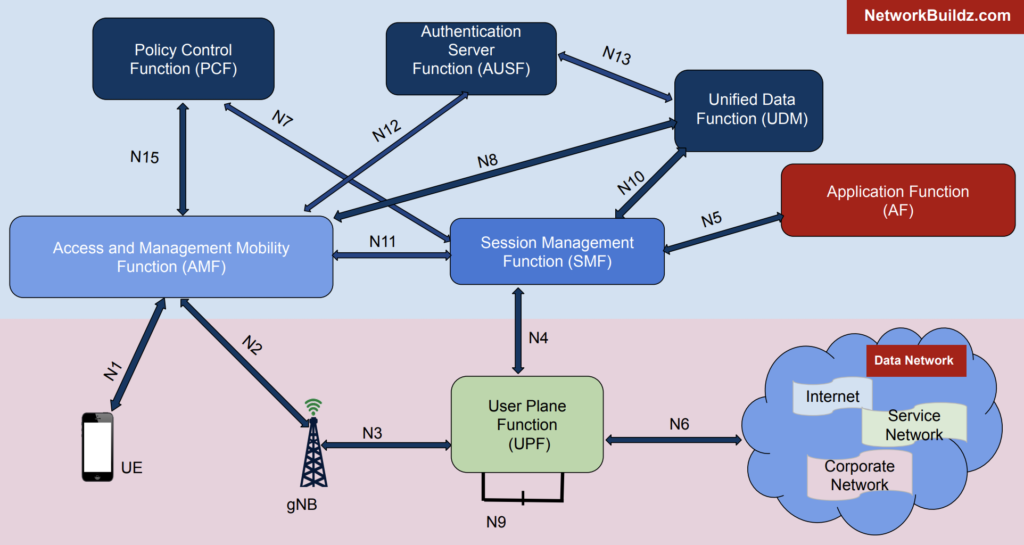

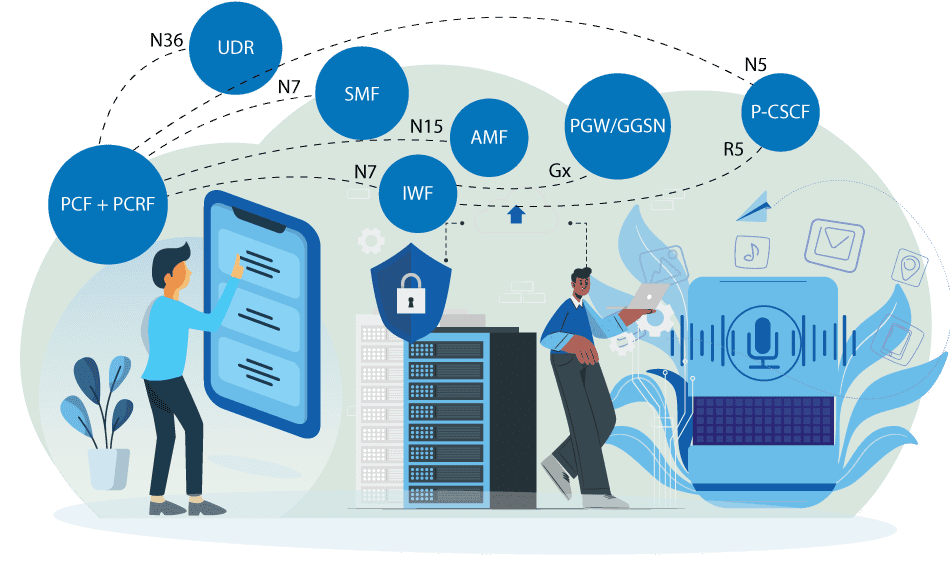

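5G Core Network Element Performance Bottlenecks Revealed

# Abstract With the development and deployment of 5G, performance optimization of the core network has become a focus of the industry. This article first outlines the architecture of the 5G core network and analyzes performance bottlenecks in depth, identifying key performance indicators and methods for locating bottlenecks. Through case studies, it shows common performance problems of core network elements and how they are diagnosed and resolved. It then proposes several optimization strategies, including network design, system configuration tuning, and the application of new technologies. In addition, it explores how security challenges affect core network performance,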

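Connecting an infrared sensor to an STM32 and sending and receiving SMS through a GSM900A for an alarm

To connect an infrared sensor to an STM32 and implement an SMS alarm through a GSM900A module, follow these steps:

### Hardware connections

1. **Infrared sensor connection**:
   - Connect the infrared sensor's VCC to the STM32's 3.3 V or 5 V supply (according to the sensor's specification).
   - Connect GND to the STM32's ground.
2. **GSM900A module connection**:
   - Connect the GSM900A's VCC to an external supply (it typically needs 2 A of current at 3.4 V to 4.4 V).
   - Connect GND to the STM32's ground.
   - Connect the TXD pin to one of the STM32's UART RX pins (for example PA10).
   - Connect the RXD pin to one of the STM32's UART TX pins (for example PA9).
   - If needed, ...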
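Once the UART is wired, sending an alarm SMS usually comes down to writing a short sequence of standard GSM AT commands to the module. The sketch below builds that byte sequence in Python purely for illustration. The function name `sms_alarm_commands` and the phone number are hypothetical, and real firmware on the STM32 would send the same bytes from C and wait for the modem's reply (such as `OK` or the `>` prompt) between writes.

```python
def sms_alarm_commands(phone_number, message):
    """Return the byte strings to write to the modem's UART, in order."""
    return [
        b"AT\r",                                        # check that the modem responds
        b"AT+CMGF=1\r",                                 # select SMS text mode
        f'AT+CMGS="{phone_number}"\r'.encode("ascii"),  # start a message to the recipient
        message.encode("ascii") + b"\x1a",              # message body, ended by Ctrl+Z (0x1A)
    ]

# Hypothetical recipient and alarm text.
commands = sms_alarm_commands("+10000000000", "Infrared sensor triggered")
```

Keeping the command construction separate from the serial I/O makes the sequence easy to inspect before it is ported to the microcontroller.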

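Implementation and Analysis of C Language Code

Resource summary: "The file information provided here includes two key files: main.c and README.txt. The phrase 'c代码-ce shi dai ma' in the title and description may be a typo or a context-specific expression; its real meaning is probably 'C code - test code'. The points that these two files may involve are explained below.

First, the file name 'main.c' most likely denotes a source file written in C. C is a widely used programming language known for being powerful and expressive, and for allowing low-level operations and efficient resource management. It is widely used to develop operating systems, embedded systems, system software, compilers, database systems, and all kinds of application software. A C program usually consists of one or more source files containing function definitions, variable declarations, macro definitions, and so on.

In C, the 'main' function is the program's entry point, where execution begins. A standard C program contains at least one main function, which can take two forms:

1. Taking no arguments: `int main(void) { ... }`
2. Taking command-line arguments: `int main(int argc, char *argv[]) { ... }`

The main function should return an integer; 0 usually indicates that the program ended normally, and a non-zero value indicates an error.

The 'ce shi dai ma' part of 'c代码-ce shi dai ma' may be a transliteration or typo of '测试代码' (test code). In software development, test code is a code fragment or test suite used to verify that a program works correctly. Its purpose is to ensure that each part of the program behaves as expected, and it covers unit tests, integration tests, system tests, and acceptance tests.

Next is the file 'README.txt', usually a text file containing information about the project or software. Although the name suggests a simple note, it may contain the following:

- A short description of the software or project
- Instructions for installing or deploying the software
- Steps for running the program or its tests
- The license and terms of use
- Author information and contact details
- Links or references to further documentation

From README.txt, readers should be able to learn the program's basic concepts, how to compile and run it, and which problems may arise and how to solve them. It may also explain in detail what the test code in main.c does and how to verify it.

In summary, the two files together give an overview of a C project: an actual source file, main.c, and an explanatory document, README.txt. Understanding them helps us understand the project's basic structure, testing strategy, and usage."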

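Analysis of 5G SA Core Network Element Performance Problems

# Abstract With the rapid development of 5G, the standalone (SA) core network has become a key part of the network architecture, and its performance directly affects user experience and quality of service. This article first outlines the basic concepts and performance indicators of the 5G SA core network, then analyzes the network architecture and data flows and the performance testing methods, and discusses cases of performance problems. On that basis, it further studies performance assurance techniques, such as network slicing and the application of artificial intelligence, and proposes a practical guide to performance optimization. Through case analysis, it summarizes optimization strategies, evaluates their effect, and looks ahead to future technical directions, aiming to provide, for 5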

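How to run the yorkurban dataset

Running the yorkurban dataset usually involves the following steps; the details may vary with your research or application goals. A general workflow:

1. **Download the dataset**: First, download the yorkurban dataset from the official website or another trusted dataset source. Datasets are usually provided as a compressed archive.
2. **Extract the files**: After downloading, extract the archive to a directory that is convenient for you to access.
3. **Install the necessary software**: Install the software and libraries required by the dataset's format and your application. For example, if the dataset consists of images, you may need an image processing library such as OpenCV or Pillow.
4. **Load the dataset**: Use a programming language (such as Python) to load the data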
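The extract-and-load steps can be sketched with the Python standard library alone. This is a minimal sketch under stated assumptions: the dataset's actual archive format and directory layout are not given here, so the function name `extract_and_list_images`, the choice of a zip archive, and the list of image suffixes are all hypothetical.

```python
import zipfile
from pathlib import Path

# Assumed image extensions; adjust to whatever the downloaded archive contains.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}

def extract_and_list_images(archive_path, target_dir):
    """Extract a zip archive and return the sorted paths of the image files in it."""
    target = Path(target_dir)
    target.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(archive_path) as archive:
        archive.extractall(target)
    return sorted(
        p for p in target.rglob("*")
        if p.is_file() and p.suffix.lower() in IMAGE_SUFFIXES
    )
```

The returned paths can then be passed to an image library such as OpenCV or Pillow for the actual loading.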