Converting class labels to one-hot encoding (Keras uses one-hot encoding for its outputs): `num_class = 10`, `y_train = keras.utils.to_categorical(y_train, num_class)`, `y_test = keras.utils.to_categorical(y_test, num_class)`, `print(y_train[0])`. How do I view the results, i.e. print `y_train[0]` and `y_test[0]` together with the digit each sample corresponds to?

Posted: 2024-02-14 08:11:45 · Views: 80

The following code converts the class labels to one-hot encoding:

```python
import tensorflow as tf
from tensorflow import keras

# Load the MNIST dataset
(x_train, y_train), (x_test, y_test) = tf.keras.datasets.mnist.load_data()

# Convert the integer labels to one-hot encoding
num_classes = 10
y_train = keras.utils.to_categorical(y_train, num_classes)
y_test = keras.utils.to_categorical(y_test, num_classes)

# Print the converted labels
print("y_train[0]:", y_train[0])
print("y_test[0]:", y_test[0])
```

Output:

```
y_train[0]: [0. 0. 0. 0. 0. 1. 0. 0. 0. 0.]
y_test[0]: [0. 0. 0. 0. 0. 0. 0. 1. 0. 0.]
```

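As the output shows, the labels of both the training set and the test set are now one-hot encoded. The index of the single 1 in each vector gives the class: `y_train[0]` corresponds to the digit 5, and `y_test[0]` corresponds to the digit 7.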
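To print the digit alongside each encoded label, as the question asks, one option is to take the index of the largest entry with `np.argmax`. The sketch below is a minimal, NumPy-only illustration so that it runs without downloading MNIST. The `one_hot` helper and the sample `labels` array are assumptions made for this example, not part of Keras; the helper only mimics what `keras.utils.to_categorical` does for 1-D integer labels.

```python
import numpy as np


def one_hot(labels, num_classes):
    """Hypothetical stand-in for keras.utils.to_categorical on 1-D integer labels."""
    labels = np.asarray(labels, dtype=int)
    # Row i of the identity matrix is the one-hot vector for class i,
    # so indexing the identity matrix by the labels encodes them all at once.
    return np.eye(num_classes, dtype="float32")[labels]


# Illustrative labels (assumed for this sketch, not loaded from the dataset).
labels = np.array([5, 0, 4, 1, 9])
encoded = one_hot(labels, num_classes=10)

# np.argmax returns the position of the single 1, which is the original digit.
print("encoded[0]:", encoded[0], "-> digit:", np.argmax(encoded[0]))

# Decode every row at once by taking the argmax along the class axis.
decoded = np.argmax(encoded, axis=1)
print("decoded:", decoded)
```

With the Keras code above, the same idea would apply directly: `np.argmax(y_train[0])` and `np.argmax(y_test[0])` should recover the digits from the encoded labels.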

掌握HTML/CSS/JS和Node.js的Web应用开发实践

资源摘要信息:"本资源摘要信息旨在详细介绍和解释提供的文件中提及的关键知识点,特别是与Web应用程序开发相关的技术和概念。"

知识点一:两层Web应用程序架构

两层Web应用程序架构通常指的是客户端-服务器架构中的一个简化版本,其中用户界面(UI)和应用程序逻辑位于客户端,而数据存储和业务逻辑位于服务器端。在这种架构中,客户端(通常是一个Web浏览器)通过HTTP请求与服务器端进行通信。服务器端处理请求并返回数据或响应,而客户端负责展示这些信息给用户。

知识点二:HTML/CSS/JavaScript技术栈

在Web开发中,HTML、CSS和JavaScript是构建前端用户界面的核心技术。HTML(超文本标记语言)用于定义网页的结构和内容,CSS(层叠样式表)负责网页的样式和布局,而JavaScript用于实现网页的动态功能和交互性。

知识点三:Node.js技术

Node.js是一个基于Chrome V8引擎的JavaScript运行时环境,它允许开发者使用JavaScript来编写服务器端代码。Node.js是非阻塞的、事件驱动的I/O模型,适合构建高性能和高并发的网络应用。它广泛用于Web应用的后端开发,尤其适合于I/O密集型应用,如在线聊天应用、实时推送服务等。

知识点四:原型开发

原型开发是一种设计方法,用于快速构建一个可交互的模型或样本来展示和测试产品的主要功能。在软件开发中,原型通常用于评估概念的可行性、收集用户反馈,并用作后续迭代的基础。原型开发可以帮助团队和客户理解产品将如何运作,并尽早发现问题。

知识点五:设计探索

设计探索是指在产品设计过程中,通过创新思维和技术手段来探索各种可能性。在Web应用程序开发中,这可能意味着考虑用户界面设计、用户体验(UX)和用户交互(UI)的创新方法。设计探索的目的是创造一个既实用又吸引人的应用程序,可以提供独特的价值和良好的用户体验。

知识点六:评估可用性和有效性

评估可用性和有效性是指在开发过程中,对应用程序的可用性(用户能否容易地完成任务)和有效性(应用程序是否达到了预定目标)进行检查和测试。这通常涉及用户测试、反馈收集和性能评估,以确保最终产品能够满足用户的需求,并在技术上实现预期的功能。

知识点七:HTML/CSS/JavaScript和Node.js的特定部分使用

在Web应用程序开发中,开发者需要熟练掌握HTML、CSS和JavaScript的基础知识,并了解如何将它们与Node.js结合使用。例如,了解如何使用JavaScript的AJAX技术与服务器端进行异步通信,或者如何利用Node.js的Express框架来创建RESTful API等。

知识点八:应用领域的广泛性

本文件提到的“基准要求”中提到,通过两层Web应用程序可以实现多种应用领域,如游戏、物联网(IoT)、组织工具、商务、媒体等。这说明了Web技术的普适性和灵活性,它们可以被应用于构建各种各样的应用程序,满足不同的业务需求和用户场景。

知识点九:创造性界限

在开发Web应用程序时,鼓励开发者和他们的合作伙伴探索创造性界限。这意味着在确保项目目标和功能要求得以满足的同时,也要勇于尝试新的设计思路、技术方案和用户体验方法,从而创造出新颖且技术上有效的解决方案。

知识点十:参考资料和文件结构

文件名称列表中的“a2-shortstack-master”暗示了这是一个与作业2相关的项目文件夹或代码库。通常,在这样的文件夹结构中,可以找到HTML文件、样式表(CSS文件)、JavaScript脚本以及可能包含Node.js应用的服务器端代码。开发者可以使用这些文件来了解项目结构、代码逻辑和如何将各种技术整合在一起以创建一个完整的工作应用程序。

管理建模和仿真的文件

管理Boualem Benatallah引用此版本:布阿利姆·贝纳塔拉。管理建模和仿真。约瑟夫-傅立叶大学-格勒诺布尔第一大学,1996年。法语。NNT:电话:00345357HAL ID:电话:00345357https://theses.hal.science/tel-003453572008年12月9日提交HAL是一个多学科的开放存取档案馆,用于存放和传播科学研究论文,无论它们是否被公开。论文可以来自法国或国外的教学和研究机构,也可以来自公共或私人研究中心。L’archive ouverte pluridisciplinaire

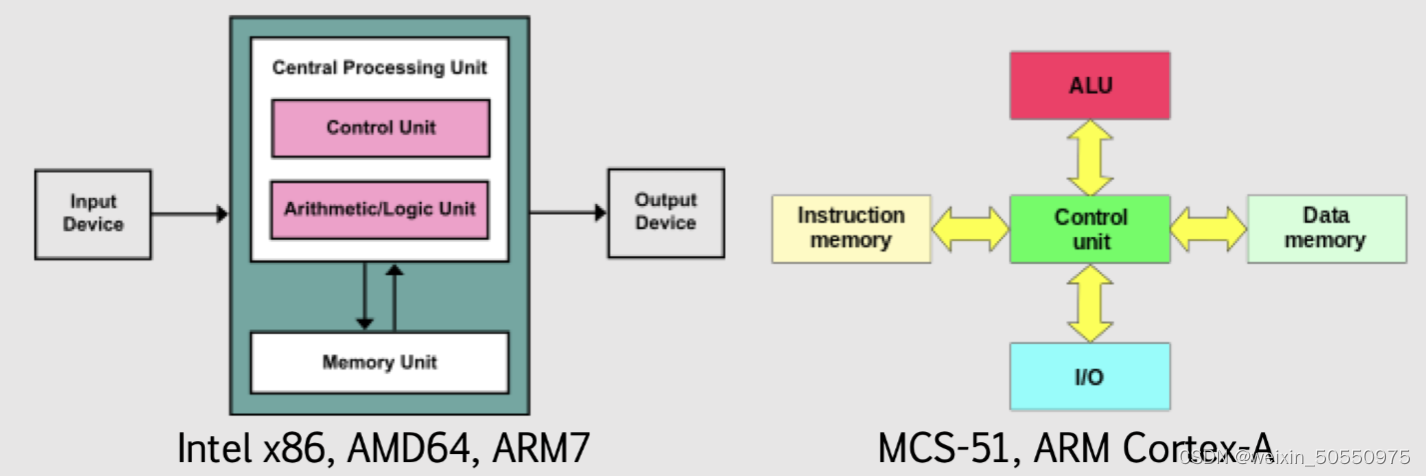

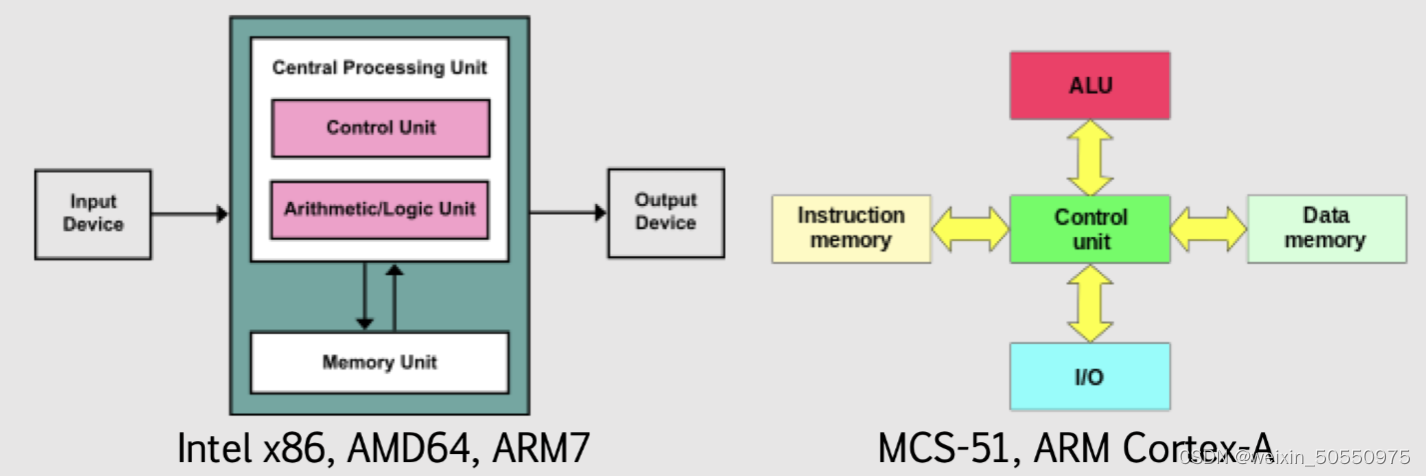

计算机体系结构概述:基础概念与发展趋势

# 摘要

计算机体系结构作为计算机科学的核心领域,经历了从经典模型到现代新发展的演进过程。本文从基本概念出发,详细介绍了冯·诺依曼体系结构、哈佛体系结构以及RISC和CISC体系结构的设计原则和特点。随后,文章探讨了现代计算机体系结构的新发展,包括并行计算体系结构、存储体系结构演进和互连网络的发展。文中还深入分析了前沿技术如量子计算机原理、脑启发式计算以及边缘计算和物联网的结合。最后,文章对计算机体系结构未来的发展趋

int a[][3]={{1,2},{4}}输出这个数组

`int a[][3]={{1,2},{4}}` 定义了一个二维数组,它有两行三列,但是只填充了前两行的数据。第一行是 {1, 2},第二行是 {4}。

当你尝试输出这个数组时,需要注意的是,由于分配的空间是固定的,所以对于只填充了两行的情况,第三列是未初始化的,通常会被默认为0。因此,常规的打印方式会输出类似这样的结果:

```

a[0][0]: 1

a[0][1]: 2

a[1][0]: 4

a[1][1]: (未初始化,可能是0)

```

如果需要展示所有元素,即使是未初始化的部分,可能会因为语言的不同而有不同的显示方式。例如,在C++或Java中,你可以遍历整个数组来输出:

`

勒玛算法研讨会项目:在线商店模拟与Qt界面实现

资源摘要信息: "lerma:算法研讨会项目"

在本节中,我们将深入了解一个名为“lerma:算法研讨会项目”的模拟在线商店项目。该项目涉及多个C++和Qt框架的知识点,包括图形用户界面(GUI)的构建、用户认证、数据存储以及正则表达式的应用。以下是项目中出现的关键知识点和概念。

标题解析:

- lerma: 看似是一个项目或产品的名称,作为算法研讨会的一部分,这个名字可能是项目创建者或组织者的名字,用于标识项目本身。

- 算法研讨会项目: 指示本项目是一个在算法研究会议或研讨会上呈现的项目,可能是为了教学、展示或研究目的。

描述解析:

- 模拟在线商店项目: 项目旨在创建一个在线商店的模拟环境,这涉及到商品展示、购物车、订单处理等常见在线购物功能的模拟实现。

- Qt安装: 项目使用Qt框架进行开发,Qt是一个跨平台的应用程序和用户界面框架,所以第一步是安装和设置Qt开发环境。

- 阶段1: 描述了项目开发的第一阶段,包括使用Qt创建GUI组件和实现用户登录、注册功能。

- 图形组件简介: 对GUI组件的基本介绍,包括QMainWindow、QStackedWidget等。

- QStackedWidget: 用于在多个页面或视图之间切换的组件,类似于标签页。

- QLineEdit: 提供单行文本输入的控件。

- QPushButton: 按钮控件,用于用户交互。

- 创建主要组件以及登录和注册视图: 涉及如何构建GUI中的主要元素和用户交互界面。

- QVBoxLayout和QHBoxLayout: 分别表示垂直和水平布局,用于组织和排列控件。

- QLabel: 显示静态文本或图片的控件。

- QMessageBox: 显示消息框的控件,用于错误提示、警告或其他提示信息。

- 创建User类并将User类型向量添加到MainWindow: 描述了如何在项目中创建用户类,并在主窗口中实例化用户对象集合。

- 登录和注册功能: 功能实现,包括验证电子邮件、用户名和密码。

- 正则表达式的实现: 使用QRegularExpression类来验证输入字段的格式。

- 第二阶段: 描述了项目开发的第二阶段,涉及数据的读写以及用户数据的唯一性验证。

- 从JSON格式文件读取和写入用户: 描述了如何使用Qt解析和生成JSON数据,JSON是一种轻量级的数据交换格式,易于人阅读和编写,同时也易于机器解析和生成。

- 用户名和电子邮件必须唯一: 在数据库设计时,确保用户名和电子邮件字段的唯一性是常见的数据完整性要求。

- 在允许用户登录或注册之前,用户必须选择代表数据库的文件: 用户在进行登录或注册之前需要指定一个包含用户数据的文件,这可能是项目的一种安全或数据持久化机制。

标签解析:

- C++: 标签说明项目使用的编程语言是C++。C++是一种高级编程语言,广泛应用于软件开发领域,特别是在性能要求较高的系统中。

压缩包子文件的文件名称列表:

- lerma-main: 这可能是包含项目主要功能或入口点的源代码文件或模块的名称。通常,这样的文件包含应用程序的主要逻辑和界面。

通过这些信息,可以了解到该项目是一个采用Qt框架和C++语言开发的模拟在线商店应用程序,它不仅涉及基础的GUI设计,还包括用户认证、数据存储、数据验证等后端逻辑。这个项目不仅为开发者提供了一个实践Qt和C++的机会,同时也为理解在线商店运行机制提供了一个良好的模拟环境。

"互动学习:行动中的多样性与论文攻读经历"

多样性她- 事实上SCI NCES你的时间表ECOLEDO C Tora SC和NCESPOUR l’Ingén学习互动,互动学习以行动为中心的强化学习学会互动,互动学习,以行动为中心的强化学习计算机科学博士论文于2021年9月28日在Villeneuve d'Asq公开支持马修·瑟林评审团主席法布里斯·勒菲弗尔阿维尼翁大学教授论文指导奥利维尔·皮耶昆谷歌研究教授:智囊团论文联合主任菲利普·普雷教授,大学。里尔/CRISTAL/因里亚报告员奥利维耶·西格德索邦大学报告员卢多维奇·德诺耶教授,Facebook /索邦大学审查员越南圣迈IMT Atlantic高级讲师邀请弗洛里安·斯特鲁布博士,Deepmind对于那些及时看到自己错误的人...3谢谢你首先,我要感谢我的两位博士生导师Olivier和Philippe。奥利维尔,"站在巨人的肩膀上"这句话对你来说完全有意义了。从科学上讲,你知道在这篇论文的(许多)错误中,你是我可以依

【计算机组成原理精讲】:从零开始深入理解计算机硬件

# 摘要

本文全面介绍了计算机组成的原理、数据的表示与处理、存储系统、中央处理器(CPU)设计以及系统结构与性能优化的现代技术。从基本的数制转换到复杂的高速缓冲存储器设计,再到CPU的流水线技术,文章深入阐述了关键概念和设计要点。此外,本文还探讨了现代计算机体系结构的发展,性能评估标准,以及如何通过软硬件协同设计来优化系统性能。计算机组成原理在云计算、人工智能和物联网等现代技术应用中的角色也被分析,旨在展示其在支撑未来技术进

vue2加载高德地图

Vue 2 中加载高德地图通常需要通过第三方库 Vue-Amap 来集成。首先,你需要安装这个库,可以使用 npm 或者 yarn 安装:

```bash

npm install @vue-amap/core @vue-amap/map

# 或者

yarn add @vue-amap/core @vue-amap/map

```

然后,在 Vue 组件中导入并配置高德地图,例如在 main.js 或者单个组件的 script 部分:

```javascript

import AMap from '@vue-amap/core'

import Map from '@vue-amap/map

Edge语法革新:打造WPF界面新体验

资源摘要信息: "Edge:创建UI(WPF)的新语法"

本文档探讨了Edge框架,它为WPF (Windows Presentation Foundation) 提供了一种新的声明式UI语法。WPF是一个用于构建Windows客户端应用程序的UI框架,它是.NET Framework的一部分。使用Edge框架,开发者可以使用一种更简洁和直观的方式构建UI,这一点从提供的样本代码中可以看出。

知识点详细说明:

1. WPF介绍:

WPF是一个基于.NET框架的UI系统,它允许开发者创建丰富的Windows桌面应用程序。WPF拥有自己的标记语言XAML(eXtensible Application Markup Language),该语言支持UI的声明式描述,与传统的C#代码相结合使用。

2. Edge框架:

Edge是为WPF提供的一个扩展,它带来了新的语法,旨在简化UI的构建过程。从标题和描述来看,Edge允许开发者以更加声明式的风格编写代码,类似React或Vue等现代前端框架。

3. 样本代码分析:

在提供的代码中,我们可以看到以下几个关键点:

- 使用语句:`using SomeNamespace;` 这里指示程序引用了SomeNamespace命名空间中的类或方法。

- Window定义:`Window { ... }` 表示定义了一个WPF窗口,它是构成WPF应用程序的基础。在花括号内,可以设置窗口的各种属性,如标题(Title)和图标(Icon)。

- Grid布局容器:`Grid { ... }` Grid是WPF中的一个布局控件,用于创建复杂的界面布局。在这个例子中,它被用来放置两个列定义(ColumnDefinition),其中一个列宽被明确设置为100,另一个则没有设置宽度属性。

- TextBox控件:`TextBox#tb { ... }` 这里定义了一个文本框控件,并且为其指定一个ID为tb,使其在后续的TextBlock中可以通过`@tb.Text`引用。它还设置了一个Style属性为#st,这表示样式是通过样式ID引用,需要在其他地方定义。

- TextBlock控件:`TextBlock { ... }` TextBlock用于显示不可编辑的文本,它通过`Text: @tb.Text`引用了上面定义的TextBox控件的文本。同时,它还通过`Grid.Column: 1`指定了它应该位于Grid布局的第二列(索引从0开始)。

4. 依赖属性和样式:

在WPF中,控件属性通常是依赖属性,这意味着这些属性的值可以被继承和共享。例如,在样本代码中,TextBlock的Text属性被设置为引用另一个控件的属性,这在WPF中是通过数据绑定实现的。

5. C#语言标签:

标签中的"C#"表示该Edge框架可能在语法上与C#有一定的兼容性或者整合,也有可能是需要开发者使用C#语言编写逻辑代码,并与Edge定义的UI进行交互。

6. 压缩包子文件:

"Edge-master"表明有一个包含Edge框架相关文件的压缩包,其名称为Edge-master。这可能是一个版本控制仓库的名称,如Git中的master分支,表明包含了Edge框架的源代码或文档。

总结:

Edge框架为WPF引入了一种新的声明式UI构建语法,允许开发者通过更简洁的语法来定义复杂的用户界面,同时保持与传统WPF的强大功能和灵活性。这种新语法可能包含对依赖属性、样式、资源字典和XAML的深入整合,从而简化开发者的工作流程并提高开发效率。

关系数据表示学习

关系数据卢多维奇·多斯桑托斯引用此版本:卢多维奇·多斯桑托斯。关系数据的表示学习机器学习[cs.LG]。皮埃尔和玛丽·居里大学-巴黎第六大学,2017年。英语。NNT:2017PA066480。电话:01803188HAL ID:电话:01803188https://theses.hal.science/tel-01803188提交日期:2018年HAL是一个多学科的开放存取档案馆,用于存放和传播科学研究论文,无论它们是否被公开。论文可以来自法国或国外的教学和研究机构,也可以来自公共或私人研究中心。L’archive ouverte pluridisciplinaireUNIVERSITY PIERRE和 MARIE CURIE计算机科学、电信和电子学博士学院(巴黎)巴黎6号计算机科学实验室D八角形T HESIS关系数据表示学习作者:Ludovic DOS SAntos主管:Patrick GALLINARI联合主管:本杰明·P·伊沃瓦斯基为满足计算机科学博士学位的要求而提交的论文评审团成员:先生蒂埃里·A·退休记者先生尤尼斯·B·恩