An Encoder-Decoder View of DNNs: An In-Depth Look at Forward and Backward Propagation


Updated 2024-08-26


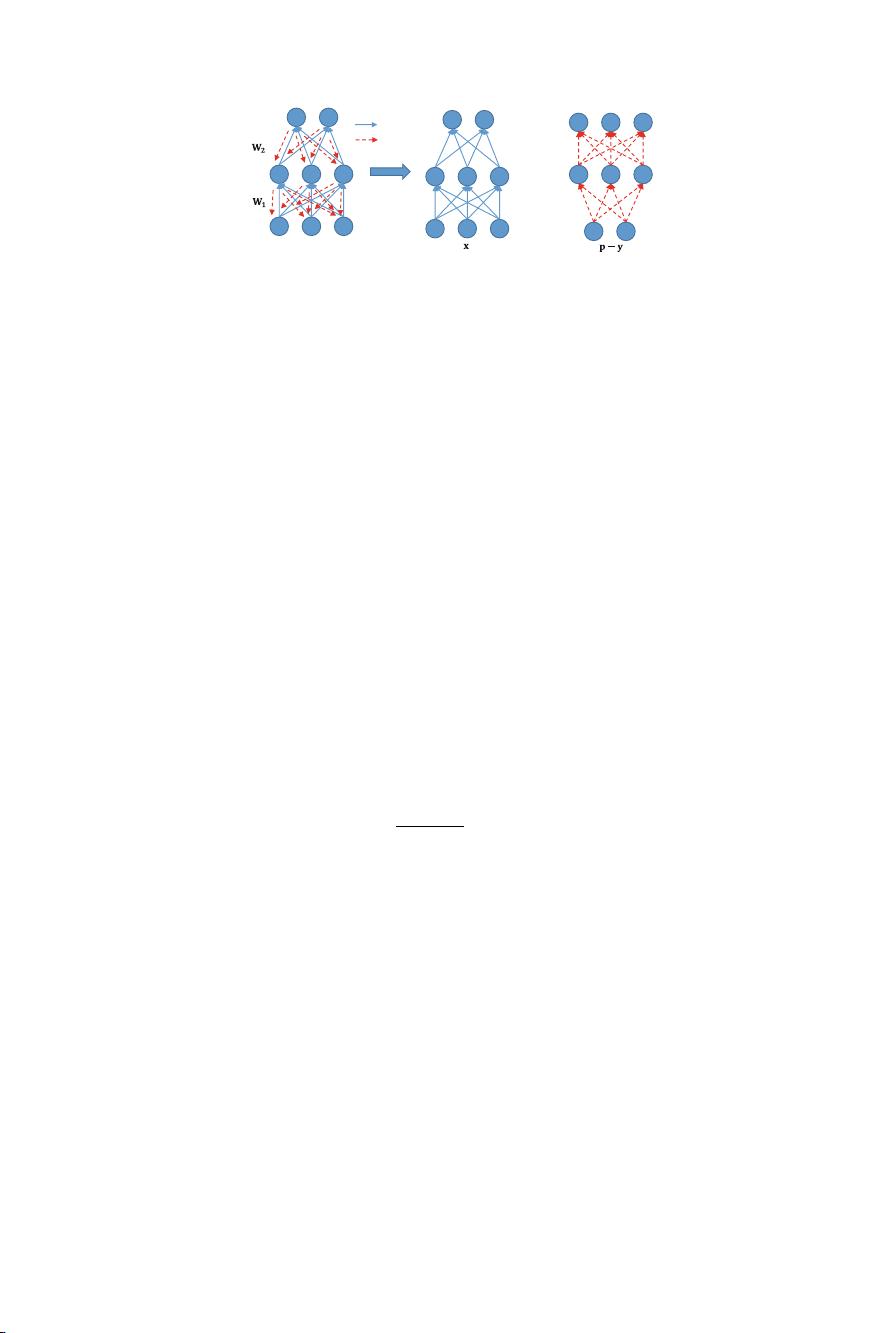

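Deep neural networks (DNNs) are hard to interpret because of their complex structure and the nonlinear relationships between hidden layers. This article offers a closer look at the forward-propagation and back-propagation processes of a DNN by treating them as two separate but related network structures: fp-DNN (forward-propagation DNN) and bp-DNN (back-propagation DNN).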

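fp-DNN is viewed as an encoder. Its core task is to learn discriminative features in the hidden layers, features that are extracted without direct supervision from the target loss function. The paper supports this view by introducing a direct loss function for the hidden layers of fp-DNN, which gives a more intuitive account of what fp-DNN does. With this direct loss, the learning process of fp-DNN becomes more transparent: one can observe how, under the guidance of bp-DNN, it gradually forms meaningful feature representations.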
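The summary does not state the form of the hidden-layer loss, so the following is only a hypothetical illustration of the idea, not the paper's definition. It assumes a one-hidden-layer sigmoid network with squared-error loss, and builds a hidden-layer target `t = h - dL/dh` from the gradient that back-propagation delivers. All function names (`encode`, `decode`, `hidden_target`, `direct_hidden_loss`) are made up for this sketch. With the target held constant, the gradient of the local loss with respect to the encoder weights matches the ordinary back-propagated gradient.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def encode(x, W1, b1):
    """Encoder: forward pass from the input to the hidden features h."""
    return [sigmoid(sum(w * xj for w, xj in zip(row, x)) + b)
            for row, b in zip(W1, b1)]

def decode(h, w2, b2):
    """Decoder: maps hidden features h to a scalar prediction."""
    return sigmoid(sum(w * hi for w, hi in zip(w2, h)) + b2)

def hidden_gradient(h, y, w2, b2):
    """dL/dh for L = 0.5 * (y_hat - y)^2, as delivered by back-propagation."""
    y_hat = decode(h, w2, b2)
    delta_out = (y_hat - y) * y_hat * (1.0 - y_hat)
    return [delta_out * w for w in w2]

def hidden_target(h, grad_h):
    """Assumed construction: target t = h - dL/dh, treated as a constant."""
    return [hi - g for hi, g in zip(h, grad_h)]

def direct_hidden_loss(h, target):
    """Assumed local loss on the hidden layer: 0.5 * ||h - t||^2."""
    return 0.5 * sum((hi - ti) ** 2 for hi, ti in zip(h, target))

def encoder_grad_from_direct_loss(x, h, target):
    """Gradient of the local loss w.r.t. W1: (h - t) * h * (1 - h) * x."""
    return [[(hi - ti) * hi * (1.0 - hi) * xj for xj in x]
            for hi, ti in zip(h, target)]
```

Under these assumptions the encoder can be updated from a loss defined on its own output, while the decoder side supplies the target. Whether the paper's direct loss takes this form is not stated in the summary.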

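bp-DNN, in turn, is interpreted as a decoder: it receives the features encoded by fp-DNN and uses them for the downstream decision or prediction. The back-propagation algorithm is central to bp-DNN. It computes gradients to adjust the weights of each layer so as to minimize the total loss of the network. In doing so, bp-DNN optimizes against the features supplied by fp-DNN to keep the final output accurate.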
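As a reminder of the mechanics being described, here is a minimal sketch of standard back-propagation for a one-hidden-layer network, written in plain Python. It is textbook gradient descent on a squared-error loss, not the paper's fp-DNN/bp-DNN formulation; the network size, learning rate, and the names `forward`, `backward`, and `train` are assumptions chosen for illustration.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(x, W1, b1, w2, b2):
    """Forward pass: input -> hidden features h -> scalar prediction y_hat."""
    h = [sigmoid(sum(w * xj for w, xj in zip(row, x)) + b)
         for row, b in zip(W1, b1)]
    y_hat = sigmoid(sum(w * hi for w, hi in zip(w2, h)) + b2)
    return h, y_hat

def backward(x, y, h, y_hat, w2):
    """Backward pass for L = 0.5 * (y_hat - y)^2 via the chain rule."""
    delta_out = (y_hat - y) * y_hat * (1.0 - y_hat)   # dL/d(output pre-activation)
    grad_w2 = [delta_out * hi for hi in h]
    grad_b2 = delta_out
    grad_h = [delta_out * w for w in w2]              # signal sent back to the hidden layer
    delta_hid = [g * hi * (1.0 - hi) for g, hi in zip(grad_h, h)]
    grad_W1 = [[d * xj for xj in x] for d in delta_hid]
    grad_b1 = list(delta_hid)
    return grad_W1, grad_b1, grad_w2, grad_b2

def train(data, hidden=3, lr=0.5, epochs=2000, seed=0):
    """Per-sample gradient descent; returns the parameters and per-epoch loss."""
    rng = random.Random(seed)
    n_in = len(data[0][0])
    W1 = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(hidden)]
    b1 = [0.0] * hidden
    w2 = [rng.uniform(-1, 1) for _ in range(hidden)]
    b2 = 0.0
    losses = []
    for _ in range(epochs):
        total = 0.0
        for x, y in data:
            h, y_hat = forward(x, W1, b1, w2, b2)
            total += 0.5 * (y_hat - y) ** 2
            gW1, gb1, gw2, gb2 = backward(x, y, h, y_hat, w2)
            for i in range(hidden):
                for j in range(n_in):
                    W1[i][j] -= lr * gW1[i][j]
                b1[i] -= lr * gb1[i]
                w2[i] -= lr * gw2[i]
            b2 -= lr * gb2
        losses.append(total)
    return (W1, b1, w2, b2), losses
```

A call such as `train([([0.0, 0.0], 0.0), ([0.0, 1.0], 1.0), ([1.0, 0.0], 1.0), ([1.0, 1.0], 1.0)])` fits a logical OR. In the article's terms, `grad_h` is the quantity that flows from the output side back into the hidden features.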

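The experimental section shows how bp-DNN can be used to analyze and visualize the learning process of fp-DNN. The results indicate that, under the supervision of bp-DNN, fp-DNN learns useful features that are critical to the overall performance of the network. In this way the authors expose the intrinsic connection and cooperation between the two processes in a DNN, offering a new perspective on how deep learning models work.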

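In summary, by building a theoretical framework around fp-DNN and bp-DNN and validating it experimentally, the paper deepens our understanding of forward and backward propagation in DNNs. It decomposes the learning process into an encoding part and a decoding part, which helps make the model more interpretable. This is relevant to model design, learning efficiency, and fault diagnosis.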
