01 kernel<<<gD,bD>>>(args)

(a) Original Kernel Call

05 allocate arrays for args, gD, and bD

06 store args in arg arrays

07 store gD in gD array, and bD in bD array

08 new gD = sum of gD array across warp/block

09 new bD = max of bD array across warp/block

10 if(threadIdx == launcher thread in warp/block) {

11 kernel_agg<<<new gD,new bD>>>

12 (arg arrays, gD array, bD array)

13 }

(c) Transformed Kernel Call (called in a kernel)

02 __global__ void kernel(params) {

03 kernel body

04 }

(b) Original Kernel

(d) Transformed Kernel (called from a kernel)

14 __global__ void kernel_agg(param arrays, gD array, bD array) {

15 calculate index of parent thread

16 load params from param arrays

17 load actual gridDim/blockDim from gD/bD arrays

18 calculate actual blockIdx

19 if(threadIdx < actual blockDim) {

20 kernel body (with kernel launches transformed and with

21 using actual gridDim/blockDim/blockIdx)

22 }

23 }

Fig. 2. Code Generation for Aggregation at Warp and Block Granularity

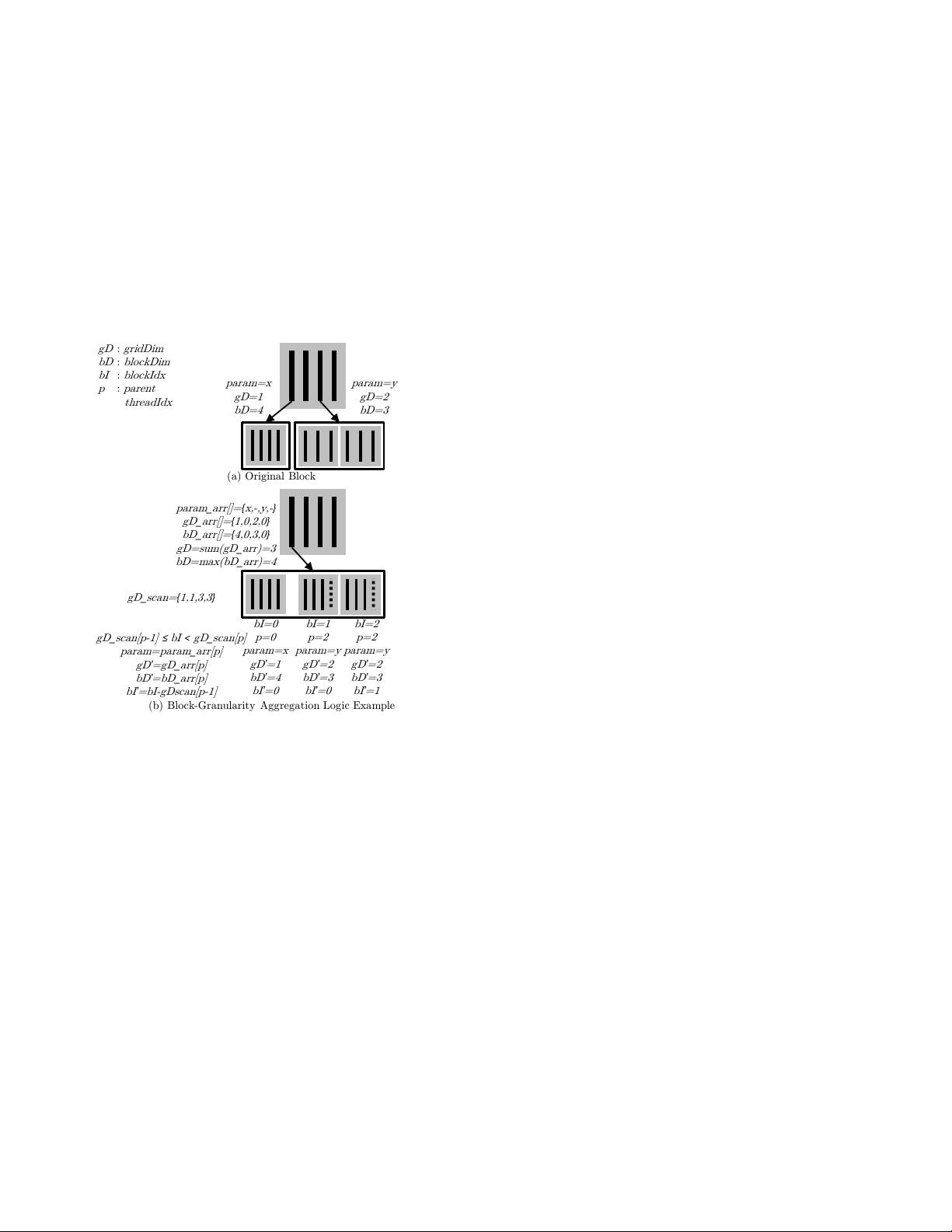

(a) Original Block

param=x

gD=1

bD=4

param=y

gD=2

bD=3

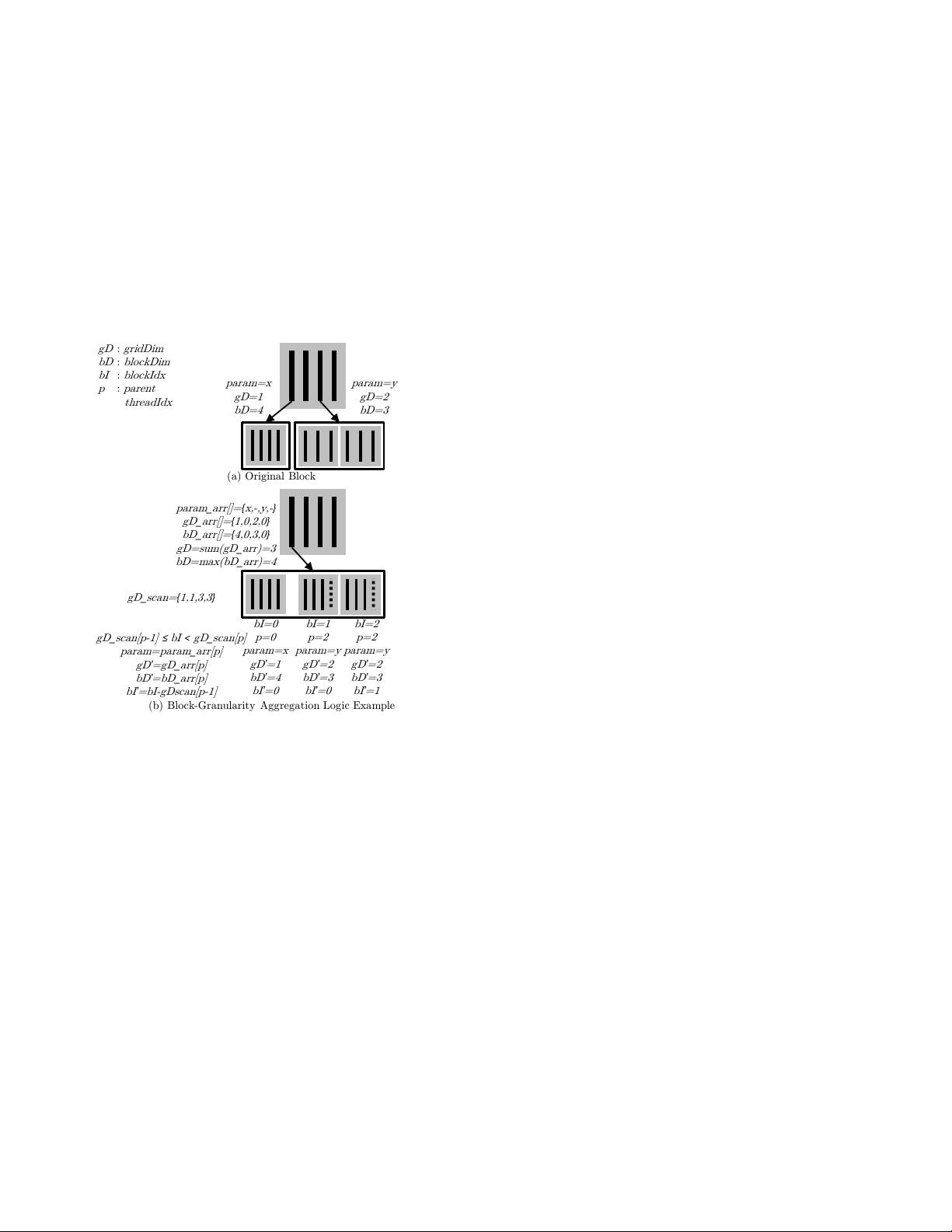

(b) Block-Granularity Aggregation Logic Example

param

_

arr[]=

{

x,-,y,-

}

gD

_

arr[]=

{

1,0,2,0

}

bD

_

arr[]=

{

4,0,3,0

}

gD=sum(gD

_

arr)=3

bD=max(bD

_

arr)=4

gD

_

scan=

{

1,1,3,3

}

gD

_

scan[p-1]

≤

bI

<

gD

_

scan[p]

param=param

_

arr[p]

gD

’

=gD

_

arr[p]

bD

’

=bD

_

arr[p]

bI

’

=bI-gDscan[p-1]

gD

:

gridDim

bD

:

blockDim

bI

:

blockIdx

p

:

parent

threadIdx

bI=0

p=0

param=x

gD

’

=1

bD

’

=4

bI

’

=0

bI=1

p=2

param=y

gD

’

=2

bD

’

=3

bI

’

=0

bI=2

p=2

param=y

gD

’

=2

bD

’

=3

bI

’

=1

Fig. 3. Aggregation Example

uniform across parent threads. Finally, one of the threads in

the warp (or block) launches a single aggregated kernel on

behalf of the others (line 10). For block granularity, a barrier

synchronization is needed before the launch to ensure that

all the threads in the block have completed their preparation

of the arguments and configurations. In the aggregated kernel

launch, the new configurations are used (line 11), arguments

are replaced with argument arrays, and arrays containing the

configurations for each original child are added (line 12).

In addition to transforming kernel launches in all original

kernels, an aggregated version of each original kernel must

also be created. Figure 2(d) shows how the kernel in Fig-

ure 2(b) is transformed into an aggregated version. First, all

parameters are converted into parameter (param) arrays and

configuration arrays are appended to the parameter list (line

14). Next, before the kernel body, logic is added for the

block to identify which thread in the parent warp (or block)

was its original parent (line 15). After identifying its original

parent, the block is then able to load its actual configurations

and parameters (lines 16-18). Threads that were not in the

original child kernel are then masked out (line 19). Finally,

in the kernel body, all kernel launches are transformed into

aggregated kernel launches, and all uses of blockDim and

blockIdx are replaced with the actual values (lines 20-21).

For the block to identify its original parent, it needs to

execute a scan (prefix sum) on the gD (gridDim) array then

search for its position (given by the aggregated blockIdx

value) between the scanned values (using p-ary search [11]). In

practice, since all child blocks need to scan the same gD array,

the scan is instead performed once by the parent before the

array is passed to the aggregated child kernel. Conveniently,

the scan can be performed along with the preparation of the

configuration and parameter arrays in the parent, making it

incur little additional overhead. Since the child kernel needs

both the scan value and the original gD value, it can recover

the original gD value by subtracting adjacent scan elements.

The scan is performed using CUB [12].

The transformed code requires that all threads are active to

perform the scan and max operations. To handle control di-

vergence, a preprocessing pass performs control-flow-to-data-

flow conversion to convert divergent launches to non-divergent

predicated launches so that all threads reach the launch point.

Predication is achieved by multiplying the predicate with the

grid dimension such that launches by inactive threads become

launches of zero blocks.

B. Kernel Granularity

Figure 1(d) illustrates the transformation that takes place

when kernel launch aggregation is applied at kernel granu-

larity. At this granularity, all the original child kernels are

aggregated into a single kernel. Because there is no global

synchronization on the GPU, a single thread cannot be chosen

to launch the kernel on behalf of the others once the others are

ready. Instead, the child kernels are postponed and launched

from the host after the parent kernel terminates. In order

to postpone the kernel launches, this transformation requires

that parent kernels do not explicitly synchronize with their

child kernels, so kernels with explicit synchronization are not

supported at this granularity.

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

信息提交成功

信息提交成功