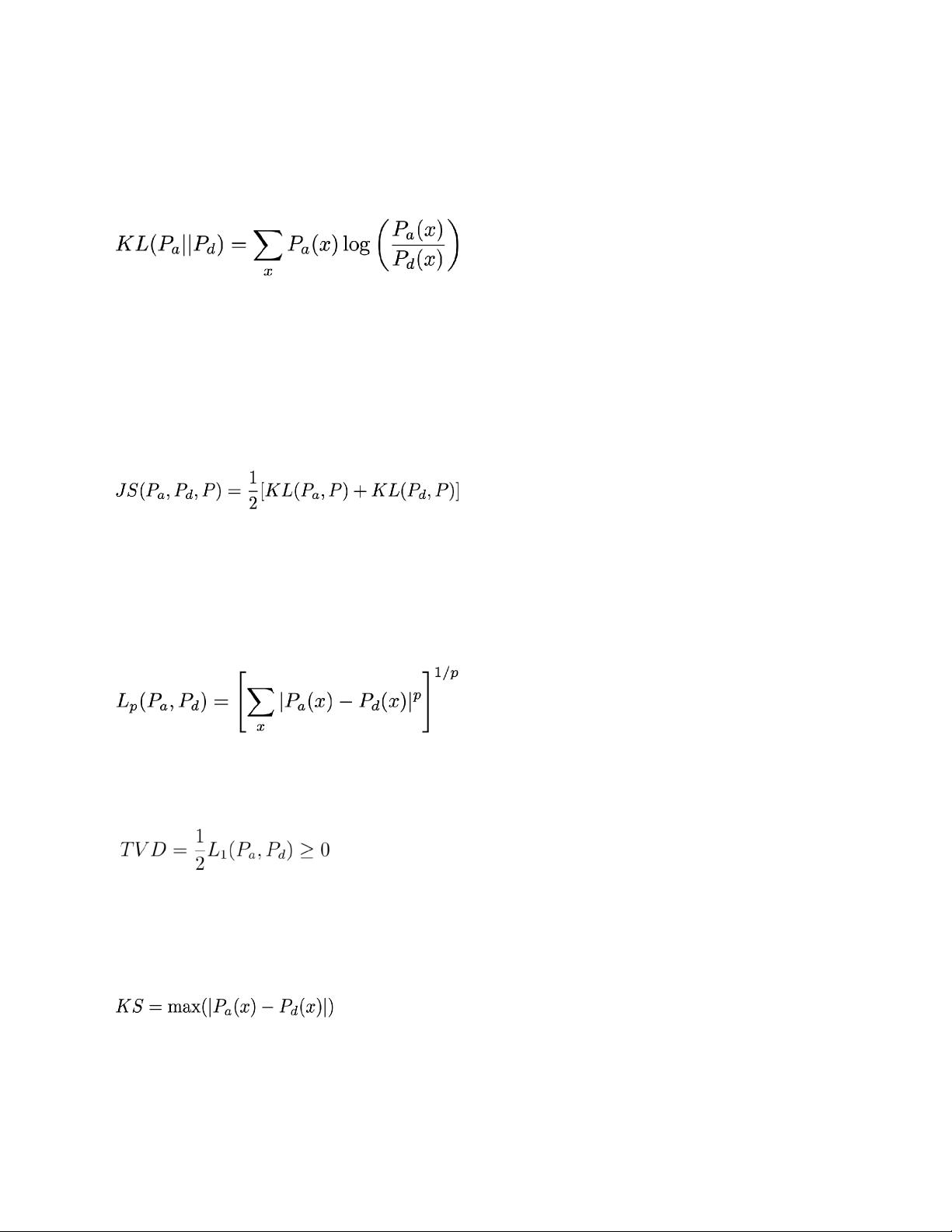

different the distribution $P_a$ is from $P_d$. KL is essentially a measure of relative entropy. For each of the three label categories, denoted by $x$, we compute the log-ratio $\log[P_a(x)/P_d(x)]$, which measures how far apart the two probability distributions are for label $x$. We then take the sum of these log-ratios weighted by the probabilities $P_a(x)$, which gives the KL measure of divergence of class $a$ from class $d$. The measure is also a label imbalance metric and is denoted as

$$KL(P_a \| P_d) = \sum_x P_a(x) \log\frac{P_a(x)}{P_d(x)}$$

This metric is non-negative, $KL \geq 0$. It requires $P_d(x) > 0$ for all $x$; otherwise the metric is not defined. We say that it gives the distance of $P_a$ from $P_d$. This measure is not symmetric (reversing the distributions gives a different result), but it is still a meaningful measure of the difference in label distributions.
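A minimal Python sketch of the KL computation above, assuming each label distribution is given as a list of probabilities in a fixed category order (for example rejected, wait-listed, accepted) and using the natural logarithm. The function name `kl_divergence` and the example numbers are hypothetical, chosen for illustration rather than taken from any library or dataset.

```python
import math


def kl_divergence(p_a, p_d):
    """Return KL(P_a || P_d) = sum_x P_a(x) * ln(P_a(x) / P_d(x))."""
    if len(p_a) != len(p_d):
        raise ValueError("distributions must cover the same label categories")
    if any(q <= 0 for q in p_d):
        # The metric is not defined unless P_d(x) > 0 for every label x.
        raise ValueError("P_d(x) must be > 0 for all x")
    # Labels with P_a(x) == 0 contribute nothing (0 * log 0 is taken as 0).
    return sum(p * math.log(p / q) for p, q in zip(p_a, p_d) if p > 0)


# Hypothetical label distributions over (rejected, wait-listed, accepted).
p_a = [0.2, 0.3, 0.5]
p_d = [0.4, 0.4, 0.2]

print(kl_divergence(p_a, p_d))  # divergence of P_a from P_d
print(kl_divergence(p_d, p_a))  # reversed arguments give a different value
```

The two printed values differ, which illustrates that the measure is not symmetric.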

[4] Jensen-Shannon divergence (JS): Denoting the average of the label distributions of the two classes as $P = \frac{1}{2}(P_a + P_d)$, we can compute the JS divergence as the average of the KL divergence of the label distribution of the first class from $P$ and the KL divergence of the label distribution of the second class from $P$. This extends the KL divergence measure of label imbalance. In contrast to the KL divergence, this metric is symmetric and bounded above. The measure is computed as

$$JS = \frac{1}{2}\left[KL(P_a \| P) + KL(P_d \| P)\right]$$

This metric is non-negative and bounded above by $\ln(2)$, that is, $0 \leq JS \leq \ln(2)$, assuming that the natural logarithm is used in the KL divergence computation. It provides a symmetric measure of the difference between the label distributions $P_a$ and $P_d$.
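The JS computation can be sketched in Python as follows, again assuming list-valued label distributions in a fixed category order and the natural logarithm; the function names and numbers are hypothetical. Because the average distribution $P$ is positive wherever either $P_a$ or $P_d$ is, both KL terms are defined even when one class has a zero probability for some label.

```python
import math


def kl_divergence(p, q):
    """KL(p || q) with the natural log; terms with p(x) == 0 contribute 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def js_divergence(p_a, p_d):
    """Return JS = (KL(P_a || P) + KL(P_d || P)) / 2 with P = (P_a + P_d) / 2."""
    if len(p_a) != len(p_d):
        raise ValueError("distributions must cover the same label categories")
    # The average distribution P is positive wherever P_a or P_d is positive.
    m = [(a + d) / 2 for a, d in zip(p_a, p_d)]
    return 0.5 * (kl_divergence(p_a, m) + kl_divergence(p_d, m))


p_a = [0.2, 0.3, 0.5]  # hypothetical distributions over (rejected, wait-listed, accepted)
p_d = [0.4, 0.4, 0.2]
print(js_divergence(p_a, p_d), js_divergence(p_d, p_a))  # equal: JS is symmetric
print(js_divergence([1.0, 0.0], [0.0, 1.0]), math.log(2))  # disjoint labels reach ln(2)
```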

[5] $L_p$-Norm (LP): Another measure of distance between label distributions is the norm of the direct difference between the distributions. For every label category, e.g., $x \in$ {rejected, wait-listed, accepted} in college admissions, we take the absolute difference of the two probabilities and combine these differences as a $p$-norm, as follows

$$LP(P_a, P_d) = \left(\sum_x |P_a(x) - P_d(x)|^p\right)^{1/p}$$

This metric is non-negative, $LP \geq 0$. In this example, the sum has three terms, one per label category.
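A minimal Python sketch of the LP metric, assuming list-valued label distributions in a fixed category order and $p \geq 1$. The function name `lp_norm`, its default `p=2`, and the example numbers are hypothetical choices for illustration.

```python
def lp_norm(p_a, p_d, p=2):
    """Return LP(P_a, P_d) = (sum_x |P_a(x) - P_d(x)|^p)^(1/p)."""
    if p < 1:
        raise ValueError("p must be >= 1 for LP to be a norm")
    if len(p_a) != len(p_d):
        raise ValueError("distributions must cover the same label categories")
    # One term per label category: three terms for (rejected, wait-listed, accepted).
    return sum(abs(a - d) ** p for a, d in zip(p_a, p_d)) ** (1 / p)


p_a = [0.2, 0.3, 0.5]  # hypothetical label distributions
p_d = [0.4, 0.4, 0.2]
print(lp_norm(p_a, p_d, p=1))  # sum of absolute differences
print(lp_norm(p_a, p_d, p=2))  # Euclidean distance between the distributions
```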

[6] Total variation distance (TVD): This is half the $L_1$-norm of the difference between the probability distribution of labels of the first class and the probability distribution of labels of the second class:

$$TVD(P_a, P_d) = \frac{1}{2}\sum_x |P_a(x) - P_d(x)|$$

This metric is non-negative and bounded above by 1, that is, $0 \leq TVD \leq 1$.

It is therefore half the LP metric with $p = 1$.
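As a minimal Python sketch, again assuming list-valued label distributions in a fixed category order (the function name and numbers are hypothetical), TVD is half the sum of the absolute label-wise differences:

```python
def total_variation_distance(p_a, p_d):
    """Return TVD(P_a, P_d) = 0.5 * sum_x |P_a(x) - P_d(x)|, half the L1 norm."""
    if len(p_a) != len(p_d):
        raise ValueError("distributions must cover the same label categories")
    return 0.5 * sum(abs(a - d) for a, d in zip(p_a, p_d))


p_a = [0.2, 0.3, 0.5]  # hypothetical label distributions
p_d = [0.4, 0.4, 0.2]
print(total_variation_distance(p_a, p_d))  # half of the L1 distance
print(total_variation_distance([1.0, 0.0], [0.0, 1.0]))  # disjoint labels give the maximum, 1
```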

[7] Kolmogorov-Smirnov (KS), two-sample approximated version: This metric evaluates the KS statistical test between the probability distribution of labels of the first class and the probability distribution of labels of the second class. It indicates whether there is a large divergence in any one of the labels across classes, and it complements the other measures by zeroing in on the most imbalanced label.

This statistic is in the range [0, 1].
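A minimal Python sketch of this statistic, assuming that the approximated two-sample version reduces to the largest absolute difference between the two label distributions over all labels $x$, i.e. $\max_x |P_a(x) - P_d(x)|$, which matches the description of zeroing in on the most imbalanced label. The function name, the `LABELS` order, and the numbers are hypothetical, and no p-value for the KS test is computed.

```python
LABELS = ["rejected", "wait-listed", "accepted"]  # hypothetical category order


def ks_label_statistic(p_a, p_d):
    """Return (max_x |P_a(x) - P_d(x)|, index of the label attaining the maximum)."""
    if len(p_a) != len(p_d):
        raise ValueError("distributions must cover the same label categories")
    gaps = [abs(a - d) for a, d in zip(p_a, p_d)]
    worst = max(range(len(gaps)), key=gaps.__getitem__)
    return gaps[worst], worst


stat, idx = ks_label_statistic([0.2, 0.3, 0.5], [0.4, 0.4, 0.2])
print(stat, LABELS[idx])  # size of the largest gap and the most imbalanced label
```

Returning the index alongside the statistic shows which label drives the imbalance, which is the information this measure adds to the aggregate measures above.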

[8] Conditional Demographic Disparity in Labels (CDDL): This metric examines the disparity of outcomes (labels) between two classes, 1 and 2, but it also examines this disparity within subgroups by stratifying the data using a “group” variable. The metric examines whether the second class has a bigger proportion of the rejected outcomes than the proportion of accepted outcomes for the same class. For example, in the case of college admissions, if class 2