This article has been accepted for inclusion in a future issue of this journal. Content is final as presented, with the exception of pagination.

4 IEEE/ACM TRANSACTIONS ON NETWORKING

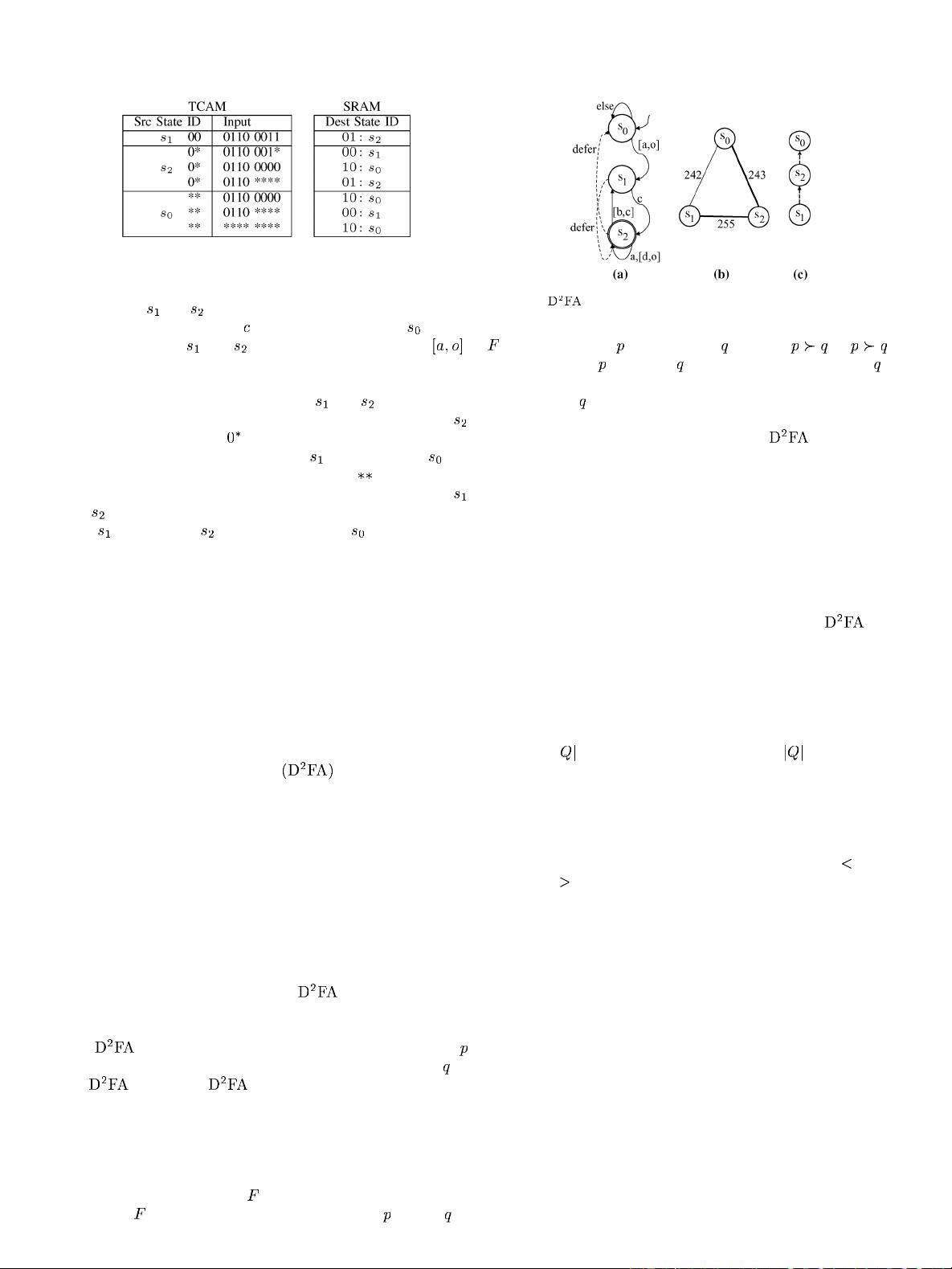

Fig. 2. TCAM table w ith shadow encoding.

source states and have the same destination state except for

the transitions on character

. L ikewise, source state differs

from source states

and only in the character range .

This implies there is a lot of state redundancy. The table in Fig. 2

shows how we can exploit state redundancy to further reduce re-

quired TCAM space. First, since states

and aremoresim-

ilar, we give them the state IDs 00 and 0 1, respectively. State

uses the ternary code of in the state ID fiel d of its TCAM

entries to share transitions with state

. We give state the

state ID of 10, and i t uses the ternary code of

in the state ID

field of its TCAM entries to share transitions with both states

and . Second, we order the state tables in the TCAM so that

state

is first, state is second, and state is last. This fa-

cilitates the shar ing of transitions among different states where

earlier states have incomplete tables deferring some transitions

to later tables.

In the rest of this section, we solve the following three prob-

lems in order to implem e nt shadow encoding: 1) Find the best

order of the state tables in the TCAM (any order is allowed).

2) Choose binary IDs and ternary codes for each state given the

state table order. 3) Identify entries to remove from each state

table.

Our shadow encoding techniqu e builds upon prior work with

default tran s itions [3], [5]–[7] b y exploiting the same state r e-

dundancy observation and using their concepts of default tran-

sitions and Delayed input DFAs

. However, our final

technical solutions are different because we work w ith TCAM,

whereas prior techniques work w ith RAM. For example, t he

concept of a ternary state code has no meaning when working

with RAM. The key advantage of shadow enco ding in TCAM

over prior default transition work is speed. Shado w encoding in

TCAM incurs no delay, whereas prior default transition tech-

niques incur significant delay because a DFA may have to tra-

verse multiple default transitions before con s um ing an input

character.

2) Determining Table Order: We first describe how we com-

pute the order of tables within the TCAM. We use some con-

cepts such as default transitions and

that were originally

definedbyKumaret al. [5] and subs equen tly refined in [3], [6],

and [7].

A

is a DFA with default transitions where each state

can have at most one default transition to one other state in

the

.Inalegal , the directed graph consisting of

only default transitions must be acyclic; we call this graph a

deferment forest. It is a forest rather than a tree since mo re than

one node may not have a default transition. We call a tree in a

deferment forest a deferment tree.

We determine the order of state tab les in TCAM by con-

structing a deferment forest

and then using the partial order

defined by

. If there is a directed path from state to stat e in

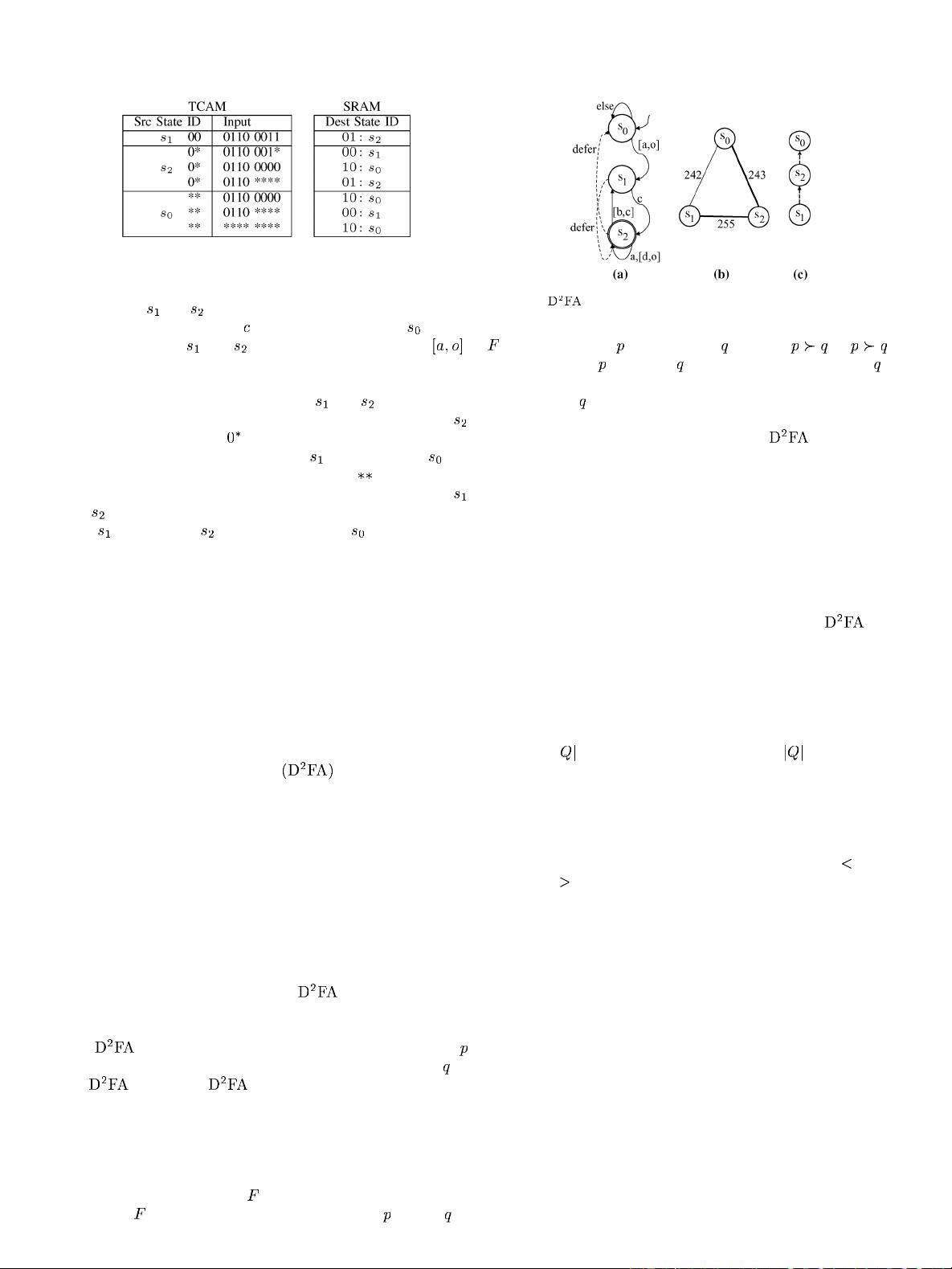

Fig. 3. (a) , (b) SRG, and (c) deferm ent tree of the DFA in Fig. 1.

, we say that state defers to state , d enoted .If ,

we say that state

is in state ’s shadow. Specifically, state ’s

transition table must be placed after the transition tables of all

states in state

’s shadow.

Our algorithm to compute a deferment forest that minimizes

the TCAM representation o f the resu lti ng

builds upon

algorithms from prior work [3], [5]–[7], but there are several

key differences. First, u nlike prior work, we do not pay a speed

penalty for long default transition paths. Thus, we achieve better

transition sharing than prior work. Second, t o maximize the po-

tential gains f ro m our variable striding technique described in

Section V and table consolidation, we choose states that have

lots of self-loop s to be the roots of our deferment trees. Prior

work has typically chosen roots in order to minimize the dis-

tance from a leaf node to a root, though Becchi and Crowley

do consider related criteria w hen constructing their

[3].

Third, we explicitly ig nor e tra nsit ion s har ing bet w een st ates th at

have few transitions in common. This has been done implicitly

in the past, but we show how doing so leads to better results

with table consolidation.

Our algorithm consists of four steps. First, we construct a

space reduction graph (SRG) [5] f ro m a given DFA. Given a

DFA with

states, an SRG is a clique with vertices each

representing a distinct state. The weight of each edge is the

number of comm on outgoing transitions between the two con-

nected states. Second, w e trim away edges with small weight

from the SRG. In our experim ents, we use a cutoff of 10. This

pruning is effective because the distribution of edge weights in

our experiments is bim odal: usually either very small (

10) or

very large (

180). Using these low weight edges as default tran-

sitions leads to m ore TCAM entries and reduces the number

of deferment trees which hinders our table consolidation tech-

nique (Section IV). Third, we compute a deferme nt forest b y

running Kruskal’s algorithm to find a maximum weight span-

ning forest. Fourth, for each deferment tree, we pick the state

that has largest number of transiti ons going back to itself as the

root. Fig. 3(b) and (c) shows the SRG and the defermen t tree,

respectively, for the DFA in Fig. 1.

In most deferment trees, m ore than 128 (i.e., half) of the root

state’s outgoing transitions lead back to the r oot state; we call

such a s tate a self-looping state. Based on the pigeonhole prin-

ciple and the observed bimodal distribution, each deferment tree

typically has exactly one self-looping state, and it is the root

state. We choose self-looping states as ro ots to improve the ef-

fectiveness of variable striding, which we describe in Section V.

When we apply Kruskal’s algorithm, we use a tie-breaking

strategy because many edges have the same weight. To have

most deferment trees centered around a self-looping state, we