Published as a conference paper at ICLR 2019

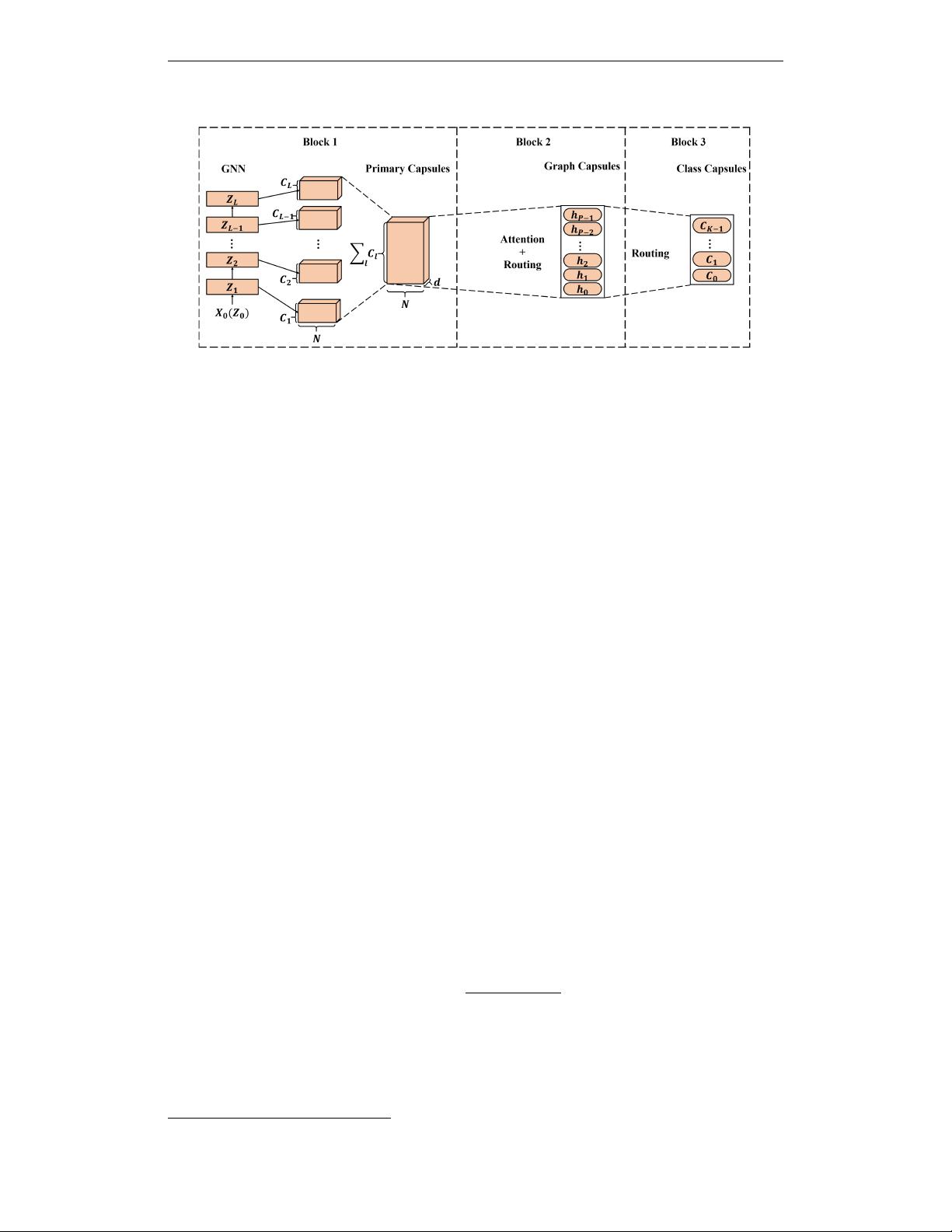

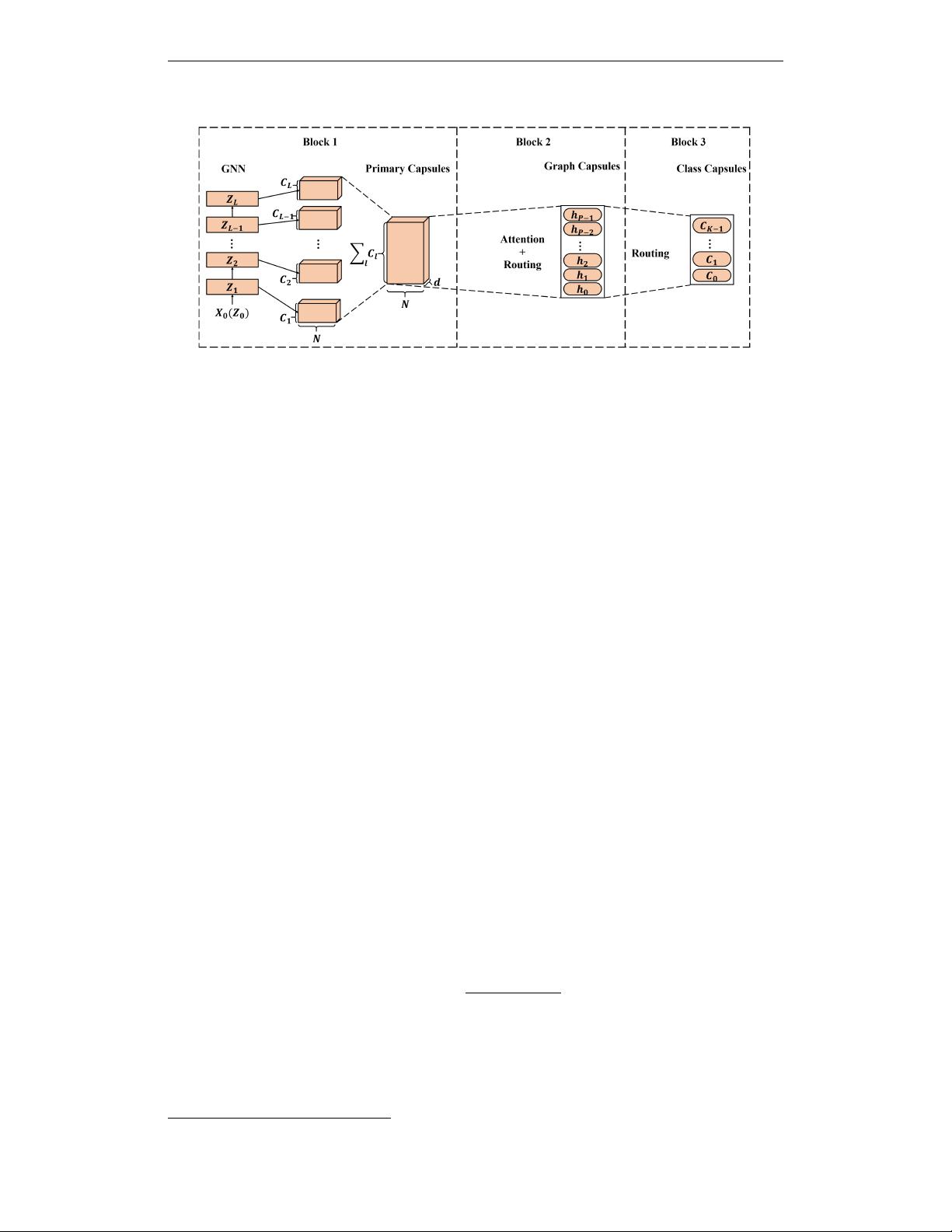

Figure 1: Framework of CapsGNN. First, a GNN is used to extract node embeddings and form primary capsules. An Attention Module is then used to scale the node embeddings, followed by Dynamic Routing to generate graph capsules. In the last stage, Dynamic Routing is applied again to perform graph classification.

where $W^{l}_{ij} \in \mathbb{R}^{d \times d'}$ is the trainable weight matrix. It serves as the channel filter from the $i$th channel at the $l$th layer to the $j$th channel at the $(l+1)$th layer. Here, we choose $f(\cdot) = \tanh(\cdot)$ as the activation function. $Z^{l+1} \in \mathbb{R}^{N \times d'}$, $Z^{0} = X$, $\tilde{A} = A + I$¹ and $\tilde{D}_{ii} = \sum_j \tilde{A}_{ij}$. To preserve features of sub-components of different sizes, we use the node features extracted from all GNN layers to generate high-level capsules.
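Assuming the layer update these symbols belong to has the form $Z^{l+1}_j = f\big(\sum_i \tilde{D}^{-1}\tilde{A} Z^{l}_i W^{l}_{ij}\big)$ (an assumption here, chosen to match the definitions above), one multi-channel layer and the collection of outputs from all layers can be sketched in NumPy as follows. The function names, the toy path graph, and the layer sizes are hypothetical.

```python
import numpy as np


def normalized_adjacency(A: np.ndarray) -> np.ndarray:
    """Return D~^{-1} A~, where A~ = A + I and D~_ii = sum_j A~_ij."""
    A_tilde = A + np.eye(A.shape[0])
    deg = A_tilde.sum(axis=1)          # diagonal entries of D~
    return A_tilde / deg[:, None]      # row-normalize: D~^{-1} A~


def gnn_layer(A_hat, Z_channels, W):
    """One multi-channel layer of the ASSUMED form
    Z^{l+1}_j = tanh( sum_i A_hat @ Z^l_i @ W[i, j] ).

    A_hat:      (N, N) normalized adjacency D~^{-1} A~
    Z_channels: list of C_in arrays, each (N, d)
    W:          (C_in, C_out, d, d') channel filters W^l_{ij}
    Returns a list of C_out arrays, each (N, d').
    """
    C_in, C_out = W.shape[0], W.shape[1]
    outputs = []
    for j in range(C_out):
        total = sum(A_hat @ Z_channels[i] @ W[i, j] for i in range(C_in))
        outputs.append(np.tanh(total))  # f = tanh
    return outputs


# Usage: a 4-node path graph, 3 input features, d' = 2, two layers (hypothetical sizes).
rng = np.random.default_rng(0)
A = np.array([[0, 1, 0, 0],
              [1, 0, 1, 0],
              [0, 1, 0, 1],
              [0, 0, 1, 0]], dtype=float)
X = rng.normal(size=(4, 3))
A_hat = normalized_adjacency(A)

layer_outputs = []
Z = [X]                                   # Z^0 = X, treated as a single input channel
for C_out, d_in in [(2, 3), (2, 2)]:
    W = rng.normal(size=(len(Z), C_out, d_in, 2))
    Z = gnn_layer(A_hat, Z, W)
    layer_outputs.append(Z)               # keep every layer's channels for the capsules
```

Keeping `layer_outputs` from every layer, rather than only the last one, mirrors the choice of building capsules from all GNN layers.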

3.2 HIGH-LEVEL GRAPH CAPSULES

After obtaining the local node capsules, a global routing mechanism is applied to generate graph capsules. The input of this block contains $N$ sets of node capsules, one per node; each set is $S_n = \{s_{11}, \ldots, s_{1C_1}, \ldots, s_{LC_L}\}$ with $s_{lc} \in \mathbb{R}^{d}$, where $C_l$ is the number of channels at the $l$th layer of the GNN and $d$ is the dimension of each capsule. The output of this block is a set of graph capsules $H \in \mathbb{R}^{P \times d'}$. Each capsule reflects the properties of the graph from a different aspect: its length reflects the probability that the property is present, and its angle reflects the details of that property. Before generating graph capsules from the node capsules, an Attention Module is introduced to scale the node capsules.
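The $N$ sets $S_n$ can be held in a single tensor of shape $(N, C_{all}, d)$. A minimal NumPy sketch, assuming each GNN layer's output is stored as a list of per-channel $(N, d)$ arrays (a layout chosen here for illustration; the function name and sizes are hypothetical), gathers the capsules of all layers so that row $n$ is $S_n$:

```python
import numpy as np


def node_capsules(layer_outputs):
    """Stack per-layer channel outputs into node capsules.

    layer_outputs: list over GNN layers l = 1..L; entry l is a list of C_l
                   arrays of shape (N, d), one array per channel.
    Returns S of shape (N, C_all, d) with C_all = sum_l C_l, so that S[n]
    holds S_n = {s_11, ..., s_1C_1, ..., s_LC_L} for node n.
    """
    channels = [Z for layer in layer_outputs for Z in layer]  # C_all arrays, each (N, d)
    return np.stack(channels, axis=1)                         # channel axis after node axis


# Usage with hypothetical sizes: N = 5 nodes, L = 3 layers with C_l = 2, 3, 1, d = 4.
rng = np.random.default_rng(0)
layers = [[rng.normal(size=(5, 4)) for _ in range(C)] for C in (2, 3, 1)]
S = node_capsules(layers)
print(S.shape)  # (5, 6, 4): N x C_all x d
```

Because the first axis is $N$, the number of capsule sets entering the routing step grows with the graph, which is the issue the Attention Module addresses.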

Attention Module. In CapsGNN, primary capsules are extracted per node, which means that the number of primary capsules depends on the size of the input graph. If the routing mechanism were applied directly, the values of the generated high-level capsules would depend heavily on the number of primary capsules (i.e., the graph size), since routing aggregates the predictions of all primary capsules into each high-level capsule. This is undesirable. Hence, an Attention Module is introduced to address this issue.
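The size dependence can be checked numerically. The sketch below assumes the standard dynamic-routing rule from capsule networks, which this section does not spell out: in the first iteration all routing logits are zero, the softmax over the $P$ parent capsules gives coupling coefficients $c_{ij} = 1/P$, and each parent's input $s_j = \sum_i c_{ij}\hat{u}_{j|i}$ is an unnormalized sum over primary capsules. The function name and vote values are hypothetical.

```python
import numpy as np


def first_iteration_input(votes):
    """Total input to each parent capsule in the first routing iteration
    (ASSUMED standard dynamic routing).

    votes: (N, P, d') predictions u_hat_{j|i} from N primary capsules
           for P parent capsules.
    With all logits at zero, softmax over parents gives c_ij = 1 / P,
    and s_j = sum_i c_ij * u_hat_{j|i}; nothing divides by N.
    """
    N, P, _ = votes.shape
    c = np.full((N, P), 1.0 / P)              # uniform coupling coefficients
    return np.einsum("ij,ijd->jd", c, votes)  # (P, d')


# Identical per-capsule votes, two graph sizes (hypothetical numbers).
vote = np.array([0.3, -0.1, 0.2])
small = first_iteration_input(np.tile(vote, (10, 2, 1)))   # N = 10, P = 2
large = first_iteration_input(np.tile(vote, (100, 2, 1)))  # N = 100, P = 2
ratio = np.linalg.norm(large[0]) / np.linalg.norm(small[0])
print(round(ratio, 6))  # 10.0
```

A graph ten times larger produces a parent input ten times longer even though every node says the same thing; with the usual squash nonlinearity, this pushes capsule lengths toward 1 for large graphs.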

The attention measure we choose is a two-layer fully connected neural network $F_{attn}(\cdot)$. The number of input units of $F_{attn}(\cdot)$ is $d \times C_{all}$, where $C_{all} = \sum_l C_l$, and the number of output units equals $C_{all}$. We apply node-based normalization to generate the attention value of each channel and then use it to scale the original node capsules. The details of the Attention Module are shown in Figure 2, and the procedure can be written as:

$$\mathrm{scaled}(s_{(n,i)}) = \frac{F_{attn}(\tilde{s}_n)_i}{\sum_{n} F_{attn}(\tilde{s}_n)_i}\, s_{(n,i)} \tag{3}$$

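where $\tilde{s}_n \in \mathbb{R}^{1 \times C_{all} d}$ is obtained by concatenating all capsules of node $n$, $s_{(n,i)} \in \mathbb{R}^{1 \times d}$ represents the $i$th capsule of node $n$, and $F_{attn}(\tilde{s}_n) \in \mathbb{R}^{1 \times C_{all}}$ is the generated attention value. Because, for each channel $i$, the normalized attention values sum to one over all nodes, the scaled capsules of that channel form a weighted combination whose weights sum to one, rather than a plain sum whose magnitude grows with $N$. In this way, the generated graph capsules can be independent of the size of the graph, and the architecture will focus on the more important parts of the input graph.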
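Equation (3) can be sketched in NumPy as below. This is a minimal illustration under stated assumptions, not the authors' implementation: the ReLU hidden layer, the sigmoid output (used so every attention value is positive), the hidden size of 16, and the names `init_attention`, `f_attn`, and `scale_node_capsules` are all hypothetical. It shows the concatenation that forms $\tilde{s}_n$, the per-channel normalization over nodes, and the scaling of each $s_{(n,i)}$.

```python
import numpy as np


def init_attention(d, C_all, hidden, rng):
    """Random weights for F_attn: d*C_all inputs -> hidden -> C_all outputs.
    Hidden size and initialization scale are ASSUMPTIONS."""
    return {
        "W1": rng.normal(scale=0.1, size=(d * C_all, hidden)),
        "b1": np.zeros(hidden),
        "W2": rng.normal(scale=0.1, size=(hidden, C_all)),
        "b2": np.zeros(C_all),
    }


def f_attn(params, s_tilde):
    """Two-layer fully connected net, (N, d*C_all) -> (N, C_all).
    ReLU hidden layer and sigmoid output are ASSUMPTIONS; the sigmoid keeps
    attention values positive so the normalization below is well defined."""
    h = np.maximum(0.0, s_tilde @ params["W1"] + params["b1"])
    return 1.0 / (1.0 + np.exp(-(h @ params["W2"] + params["b2"])))


def scale_node_capsules(params, S):
    """Apply Eq. (3) to node capsules S of shape (N, C_all, d)."""
    N, C_all, d = S.shape
    s_tilde = S.reshape(N, C_all * d)          # concatenate all capsules of each node
    a = f_attn(params, s_tilde)                # (N, C_all) attention values
    a = a / a.sum(axis=0, keepdims=True)       # node-based normalization per channel
    return a[:, :, None] * S                   # scale each capsule s_(n,i)


# Usage: identical node capsules repeated over graphs of different sizes.
rng = np.random.default_rng(0)
d, C_all = 4, 6
params = init_attention(d, C_all, hidden=16, rng=rng)
base = rng.normal(size=(C_all, d))
for N in (5, 50):
    S = np.tile(base, (N, 1, 1))
    pooled = scale_node_capsules(params, S).sum(axis=0)
    print(N, np.allclose(pooled, base))        # True for both sizes
```

When every node carries the same capsules, each normalized attention value is $1/N$, so summing the scaled capsules over nodes recovers the single-node capsules for any $N$; without the normalization the same sum would grow linearly with $N$.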

¹ $I$ represents the identity matrix.
