2.2.3 Selection operation

One-to-one greedy selection is employed, in which each parent is compared with its corresponding offspring. Compared with other selection strategies such as tournament selection, rank-based selection and fitness-proportional selection, this strategy better preserves population diversity. The selection operation at the K-th generation is described as

$$
X_i^{K+1} =
\begin{cases}
U_i^{K}, & \text{if } f(U_i^{K}) \le f(X_i^{K}) \\
X_i^{K}, & \text{if } f(U_i^{K}) > f(X_i^{K})
\end{cases}
\tag{7}
$$

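where $f(\cdot)$ is the objective function to be minimized, $U_i^K$ is the trial (offspring) vector and $X_i^K$ is the target (parent) vector of the $i$-th individual at generation K.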
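As a minimal illustration of Eq. (7), the Python sketch below applies one-to-one greedy selection to a whole population, assuming minimization and plain lists of floats as vectors. The function names `greedy_select` and `select_population` and the `sphere` test objective are hypothetical and not taken from the paper.

```python
from typing import Callable, List, Sequence

Vector = List[float]
Objective = Callable[[Sequence[float]], float]


def greedy_select(parent: Vector, trial: Vector, f: Objective) -> Vector:
    """Eq. (7) for minimization: keep trial U if f(U) <= f(X), else keep parent X."""
    return trial if f(trial) <= f(parent) else parent


def select_population(parents: List[Vector], trials: List[Vector],
                      f: Objective) -> List[Vector]:
    # Each offspring competes only with its own parent (index i against index i),
    # never with other members of the population.
    return [greedy_select(x, u, f) for x, u in zip(parents, trials)]


def sphere(x: Sequence[float]) -> float:
    # Hypothetical test objective: sum of squares, minimum 0 at the origin.
    return sum(v * v for v in x)


if __name__ == "__main__":
    parents = [[1.0, 2.0], [0.5, 0.5]]
    trials = [[0.0, 1.0], [2.0, 2.0]]
    print(select_population(parents, trials, sphere))
    # → [[0.0, 1.0], [0.5, 0.5]]
```

Ties go to the trial vector, which lets the population drift across flat regions of the objective instead of stalling.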

2.3 Hybrid teaching–learning-based optimization (TLBO–DE)

An efficient evolutionary algorithm should exploit both the local information of the solutions found so far and the global information about the search space. Local information about the solutions found so far helps exploitation, while global information guides the search toward promising regions. The search in TLBO relies mainly on global information, and TLBO has the advantage that learners converge toward a single attractor, the Teacher. DE relies mainly on distance and direction information, which is a kind of local information. DE has the advantage of not being biased toward any predefined guide, which allows it to maintain population diversity and perform local search. The key reason for hybridization is that the hybrid algorithm can exploit the strengths of each technique while overcoming their main limitations. That is, instead of the single learner-modification rule used in TLBO, TLBO–DE integrates two: the first is the classical learner-modification rule of TLBO, and the second is generated using the DE mutation rules. The detailed learning processes are described below.

2.3.1 Teacher phase

To balance global and local search ability, a modified interactive learning strategy is proposed for the teacher phase. In this method, each learner is randomly assigned an interactive learning strategy: either the Teacher Phase learning strategy of standard TLBO or differential learning based on DE.

In TLBO–DE, the update rule for a learner $X_i$ in the teacher phase hybridizes the Teacher Phase learning strategy with differential learning as follows:

$$
\mathit{newX}_{i,j} = u\,V_{1,j}(t) + (1-u)\,V_{2,j}(t) \tag{8}
$$

where $u$, called the hybridization factor, is a random number in the range [0, 1] drawn for the $j$-th dimension; $V_{1,j}$ is the $j$-th component of the learner updated according to Eq. (2) (the Teacher Phase learning strategy), and $V_{2,j}$ is the $j$-th component of the learner updated according to Eq. (5) (DE learning).
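The teacher-phase update of Eq. (8) can be sketched in Python as follows. Eqs. (2) and (5) are not reproduced in this section, so the sketch assumes they are the standard TLBO teacher-phase rule, $X_i + r\,(\text{Teacher} - T_F \cdot \text{Mean})$ with teaching factor $T_F \in \{1, 2\}$, and DE/rand/1 mutation with a hypothetical scale factor F = 0.5. Each blended candidate is then kept by the greedy selection of Eq. (7). All function names are hypothetical.

```python
import random
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Objective = Callable[[Sequence[float]], float]


def tlbo_teacher_candidate(x: Vector, teacher: Vector, mean: Vector,
                           rng: random.Random) -> Vector:
    # ASSUMED form of Eq. (2): standard TLBO teacher phase
    # X + r * (Teacher - T_F * Mean), with T_F drawn from {1, 2}.
    tf = rng.randint(1, 2)
    return [xj + rng.random() * (tj - tf * mj)
            for xj, tj, mj in zip(x, teacher, mean)]


def de_candidate(pop: List[Vector], i: int, f_scale: float,
                 rng: random.Random) -> Vector:
    # ASSUMED form of Eq. (5): DE/rand/1 mutation X_r1 + F * (X_r2 - X_r3),
    # with r1, r2, r3 mutually distinct and different from i (needs >= 4 learners).
    r1, r2, r3 = rng.sample([k for k in range(len(pop)) if k != i], 3)
    return [a + f_scale * (b - c) for a, b, c in zip(pop[r1], pop[r2], pop[r3])]


def hybrid_teacher_phase(pop: List[Vector], f: Objective, rng: random.Random,
                         f_scale: float = 0.5) -> Tuple[List[Vector], List[float]]:
    """One TLBO-DE teacher phase: Eq. (8) blend followed by greedy selection."""
    pop = [list(x) for x in pop]  # work on a copy of the population
    fit = [f(x) for x in pop]
    teacher = list(pop[min(range(len(pop)), key=fit.__getitem__)])
    mean = [sum(col) / len(pop) for col in zip(*pop)]
    for i in range(len(pop)):
        v1 = tlbo_teacher_candidate(pop[i], teacher, mean, rng)
        v2 = de_candidate(pop, i, f_scale, rng)
        new_x = []
        for a, b in zip(v1, v2):
            u = rng.random()  # hybridization factor, drawn per dimension j
            new_x.append(u * a + (1.0 - u) * b)
        f_new = f(new_x)
        if f_new <= fit[i]:  # one-to-one greedy selection, Eq. (7)
            pop[i], fit[i] = new_x, f_new
    return pop, fit


def sphere(x: Sequence[float]) -> float:
    # Hypothetical test objective with minimum 0 at the origin.
    return sum(v * v for v in x)


if __name__ == "__main__":
    rng = random.Random(0)
    pop = [[rng.uniform(-5.0, 5.0) for _ in range(3)] for _ in range(10)]
    new_pop, new_fit = hybrid_teacher_phase(pop, sphere, rng)
    print(min(new_fit))
```

Because the greedy step only accepts non-worsening candidates, no learner's objective value can increase during the phase, whatever values $u$ takes.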

2.3.2 Learner phase

In the learner phase, learners also learn through interaction with one another: each learner learns from another learner selected at random from the population. Let $\mathit{newX}_i$ denote the result of this interactive learning for learner $X_i$; it is given by

$$
\mathit{newX}_{i,j} = u\,V_{1,j}(t) + (1-u)\,V_{2,j}(t) \tag{9}
$$

where $u$, the hybridization factor, is a random number in the range [0, 1] drawn for the $j$-th dimension; $V_{1,j}$ is the $j$-th component of the learner updated according to Eq. (3) (the Learner Phase learning strategy), and $V_{2,j}$ is the $j$-th component of the learner updated according to Eq. (5) (DE learning).
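A corresponding Python sketch of the learner phase, Eq. (9), is given below. Since Eq. (3) is not reproduced here, it is assumed to be the standard TLBO learner-phase rule: learner $X_i$ picks a random partner $X_k$ ($k \ne i$) and moves toward $X_k$ if the partner is better, or away from it otherwise. Eq. (5) is again assumed to be DE/rand/1 mutation with a hypothetical F = 0.5; all function names are hypothetical.

```python
import random
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Objective = Callable[[Sequence[float]], float]


def tlbo_learner_candidate(pop: List[Vector], fit: List[float], i: int,
                           rng: random.Random) -> Vector:
    # ASSUMED form of Eq. (3): standard TLBO learner phase with a random partner k.
    k = rng.choice([m for m in range(len(pop)) if m != i])
    xi, xk = pop[i], pop[k]
    if fit[i] < fit[k]:
        # X_i is better: move away from the worse partner.
        return [a + rng.random() * (a - b) for a, b in zip(xi, xk)]
    # Partner is at least as good: move toward it.
    return [a + rng.random() * (b - a) for a, b in zip(xi, xk)]


def de_candidate(pop: List[Vector], i: int, f_scale: float,
                 rng: random.Random) -> Vector:
    # ASSUMED form of Eq. (5): DE/rand/1 mutation X_r1 + F * (X_r2 - X_r3).
    r1, r2, r3 = rng.sample([k for k in range(len(pop)) if k != i], 3)
    return [a + f_scale * (b - c) for a, b, c in zip(pop[r1], pop[r2], pop[r3])]


def hybrid_learner_phase(pop: List[Vector], f: Objective, rng: random.Random,
                         f_scale: float = 0.5) -> Tuple[List[Vector], List[float]]:
    """One TLBO-DE learner phase: Eq. (9) blend followed by greedy selection."""
    pop = [list(x) for x in pop]  # work on a copy of the population
    fit = [f(x) for x in pop]
    for i in range(len(pop)):
        v1 = tlbo_learner_candidate(pop, fit, i, rng)
        v2 = de_candidate(pop, i, f_scale, rng)
        new_x = []
        for a, b in zip(v1, v2):
            u = rng.random()  # hybridization factor, drawn per dimension j
            new_x.append(u * a + (1.0 - u) * b)
        f_new = f(new_x)
        if f_new <= fit[i]:  # one-to-one greedy selection, Eq. (7)
            pop[i], fit[i] = new_x, f_new
    return pop, fit


def sphere(x: Sequence[float]) -> float:
    # Hypothetical test objective with minimum 0 at the origin.
    return sum(v * v for v in x)


if __name__ == "__main__":
    rng = random.Random(0)
    pop = [[rng.uniform(-5.0, 5.0) for _ in range(3)] for _ in range(10)]
    new_pop, new_fit = hybrid_learner_phase(pop, sphere, rng)
    print(min(new_fit))
```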

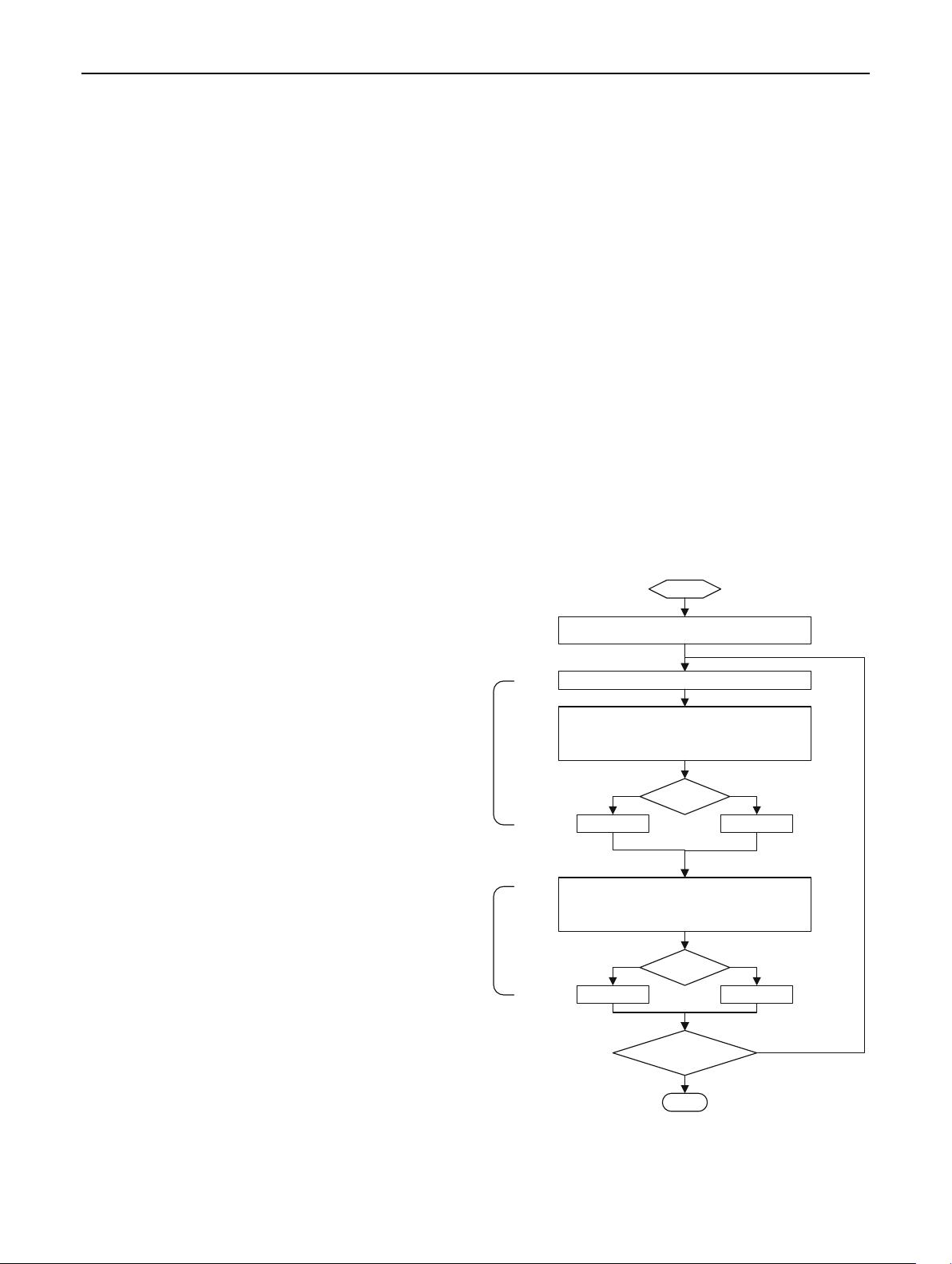

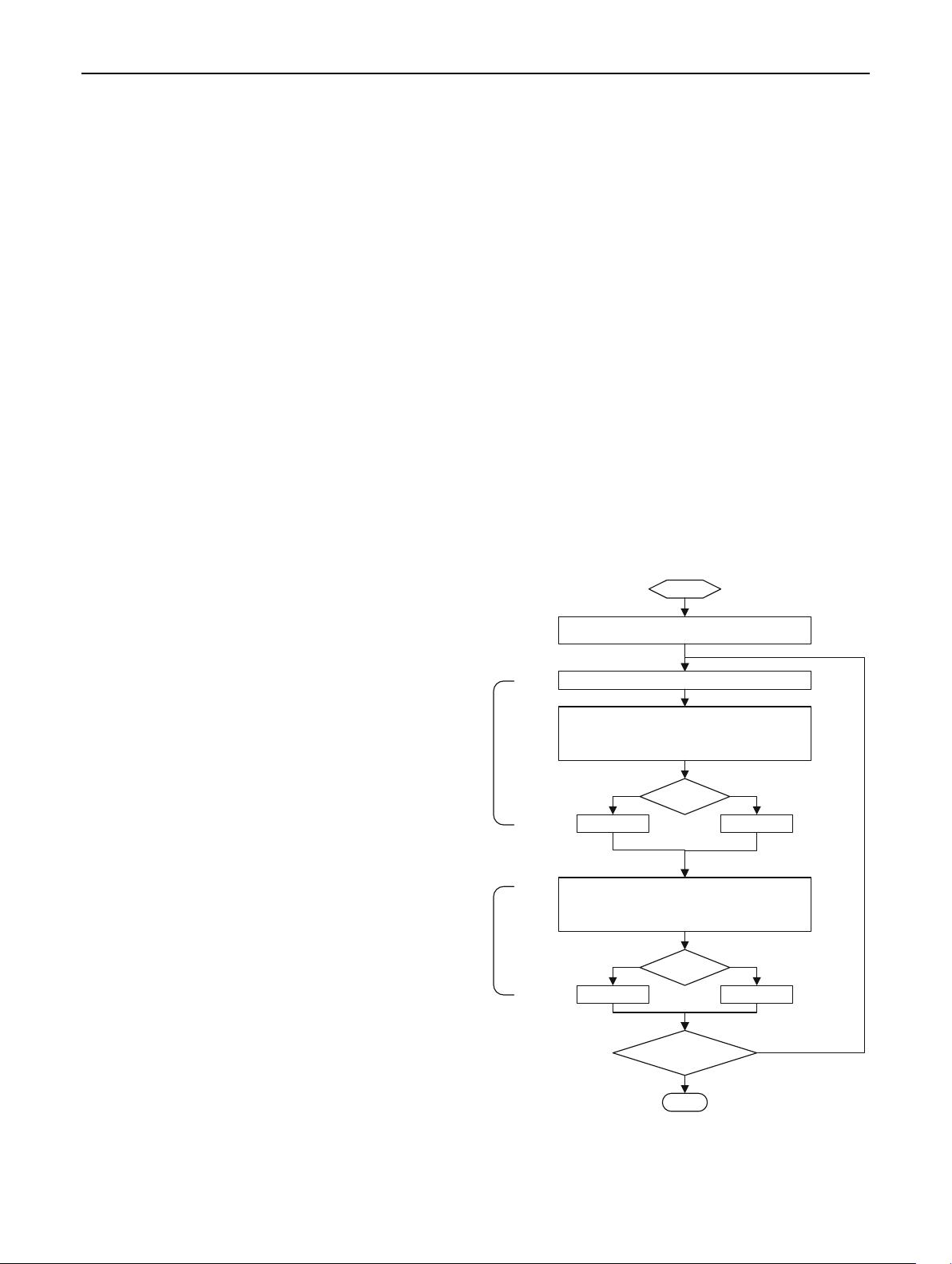

Based on the above analysis, the complete flowchart of the TLBO–DE algorithm is shown in Fig. 1.

1. Begin: initialize the learners with population size (NP) and dimension (D).
2. Teacher Phase: calculate the Teacher and the Mean. Modify each learner $X_i$ in the class: $V_{1j}$ = learning strategy of the Teacher Phase; $V_{2j}$ = DE learning; $\mathit{newX}_{ij} = u V_{1j} + (1-u) V_{2j}$. If $\mathit{newX}_i$ is better than $X_i$, set $X_i = \mathit{newX}_i$; otherwise keep $X_i$.
3. Learner Phase: modify each learner $X_i$ in the class: $V_{1j}$ = learning strategy of the Learner Phase; $V_{2j}$ = DE learning; $\mathit{newX}_{ij} = u V_{1j} + (1-u) V_{2j}$. If $\mathit{newX}_i$ is better than $X_i$, set $X_i = \mathit{newX}_i$; otherwise keep $X_i$.
4. If the termination criteria are satisfied, End; otherwise return to step 2.

Fig. 1 Flow chart showing the working of TLBO–DE algorithm
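Putting the steps of Fig. 1 together, a compact end-to-end Python sketch might look as follows. It rests on several assumptions not stated in this section: Eqs. (2), (3) and (5) take the standard TLBO teacher-phase, TLBO learner-phase and DE/rand/1 forms; the termination criterion is a fixed iteration budget; positions are not clipped to the bounds after initialization; and the names `tlbo_de`, `blend`, `de_rand_1` and `sphere` are hypothetical.

```python
import random
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Objective = Callable[[Sequence[float]], float]


def de_rand_1(pop: List[Vector], i: int, f_scale: float,
              rng: random.Random) -> Vector:
    # ASSUMED Eq. (5): DE/rand/1 mutation X_r1 + F * (X_r2 - X_r3).
    r1, r2, r3 = rng.sample([k for k in range(len(pop)) if k != i], 3)
    return [a + f_scale * (b - c) for a, b, c in zip(pop[r1], pop[r2], pop[r3])]


def blend(v1: Vector, v2: Vector, rng: random.Random) -> Vector:
    # Eqs. (8)/(9): newX_ij = u * V_1j + (1 - u) * V_2j with a fresh u per dimension.
    out = []
    for a, b in zip(v1, v2):
        u = rng.random()
        out.append(u * a + (1.0 - u) * b)
    return out


def tlbo_de(f: Objective, lower: Vector, upper: Vector, np_: int = 20,
            max_iter: int = 100, f_scale: float = 0.5,
            seed: int = 0) -> Tuple[Vector, float]:
    """Minimize f following the steps of Fig. 1 (sketch under stated assumptions)."""
    if np_ < 4:
        raise ValueError("DE/rand/1 needs at least 4 learners")
    rng = random.Random(seed)
    pop = [[rng.uniform(lo, hi) for lo, hi in zip(lower, upper)]
           for _ in range(np_)]
    fit = [f(x) for x in pop]

    def try_replace(i: int, v1: Vector) -> None:
        # Blend the TLBO candidate with a DE candidate, then greedy selection (Eq. 7).
        cand = blend(v1, de_rand_1(pop, i, f_scale, rng), rng)
        f_cand = f(cand)
        if f_cand <= fit[i]:
            pop[i], fit[i] = cand, f_cand

    for _ in range(max_iter):  # ASSUMED termination criterion: iteration budget
        # Teacher Phase: calculate the Teacher (best learner) and the Mean.
        teacher = list(pop[min(range(np_), key=fit.__getitem__)])
        mean = [sum(col) / np_ for col in zip(*pop)]
        for i in range(np_):
            tf = rng.randint(1, 2)  # teaching factor T_F in {1, 2}
            v1 = [x + rng.random() * (t - tf * m)
                  for x, t, m in zip(pop[i], teacher, mean)]
            try_replace(i, v1)
        # Learner Phase: interact with a random partner k != i.
        for i in range(np_):
            k = rng.choice([m for m in range(np_) if m != i])
            sign = 1.0 if fit[i] < fit[k] else -1.0  # away from worse, toward better
            v1 = [a + rng.random() * sign * (a - b)
                  for a, b in zip(pop[i], pop[k])]
            try_replace(i, v1)

    best = min(range(np_), key=fit.__getitem__)
    return pop[best], fit[best]


def sphere(x: Sequence[float]) -> float:
    # Hypothetical test objective with minimum 0 at the origin.
    return sum(v * v for v in x)


if __name__ == "__main__":
    x_best, f_best = tlbo_de(sphere, [-5.0] * 5, [5.0] * 5, max_iter=200, seed=1)
    print(f_best)
```

The population is updated in place, so later learners in the same phase already see the improvements made by earlier ones; a synchronous variant that builds a new population per phase would be an equally reasonable reading of Fig. 1.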

Neural Comput & Applic
