International Journal of Machine Learning and Cybernetics


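Note that $x = (C(w_{t-1}); C(w_{t-2}); \dots; C(w_{t-n+1}))$ is the concatenation of the input distributed vectors. The probability of each output word $w_t$ is then computed with a softmax function:

$$P(w_t \mid w_{t-n+1:t-1}) = \frac{e^{y_{w_t}}}{\sum_i e^{y_i}}$$

The model is trained by maximizing the following penalized objective function:

$$\mathcal{L} = \frac{1}{T} \sum_{t} \log P(w_t \mid w_{t-n+1:t-1}; \theta) + \lambda \cdot R(\theta)$$

where $T$ is the number of training samples, $\theta$ denotes the overall parameters (including those of the neural network and the embedding matrix $C$), and $R(\theta)$ is a regularization term with weight $\lambda$.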
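The concatenation, softmax, and penalized objective described here can be illustrated with a small sketch. It is not the original NNLM implementation: the linear scorer `U`, the toy embeddings, and the choice $R(\theta) = -\lVert\theta\rVert^2$ are assumptions made only so that the example runs.

```python
import math

def concat_context(C, context_ids):
    """Build x by concatenating the embeddings C[w] of the context words."""
    x = []
    for w in context_ids:
        x.extend(C[w])
    return x

def softmax(y):
    """Turn unnormalized scores y into probabilities that sum to 1."""
    m = max(y)  # subtract the maximum for numerical stability
    exps = [math.exp(v - m) for v in y]
    z = sum(exps)
    return [e / z for e in exps]

def penalized_objective(log_probs, theta, lam):
    """Average log-likelihood plus lam * R(theta).

    R(theta) = -sum(theta_i^2) is an assumed regularizer, not taken from the text.
    """
    reg = -sum(p * p for p in theta)
    return sum(log_probs) / len(log_probs) + lam * reg

# Toy vocabulary of 3 words with 2-dimensional embeddings (made-up values).
C = {0: [0.1, 0.2], 1: [0.3, -0.1], 2: [-0.2, 0.4]}
x = concat_context(C, [1, 2])  # context (w_{t-1}, w_{t-2})

# Hypothetical stand-in for the network: one row of weights per output word.
U = [[0.5, -0.3, 0.2, 0.1],
     [0.0, 0.4, -0.1, 0.3],
     [-0.2, 0.1, 0.6, -0.4]]
y = [sum(u * v for u, v in zip(row, x)) for row in U]
probs = softmax(y)  # one probability per candidate word w_t
```

The normalizer in `softmax` sums over every word in the vocabulary, so its cost grows with the dictionary size.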

Following the idea of using distributed representations from NNLM, a Log-Bilinear Language Model [79] was proposed, where a bilinear function is used to compute the probability of the next word instead of the feed-forward network in NNLM. More formally, the unnormalized probability is computed as follows:

$$P(w_t \mid w_{t-n+1:t-1}) = \sigma\Big(C(w_t)^{\top} \sum_{i=t-n+1}^{t-1} W_i \, C(w_i)\Big)$$

where the real-valued matrix $W_i$ specifies the interaction between $C(w_t)$ and $C(w_i)$, $\sigma$ is the softmax function, and biases are omitted for simplicity. Later, the Hierarchical Log-Bilinear Model (HLBL) [80] was proposed based on the Log-Bilinear Language Model to speed up its prediction stage.
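A minimal sketch of the bilinear scoring may help. It assumes one interaction matrix per context position and omits biases, as in the text; the helper names, identity matrices, and toy embeddings are hypothetical.

```python
import math

def matvec(W, v):
    """Multiply matrix W (a list of rows) by vector v."""
    return [sum(a * b for a, b in zip(row, v)) for row in W]

def lbl_scores(C, W, context_ids):
    """Return the bilinear score C(w)^T sum_i W_i C(w_i) for every word w."""
    dim = len(C[context_ids[0]])
    predicted = [0.0] * dim
    for W_i, w_i in zip(W, context_ids):
        for k, value in enumerate(matvec(W_i, C[w_i])):
            predicted[k] += value
    return {w: sum(a * b for a, b in zip(vec, predicted)) for w, vec in C.items()}

def normalize(scores):
    """Softmax over the per-word scores."""
    m = max(scores.values())
    exps = {w: math.exp(s - m) for w, s in scores.items()}
    z = sum(exps.values())
    return {w: e / z for w, e in exps.items()}

# Toy setup: identity interaction matrices and 2-dimensional embeddings.
identity = [[1.0, 0.0], [0.0, 1.0]]
C = {0: [1.0, 0.0], 1: [0.0, 1.0], 2: [1.0, 1.0]}
scores = lbl_scores(C, [identity, identity], [0, 1])
probs = normalize(scores)
```

With identity matrices the predicted vector is simply the sum of the context embeddings, so word 2, whose embedding equals that sum, receives the highest score.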

A major deficiency of NNLM is that the feed-forward network can only observe a fixed-length context, which prevents it from exploiting longer contexts. Recurrent Neural Networks (RNNs) were therefore used to replace the feed-forward network, giving the Recurrent Neural Network Language Model (RNNLM), which effectively reduces the perplexity [73].
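To illustrate why a recurrent network is not tied to a fixed window, the sketch below folds a sequence of any length into a single hidden state. The Elman-style update $h_t = \tanh(W_x x_t + W_h h_{t-1})$ and all weights are assumptions for illustration; the text does not specify the exact recurrence used in RNNLM.

```python
import math

def rnn_step(W_x, W_h, x_t, h_prev):
    """One Elman-style update: h_t = tanh(W_x x_t + W_h h_{t-1})."""
    h_t = []
    for row_x, row_h in zip(W_x, W_h):
        pre = sum(a * b for a, b in zip(row_x, x_t))
        pre += sum(a * b for a, b in zip(row_h, h_prev))
        h_t.append(math.tanh(pre))
    return h_t

def encode(W_x, W_h, embeddings):
    """Fold a word sequence of any length into one hidden state."""
    h = [0.0] * len(W_h)
    for x_t in embeddings:
        h = rnn_step(W_x, W_h, x_t, h)
    return h

# Made-up weights and word embeddings.
W_x = [[0.5, 0.0], [0.0, 0.5]]
W_h = [[0.5, 0.0], [0.0, 0.5]]
short = encode(W_x, W_h, [[0.0, 1.0], [1.0, 1.0]])
long_a = encode(W_x, W_h, [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
long_b = encode(W_x, W_h, [[0.0, 1.0], [0.0, 1.0], [1.0, 1.0]])
```

`long_a` and `long_b` differ only in their first word, yet their final hidden states differ, which shows that earlier words still influence the state used for prediction.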

2.2.2 SENNA

In all the works introduced above, distributed representations are regarded only as by-products of language models. To exploit the strong capability of such embeddings in more NLP tasks, the Semantic Extraction using a Neural Network Architecture (SENNA) system was proposed [20], where distributed embeddings are trained in a language model on a large amount of unlabeled data, and then used as input to downstream tasks including POS tagging, chunking, Named Entity Recognition (NER) and Semantic Role Labeling (SRL).

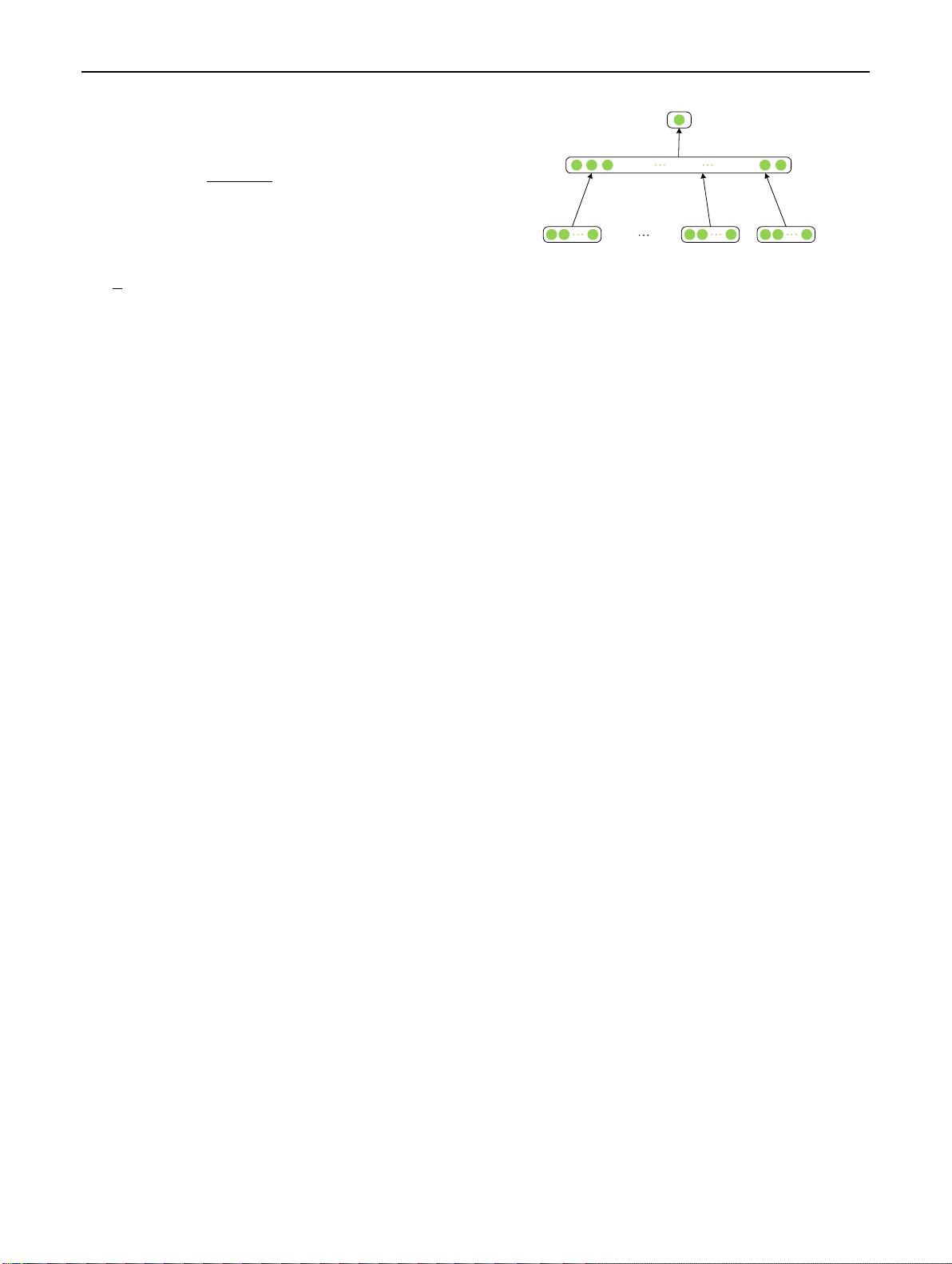

As depicted in Fig. 2, SENNA uses a neural network to train the language model, similar to NNLM. But instead of estimating the probability of a word given the previous words, it computes scores describing the acceptability of a piece of text. This is because computing the normalization term of the probability with a large dictionary is extremely demanding, and sophisticated approximations are required. SENNA therefore uses a pairwise ranking approach, seeking a network that assigns higher scores to legal phrases than to incorrect ones.

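Formally, let $f_\theta(c)$ be the output score given a window of text $c$; the language model is then trained by minimizing a hinge loss with respect to the parameters $\theta$ of the network:

$$\mathcal{L} = \sum_{c \in \mathcal{T}} \sum_{w \in \mathcal{D}} \max\{0,\ 1 - f_\theta(c) + f_\theta(c^{(w)})\}$$

where $\mathcal{T}$ is the set of all possible text windows with $n$ words, $\mathcal{D}$ is the word dictionary, and $c^{(w)}$ represents a text window obtained by randomly replacing the central word of $c$ with word $w$.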
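The pairwise ranking criterion can be sketched as follows. The scorer `toy_score` and the set `LEGAL` are hypothetical stand-ins for the SENNA network, and the full double sum is evaluated only because the toy data is tiny.

```python
def corrupt(window, w):
    """Return a copy of the window with its central word replaced by w."""
    c = list(window)
    c[len(c) // 2] = w
    return tuple(c)

def ranking_loss(f, windows, dictionary):
    """Sum of max(0, 1 - f(c) + f(c^(w))) over windows c and words w."""
    total = 0.0
    for c in windows:
        for w in dictionary:
            total += max(0.0, 1.0 - f(c) + f(corrupt(c, w)))
    return total

# Hypothetical scorer: legal windows score 2.0, everything else 0.0.
LEGAL = {("the", "cat", "sat")}

def toy_score(window):
    return 2.0 if tuple(window) in LEGAL else 0.0

loss = ranking_loss(toy_score, [("the", "cat", "sat")], ["cat", "dog"])
```

Here the corruption with "dog" contributes nothing because the legal window already outscores it by more than the margin of 1, while substituting "cat" reproduces the original window and contributes exactly the margin.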

In practice, $n$ was set to 11 in the paper, and it took 7 weeks to train the embeddings on a large amount of unlabeled data. These embeddings are then used as input to four supervised tasks (i.e., POS tagging, chunking, NER and SRL) in a similar network, where significant improvements have been observed.

2.2.3 CBOW and Skip-gram

SENNA innovatively applied distributed representations to a range of NLP tasks other than language modeling, and demonstrated their capability of capturing textual information from unlabeled data to facilitate downstream tasks. However, its training is extremely time-consuming. Later, two prominent architectures, namely the Continuous Bag-of-Words (CBOW) model and the Skip-gram model [74], were proposed, where the computational complexity is substantially reduced by simplifying the network architecture.

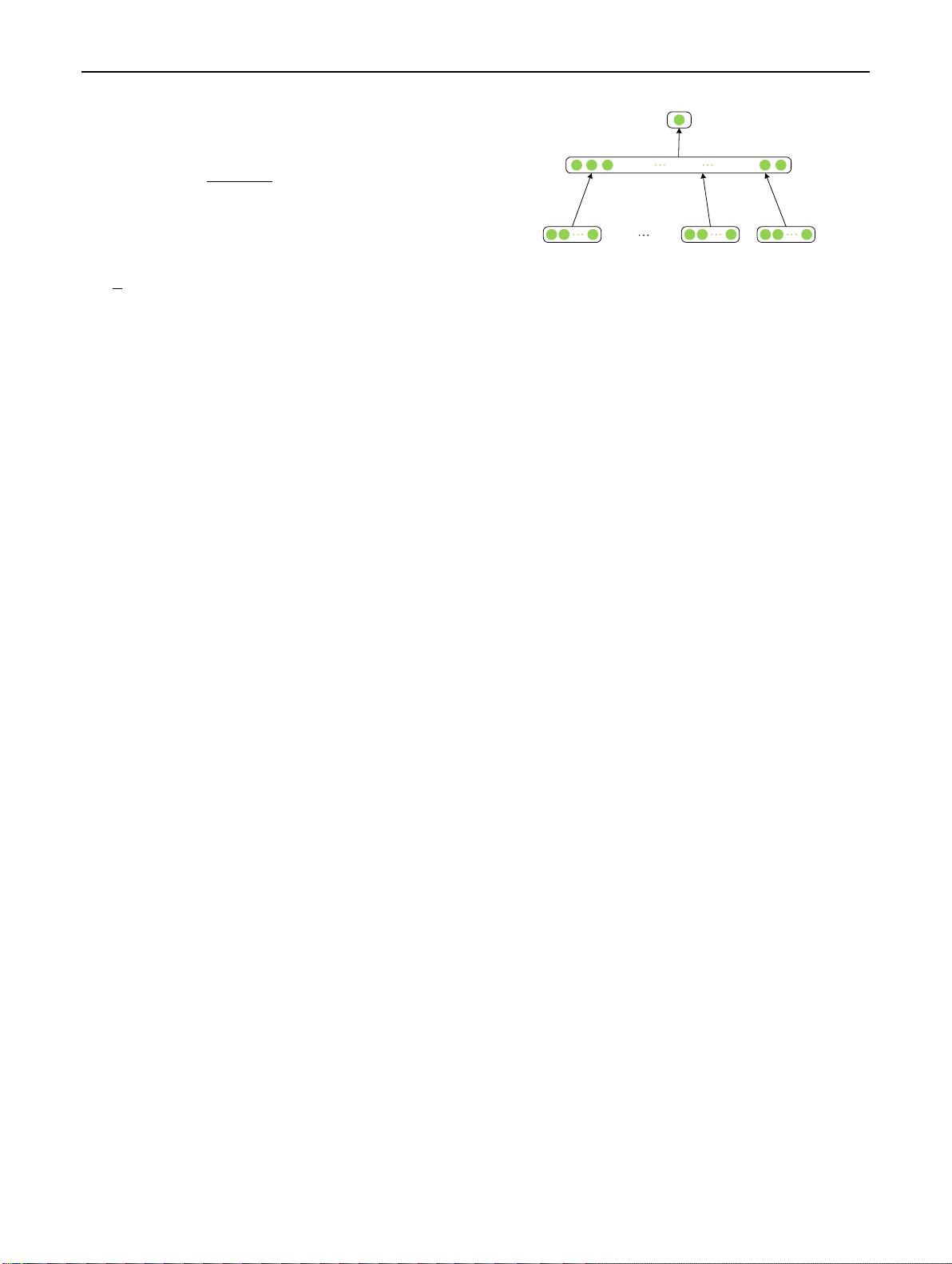

Concretely, the CBOW model predicts a word given its context. Fig. 3 shows its architecture, which is similar to

Fig. 2 Neural network architecture of SENNA