Parameter-Efficient Transfer Learning for NLP

Neil Houlsby 1, Andrei Giurgiu 1 *, Stanisław Jastrzębski 2 *, Bruna Morrone 1, Quentin de Laroussilhe 1, Andrea Gesmundo 1, Mona Attariyan 1, Sylvain Gelly 1

Abstract

Fine-tuning large pre-trained models is an effective transfer mechanism in NLP. However, in the presence of many downstream tasks, fine-tuning is parameter inefficient: an entire new model is required for every task. As an alternative, we propose transfer with adapter modules. Adapter modules yield a compact and extensible model; they add only a few trainable parameters per task, and new tasks can be added without revisiting previous ones. The parameters of the original network remain fixed, yielding a high degree of parameter sharing. To demonstrate the effectiveness of adapters, we transfer the recently proposed BERT Transformer model to 26 diverse text classification tasks, including the GLUE benchmark. Adapters attain near state-of-the-art performance, whilst adding only a few parameters per task. On GLUE, we attain within 0.4% of the performance of full fine-tuning, adding only 3.6% parameters per task. By contrast, fine-tuning trains 100% of the parameters per task.

1. Introduction

Transfer from pre-trained models yields strong performance on many NLP tasks (Dai & Le, 2015; Howard & Ruder, 2018; Radford et al., 2018). BERT, a Transformer network trained on large text corpora with an unsupervised loss, attained state-of-the-art performance on text classification and extractive question answering (Devlin et al., 2018).

In this paper we address the online setting, where tasks arrive in a stream. The goal is to build a system that performs well on all of them, but without training an entire new model for every new task. A high degree of sharing between tasks is particularly useful for applications such as cloud services, where models need to be trained to solve many

* Equal contribution. 1 Google Research. 2 Jagiellonian University. Correspondence to: Neil Houlsby <neilhoulsby@google.com>.

Proceedings of the 36th International Conference on Machine Learning, Long Beach, California, PMLR 97, 2019. Copyright 2019 by the author(s).

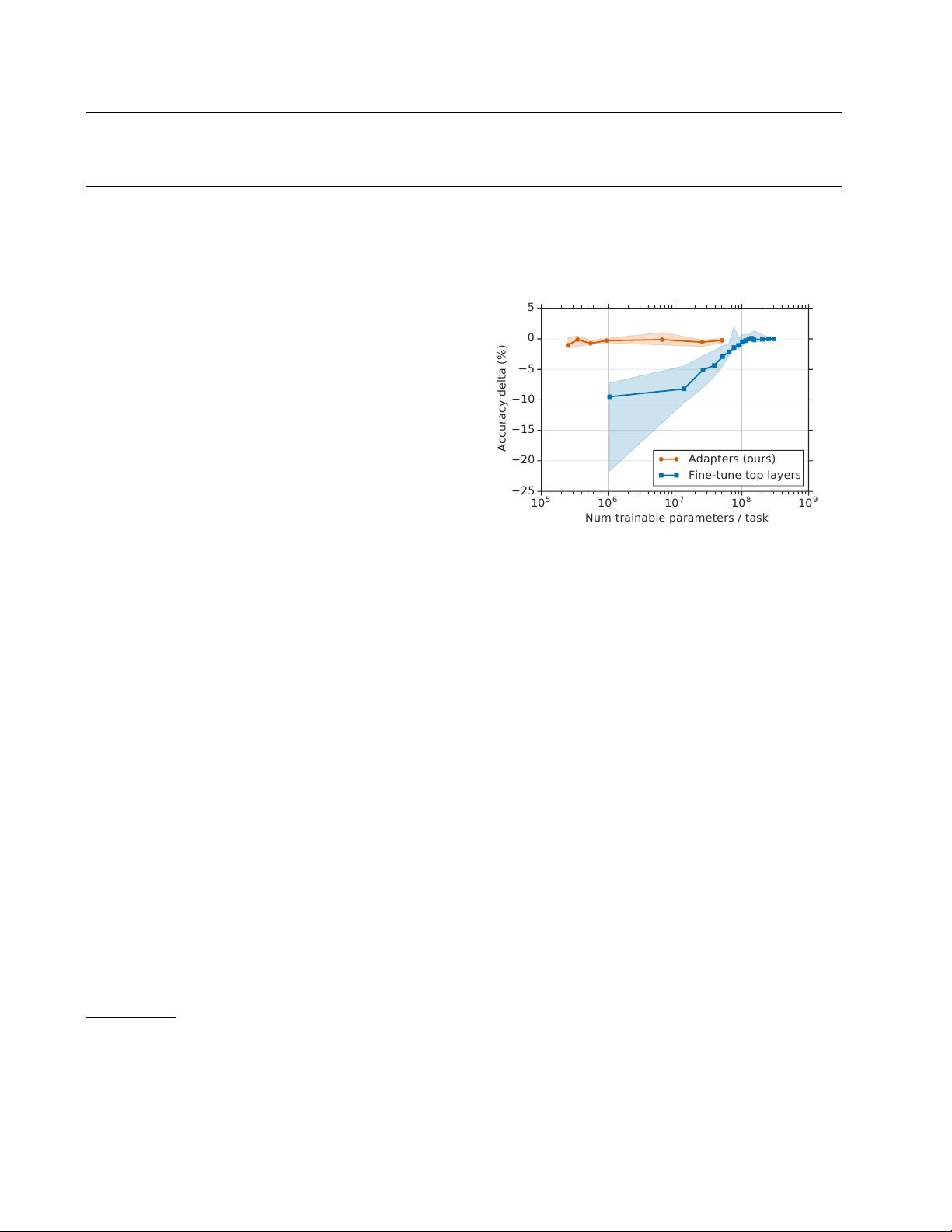

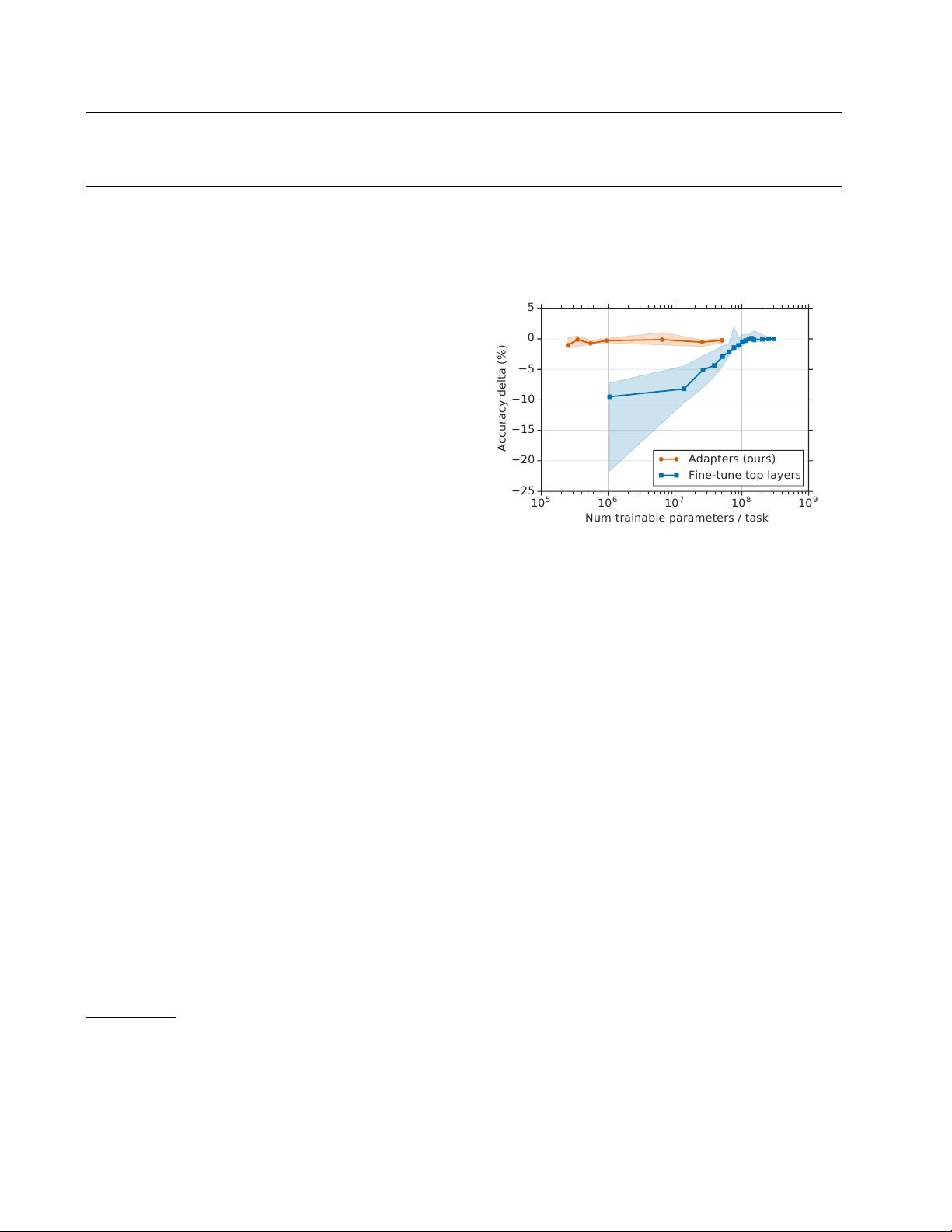

[Figure 1 plot. x-axis: Num trainable parameters / task (log scale, 10^5 to 10^9); y-axis: Accuracy delta (%), from −25 to 5; series: Adapters (ours), Fine-tune top layers.]

Figure 1. Trade-off between accuracy and number of trained task-specific parameters, for adapter tuning and fine-tuning. The y-axis is normalized by the performance of full fine-tuning, details in Section 3. The curves show the 20th, 50th, and 80th performance percentiles across nine tasks from the GLUE benchmark. Adapter-based tuning attains a similar performance to full fine-tuning with two orders of magnitude fewer trained parameters.

tasks that arrive from customers in sequence. For this, we propose a transfer learning strategy that yields compact and extensible downstream models. Compact models are those that solve many tasks using a small number of additional parameters per task. Extensible models can be trained incrementally to solve new tasks, without forgetting previous ones. Our method yields such models without sacrificing performance.
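To make the notion of compactness concrete, the following is a minimal sketch of the storage arithmetic implied by the abstract's figures (3.6% added parameters per task for adapters, 100% per task for full fine-tuning), applied to the nine GLUE tasks of Figure 1. The function name `total_parameter_multiple` is hypothetical, and the calculation assumes that the frozen base model is stored once and shared across tasks; it is an illustration of the bookkeeping, not a result reported in this section.

```python
def total_parameter_multiple(num_tasks: int,
                             per_task_fraction: float,
                             shared_base: bool) -> float:
    """Total stored parameters, as a multiple of one pre-trained model.

    per_task_fraction: task-specific parameters per task, relative to the
        size of the pre-trained model (1.0 means a full copy per task).
    shared_base: whether one frozen copy of the pre-trained model is kept
        and shared by all tasks (assumed true for adapters).
    """
    base = 1.0 if shared_base else 0.0
    return base + num_tasks * per_task_fraction


NUM_TASKS = 9  # nine GLUE tasks, as in Figure 1

# Full fine-tuning: every task gets its own complete copy of the model.
fine_tuning = total_parameter_multiple(NUM_TASKS, 1.0, shared_base=False)

# Adapters: one shared frozen model plus 3.6% extra parameters per task
# (percentage taken from the abstract).
adapters = total_parameter_multiple(NUM_TASKS, 0.036, shared_base=True)

print(f"full fine-tuning: {fine_tuning:.2f}x the pre-trained model")
print(f"adapters:         {adapters:.2f}x the pre-trained model")
```

Under these assumptions the adapter total grows by only 0.036 model sizes for each additional task, whereas the fine-tuning total grows by a full model size.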

The two most common transfer learning techniques in NLP are feature-based transfer and fine-tuning. Instead, we present an alternative transfer method based on adapter modules (Rebuffi et al., 2017). Feature-based transfer involves pre-training real-valued embedding vectors. These embeddings may be at the word (Mikolov et al., 2013), sentence (Cer et al., 2019), or paragraph level (Le & Mikolov, 2014). The embeddings are then fed to custom downstream models. Fine-tuning involves copying the weights from a pre-trained network and tuning them on the downstream task. Recent work shows that fine-tuning often enjoys better performance than feature-based transfer (Howard & Ruder, 2018).