A Dynamic Deep Neural Network Design for Efficient Workload Allocation in Edge Computing

Chi Lo, Yu-Yi Su, Chun-Yi Lee, and Shih-Chieh Chang

Dept. of Computer Science, National Tsing Hua University

No. 101, Sec. 2, Kuang-Fu Rd., Hsinchu, Taiwan 30013, R.O.C.

{chilo9212, wwball34}@gmail.com, {cylee, scchang}@cs.nthu.edu.tw

Abstract—Unreliable communication channels and limited

computing resources at the edge end are two primary constraints

of battery-powered movable devices, such as autonomous robots

and unmanned aerial vehicles (UAVs). The impact is especially

severe for those performing deep neural network (DNN)

computations. With increasing demand for accuracy, the trend

in modern DNN designs is the use of cascaded modularized

layers. Implementing a deep network at the edge increases

computational workloads and resource occupancy, leading to an

increase in battery drain. Using a shallow network and offloading

workloads to backbone servers, however, incurs significant latency

overheads caused by unstable communication channels. Hence,

dynamic DNN design techniques for efficient workload allocation

are urgently required to manage the amount of workload

transmissions while achieving the required accuracy. In this paper, we

explore the use of an authentic operation (AO) unit and a dynamic

network structure to enhance DNNs. The AO unit defines a set

of stochastic threshold values for different DNN output classes

and determines at runtime if an input has to be transferred

to backbone servers for further analysis. The dynamic network

structure adjusts its depth according to channel availability.

Experiments have been comprehensively performed on several

well-known DNN models and datasets. Our results show that,

on average, the proposed techniques are able to reduce the

amount of transmissions by up to 17% compared to previous

methods under the same accuracy requirement.

Keywords—Deep neural network, workload allocation, edge

computing, authentic operation, dynamic network structure

I. INTRODUCTION

Deep neural networks (DNNs) have emerged as a popular

design paradigm in the area of image classification and object

detection [1]–[12]. This is due to their ability to extract

high-level and abstract features from raw data. A number of

DNN architectures and training algorithms have been proposed

to improve the accuracy of multilayer perceptrons (MLPs) and

convolutional neural networks (CNNs) from different

perspectives [3]–[5]. With increasing demand for high accuracy, there

has been a trend in recent years to increase the number of

layers of DNNs. Several state-of-the-art CNN architectures, such

as AlexNet [2], Network In Network (NIN) [6], VGGNet [7],

and GoogLeNet [8], contain from eight to dozens of hidden

layers. Researchers have pushed the network depth even further,

up to 152 layers [9], achieving an unprecedented error rate of less

than 5% on the ImageNet dataset [13]. It is believed

that the deeper a network is, the higher the accuracy it delivers

[7]–[9]. However, deeper neural networks usually require more

computation, leading to higher workloads as well as resource

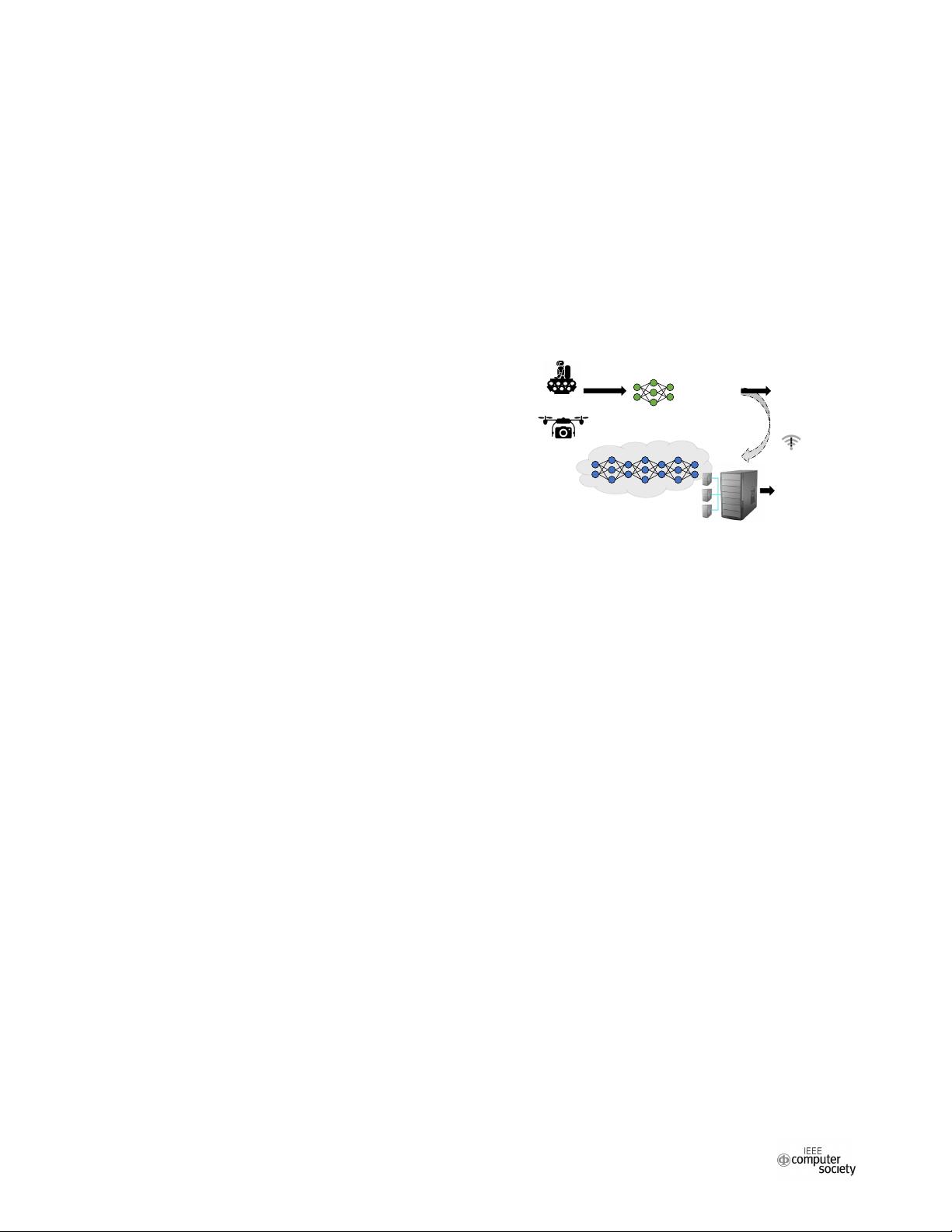

Robots

UAVs

Capture image

Built-in auxiliary network

Remote principal network

Trustworthy?

Yes

No

Unreliable

channel

Obtain

result

Obtain

result

Fig. 1: Workload sharing of DNN between edge and server

occupancy compared to shallower ones. These computational

workloads and resource requirements limit the scale of DNNs

to be executed on energy-constrained embedded devices. Thus,

an efficient method to manage the workloads of embedded

systems performing DNN computations is urgently needed.

The concept of edge computing [14]–[16] is to perform data

processing at the edge end, near the source of data. Edge-end

embedded devices, such as unmanned aerial vehicles (UAVs)

and autonomous robots, work synergistically with powerful

servers to provide performance in modern edge computing

systems. Edge-end devices usually have limited computational

capability and resources, thus only shallower DNNs (denoted

as auxiliary networks) can be accommodated, as compared to

the deeper ones (denoted as principal networks) executed at

the server end. In such systems, the edge devices may suffer

from unstable communication channels. When the

communication channels are fully accessible, edge devices can leverage

both the auxiliary networks and principal networks to achieve

high DNN accuracy. When the channels are unstable, edge

devices can offload only a smaller portion of their workloads to the server. As a

result, utilizing the communication channels and efficiently

allocating workloads between an auxiliary network and a

principal network are of particular importance.
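
To make the allocation problem concrete, the following minimal Python sketch shows one possible gate between an auxiliary and a principal network. It assumes the auxiliary network returns raw class scores (logits), takes the top softmax probability as a confidence value, and compares it against a per-class threshold, in the spirit of the threshold test that the abstract attributes to the AO unit. The function names, the route labels "edge", "server", and "edge_fallback", and the fixed threshold values are hypothetical and chosen only for illustration. The thresholds in this paper are stochastic and are not reproduced here.

```python
import math
from typing import List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a list of raw class scores."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def allocate(
    logits: Sequence[float],
    thresholds: Sequence[float],
    channel_up: bool,
) -> Tuple[str, int, float]:
    """Decide where an input is resolved (illustrative assumption only).

    Returns (route, predicted_class, confidence), where route is
    "edge" if the auxiliary prediction clears its class threshold,
    "server" if it does not and the channel is available, and
    "edge_fallback" if it does not and the channel is unavailable.
    """
    probs = softmax(logits)
    predicted = max(range(len(probs)), key=probs.__getitem__)
    confidence = probs[predicted]

    if confidence >= thresholds[predicted]:
        return ("edge", predicted, confidence)
    if channel_up:
        return ("server", predicted, confidence)
    # No usable channel: keep the less trustworthy local result.
    return ("edge_fallback", predicted, confidence)


if __name__ == "__main__":
    thresholds = [0.9, 0.9, 0.9]  # hypothetical per-class thresholds
    print(allocate([4.0, 0.0, 0.0], thresholds, channel_up=True)[0])
    print(allocate([1.0, 0.8, 0.0], thresholds, channel_up=True)[0])
    print(allocate([1.0, 0.8, 0.0], thresholds, channel_up=False)[0])
```

With these example thresholds, a sharply peaked score vector is resolved at the edge, whereas a flat one is offloaded only when the channel is available. Raising a class threshold trades more transmissions for fewer low-confidence local decisions.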

A promising strategy to deal with the above issues is to

allocate difficult DNN workloads to the principal network

at the remote server, while retaining easy ones at the edge.

Whether to transfer an input from the edge to the server

is determined by calculating its confidence level. The confidence

level is used as a measure of the reliability of a prediction.

The higher the confidence level is, the more trustworthy the

prediction is likely to be. Fig. 1 illustrates such a scenario. The

input images captured by robots or UAVs are first analyzed

2017 IEEE 35th International Conference on Computer Design

1063-6404/17 $31.00 © 2017 IEEE

DOI 10.1109/ICCD.2017.49

