S. Dhargupta, M. Ghosh and S. Mirjalili et al. / Expert Systems With Applications 151 (2020) 113389 3

of cell roles (proliferative, quiescent, dying and invasive) and five

types of search rules (growth of all four types of cells and random

walk for all of them) have been presented.

EAs have emerged as a robust and practical search method for finding the global optimum. In the last few years, numerous algorithms have been proposed in the literature. An EA generally works through three general procedures, namely selection, crossover and mutation. Besides GA, Differential Evolution (DE) is another popular approach for solving nonlinear and non-differentiable optimization problems. Though it has good exploration ability, it lacks exploitation capacity. Also, DE requires problem-specific parameter setting. Evolutionary Programming (EP) keeps the structure of the program being optimized fixed and evolves only its parameters. On the other hand, Evolution Strategy (ES) primarily uses mutation and selection as search operators.
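The three procedures named above (selection, crossover and mutation) can be illustrated with a minimal sketch of one generation of a generic real-coded EA. The specific operators chosen here (tournament selection, uniform crossover, Gaussian mutation), the function names, the parameter values and the sphere objective are all illustrative assumptions; they are not taken from any of the cited algorithms.

```python
import random
from typing import Callable, List

Vector = List[float]


def tournament_select(pop: List[Vector], fitness: Callable[[Vector], float],
                      rng: random.Random, k: int = 2) -> Vector:
    """Selection: best of k randomly drawn individuals (minimization)."""
    return min(rng.sample(pop, k), key=fitness)


def uniform_crossover(a: Vector, b: Vector, rng: random.Random) -> Vector:
    """Crossover: each gene is copied from either parent with equal probability."""
    return [ai if rng.random() < 0.5 else bi for ai, bi in zip(a, b)]


def gaussian_mutation(x: Vector, rng: random.Random,
                      rate: float = 0.1, sigma: float = 0.1) -> Vector:
    """Mutation: perturb each gene with probability `rate` (assumed values)."""
    return [xi + rng.gauss(0.0, sigma) if rng.random() < rate else xi for xi in x]


def next_generation(pop: List[Vector], fitness: Callable[[Vector], float],
                    rng: random.Random) -> List[Vector]:
    """Build a new population of the same size from the current one."""
    children = []
    for _ in range(len(pop)):
        p1 = tournament_select(pop, fitness, rng)
        p2 = tournament_select(pop, fitness, rng)
        children.append(gaussian_mutation(uniform_crossover(p1, p2, rng), rng))
    return children


def sphere(x: Vector) -> float:
    """Illustrative objective: sum of squares, minimized at the origin."""
    return sum(v * v for v in x)


rng = random.Random(42)  # fixed seed so the sketch is repeatable
population = [[rng.uniform(-5.0, 5.0) for _ in range(3)] for _ in range(10)]
offspring = next_generation(population, sphere, rng)
```

Real EAs differ mainly in which of these operators they emphasize; for example, ES as described above relies on mutation and selection only.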

Many techniques have been proposed for the improvement of these metaheuristics, including OBL, chaos, hybridization and so on. The No Free Lunch theorem (Wolpert & Macready, 1997), which implies that no single optimizer performs best on every problem, has kept research in this field alive. Of these techniques, a popular and effective one is OBL (Tizhoosh, 2005). OBL algorithms have attracted the interest of numerous computer scientists in the past years. Many of the previously discussed algorithms such as GSA, SA, GA, PSO, ACO, ABC, DE etc. have been enhanced by applying OBL to them (Mahdavi, Rahnamayan, & Deb, 2018).
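As a minimal sketch of the basic idea behind OBL: for a candidate inside a box with per-dimension limits, the opposite point is commonly defined as lower + upper − x in each dimension, and the better of the candidate and its opposite is retained. The function names `opposite_point` and `keep_better`, the bounds and the sphere objective below are illustrative assumptions, not part of any cited method.

```python
from typing import Callable, List, Sequence


def opposite_point(x: Sequence[float], lower: Sequence[float],
                   upper: Sequence[float]) -> List[float]:
    """Opposite of x within the box [lower, upper], dimension by dimension."""
    return [lo + hi - xi for xi, lo, hi in zip(x, lower, upper)]


def keep_better(x: Sequence[float], lower: Sequence[float], upper: Sequence[float],
                objective: Callable[[Sequence[float]], float]) -> List[float]:
    """Return whichever of x and its opposite has the lower objective value."""
    x_opp = opposite_point(x, lower, upper)
    return x_opp if objective(x_opp) < objective(x) else list(x)


def sphere(x: Sequence[float]) -> float:
    """Illustrative objective: sum of squares, minimized at the origin."""
    return sum(v * v for v in x)


candidate = [4.0, 3.0]
lower, upper = [-1.0, -1.0], [5.0, 5.0]   # assumed search-space limits
best = keep_better(candidate, lower, upper, sphere)
```

In this example the opposite point lies closer to the minimizer than the candidate does, so the opposite is the one that is kept.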

Ergezer and Simon (2014) introduced a fitness-based quasi-reflection concept, where the relative fitness of candidate solutions is used to generate new candidates. Their paper presented results for a one-dimensional search space, but the concept can be extended to higher-dimensional spaces. Liu et al. (2014) proposed Opposite Center Learning (OCL), in which the opposite point is defined as the optimum solution among a pair-wise sampling of the search space, given a random initial point. This method accelerates the convergence of population-based search algorithms. Rahnamayan, Jesuthasan, Bourennani, Salehinejad, and Naterer (2014) suggested an Oppositional Target-Domain Estimation (OTDS), where they divided the search space into grids, making the estimation of the target domain faster. However, the computational complexity increases if the solution lies adjacent to a grid border.

A literature survey reveals that only a few works have used OBL alongside GWO. The elite opposition-based learning GWO (EOGWO) (Zhang, Luo & Zhou, 2017) performs a simplex-based opposition on all the wolves. Instead of taking the upper and lower limits of the function, opposition is done using the limits of all the wolves. In the Improved Grey Wolf Optimizer (IGWO) (Nasrabadi, Sharafi, & Tayari, 2016), the wolves are divided into two sub-groups, and the best wolves of one sub-group replace the worst wolves of the other sub-group. Of the two parts, one is partially opposed (in one dimension) and the other is opposed in all dimensions. With iterations, the number of fully opposed wolves is decreased.

Some authors have included chaos as well as OBL in their methods. Gupta and Deep (2018) proposed an opposition-based chaotic GWO, in which OBL and a local chaotic search are added to GWO to increase the exploration power of the algorithm. This method shows better convergence as well as better avoidance of local minima than GWO. Ibrahim, Elaziz, and Lu (2018) introduced a chaotic opposition-based GWO with DE and a disruption operator to solve global optimization problems. Here, the initial population is enhanced with the help of chaotic OBL and is later repeatedly updated using DE operators alongside GWO. In this algorithm, GWO serves for better exploration, whereas the DE operators help avoid local optima and provide better exploitation. To balance the two, the disruption operator is used, so that the population remains diverse after each iteration.

Pradhan, Roy, and Pal (2017) combined GWO and OBL to accelerate the convergence rate of the algorithm, and then used it to solve the Economic Load Dispatch (ELD) problem of power systems. On applying this algorithm to ELD problems, it showed competitive convergence compared to GWO. Another field where OBL and GWO have been applied is parallel machine scheduling in cloud computing (Natesan and Chokkalingam, 2017).

In all these papers, the opposite of a candidate is found for all dimensions or in one randomly chosen dimension. However, the approach of opposing only certain dimensions of a solution to enhance the result has not been attempted before. Moreover, to allow for faster convergence, OBL has been applied taking into account the extrema present in the current set of wolves. This essentially binds the solutions created using OBL within the current range of the population. The opposition-based GWO technique introduced in our proposed method can be enhanced by hybridizing it with other swarm intelligence algorithms or the EAs discussed above for better optimal results and convergence.
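The two ideas in this paragraph, opposing only certain dimensions and using the extrema of the current wolves as limits, can be sketched as follows. This is a hedged illustration only: how the dimensions are chosen is not described in this passage, so they are simply passed in as an argument, and the names `population_bounds` and `oppose_selected` are hypothetical.

```python
from typing import Iterable, List, Sequence, Tuple


def population_bounds(wolves: Sequence[Sequence[float]]) -> Tuple[List[float], List[float]]:
    """Per-dimension minimum and maximum over the current population."""
    dims = range(len(wolves[0]))
    lower = [min(w[j] for w in wolves) for j in dims]
    upper = [max(w[j] for w in wolves) for j in dims]
    return lower, upper


def oppose_selected(x: Sequence[float], wolves: Sequence[Sequence[float]],
                    dims_to_oppose: Iterable[int]) -> List[float]:
    """Oppose x only in the given dimensions, using population extrema as limits."""
    lower, upper = population_bounds(wolves)
    result = list(x)
    for j in dims_to_oppose:
        result[j] = lower[j] + upper[j] - x[j]
    return result


wolves = [[1.0, 2.0, 3.0],
          [3.0, 6.0, 5.0],
          [2.0, 4.0, 9.0]]
# Oppose the first wolf in dimensions 0 and 2 only (an arbitrary choice here).
opposed = oppose_selected(wolves[0], wolves, dims_to_oppose=[0, 2])
```

Because the limits are the population's own minimum and maximum, the opposite of any wolf in the population stays inside the current range of the population, which is the binding effect described above.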

3. Basic theory and notations

The two key topics related to our work, namely GWO and OBL, are discussed in Sections 3.1 and 3.2, respectively. After that, Spearman's coefficient is described in Section 3.3.

3.1. Grey Wolf Optimizer (GWO)

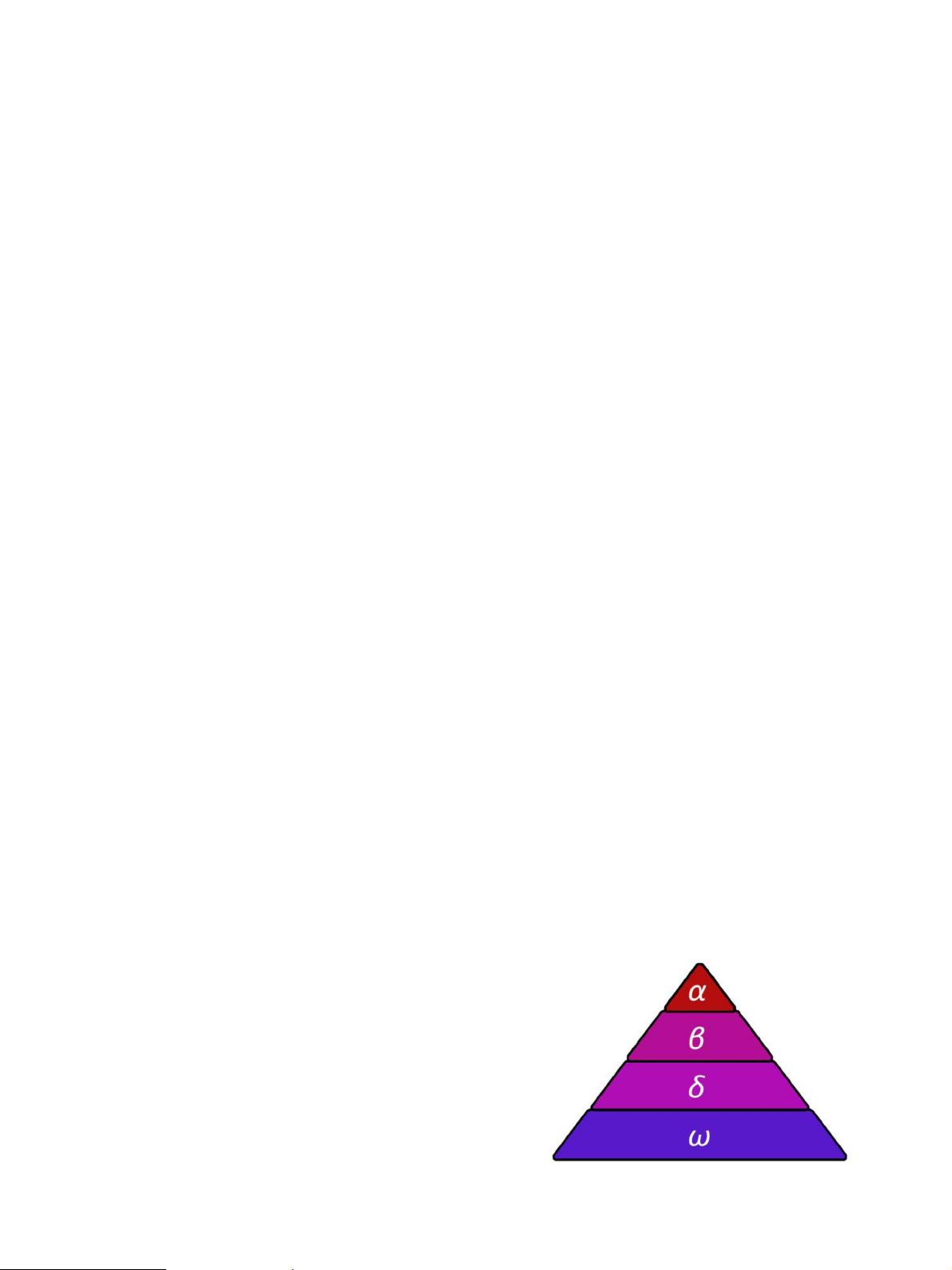

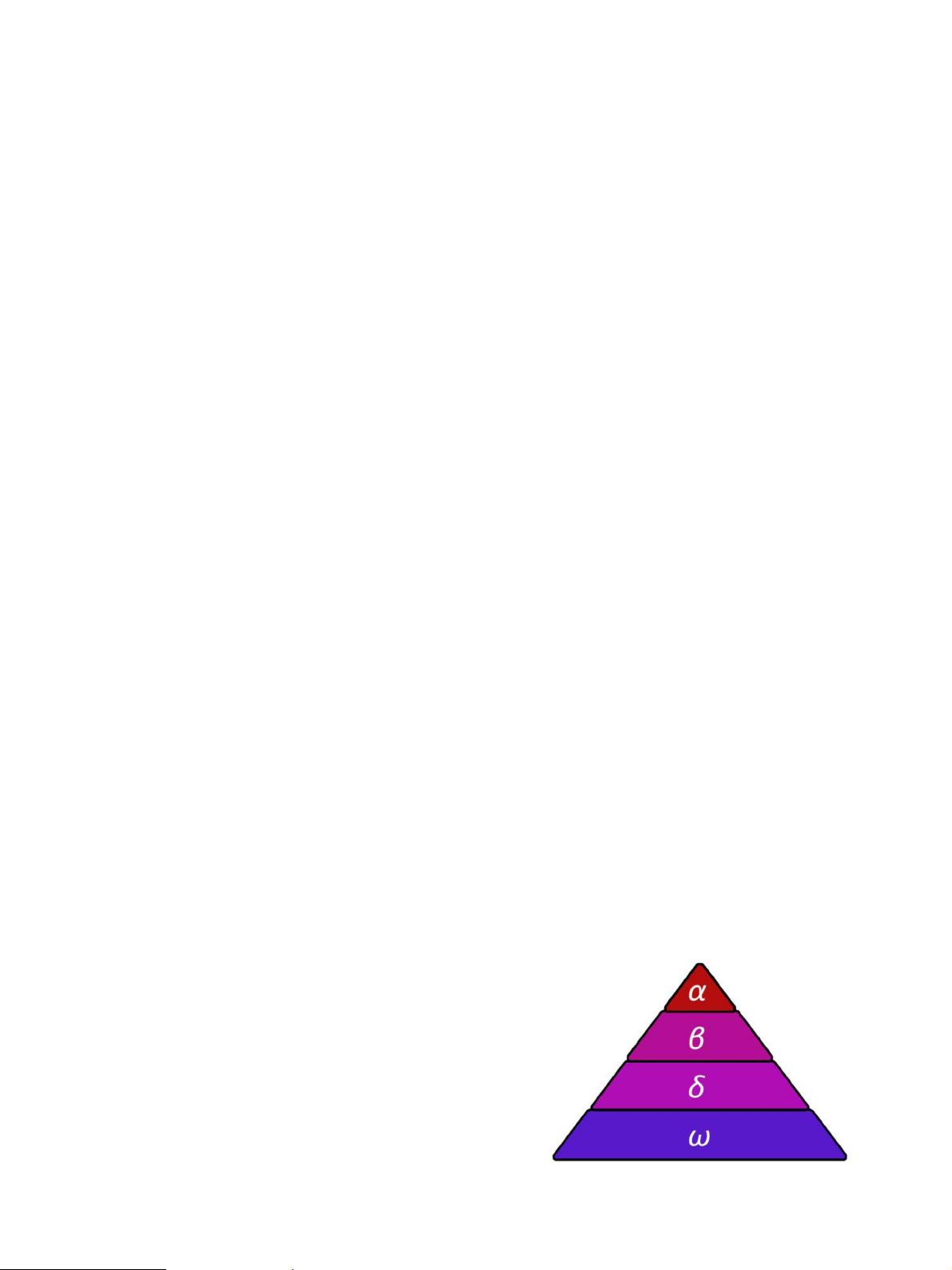

GWO is a metaheuristic optimizer based on the hunting technique and social hierarchy (see Fig. 1) of grey wolves (Canis lupus), proposed by Mirjalili et al. (2014). In a pack of grey wolves, the leader is called alpha (α), its subordinates in decision-making or pack activities are called beta (β) and the lowest ranking wolves are called omega (ω). If a wolf does not fit in any of the three categories, it is called delta (δ).

During a hunt, the grey wolves encircle the prey, which can be

mathematically expressed as the following equations:

$$D = \vec{C}_1 \cdot X_p(it) - \vec{X}_w(it) \tag{3.1}$$

$$\vec{X}_w(it + 1) = X_p(it) - \vec{C}_2 \cdot D \tag{3.2}$$

where,

$it$ is the current iteration number,

$X_p$ is the position vector of the prey,

$\vec{X}_w$ is the position vector of a grey wolf,

$\vec{C}_1$, $\vec{C}_2$ are the coefficient vectors which are calculated as:

$$\vec{C}_2 = 2d \cdot \vec{rv}_1 - d \tag{3.3}$$

$$\vec{C}_1 = 2 \cdot \vec{rv}_2 \tag{3.4}$$
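A minimal sketch of one encircling update following Eqs. (3.1) to (3.4), with all products taken componentwise. Several points are assumptions rather than statements from this passage: the function names are hypothetical; the random vectors rv1 and rv2 are taken to have components in [0, 1] and d is treated as a scalar control parameter, as in the original GWO of Mirjalili et al. (2014); and the optional `take_abs` flag applies the componentwise absolute value that the original GWO uses in the distance term, which does not appear in Eq. (3.1) as printed here.

```python
import random
from typing import List, Sequence, Tuple


def coefficient_vectors(d: float, rv1: Sequence[float],
                        rv2: Sequence[float]) -> Tuple[List[float], List[float]]:
    """Return (C1, C2) from Eq. (3.4) and Eq. (3.3) respectively."""
    c1 = [2.0 * r for r in rv2]            # Eq. (3.4): C1 = 2 * rv2
    c2 = [2.0 * d * r - d for r in rv1]    # Eq. (3.3): C2 = 2d * rv1 - d
    return c1, c2


def encircle_step(x_prey: Sequence[float], x_wolf: Sequence[float], d: float,
                  rv1: Sequence[float], rv2: Sequence[float],
                  take_abs: bool = False) -> List[float]:
    """New wolf position X_w(it + 1) given the prey position X_p(it)."""
    c1, c2 = coefficient_vectors(d, rv1, rv2)
    # Eq. (3.1): D = C1 * X_p - X_w (componentwise)
    dist = [c * p - w for c, p, w in zip(c1, x_prey, x_wolf)]
    if take_abs:
        # Assumption: absolute value as in the original GWO formulation.
        dist = [abs(v) for v in dist]
    # Eq. (3.2): X_w(it + 1) = X_p - C2 * D
    return [p - c * dv for p, c, dv in zip(x_prey, c2, dist)]


# Usage with randomly drawn vectors (seeded for repeatability).
rng = random.Random(0)
dim = 2
rv1 = [rng.random() for _ in range(dim)]
rv2 = [rng.random() for _ in range(dim)]
new_position = encircle_step([1.0, 2.0], [3.0, 0.0], d=1.0, rv1=rv1, rv2=rv2)
```

Passing the random vectors in explicitly keeps the update deterministic and easy to check by hand; a full optimizer would redraw them for every wolf at every iteration.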

Fig. 1. Social hierarchy of Grey Wolves.