Multimodal Chain-of-Thought Reasoning in Language Models

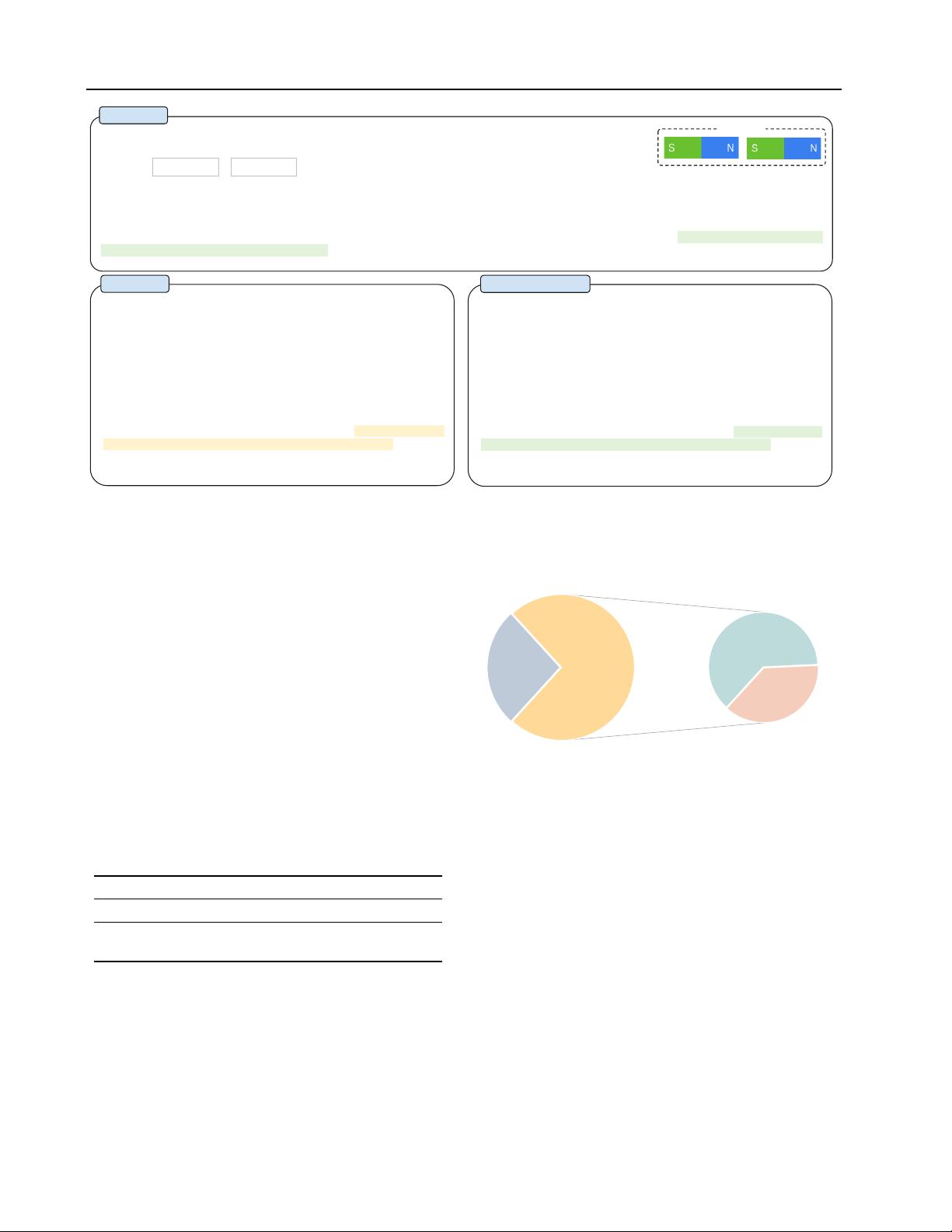

Problem

Question: Will these magnets attract or repel each other?

Context: Two magnets are placed as shown. Hint: Magnets that attract pull together. Magnets that repel push apart.

Options: (A) attract (B) repel

Gold Rationale: Magnets can pull or push on each other without touching. When magnets attract, they pull together. When magnets repel, they push apart. Whether a magnet attracts or repels other magnets depends on the positions of its poles, or ends. Every magnet has two poles, called north and south. Here are some examples of magnets. The north pole of each magnet is marked N, and the south pole is marked S. If different poles are closest to each other, the magnets attract. The magnets in the pair below attract. If the same poles are closest to each other, the magnets repel. The magnets in both pairs below repel. Will these magnets attract or repel? To find out, look at which poles are closest to each other. The north pole of one magnet is closest to the south pole of the other magnet. Poles that are different attract. So, these magnets will attract each other.

Answer: The answer is (A).

Baseline

Generated Rationale: Magnets can pull or push on each other without touching. When magnets attract, they pull together. When magnets repel, they push apart. Whether a magnet attracts or repels other magnets depends on the positions of its poles, or ends. Every magnet has two poles, called north and south. Here are some examples of magnets. The north pole of each magnet is marked N, and the south pole is marked S. If different poles are closest to each other, the magnets attract. The magnets in the pair below attract. If the same poles are closest to each other, the magnets repel. The magnets in both pairs below repel. Will these magnets attract or repel? To find out, look at which poles are closest to each other. The south pole of one magnet is closest to the south pole of the other magnet. Poles that are the same repel. So, these magnets will repel each other.

Answer: The answer is (B).

+ Vision Features

Generated Rationale: Magnets can pull or push on each other without touching. When magnets attract, they pull together. When magnets repel, they push apart. Whether a magnet attracts or repels other magnets depends on the positions of its poles, or ends. Every magnet has two poles, called north and south. Here are some examples of magnets. The north pole of each magnet is marked N, and the south pole is marked S. If different poles are closest to each other, the magnets attract. The magnets in the pair below attract. If the same poles are closest to each other, the magnets repel. The magnets in both pairs below repel. Will these magnets attract or repel? To find out, look at which poles are closest to each other. The north pole of one magnet is closest to the south pole of the other magnet. Poles that are different attract. So, these magnets will attract each other.

Answer: The answer is (A).

Figure 2. Example of the two-stage framework without vision features (baseline) and with vision features (ours) for generating rationales and predicting answers. The upper part presents the problem details with a gold rationale, and the lower part shows the outputs of the baseline and of our method with vision features. The baseline fails to predict the right answer because it is misled by a hallucinated rationale. More examples are shown in Appendix A.1.

3.2. Misleading by Hallucinated Rationales

To investigate how rationales affect answer prediction, we separate the CoT problem into two stages: rationale generation and answer inference. We report the RougeL score for rationale generation and accuracy for answer inference. Table 3 shows the results for this two-stage framework. Although the two-stage baseline model achieves a RougeL score of 91.76 on rationale generation, its answer inference accuracy is only 70.53%. Compared with the QCM→A variant (80.40%) in Table 2, this result shows that the generated rationale in the two-stage framework does not improve answer accuracy.
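The two-stage separation described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' implementation: `format_input`, `two_stage_predict`, and the stub models are hypothetical names, and the stubs return fixed strings in place of trained language models. The point is only to show how a rationale produced in stage (i) is appended to the input of stage (ii), so a hallucinated rationale can steer the final answer.

```python
from typing import Callable, Optional, Sequence, Tuple

# Hypothetical type: any text-to-text model (e.g. a fine-tuned seq2seq LM).
TextModel = Callable[[str], str]


def format_input(question: str, context: str, options: Sequence[str],
                 rationale: Optional[str] = None) -> str:
    """Build the text input; the rationale is appended only in stage (ii)."""
    letters = "ABCDEFGH"
    opts = " ".join(f"({letters[i]}) {o}" for i, o in enumerate(options))
    text = f"Question: {question}\nContext: {context}\nOptions: {opts}"
    if rationale is not None:
        text += f"\nRationale: {rationale}"
    return text


def two_stage_predict(question: str, context: str, options: Sequence[str],
                      rationale_model: TextModel,
                      answer_model: TextModel) -> Tuple[str, str]:
    """Stage (i): generate a rationale. Stage (ii): infer the answer from it."""
    rationale = rationale_model(format_input(question, context, options))
    answer = answer_model(format_input(question, context, options, rationale))
    return rationale, answer


# --- Stub models (assumptions, for illustration only) ---
def hallucinating_rationale_model(_: str) -> str:
    # Mimics the baseline output in Figure 2, produced without seeing the image.
    return ("The south pole of one magnet is closest to the south pole of the "
            "other magnet. Poles that are the same repel. "
            "So, these magnets will repel each other.")


def faithful_rationale_model(_: str) -> str:
    # Mimics the gold rationale's conclusion in Figure 2.
    return ("The north pole of one magnet is closest to the south pole of the "
            "other magnet. Poles that are different attract. "
            "So, these magnets will attract each other.")


def rationale_following_answer_model(text: str) -> str:
    # Toy rule: the answer simply follows the rationale's conclusion.
    return "B" if "these magnets will repel" in text else "A"


QUESTION = "Will these magnets attract or repel each other?"
CONTEXT = ("Two magnets are placed as shown. Hint: Magnets that attract pull "
           "together. Magnets that repel push apart.")
OPTIONS = ["attract", "repel"]

_, baseline_answer = two_stage_predict(
    QUESTION, CONTEXT, OPTIONS,
    hallucinating_rationale_model, rationale_following_answer_model)
_, faithful_answer = two_stage_predict(
    QUESTION, CONTEXT, OPTIONS,
    faithful_rationale_model, rationale_following_answer_model)
print(baseline_answer, faithful_answer)
```

With these stubs, the answer model is only as good as the rationale it receives, which is the failure mode the section goes on to analyze.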

Table 3. Two-stage setting of (i) rationale generation (RougeL) and (ii) answer inference (Accuracy).

| Method | (i) QCM→R | (ii) QCMR→A |
| --- | --- | --- |
| Two-Stage Framework | 91.76 | 70.53 |
| w/ Captions | 91.85 | 71.12 |
| w/ Vision Features | 96.97 | 84.91 |
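To see why a high RougeL score can coexist with a wrong answer, it helps to recall that ROUGE-L is based on the longest common subsequence (LCS) between a candidate and a reference. The sketch below is an assumption-laden simplification: it uses whitespace tokenization and the balanced F1 variant, which may differ from the tokenizer, weighting, and scaling behind the scores in Table 3. The function names `lcs_length` and `rouge_l_f1` are illustrative.

```python
from typing import List


def lcs_length(a: List[str], b: List[str]) -> int:
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for tok_a in a:
        curr = [0]
        for j, tok_b in enumerate(b, start=1):
            if tok_a == tok_b:
                curr.append(prev[j - 1] + 1)
            else:
                curr.append(max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l_f1(reference: str, candidate: str) -> float:
    """ROUGE-L as the F1 of LCS-based precision and recall (0 to 1 scale)."""
    ref, cand = reference.split(), candidate.split()
    lcs = lcs_length(ref, cand)
    if lcs == 0:
        return 0.0
    precision = lcs / len(cand)
    recall = lcs / len(ref)
    return 2 * precision * recall / (precision + recall)


# Closing sentences of the gold and baseline rationales from Figure 2.
gold_tail = ("The north pole of one magnet is closest to the south pole of "
             "the other magnet. Poles that are different attract. "
             "So, these magnets will attract each other.")
hallucinated_tail = ("The south pole of one magnet is closest to the south "
                     "pole of the other magnet. Poles that are the same repel. "
                     "So, these magnets will repel each other.")

# 24 of the 28 reference tokens are matched in order, so F1 = 48/57 (about 0.84).
print(round(rouge_l_f1(gold_tail, hallucinated_tail), 4))
```

Even on these three sentences, where the conclusion is reversed, the overlap stays high. Over the full rationale, most of which is identical to the gold text, the overlap is higher still, so RougeL alone does not reveal the hallucination.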

Then, we randomly sample 50 error cases and find that the

model tends to generate hallucinated rationales that mislead

the answer inference. As an example shown in Figure 2, the

model (left part) hallucinates that, “The south pole of one

magnet is closest to the south pole of the other magnet”, due

to the lack of reference to the vision content. We find that

such mistakes occur at a ratio of 64% among the error cases

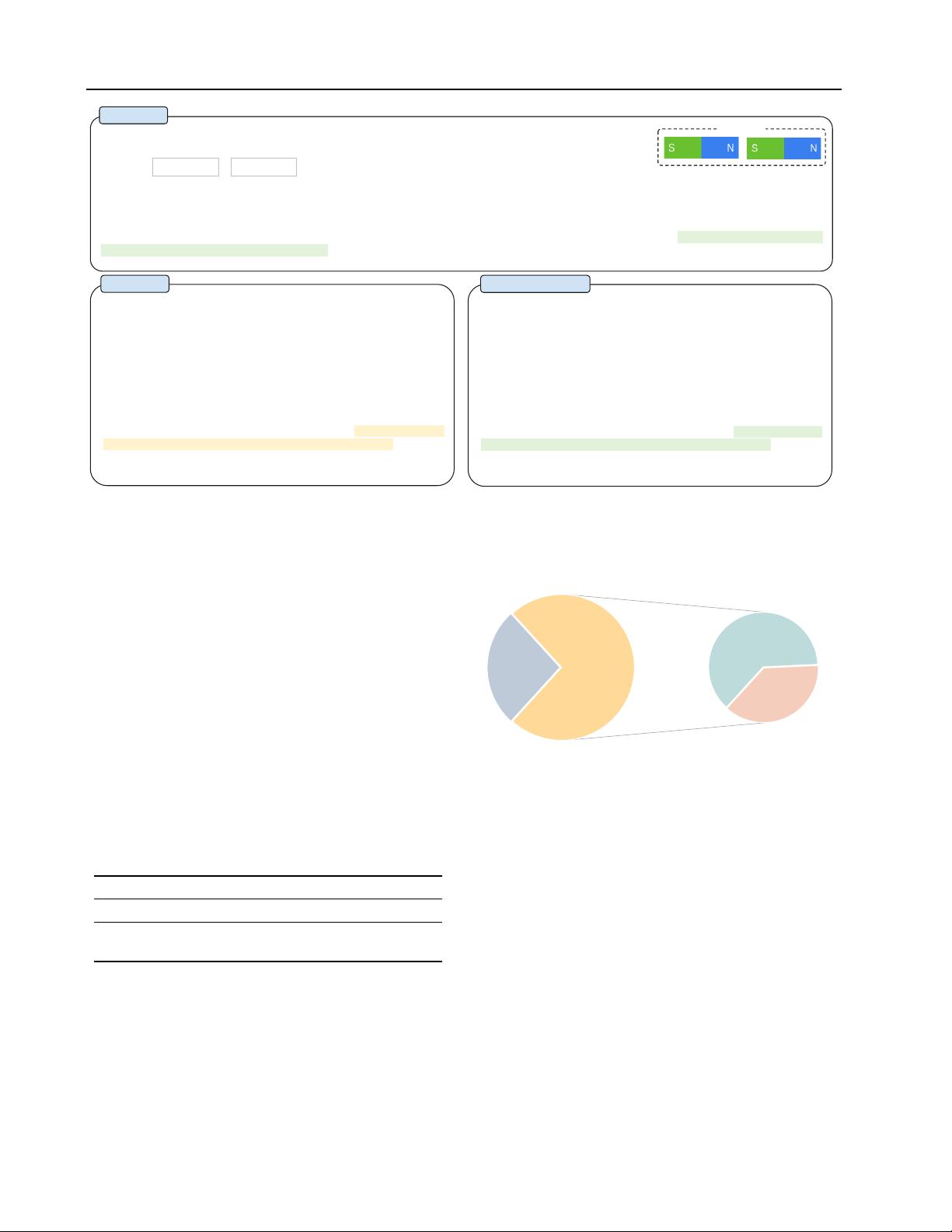

Hallucination

(64%)

(a) ratio of hallucination mistakes

(b) correction rate w/ vision features

Figure 3.

The ratio of hallucination mistakes (a) and correction

rate w/ vision features (b).

(Figure 3(a)).

3.3. Multimodality Contributes to Effective Rationales

We speculate that this hallucination stems from a lack of the vision context needed to perform effective Multimodal-CoT. A simple way to inject vision information is to transform the paired image into a caption (Lu et al., 2022a) and then append the caption to the input of both stages. However, as shown in Table 3, using captions yields only marginal gains in answer accuracy (↑0.59%). We then explore a more advanced technique that incorporates vision features into the language model. Concretely, we feed the paired image to the DETR model (Carion et al., 2020) to extract vision features. Then we fuse the vision features