IEEE TRANSACTIONS ON PATTERN ANALYSIS AND MACHINE INTELLIGENCE, VOL. XX,NO. XX, XXX. XXXX 3

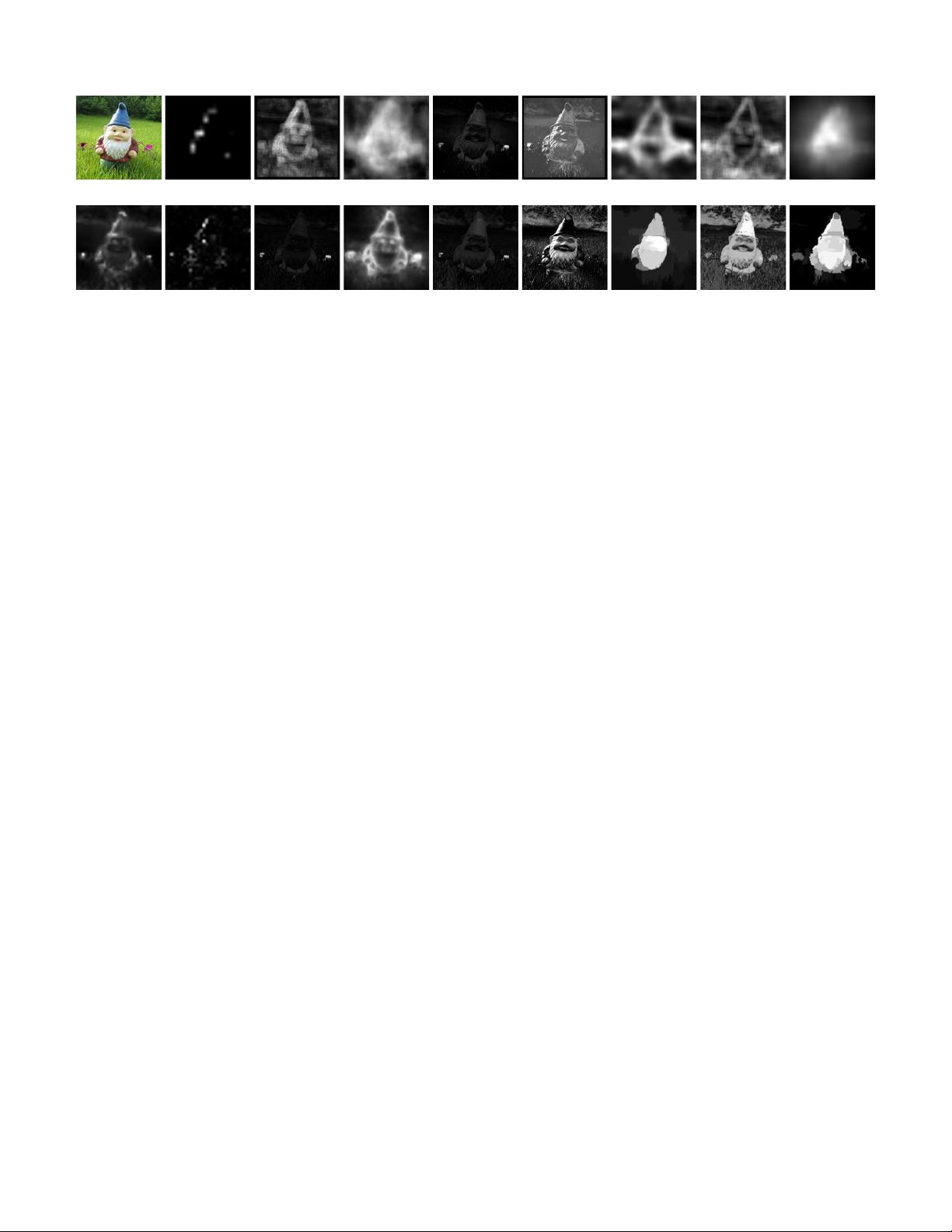

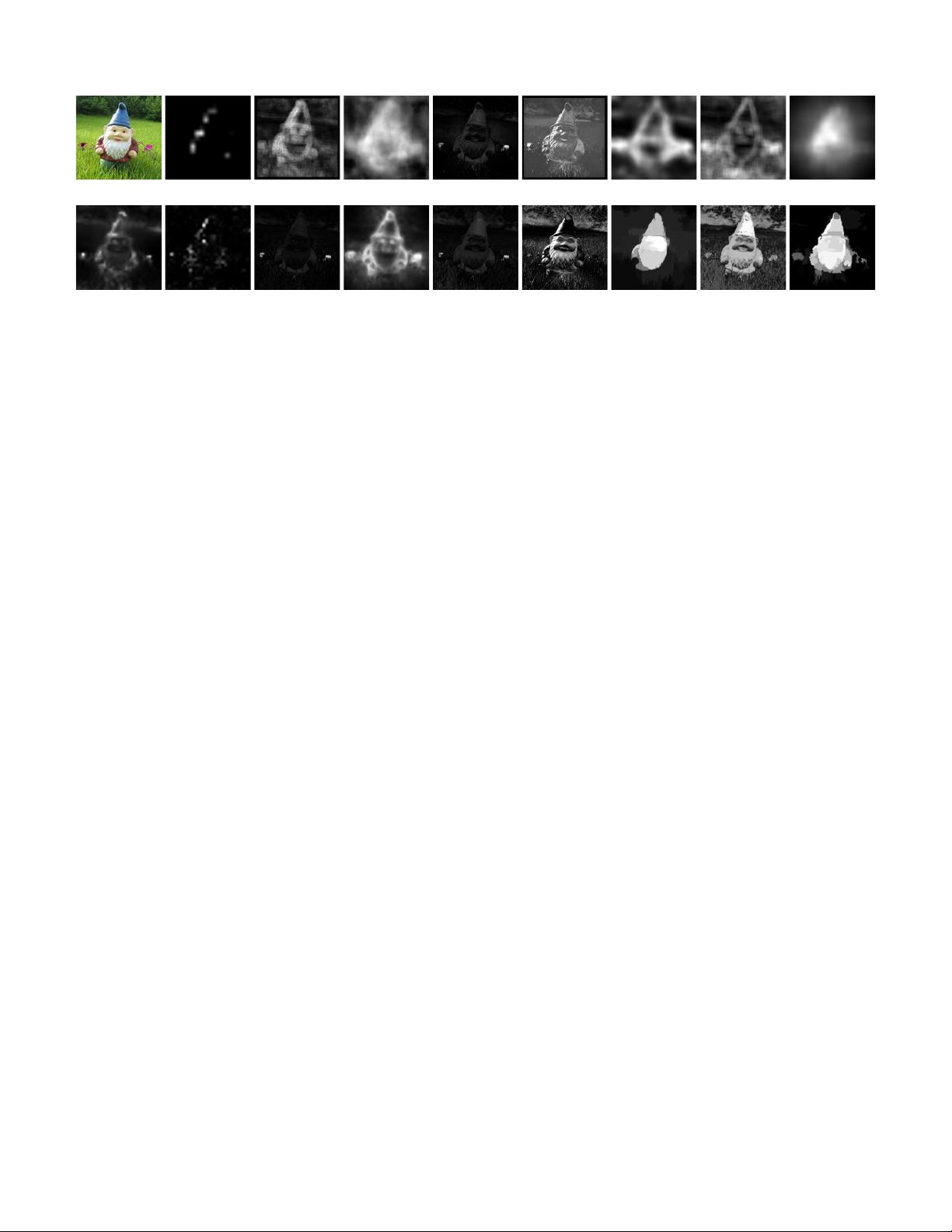

(a) original (b) IT [17] (c) AIM [22] (d) IM [23] (e) MSS [24] (f) SEG [25] (g) SeR [26] (h) SUN [27] (i) SWD [28]

(j) GB [29] (k) SR [30] (l) AC [31] (m) CA [32] (n) FT [33] (o) LC [34] (p) CB [35] (q) HC (r) RC

Fig. 2. Saliency maps computed by different state-of-the-art methods (b-p), and with our proposed HC (q) and RC

methods (r). Most results highlight edges, or are of low resolution. See also Fig. 9 and our project webpage.

conspicuous parts and permit combination with other

importance maps. The model is simple, biologically

plausible, and easy to parallelize. Liu et al. [2] find

multi-scale contrast by linearly combining contrast in

a Gaussian image pyramid. More recently, Goferman

et al. [32] simultaneously model local low-level clues,

global considerations, visual organization rules, and

high-level features to highlight salient objects along with

their contexts. Such methods using local contrast tend

to produce higher saliency values near edges instead

of uniformly highlighting salient objects (see Fig. 2).

Note that Reinagel et al. [37] observe that humans tend

to focus attention in image regions with high spatial

contrast and local variance in pixel correlation.

Global contrast based methods evaluate saliency of an

image region using its contrast with respect to the entire

image. Zhai and Shah [34] define pixel-level saliency

based on a pixel’s contrast to all other pixels. How-

ever, for efficiency they use only luminance information,

thus ignoring distinctiveness clues in other channels.

Achanta et al. [33] propose a frequency tuned method

that directly defines pixel saliency using a pixel’s color

difference from the average image color. The elegant ap-

proach, however, only considers first order average color,

which can be insufficient to analyze complex variations

common in natural images. In Figures 9 and 12, we

show qualitative and quantitative weaknesses of such

approaches. Furthermore, these methods ignore spatial

relationships across image parts, which can be critical

for reliable and coherent saliency detection (see Sec. 6).
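The two global-contrast definitions described above can be illustrated with a minimal sketch. It is a simplified reading of the text, not the implementation of [34] or [33], which may include further steps not described here. The function names `luminance_contrast` and `mean_color_contrast`, the flattened-list image representation, and the use of Euclidean distance are assumptions made for illustration.

```python
import math

def luminance_contrast(lum):
    """Contrast of each pixel's luminance to all other pixels (sum of absolute differences)."""
    return [sum(abs(a - b) for b in lum) for a in lum]

def mean_color_contrast(colors):
    """Distance of each pixel's color from the average image color (first-order statistic only)."""
    n = len(colors)
    mean = tuple(sum(c[i] for c in colors) / n for i in range(3))
    return [math.dist(c, mean) for c in colors]

# Toy "image": three identical background pixels and one distinct pixel.
lum = [10, 10, 10, 200]
colors = [(0, 0, 0), (0, 0, 0), (0, 0, 0), (0, 0, 8)]

lc = luminance_contrast(lum)      # [190, 190, 190, 570]
fc = mean_color_contrast(colors)  # [2.0, 2.0, 2.0, 6.0]
```

In both cases the distinct pixel receives the highest value, and neither function looks at pixel positions, which is the spatial blindness noted above.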

Saliency maps are widely employed for unsupervised

object segmentation: Ma and Zhang [41] find rectangular

salient regions by fuzzy region growing on their saliency

maps. Ko and Nam [43] select salient regions using

a support vector machine trained on image segment

features, and then cluster these regions to extract salient

objects. Han et al. [44] model color, texture, and edge

features in a Markov random field framework to grow

salient object regions from seed values in the saliency

maps. More recently, Achanta et al. [33] average saliency

values within image segments produced by mean-shift

segmentation, and then find salient objects via adaptive

thresholding. We propose a different approach that extends

the GrabCut [38] method and automatically initializes

it using our saliency detection methods. Experiments on

our 10,000-image dataset (see Sec. 6.1.1) demonstrate

the significant advantages of our method compared to

other state-of-the-art methods.

Subsequent to our preliminary results [1], Jiang et

al. [35] propose a comparable method also making use of

region level contrast to model image saliency. In the seg-

mentation step, their method also expands and shrinks

the initial trimap and iteratively applies graphcut and a

histogram appearance model. Since GrabCut is an iterative

process that uses graphcut and a GMM appearance

model, the two segmentation methods share a strong similarity.

Compared to the CB method [35], experimental

results show that our RC salient object region detection

and segmentation is more accurate (Fig. 12(a)(c)), 20×

faster (Fig. 7), and more robust to center-bias (Fig. 12(b)).

3 HISTOGRAM BASED CONTRAST

Our biological vision system is highly sensitive to contrast

in visual signals. Based on this observation, we propose

a histogram-based contrast (HC) method to define

saliency values for image pixels using color statistics of

the input image. Specifically, the saliency of a pixel is

defined using its color contrast to all other pixels in the

image, i.e., the saliency value of a pixel $I_k$ in image $I$ is,

$$S(I_k) = \sum_{\forall I_i \in I} D(I_k, I_i), \tag{1}$$

where $D(I_k, I_i)$ is the color distance metric between pixels $I_k$ and $I_i$ in the $L^*a^*b^*$ space for perceptual accuracy.
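As a concrete illustration of Eq. (1), the following minimal sketch evaluates the sum directly on a toy image. It assumes that $D$ is the Euclidean distance and that the pixels are already given as $(L^*, a^*, b^*)$ tuples; the text only states that $D$ is a color distance in $L^*a^*b^*$ space, so both choices, as well as the names `color_distance` and `pixel_saliency`, are illustrative assumptions.

```python
import math

def color_distance(p, q):
    """Assumed metric: Euclidean distance between two (L*, a*, b*) colors."""
    return math.dist(p, q)

def pixel_saliency(pixels):
    """Eq. (1): for each pixel, sum its color distance to every pixel in the image."""
    return [sum(color_distance(pk, pi) for pi in pixels) for pk in pixels]

# Toy image, flattened: three gray pixels and one colored pixel.
pixels = [(50.0, 0.0, 0.0)] * 3 + [(50.0, 30.0, 40.0)]

saliency = pixel_saliency(pixels)
print(saliency)  # [50.0, 50.0, 50.0, 150.0]
```

The direct evaluation visits every pixel pair, so its cost grows quadratically with the number of pixels.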

Eq. (1) can be expanded by pixel order as,

$$S(I_k) = D(I_k, I_1) + D(I_k, I_2) + \cdots + D(I_k, I_N), \tag{2}$$

where N is the number of pixels in image I. It is

easy to see that pixels with the same color have the

same saliency under this definition, since the measure is

oblivious to spatial relations. Thus, rearranging (2) such

that the terms with the same color value $c_j$ are grouped

together, we get saliency value for each color as,

$$S(I_k) = S(c_l) = \sum_{j=1}^{n} f_j D(c_l, c_j), \tag{3}$$
