Porting and Optimizing NAMD on Sunway TaihuLight Supercomputer

Wenjun Yao, Weikeng Chen, Zhichao Su, Junshi Chen, Chenzhi Liao and Hong An

Department of Computer Science and Technology, University of Science and Technology of China

Email: {yaowj,cwk32,suzc,chenjs,czliao}@mail.ustc.edu.cn, han@ustc.edu.cn

Abstract—NAMD (Nanoscale Molecular Dynamics) is an open-source molecular dynamics simulation package that can simulate macromolecules with millions of atoms on massively parallel computer systems. NAMD uses atom partitioning and force division to exploit parallel computing with load-balance control. In this paper, we focus on porting NAMD to, and optimizing it on, one of the top supercomputers, Sunway TaihuLight. Sunway TaihuLight has a theoretical peak performance of 125.4 Pflop/s, a LINPACK efficiency of no less than 70%, and 10 million cores. Performance analysis shows that the total performance is increased nearly 30 times, and that the single-core performance is more than twice that of the Intel Xeon E5-2650 V2. The theoretical scalability of our port reaches about 1.8 million cores, which is a breakthrough compared with previous experiments on NAMD.

Keywords-Molecular dynamics simulation; Heterogeneous many-core processor

I. INTRODUCTION

NAMD (Nanoscale Molecular Dynamics) [1], [2] is a molecular dynamics simulation package that supports the simulation of millions of atoms on large-scale parallel computer systems. It uses force fields (such as Amber, CHARMM, and Dreiding) and numerically solves the equations of motion to simulate the motion of atoms. It can simulate molecular dynamics at different scales and is widely used in computational chemistry research [3], [4].

The Sunway TaihuLight supercomputer [5] is the top supercomputer developed by the National Research Center of Parallel Computer Engineering & Technology (NRCPC), with a peak performance of 125.4 Pflop/s. Under the LINPACK benchmark, it achieves a power efficiency of 6 Gflops/W and a computing efficiency of 74.15%. The Sunway supercomputer provides two types of nodes. The first type is the high-performance computing node with the ALPHA architecture, which is dedicated to computation. The second type is the management node, built on a commercial Intel platform, which is used for system management, software development, and job submission. In a computing node, each CPU has four core groups (CGs), and each core group contains one management processing element (MPE) as the main core and 64 computing processing elements (CPEs) as slave cores, all of which share the memory space of that core group. The computing nodes are managed by the Intel-based management nodes. To submit a job, developers log in to a remote management node, build the application with the cross-compilation tools, and submit the job using the bsub command.
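As a rough consistency check on the LINPACK figures quoted above, the following sketch derives the sustained rate and the power draw they imply. It assumes that the 6 Gflops/W figure is measured against the sustained LINPACK rate rather than the theoretical peak; the function and constant names are illustrative only.

```python
# Figures quoted in the text.
PEAK_PFLOPS = 125.4          # theoretical peak performance, Pflop/s
LINPACK_EFFICIENCY = 0.7415  # fraction of peak sustained under LINPACK
GFLOPS_PER_WATT = 6.0        # reported power efficiency, Gflops/W


def sustained_pflops(peak_pflops: float, efficiency: float) -> float:
    """Sustained rate implied by a peak rate and an efficiency fraction."""
    return peak_pflops * efficiency


def implied_power_mw(pflops: float, gflops_per_watt: float) -> float:
    """Power draw in MW implied by a rate and a power efficiency.

    Assumption: the power efficiency refers to the same rate passed in.
    """
    watts = (pflops * 1e15) / (gflops_per_watt * 1e9)
    return watts / 1e6


sustained = sustained_pflops(PEAK_PFLOPS, LINPACK_EFFICIENCY)
power = implied_power_mw(sustained, GFLOPS_PER_WATT)

print(f"sustained LINPACK rate: {sustained:.1f} Pflop/s")
print(f"implied power draw:     {power:.1f} MW")
```

Under these assumptions the quoted figures correspond to roughly 93 Pflop/s sustained and a power draw on the order of 15 MW.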

In this paper, we port NAMD to the Sunway TaihuLight supercomputer and optimize it for better simulation efficiency. Before porting the main program, we need to port three third-party libraries used by NAMD: TCL, FFTW, and CHARM++ [6], [7], all of which are ported by cross compilation. CHARM++ is the parallel programming framework underlying NAMD; it manages task scheduling and load balancing. We then modify NAMD to make use of the instructions of the Sunway CPU to accelerate the main computation.
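The porting order described above can be sketched as a small dependency graph. This is only an illustration: it assumes that the three libraries are independent of one another (the text does not say otherwise), and the names `PORTING_DEPENDENCIES` and `porting_order` are hypothetical.

```python
from graphlib import TopologicalSorter

# Each component maps to the set of components that must be ported first.
# Assumption: TCL, FFTW, and CHARM++ do not depend on each other.
PORTING_DEPENDENCIES = {
    "TCL": set(),
    "FFTW": set(),
    "CHARM++": set(),
    "NAMD": {"TCL", "FFTW", "CHARM++"},
}


def porting_order(dependencies: dict[str, set[str]]) -> list[str]:
    """Return one valid order in which the components can be ported."""
    return list(TopologicalSorter(dependencies).static_order())


order = porting_order(PORTING_DEPENDENCIES)
print(order)  # the three libraries in some order, then NAMD last
```

Any order produced this way places NAMD after all three libraries, which matches the sequence followed in this work.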

II. SUNWAY CPU ARCHITECTURE

The Sunway supercomputer uses processors produced by the Shanghai High Performance IC Design Center. On the software side, it runs a customized x64 Linux operating system with compilers for basic languages such as C/C++ and Fortran, and it supports parallel programming models such as MPI, OpenMP, and OpenACC. Sunway also provides a high-performance thread library for fine-grained management of parallel computing. The Sunway processors run at a frequency of 1.5 GHz.

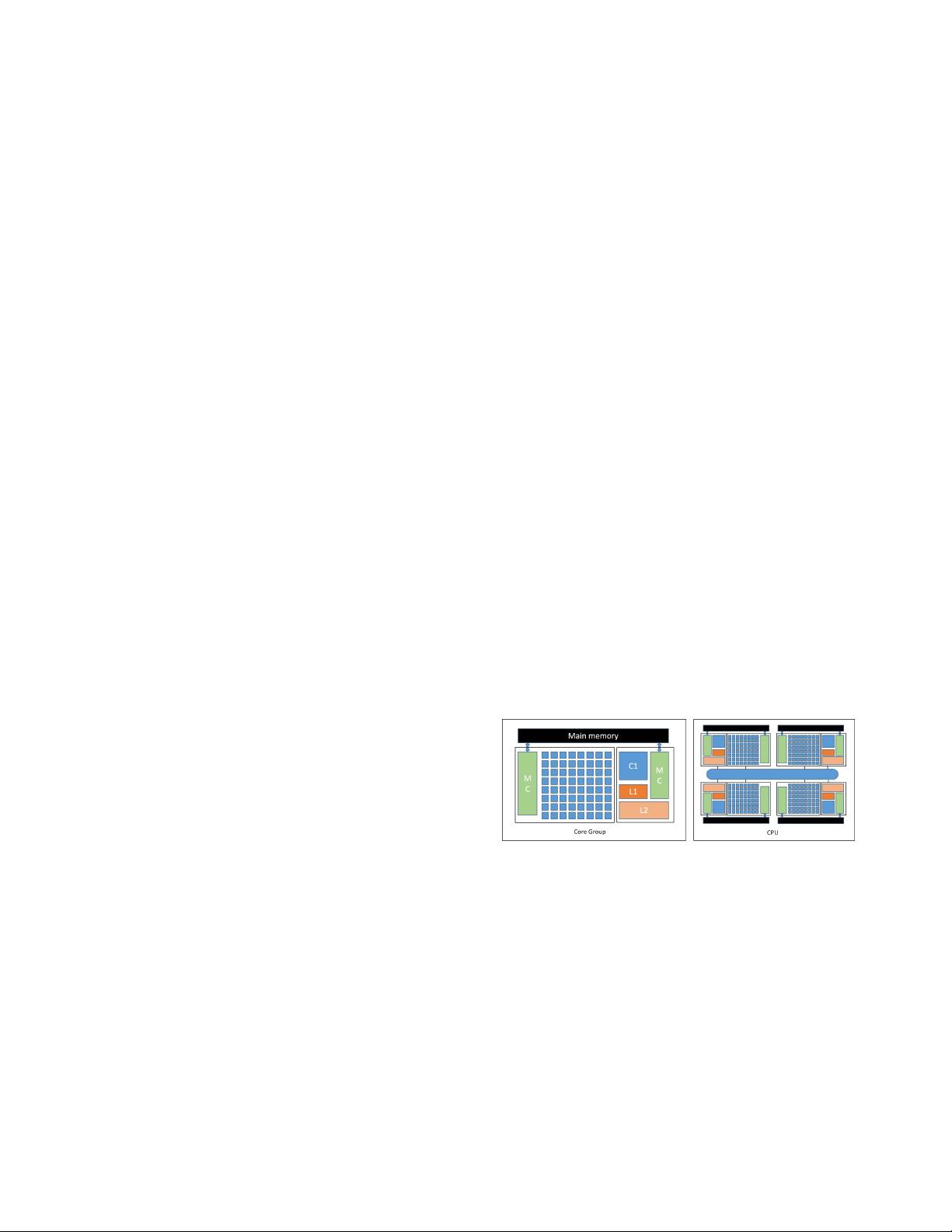

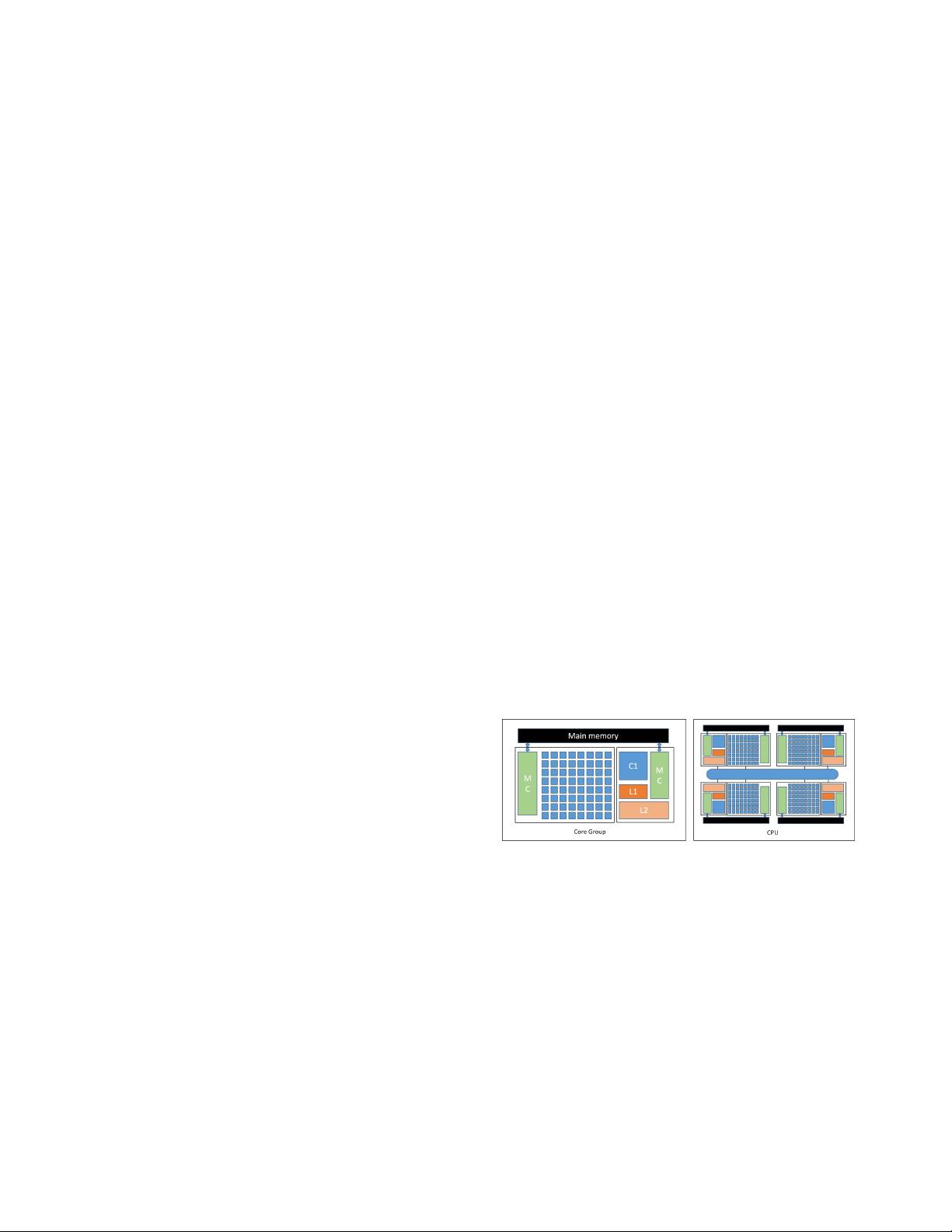

Figure 1. Sunway Core Group and Sunway Processor

Each processor has four core groups (CGs), and each core group has one management processing element (MPE) and an 8×8 array of computing processing elements (CPEs). Each processor also has memory controllers (MCs) for memory access. These components on the CPU are interconnected. The four core groups in a processor are connected by a network on chip (NoC), and each core group has a private memory space. Within a core group, the MPE and the CPEs are connected through the memory controller (MC) and the memory space. All processors are interconnected through a system interface (SI). The structure of a core group and of a Sunway CPU is shown in Fig. 1.
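The core counts implied by this hierarchy can be worked out with a short sketch. The class and function names below are illustrative and not part of any Sunway toolchain; the 1.8 million core figure is the approximate scalability quoted in the abstract, so the resulting processor count is only indicative.

```python
import math
from dataclasses import dataclass, field


@dataclass(frozen=True)
class CoreGroup:
    """One core group: one MPE plus an 8x8 array of CPEs."""
    mpes: int = 1
    cpe_rows: int = 8
    cpe_cols: int = 8

    @property
    def cpes(self) -> int:
        return self.cpe_rows * self.cpe_cols

    @property
    def cores(self) -> int:
        return self.mpes + self.cpes


@dataclass(frozen=True)
class Processor:
    """One Sunway processor: four core groups connected by the NoC."""
    core_groups: int = 4
    core_group: CoreGroup = field(default_factory=CoreGroup)

    @property
    def cores(self) -> int:
        return self.core_groups * self.core_group.cores


def processors_for(total_cores: int, processor: Processor) -> int:
    """Smallest number of whole processors providing total_cores cores."""
    return math.ceil(total_cores / processor.cores)


cpu = Processor()
print(cpu.core_group.cpes)              # CPEs per core group
print(cpu.core_group.cores)             # cores per core group (MPE + CPEs)
print(cpu.cores)                        # cores per processor
print(processors_for(1_800_000, cpu))   # processors for ~1.8 million cores
```

Each core group therefore contributes 65 cores and each processor 260, so a run on about 1.8 million cores spans on the order of seven thousand processors.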