AUTOMATIC IMAGE DATASET CONSTRUCTION WITH MULTIPLE TEXTUAL METADATA

Yazhou Yao¹,², Jian Zhang¹, Fumin Shen³, Xiansheng Hua⁴, Jingsong Xu¹, Zhenmin Tang²

¹University of Technology Sydney, Australia

²Nanjing University of Science and Technology, China

³University of Electronic Science and Technology of China

⁴Alibaba Group, Hangzhou, China

{yaoyazhou, fumin.shen, huaxiansheng}@gmail.com, tzm.cs@njust.edu.cn

{jian.zhang, jingsong.xu}@uts.edu.au

ABSTRACT

The goal of this work is to automatically collect a large number of highly relevant images from the Internet for given queries. A novel image dataset construction framework is proposed that employs multiple textual metadata. Specifically, the given queries are first expanded by searching the Google Books Ngrams Corpora to obtain a richer semantic description, from which the visually non-salient and less relevant expansions are then filtered out. After retrieving images from the Internet with the filtered expansions, we further remove noisy images by clustering and progressive Convolutional Neural Network (CNN) based methods. To verify the effectiveness of the proposed method, we construct a dataset with 10 categories, which is not only much larger than the manually labeled datasets STL-10 and CIFAR-10 but also has comparable cross-dataset generalization ability.

Index Terms— Automatic Image Dataset Construction,

Multiple Textual Metadata, Clustering, Progressive CNN

1. INTRODUCTION

Labelled image datasets have played a critical role in high-level image understanding and have driven progress in feature design. For example, ImageNet has been one of the most important factors in the recent advances in developing and deploying visual representation learning models (e.g., deep CNNs). However, the process of constructing ImageNet is both time consuming and labor intensive. It is therefore natural to leverage image search engines (e.g., Google Image) or social networks (e.g., Flickr) to construct the desired image dataset. Generally, the Google Image search engine has relatively higher accuracy than social networks such as Flickr. However, directly constructing an image dataset from images retrieved from Google is not practical, mainly because of the download restrictions for each query and the unsatisfactory accuracy of lower-ranked images. To tackle this problem, we propose a novel image dataset construction framework, through which a large number of highly relevant images are automatically extracted from the Internet.

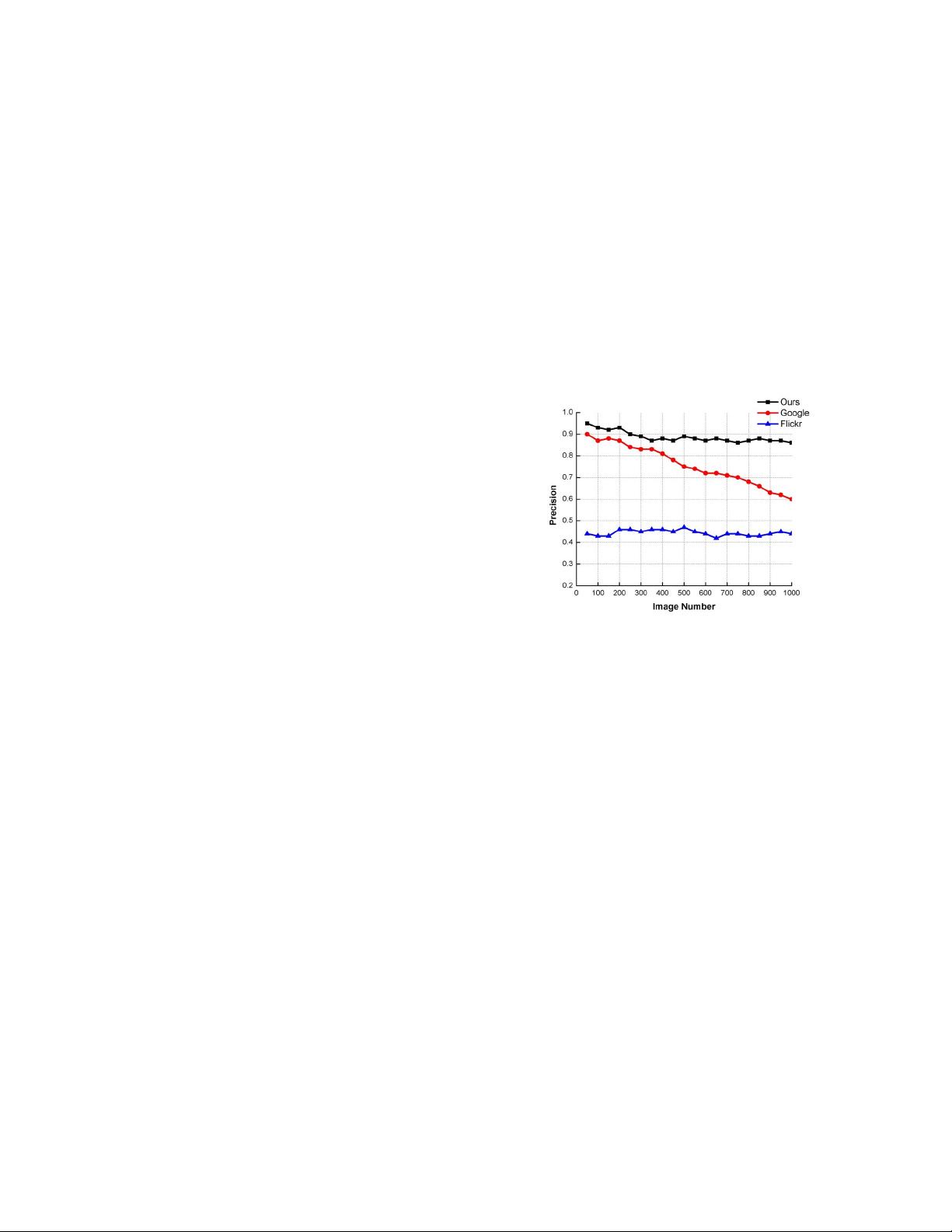

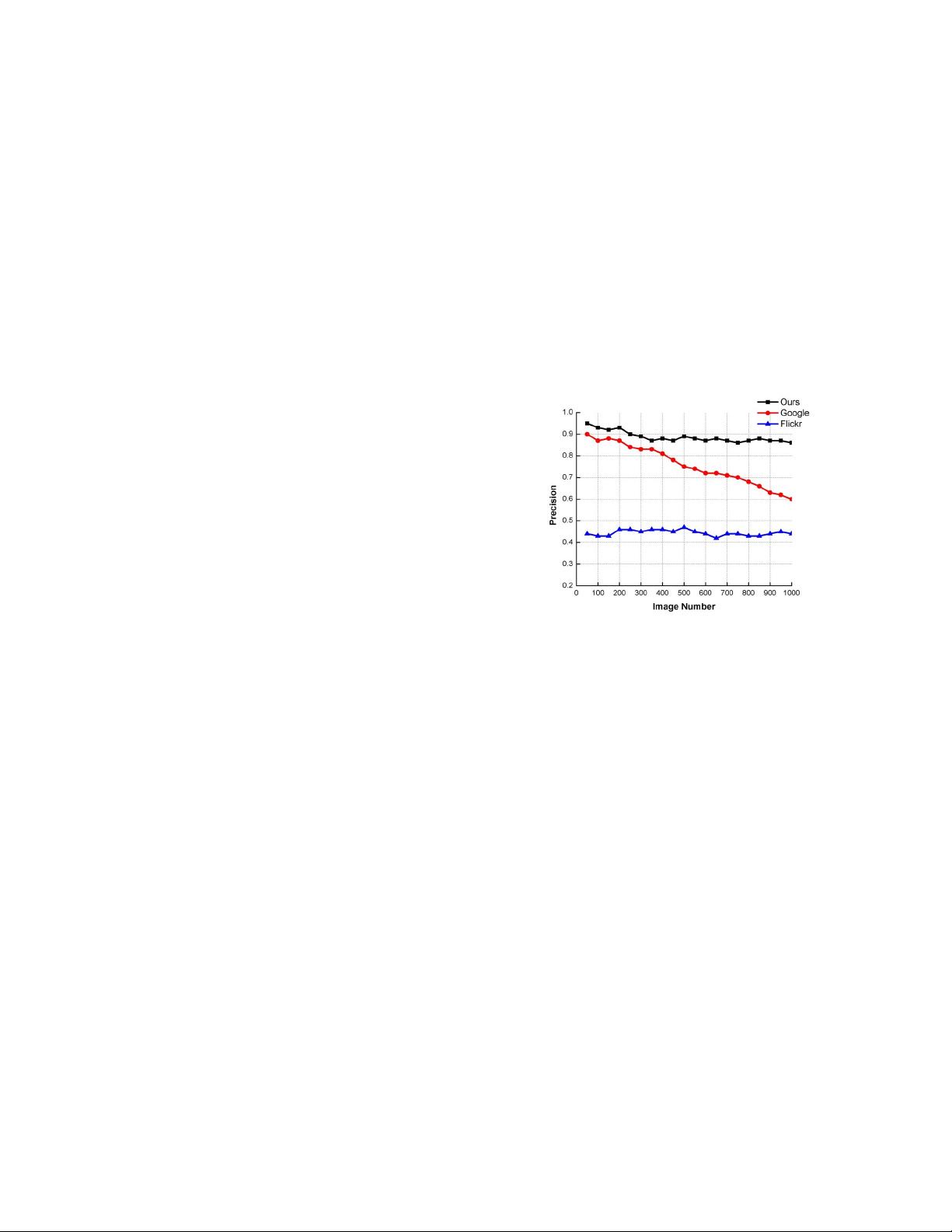

Fig. 1: The average precision of the top 1000 images from Google Image, Flickr, and our dataset for 10 queries.

In order to build a high-quality image dataset from the Internet, we propose to construct the collection for each query in three major steps: query expansion, noisy expansion filtering, and noisy image filtering. Specifically, by searching the Google Books Ngrams Corpora (GBNC), we first expand the given query to a set of semantically rich expansions, from which the noisy query expansions are then removed by exploiting both word-word and visual-visual similarity. After obtaining the candidate images by retrieving these filtered expansions with a search engine, we apply clustering and progressive CNN based methods to further remove noisy images, which is an important step. To verify the effectiveness of the proposed automatic image dataset construction method, we build an image dataset with 10 categories named AutoImgSet-10. We evaluate its precision by comparing with the methods of [1, 2, 3]. In addition, we evaluate its cross-dataset generalization ability by comparing with two manually labeled image datasets, STL-10 and CIFAR-10. Fig. 1 demonstrates the improvement achieved by our method over the initially downloaded images from Google and Flickr.
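The three steps described above can be pictured as a simple chain of stages. The following Python sketch is only an illustration of that control flow, not the implementation used in this work: the function `build_collection`, its callback parameters, the toy dictionaries, and the de-duplication of retrieved images are all assumptions introduced here. In particular, the GBNC lookup, the word-word and visual-visual similarity tests, and the clustering and CNN based image filters are replaced by trivial stand-ins.

```python
from typing import Callable, Iterable, List


def build_collection(
    query: str,
    expand: Callable[[str], Iterable[str]],
    keep_expansion: Callable[[str, str], bool],
    retrieve: Callable[[str], Iterable[str]],
    keep_image: Callable[[str, str], bool],
) -> List[str]:
    """Chain the three steps: expand, filter expansions, filter images."""
    # Step 1: query expansion (a GBNC search in the paper; a callback here).
    expansions = list(expand(query))
    # Step 2: drop noisy expansions (similarity tests in the paper).
    kept = [e for e in expansions if keep_expansion(query, e)]
    # Step 3: retrieve candidates per kept expansion and drop noisy images.
    # De-duplicating across expansions is an assumption of this sketch.
    seen, images = set(), []
    for expansion in kept:
        for image in retrieve(expansion):
            if image not in seen and keep_image(query, image):
                seen.add(image)
                images.append(image)
    return images


# Toy stand-ins (hypothetical data, for illustration only).
TOY_EXPANSIONS = {"horse": ["jumping horse", "horse power", "racing horse"]}
TOY_INDEX = {
    "jumping horse": ["h1.jpg", "h2.jpg", "noise1.jpg"],
    "racing horse": ["h2.jpg", "h3.jpg"],
    "horse power": ["engine.jpg"],
}

result = build_collection(
    "horse",
    expand=lambda q: TOY_EXPANSIONS.get(q, []),
    keep_expansion=lambda q, e: e != "horse power",  # stand-in expansion filter
    retrieve=lambda e: TOY_INDEX.get(e, []),
    keep_image=lambda q, img: not img.startswith("noise"),  # stand-in image filter
)
print(result)
```

Passing each stage as a callback keeps the sketch independent of any particular corpus, search engine, or classifier, so each filter can be swapped without touching the overall flow.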

2. RELATED WORK

To our knowledge, there are three principal approaches to constructing image datasets: manual annotation, semi-automatic methods, and automatic methods. Manual annotation has a high
