inputs. Zhang et al. [11] utilized a recurrent 3D CNN (R3DCNN) to learn spatial-spectral-temporal EEG features for cross-task mental workload assessment. Wu et al. [12] proposed a deep stacked contractive autoencoder network (DCAEN) to learn fatigue-related features from raw EEG data in order to recognize the pilot's fatigue status. Gao et al. [13] developed an EEG-based spatial-temporal convolutional neural network (ESTCNN) to detect the subject's state of fatigue with high accuracy. However, each of these studies relied on a single modality to detect cognitive states.

Using multimodal sensors is an effective way to improve detection performance compared with single-sensor-based recognition. Various combinations of biosignal modalities (e.g., EEG, ECG, photoplethysmogram, EOG, EDA, respiration, and EMG) have been used to analyze fatigue states [41–46]. Hogervorst et al. [15] tested the combined information of EEG, skin conductance, respiration, ECG, pupil size, and eye blinks for mental workload estimation. Ahn et al. [16] collected EEG, fNIRS, and ECG data simultaneously to develop algorithms that allow researchers to explore the neurophysiological correlates of subjects' state of fatigue. The combination of LDA methods yielded substantial improvements in the ability to discriminate between the well-rested (i.e., normal) state and the sleep-deprived (i.e., fatigue) state. Liu et al. [17] integrated EEG, fNIRS, and physiological measures for the classification of three workload levels in an n-back working memory task. They showed that the fusion of these modalities could improve the classification performance. Zhang et al. [18] used EEG and ECG signals to validate the effectiveness of interactive mutual information modeling (IMIM), a feature-weight-driven signal-fusion method based on mutual information. However, to the best of our knowledge, multimodal biosignals have not been combined with MDL methods (see the summary of related works in Table 1).

In these multimodal studies, hand-crafted features were extracted from each biosignal and then used to classify the mental states. For example, for EEG signals, power spectral density (PSD) features in specific frequency bands, including delta (1–4 Hz), theta (4–8 Hz), alpha (8–13 Hz), beta (13–30 Hz), and gamma (30–40 Hz), were typically used [6,12].
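The band-wise PSD features described above can be sketched as follows. This is a minimal illustration, not the pipeline of any cited study: it assumes a single-channel signal, a Hann-windowed periodogram as the PSD estimator (the cited works may use Welch's method or another estimator), and NumPy. The names `BANDS` and `band_powers`, the 200 Hz sampling rate, and the synthetic test signal are hypothetical.

```python
import numpy as np

# Band edges in Hz, taken from the ranges listed in the text.
BANDS = {
    "delta": (1.0, 4.0),
    "theta": (4.0, 8.0),
    "alpha": (8.0, 13.0),
    "beta": (13.0, 30.0),
    "gamma": (30.0, 40.0),
}


def band_powers(signal, fs):
    """Return the mean PSD per band for one EEG channel.

    Assumption: a Hann-windowed periodogram is used as the PSD estimate.
    """
    x = np.asarray(signal, dtype=float)
    x = x - x.mean()  # remove the DC offset
    window = np.hanning(x.size)
    spectrum = np.fft.rfft(x * window)
    # PSD normalised by the sampling rate and the window energy.
    psd = np.abs(spectrum) ** 2 / (fs * np.sum(window ** 2))
    # Convert to a one-sided PSD: double every bin except DC
    # (and except the Nyquist bin, which exists only for even lengths).
    if x.size % 2 == 0:
        psd[1:-1] *= 2.0
    else:
        psd[1:] *= 2.0
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    powers = {}
    for name, (lo, hi) in BANDS.items():
        mask = (freqs >= lo) & (freqs < hi)
        powers[name] = float(psd[mask].mean())
    return powers


# Synthetic example: a 10 Hz oscillation plus weak noise (hypothetical data).
fs = 200.0
t = np.arange(0.0, 4.0, 1.0 / fs)
rng = np.random.default_rng(0)
eeg = np.sin(2 * np.pi * 10.0 * t) + 0.1 * rng.standard_normal(t.size)
powers = band_powers(eeg, fs)
print(max(powers, key=powers.get))
```

Because the synthetic oscillation lies inside the 8–13 Hz range, the alpha entry should dominate the returned dictionary. In practice one such feature vector would be computed per channel and per analysis window.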

In ECG signals, time-domain features (e.g., the mean heart rate (MHR) and the standard deviation of normal-to-normal intervals (SDNN)) and frequency-domain features (e.g., the PSD in the 0.04–0.15 Hz low-frequency (LF) band, the PSD in the 0.15–0.40 Hz high-frequency (HF) band, and their ratio (LFHF)) were employed [16,47–49].
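As a rough sketch of how these ECG features could be computed from a series of normal-to-normal (RR) intervals, consider the example below. It rests on several assumptions that do not come from the cited studies: R-peak detection has already been done, the RR series is resampled to an even 4 Hz grid by linear interpolation, and a Hann-windowed periodogram is used as the PSD estimate. The helper names `band_power` and `hrv_features` and the synthetic RR series are hypothetical.

```python
import numpy as np


def band_power(freqs, psd, lo, hi):
    """Integrate the PSD over [lo, hi) with a simple rectangle rule."""
    mask = (freqs >= lo) & (freqs < hi)
    return float(psd[mask].sum() * (freqs[1] - freqs[0]))


def hrv_features(rr_s, fs_resample=4.0):
    """Compute MHR, SDNN, LF, HF, and LFHF from RR intervals in seconds."""
    rr_ms = np.asarray(rr_s, dtype=float) * 1000.0
    feats = {
        # Mean of the instantaneous heart rate in beats per minute.
        "MHR": float(np.mean(60000.0 / rr_ms)),
        # Sample standard deviation of the intervals in milliseconds.
        "SDNN": float(np.std(rr_ms, ddof=1)),
    }
    # Beats are unevenly spaced in time, so resample to an even grid
    # before estimating the spectrum (assumption: linear interpolation).
    beat_times = np.cumsum(rr_ms) / 1000.0
    grid = np.arange(beat_times[0], beat_times[-1], 1.0 / fs_resample)
    rr_even = np.interp(grid, beat_times, rr_ms)
    rr_even -= rr_even.mean()
    window = np.hanning(rr_even.size)
    spectrum = np.fft.rfft(rr_even * window)
    # One-sided PSD in ms^2/Hz; the factor 2 is valid for bins strictly
    # between DC and Nyquist, which covers both bands used below.
    psd = 2.0 * np.abs(spectrum) ** 2 / (fs_resample * np.sum(window ** 2))
    freqs = np.fft.rfftfreq(rr_even.size, d=1.0 / fs_resample)
    feats["LF"] = band_power(freqs, psd, 0.04, 0.15)
    feats["HF"] = band_power(freqs, psd, 0.15, 0.40)
    feats["LFHF"] = feats["LF"] / feats["HF"]
    return feats


# Synthetic RR series: 0.8 s mean interval modulated at 0.25 Hz,
# i.e., inside the HF band (hypothetical data).
rr, t = [], 0.0
for _ in range(300):
    interval = 0.8 + 0.05 * np.sin(2 * np.pi * 0.25 * t)
    rr.append(interval)
    t += interval
feats = hrv_features(rr)
print(feats)
```

Since the synthetic modulation sits in the HF band, the LFHF ratio for this input should be well below 1. Real recordings would also need artifact and ectopic-beat handling, which this sketch omits.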

In respiration signals, the standard deviations of the amplitude from the abdomen and thorax channels (i.e., SDAbd and SDThor, respectively) were used as time-domain features [17]. Further, the PSDs of the dominant respiration frequency band from the abdomen and thorax channels (i.e., DRFAbd and DRFThor, respectively) were extracted as frequency-domain respiration features. For the EDA signals, the mean amplitude of EDA (MEDA) and the standard deviation of the amplitude (SDEDA) were used as time-domain features. Also, the PSD extracted from the frequency band of the EDA index of the sympathetic nervous system (EDASymp) was investigated as a frequency-domain feature [15,50].

3. Methods

3.1. Experiment

3.1.1. Participants

Eight healthy subjects (6 males and 2 females; age: 25.7 ± 2.6 years) with over 100 hours of flight experience at the Taean Flight Education Center took part in this study. The study was reviewed and approved by the Institutional Review Board at Korea University [1040548-KU-IRB-18-92-A-2], and written informed consent was obtained from all participants before the experiments. All subjects had normal or corrected-to-normal vision, normal hearing, and no history of psychiatric or neurological diseases. They were asked to refrain from alcohol and coffee and to sleep for 6–8 h before the experiment. They were instructed to fill out questionnaires that recorded their status and evaluated our experimental paradigm.

3.1.2. Experimental setup

We designed an experimental environment using a flight simulator system (Cessna 172, FRASCA International, Inc.) (see Fig. 1). The cockpit consisted of the wide visual display


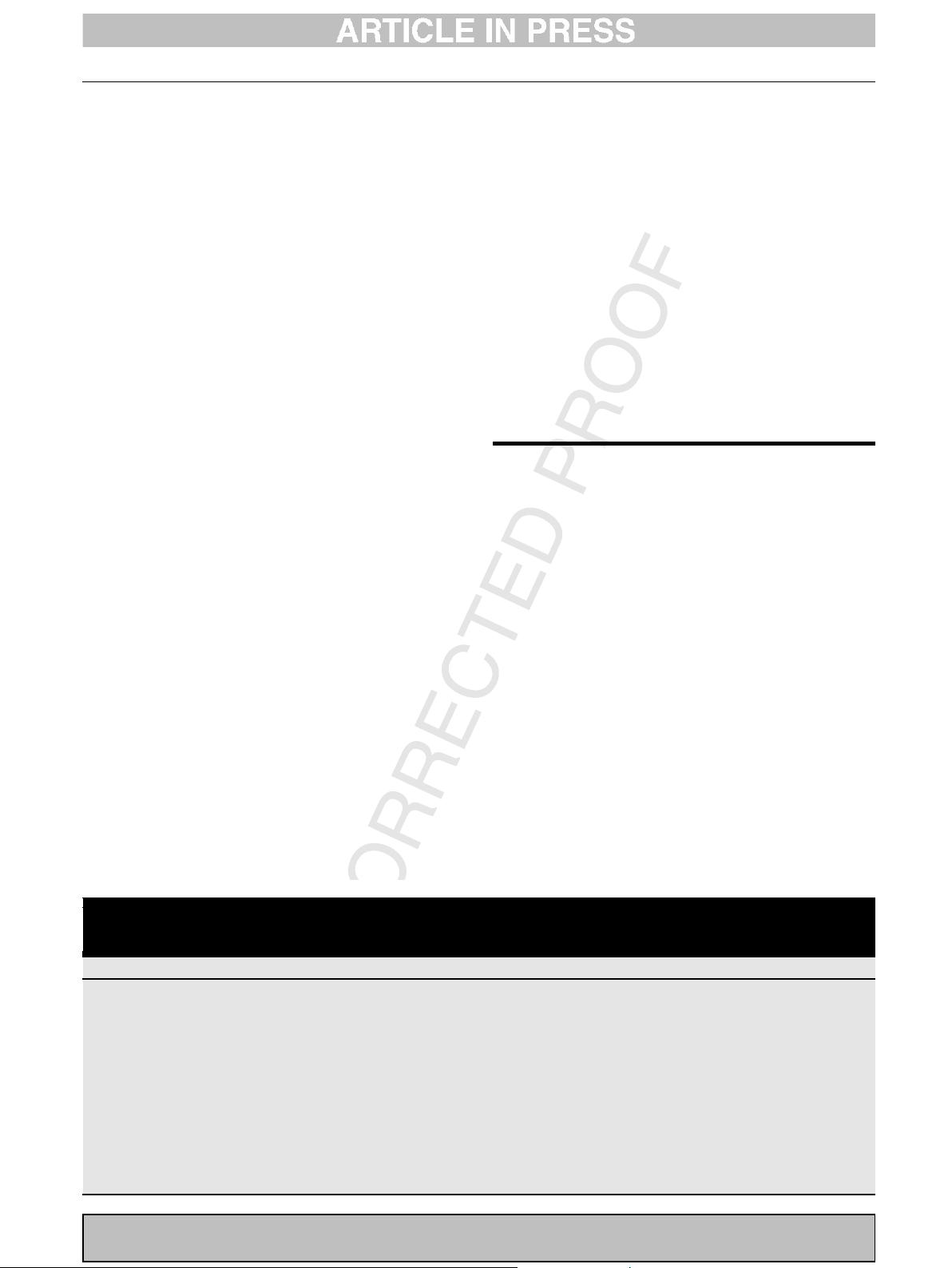

Table 1 – Summary of related works. N, D, W, and F indicate normal, distraction, workload, and fatigue, respectively. Moreover, Wi and Fi indicate level i of the corresponding mental state. Note that the performance values are representative results of the referred studies; performance could differ depending on the experimental conditions.

| References | Modalities | # of classes (types) | # of subjects | Methods | Performance |
|---|---|---|---|---|---|
| Sonnleitner et al. [5] | EEG | 2 (D/N) | 20 | rLDA | 92% |
| Chaudhuri et al. [7] | EEG | 2 (F/N) | 12 | Source feature, SVM | 86.8% |
| Dehais et al. [8] | EEG | 2 (W/N) | 18 | sLDA | 70.8% |
| Bashivan et al. [9] | EEG | 4 (W1/W2/W3/W4) | 13 | RCNN | 91.1% |
| Jiao et al. [10] | EEG | 4 (W1/W2/W3/W4) | 13 | CNN, PGBM | 92.4% |
| Zhang et al. [11] | EEG | 2 (W/N) | 20 | R3DCNN | 88.9% |
| Wu et al. [12] | EEG | 3 (F1/F2/N) | 40 | DCAEN | 91.7% |
| Gao et al. [13] | EEG | 2 (F/N) | 8 | ESTCNN | 97.4% |
| Patel et al. [14] | ECG | 2 (F/N) | 12 | Neural network | 90% |
| Hogervorst et al. [15] | EEG, eye-tracking measures | 2 (W1/W2) | 14 | Logistic regression | 91% |
| Ahn et al. [16] | EEG, fNIRS, ECG | 2 (F/N) | 11 | LDA | 75.9% |
| Liu et al. [17] | EEG, fNIRS, ECG, respiration | 3 (W1/W2/W3) | 21 | LDA, Naive-Bayes | 65.1% |
| Zhang et al. [18] | EEG, ECG | 3 (W1/W2/W3) | 10 | IMIM, SVM | 89.9% |


Please cite this article in press as: Han S-Y, et al. Classification of pilots' mental states using a multimodal deep learning network. Biocybern Biomed Eng (2019), https://doi.org/10.1016/j.bbe.2019.12.002
