Simulation results are shown in Section 4, and the conclusion of this work is given in Section 5.

2. Problem formulation

In this section, we first solve the decoding problem in Fig. 1(a), because it is the basis of the whole framework.

2.1. Decoding analysis

Let $X \subset \mathbb{R}$ be a discrete-alphabet source with probability density function (PDF) $f_X(x)$. We model each channel of the network as a binary error memoryless channel (BEC): its input alphabet is $\{0, 1\}$, and each output bit is either intact or inverted. We denote the error by $e \in \{0, 1\}$, and the error transition probabilities are assumed to be $P(1 \mid 0) = P(0 \mid 1) = p$. Let the input sequence be $X = x_0, x_1, x_2, \ldots$; each element is quantized by a scalar quantizer and bijectively mapped to a finite central codebook $C$ with $M$ elements by the function $Q_c: X \to C = \{0, 1, 2, \ldots, M-1\}$. Then, in the AMDQ step, each central codeword is mapped to a side codeword pair to generate two descriptions by the function $Q_s: C \to \{I, J\}$, where $I \triangleq \{I_0, I_1, \ldots, I_{N_1-1}\}$ and $J \triangleq \{J_0, J_1, \ldots, J_{N_2-1}\}$ are two finite side codebooks. Each element of $I$ and $J$ can be written in binary format as $i_0 i_1 \ldots i_{b_1-1} \in \{0, 1\}^{b_1}$ and $j_0 j_1 \ldots j_{b_2-1} \in \{0, 1\}^{b_2}$, where $b_1 = \lceil \log_2 N_1 \rceil$ and $b_2 = \lceil \log_2 N_2 \rceil$. The bit rates of the two side descriptions and the central description of MDC are $R_1 = \log_2 N_1$, $R_2 = \log_2 N_2$, and $R_c = \log_2 M$ (before entropy coding), respectively, and the redundancy rate of MDC, denoted $\gamma$, can be calculated as

$$\gamma = R_1 + R_2 - R_c = \log_2 N_1 N_2 - \log_2 M \tag{1}$$
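As a quick numerical check of (1), the following minimal sketch computes the codeword lengths, the side and central rates, and the redundancy for given codebook sizes. The function name `mdc_rates` and the example sizes ($N_1 = N_2 = 8$, $M = 32$) are illustrative assumptions, and the redundancy is read here as the difference $R_1 + R_2 - R_c$.

```python
import math


def mdc_rates(n1: int, n2: int, m: int) -> dict:
    """Rates in bits per sample (before entropy coding) and redundancy.

    n1, n2: sizes of the two side codebooks; m: size of the central codebook.
    """
    if not (1 <= m <= n1 * n2):
        raise ValueError("M must satisfy 1 <= M <= N1 * N2")
    # Integer ceil(log2(n)) without floating-point rounding issues.
    b1 = (n1 - 1).bit_length()
    b2 = (n2 - 1).bit_length()
    r1 = math.log2(n1)
    r2 = math.log2(n2)
    rc = math.log2(m)
    return {"b1": b1, "b2": b2, "R1": r1, "R2": r2, "Rc": rc,
            "gamma": r1 + r2 - rc}


# Example values chosen for illustration only.
rates = mdc_rates(8, 8, 32)
print(rates)
```

With these example sizes each side index takes 3 bits, and the redundancy is 1 bit per sample.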

We denote the two sequences generated from the input $X$ as $I_X$ and $J_X$. They are entropy coded with a variable length code (VLC) and transmitted separately, each over a BEC channel. At the terminal, the two received sequences are decoded and denoted $\hat{I}_X$ and $\hat{J}_X$. The signal is then reconstructed as $Y = y_0, y_1, y_2, \ldots$. The optimization problem is to find the reconstruction $\hat{X}$ of the original input $X$, that is

$$\hat{X} = \arg\max_X \left[ P(X \mid Y) \right] \tag{2}$$

We assume the input sequence is an independent and identically distributed (i.i.d.) source. For correlated sources, such as Markov sources, see [17,20,28]. Then (2) can be rewritten as

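$$
\begin{aligned}
\hat{X} &= \arg\max_X \left[ \prod_{i=0,1,\ldots} P(x_i \mid y_i) \right] \\
&= \arg\max_X \left[ \prod_{i=0,1,\ldots} P(I_i, J_i \mid \hat{I}_i, \hat{J}_i) \right] \\
&= \arg\max_X \left[ \prod_{i=0,1,\ldots} \left( P(I_i \mid \hat{I}_i)\, P(J_i \mid \hat{J}_i) \right) \right]
\end{aligned} \tag{3}
$$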

$P(I_i \mid \hat{I}_i)$ is the transition probability from $\hat{I}_i$ to $I_i$, and can be calculated as

$$P(I_i \mid \hat{I}_i) = p^{h(I_i \mid \hat{I}_i)} (1-p)^{b_1 - h(I_i \mid \hat{I}_i)} \tag{4}$$

where $h(\cdot)$ denotes the Hamming distance function. Similarly, $P(J_i \mid \hat{J}_i)$ can be calculated as

$$P(J_i \mid \hat{J}_i) = p^{h(J_i \mid \hat{J}_i)} (1-p)^{b_2 - h(J_i \mid \hat{J}_i)} \tag{5}$$
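The transition probabilities in (4) and (5) and the per-sample maximization in (3) can be sketched as follows. This is only an illustration under stated assumptions: the helper names (`hamming`, `transition_prob`, `map_decode`) and the toy list of valid index pairs are hypothetical, codewords are treated as fixed-length binary indices, and, following (3)-(5) as written, no source prior is applied.

```python
def hamming(a: int, b: int) -> int:
    """Number of bit positions in which two codeword indices differ."""
    return bin(a ^ b).count("1")


def transition_prob(sent: int, received: int, bits: int, p: float) -> float:
    """p^h * (1 - p)^(bits - h), the form used in (4) and (5)."""
    h = hamming(sent, received)
    return p ** h * (1.0 - p) ** (bits - h)


def map_decode(received_pair, valid_pairs, b1, b2, p):
    """Return the valid (I, J) pair that maximizes the product in (3)."""
    i_hat, j_hat = received_pair
    return max(
        valid_pairs,
        key=lambda ij: transition_prob(ij[0], i_hat, b1, p)
        * transition_prob(ij[1], j_hat, b2, p),
    )


# Hypothetical toy set of index pairs that carry a central codeword.
valid_pairs = [(0, 0), (0, 1), (1, 1), (2, 3), (3, 3)]
p = 0.01

# 010 versus 011 differ in one bit, so the result is p * (1 - p)^2.
prob = transition_prob(2, 3, bits=3, p=p)

# Received pair (2, 7): the closest valid pair in Hamming distance wins
# whenever p < 0.5.
decoded = map_decode((2, 7), valid_pairs, b1=3, b2=3, p=p)
print(prob, decoded)
```

For $p < 0.5$ the product decreases with every additional bit error, so the maximization reduces to choosing the valid pair with the smallest total Hamming distance to the received pair.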

It is clear that $\hat{X}$ depends on both the AMDQ and $p$. The goal is to find an optimal AMDQ solution for the known BER of the network. Combining all the elements of $I$ and $J$, we obtain $N_1 N_2$ possible pairs, which can equivalently be denoted in the following combined binary format:

$$W = \{ w_m = i_0 i_1 \ldots i_{b_1-1}\, j_0 j_1 \ldots j_{b_2-1} \in \{0, 1\}^{b_1+b_2};\ m = 0, 1, \ldots, N_1 N_2 - 1 \}. \tag{6}$$

$M$ elements of $W$ are bijectively mapped to the elements of the set $C$; this subset is denoted $V_M = \{v_m \in W;\ m = 1, 2, \ldots, M\}$. From (1), $M$ is related to the redundancy rate of MDC. If the transmission is error-free, all possible sets $V_M$ are equivalent, because AMDQ is reversible. Otherwise, they are not equivalent, because of the bit errors over the wireless channel: different sets $V_M$ give the system different bit-error resilience.
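To make the role of $V_M$ under bit errors more concrete, the sketch below enumerates the combined words of (6) and lists which index pairs a single inverted bit can produce. It is a hedged illustration: the function names, the sizes $N_1 = N_2 = 8$, the pair $(2, 3)$, and the band-shaped set of used pairs are all assumptions chosen for the example, not the assignment studied in this paper.

```python
from itertools import product


def combined_words(n1, n2, b1, b2):
    """All N1 * N2 combined words (bits of I followed by bits of J)."""
    return [format(i, f"0{b1}b") + format(j, f"0{b2}b")
            for i, j in product(range(n1), range(n2))]


def single_bit_neighbors(i, j, b1, b2):
    """Index pairs received when exactly one transmitted bit is inverted.

    If N1 or N2 is not a power of two, some results may lie outside the
    side codebooks; this sketch does not filter them.
    """
    flips_i = [(i ^ (1 << k), j) for k in range(b1)]
    flips_j = [(i, j ^ (1 << k)) for k in range(b2)]
    return flips_i + flips_j


def unused_neighbors(pair, used_pairs, b1, b2):
    """Single-bit-error outcomes that fall outside the used set."""
    return [q for q in single_bit_neighbors(pair[0], pair[1], b1, b2)
            if q not in used_pairs]


W = combined_words(8, 8, 3, 3)
neighbors = single_bit_neighbors(2, 3, 3, 3)

# Hypothetical used set: pairs near the main diagonal of the index table.
used = {(i, j) for i in range(8) for j in range(8) if abs(i - j) <= 1}
outside = unused_neighbors((2, 3), used, 3, 3)
print(len(W), neighbors, outside)
```

A received pair that falls outside the used set cannot correspond to any central codeword, so the fraction of single-bit neighbors landing inside or outside the set is one simple way to compare candidate sets $V_M$.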

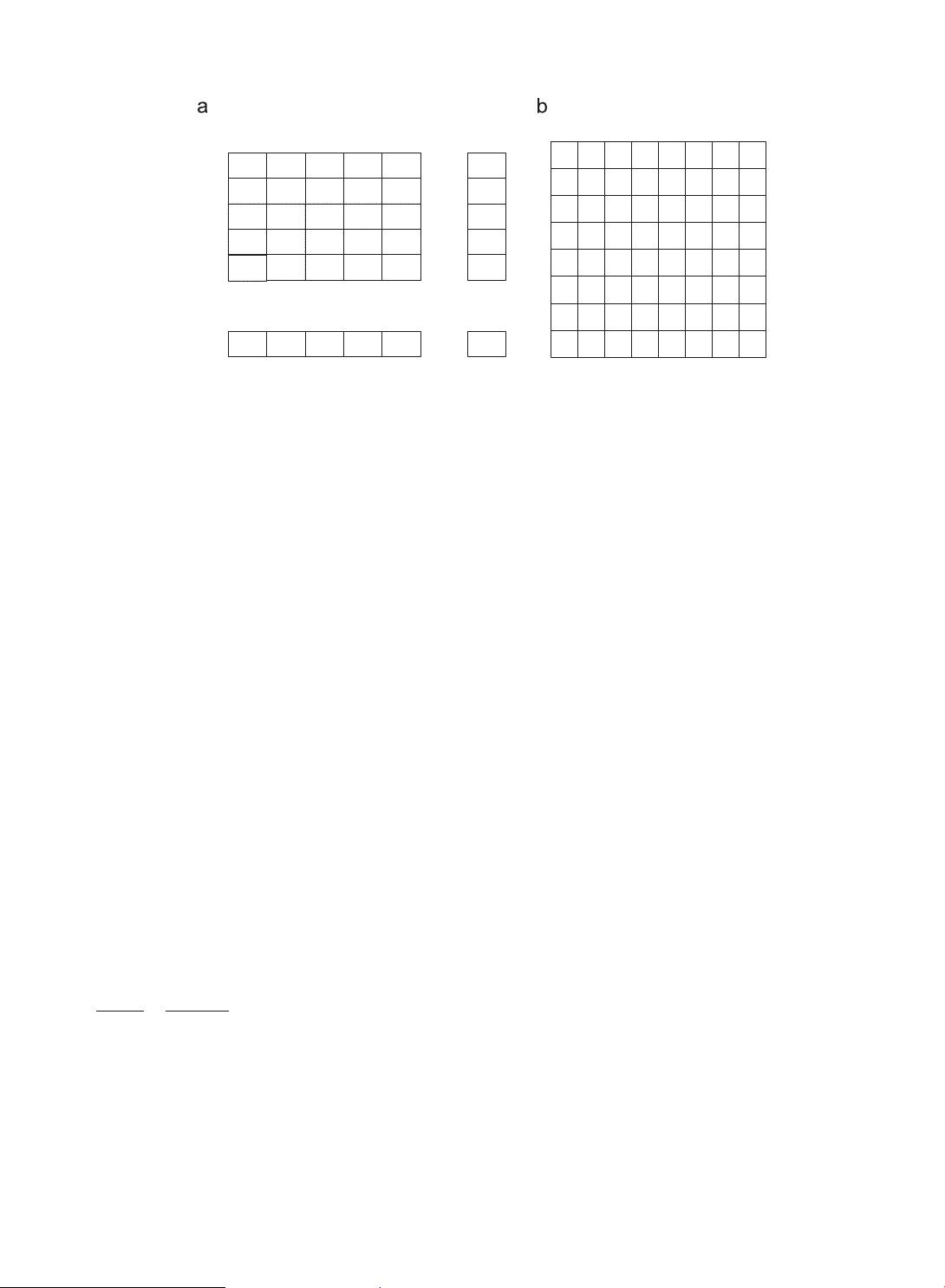

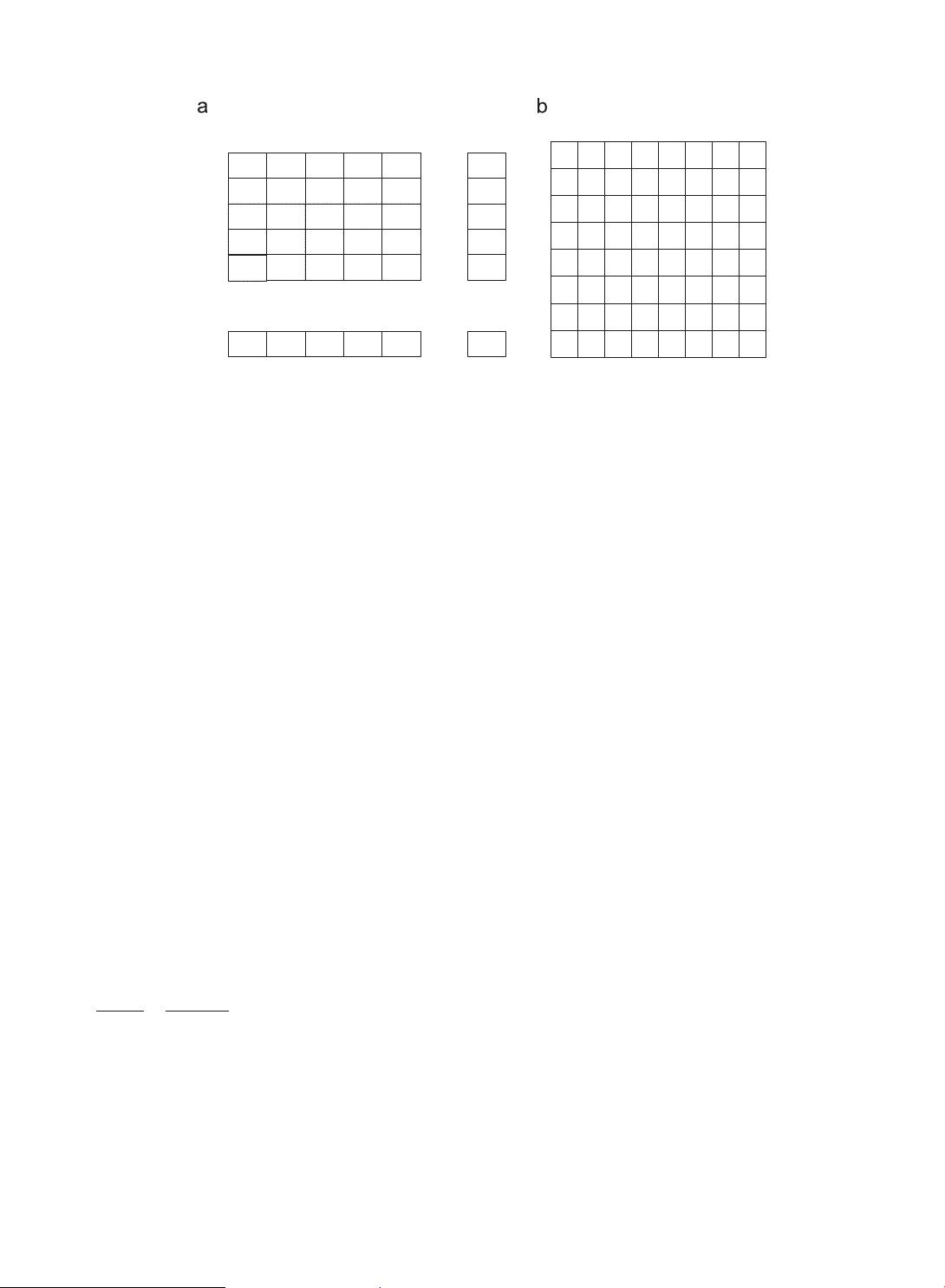

Take the IA of Fig. 2(b) as an example, where all the blocks stand for the set $W$ and the blocks with indices stand for the set $V_M$. We assume the input is a single sample $x = 11$. It is mapped to the pair $(2, 3)$ as two side descriptions, whose binary format is ('010 011'). When a 1-bit error occurs, there are 6 possible cases for the received codeword, namely (2,1), (2,2), (3,3), (6,3), (0,3), and (2,7), whose

Fig. 2. Index table. (a) Initialized index table. (b) MDSQ ($M = 32$).

M. Yang et al. / Signal Processing: Image Communication 28 (2013) 1132–1142