number of patients who received a but were unfortunately

not cured; that is

n

a;0

¼

X

n

i¼1

Iðy

i

¼ 0; a

i

¼ aÞ (4)

n

a;1

¼

X

n

i¼1

Iðy

i

¼ 1; a

i

¼ aÞ: (5)

The convenient conjugate prior then leads to a posteriori

distribution which is also a product of betas

pðw jDÞ¼

Y

K

a¼1

bet a ðw

a

j þn

a;1

;þ n

a;0

Þ: (6)

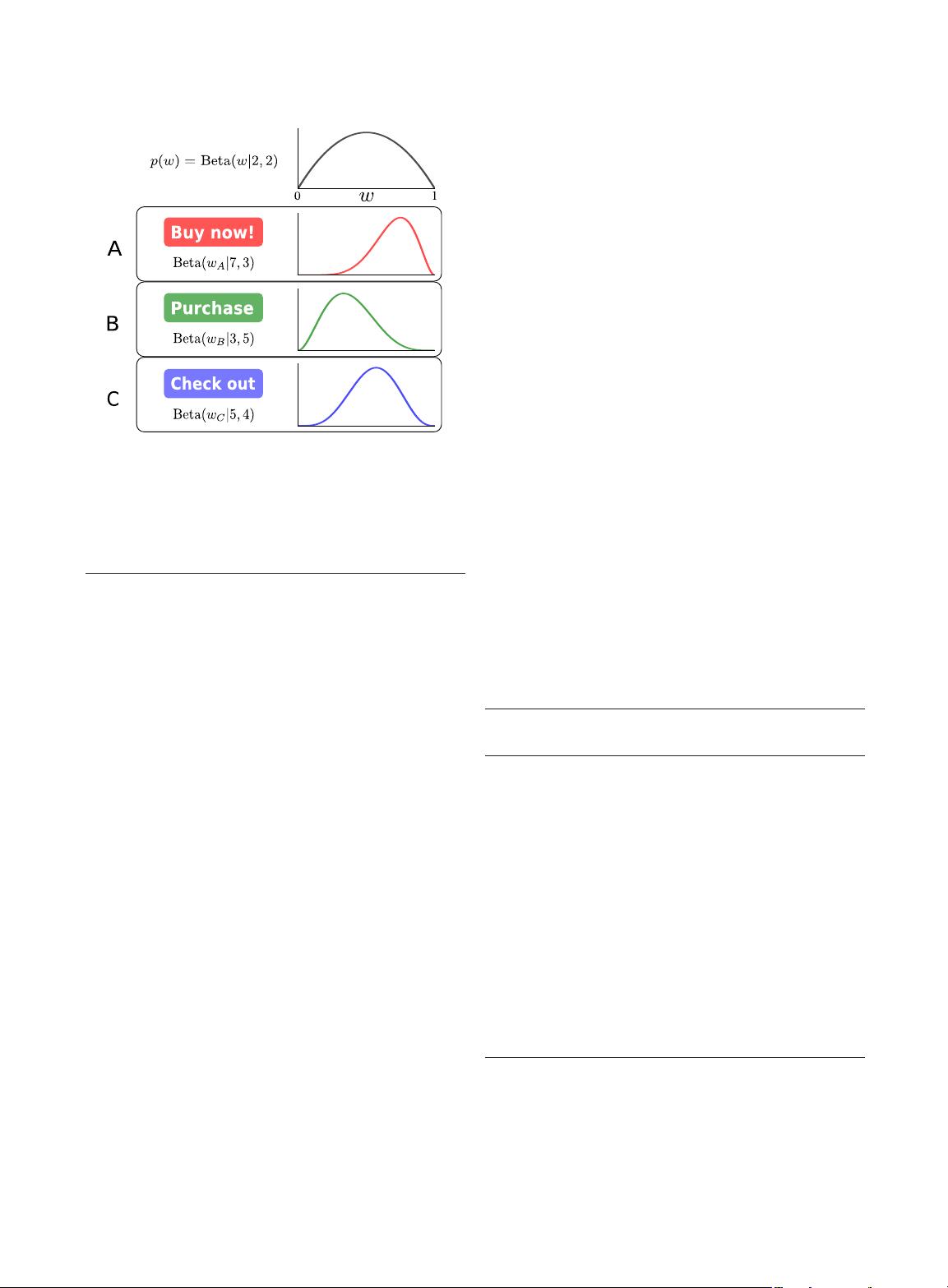

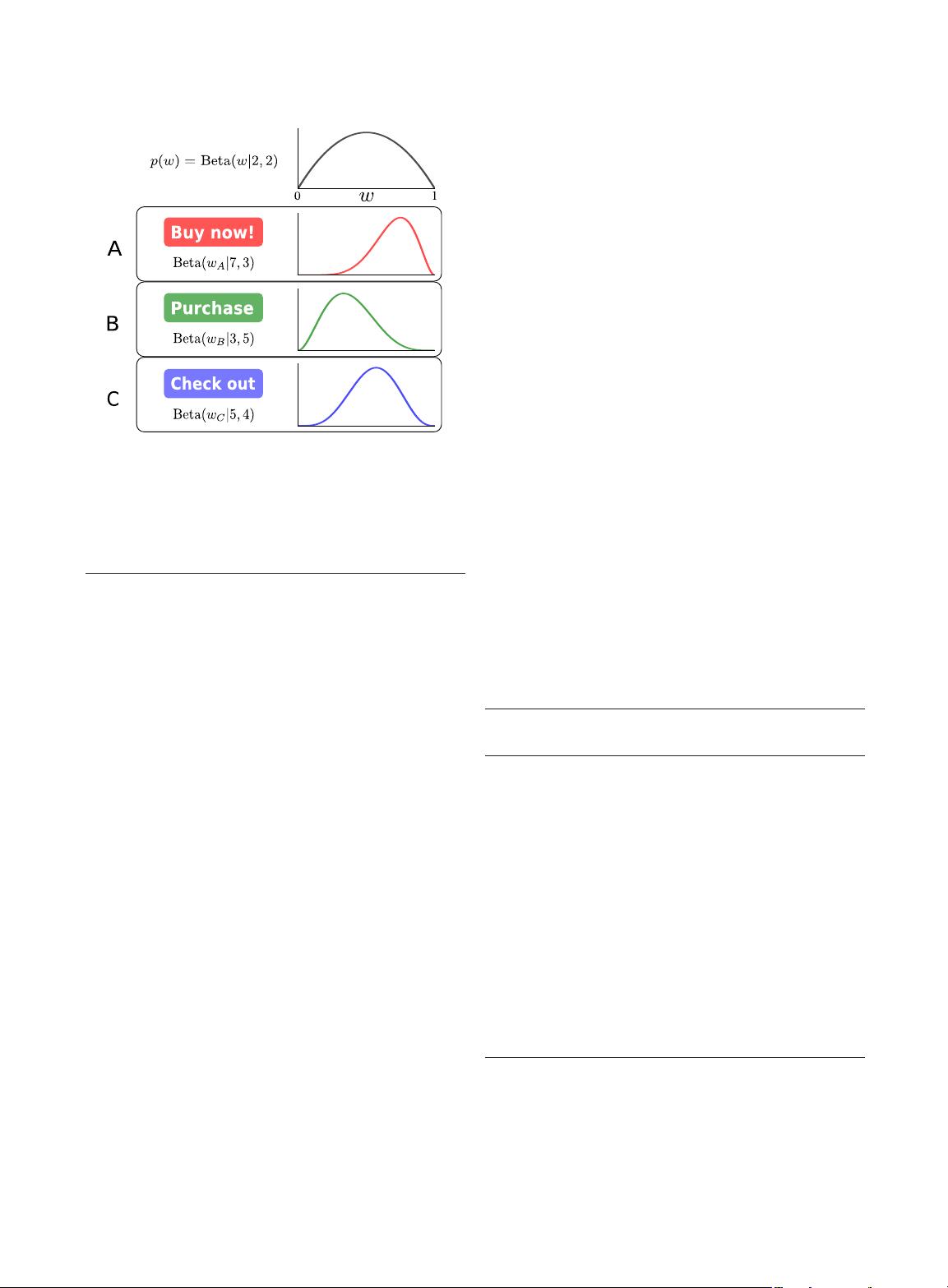

Note that this makes it clear how the hyperparameters ;

>0 in the prior can be interpreted as pseudocounts. Fig. 2

provides a visualization of the posterior of a three-armed

beta-Bernoulli bandit model with a beta(2, 2) prior.

In Section IV, we will introduce various strategies for

selecting the next arm to pull within models like the beta-

Bernoulli, but for the sake of illustration, we introduce TS

[150], the earliest and perhaps the simplest nontrivial

bandit strategy. This strategy is also commonly known as

randomized probability matching [135] because it selects

the arm based on the posterior probability of optimality,

here given by a beta distribution. In simple models like the

beta-Bernoulli, it is possible to compute this distribution

in closed form, but more often it must be estimated via,

e.g., MC.

After observing n patients in our drug example, we can

think of a bandit strategy as being a rule for choosing

which drug to administer to patient n þ 1, i.e., choosing

a

nþ1

among the K options. In the case of TS, this can be

done by drawing a single sample

~

w from the posterior and

then maximizing the resulting surrogate f

~

w

,i.e.,

a

nþ1

¼ arg max

a

f

~

w

ðaÞ; where

~

w pðw jD

n

Þ: (7)

For the beta-Bernoulli, this corresponds to simply drawing

~

w from (6) and then choosing the action with the largest

~

w

a

. This procedure, shown in pseudocode in Algorithm 2,

is also commonly called posterior sampling [127]. It is

popular for several reasons: 1) there are no free parameters

other than the prior hyperparameters of the Bayesian

model; 2) the strategy naturally trades off between

exploration and exploitation based on its posterior beliefs

on w; arms are explored only if they are likely (under the

posterior) to be optimal; 3) the strategy is relatively easy to

implement as long as MC sampling mechanisms are

available for the posterior model; and 4) the randomiza-

tion in TS makes it particularly appropriate for batch or

delayed feedback settings where many selections a

nþ1

are

based on the identical posterior [38], [135].

Algorithm 2: Thompson Sampling for Beta-Bernoulli

Bandit

Require: ; : hyperparameters of the beta prior

1: Initialize n

a;0

¼ n

a;1

¼ i ¼ 0foralla

2: repeat

3: for a ¼ 1; ...; K do

4: ~w

a

betað þ n

a;1

;þ n

a;0

Þ

5: end for

6: a

i

¼ arg max

a

~w

a

7: Observe y

i

by pulling arm a

i

8: if y

i

¼ 0 then

9: n

a

i

;0

¼ n

a

i

;0

þ 1

10: else

11: n

a

i

;1

¼ n

a

i

;1

þ 1

12: end if

13: i ¼ i þ 1

14: until stopping criterion reached

B. Linear Models

In many applications, the designs available to the

experimenter have components that can be varied

independently. For example, in designing an advertise-

ment, one has choices such as artwork, font style, and size;

if there are five choices for each, the total number of

possible configurations is 125. In general, this number

Fig. 2. Example of the beta-Bernoulli model for A/B testing. Three

different buttons are being tested with various colors and text. Each

option is given two successes (click-throughs) and two failures as a

prior (top). As data are observed, each option updates its posterior

over

w. Option A is the current best with five successes and only one

observed failure.

Shahriari et al.: Taking the Human Out of the Loop: A Review of Bayesian Optimization

Vol. 104, No. 1, January 2016 | Proceedings of the IEEE 153