A&A proofs: manuscript no. yolo_sdc1_paper

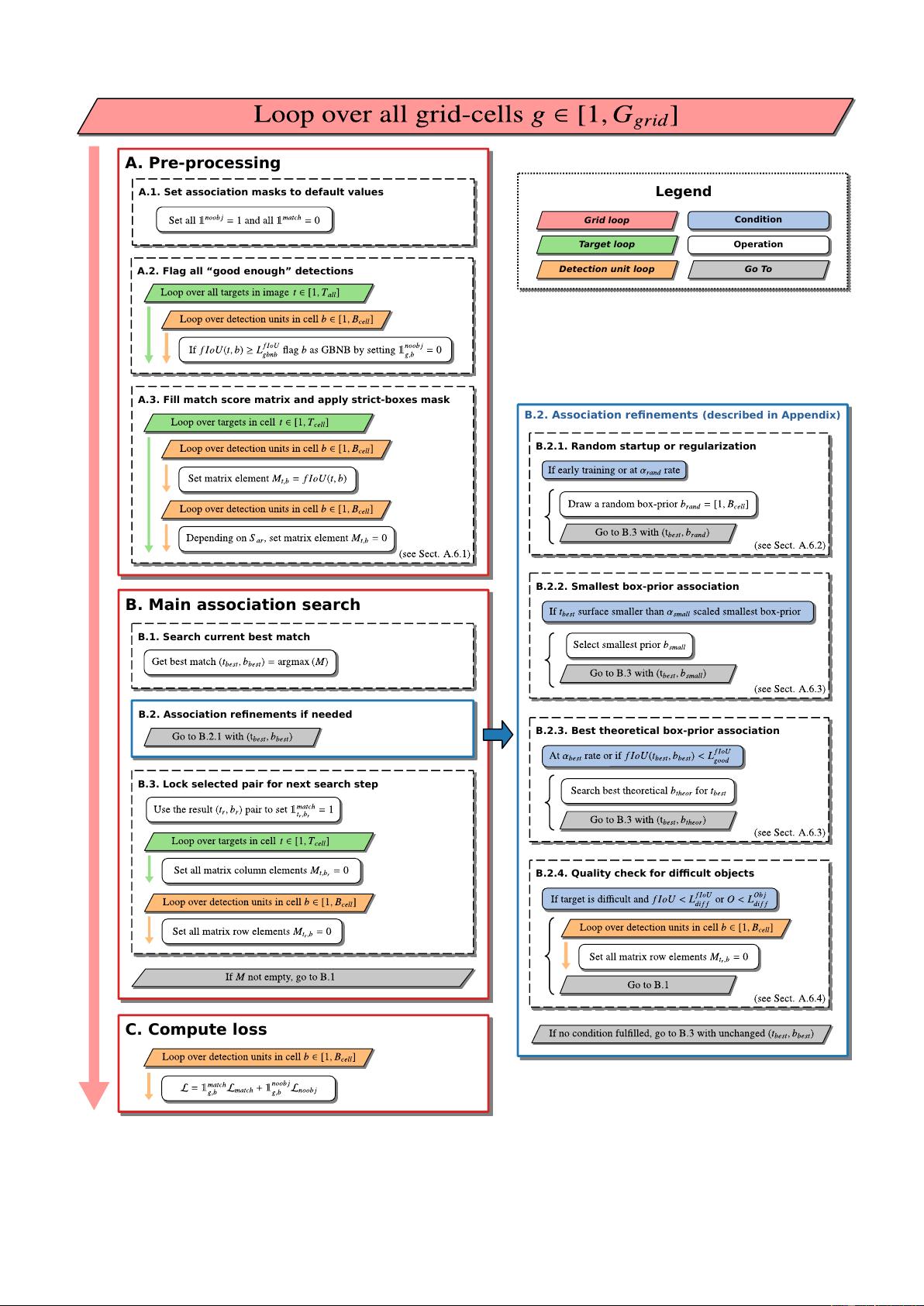

Target boxes Raw pred. boxes Current best pred. Boxes to remove Final pred. boxes

(a) (b) (c) (d)

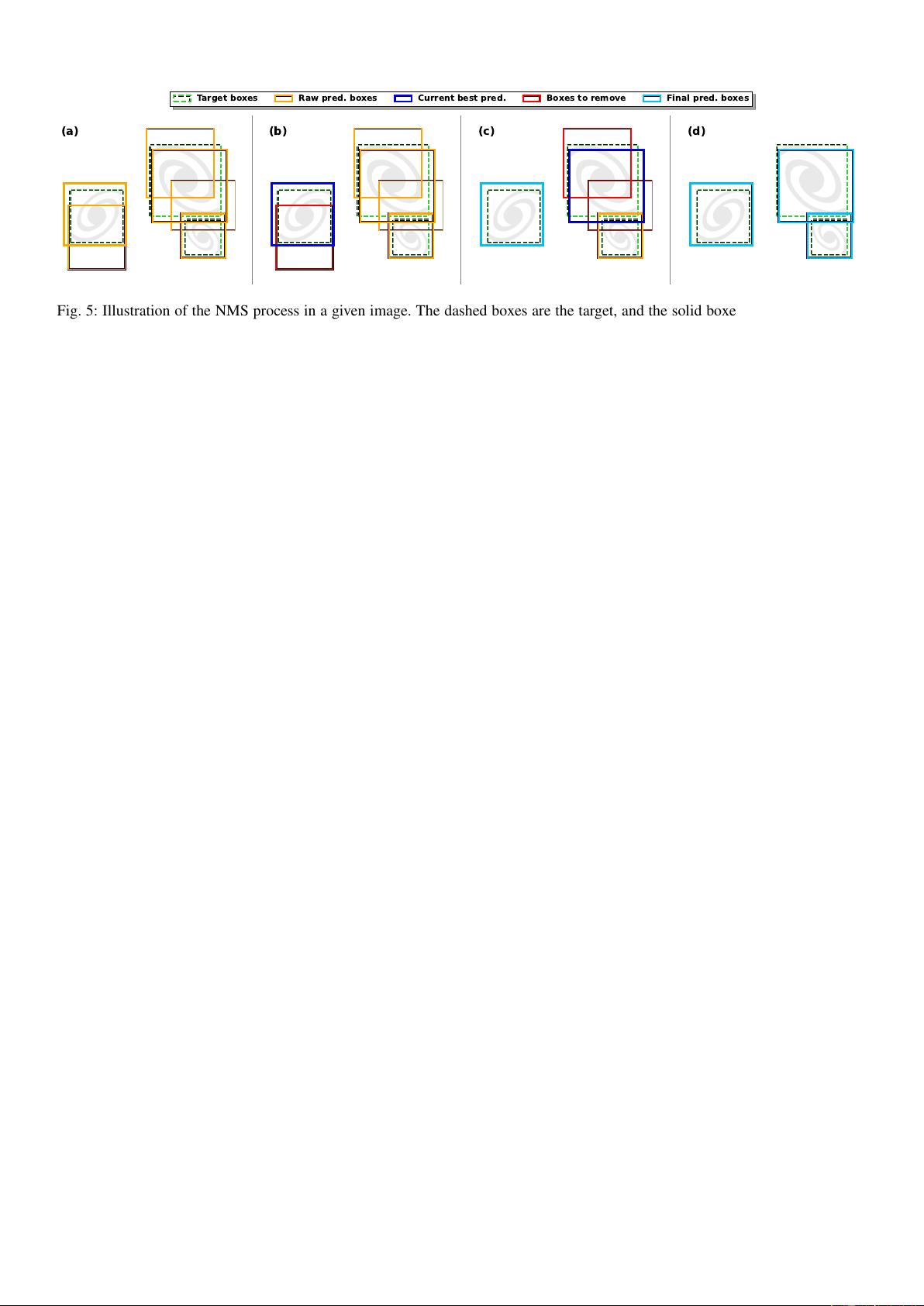

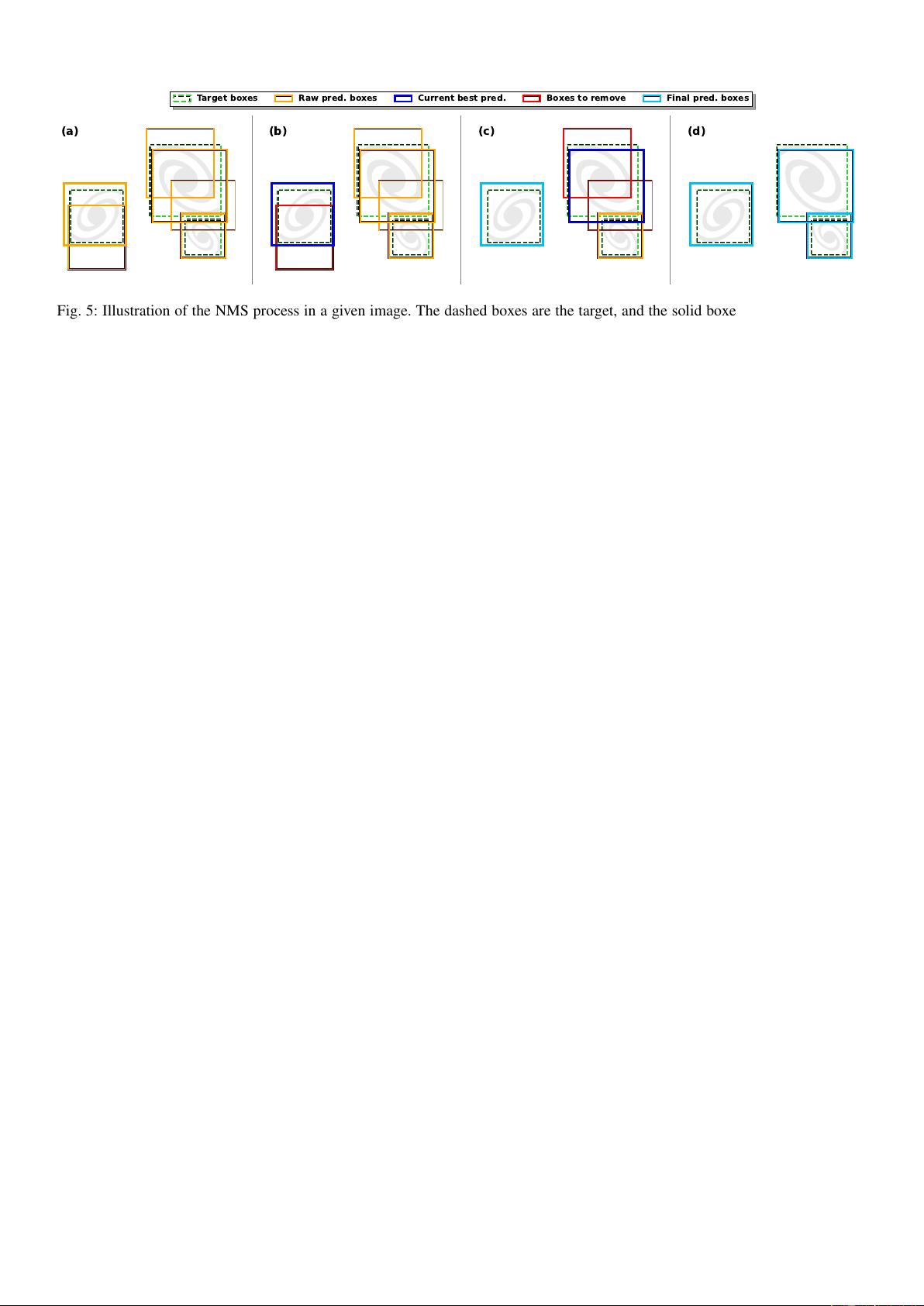

Fig. 5: Illustration of the NMS process in a given image. The dashed boxes are the target, and the solid boxes are the predictions.

The line widths of the boxes are scaled on their respective objectness score. The colors indicate the state of the box in the NMS

process at different steps. Frame (a) represents the targets and the raw network predictions that remain after the objectness filtering.

Frames (b) and (c) represent two successive steps of the NMS process with a different best current box. Frame (d) represents the

remaining boxes after completion of the NMS.

2.8. Prediction filtering and non-maximum suppression

We expect a properly trained detector to order its predictions by

quality based on the predicted objectness score for each detec-

tion unit. The raw detector output is always a static list of boxes

of size

D

g

w

, g

h

, N

b

×(6 + N

c

+ N

p

)

E

regardless of the input con-

tent. Consequently, the predicted boxes must be filtered based on

their objectness score to remove those that are unlikely to repre-

sent an object. By design, the number of actually detectable ob-

jects in the image should be low compared to the total number of

detection units in the grid. Therefore, most of the predicted boxes

belong to the background type with a low objectness score.

While the continuous objectness score is the best direct rep-

resentation of the detector’s inner workings, it is incompatible

with some final metric that needs a list of considered "good" de-

tections. Visualizing the predicted boxes also requires filtering

to preserve only the plausible detection. In such cases, an object-

ness threshold can be used to remove low-confidence detections.

This is usually done on a validation or test dataset not used for

training but for which the targets are known. The threshold can

be optimized to maximize a detection metric on this test dataset.

The main difficulty is that all detection units have fitted

their objectness independently. Due to the fully convolutional

structure of the network, a given detection unit represents the

same objectness fitting over the full grid, meaning that the same

threshold can be used. On the contrary, the predicted objectness

between two independent detection units is not comparable as

it depends on the type and frequency of targets associated with

each of them at training time. The classical solution is to fit an

individual objectness threshold for each of them. However, it

considers that predictions from different detection units are in-

dependent, which is not true for most applications. Still, fitting

a per-detection-unit objectness threshold removes the vast ma-

jority of false positives. To achieve the best results, the object-

ness regimes must be homogenized between the different detec-

tion units from the start by adjusting the individual λ

b

void

factors

(Sect. 2.5). While this is mainly an empirical and iterative pro-

cess, the main principle is to balance the ratio between detection

and background cases based on how the objects from the training

sample are expected to distribute over the detection units.

With most false positives removed, there can still be multi-

ple high-objectness predictions that represent the same object.

To preserve only the best-detected box for each object, we use a

classical post-processing step called non-maximum suppression

(NMS, Felzenszwalb et al. 2010; Girshick et al. 2013). It con-

sists of an iterative search for the box with the highest objectness

score in the image to remove all the overlapping predicted boxes.

To consider that there is an overlap, the two boxes must verify

fIoU > L

fIoU

NMS

with the fIoU being computed between the two

predicted boxes. All boxes with a lower objectness score than

the highest-scoring box are removed, and the best box is added

to a static prediction list. This process is repeated until no boxes

are left in the raw-prediction list. It is illustrated in Fig. 5.

The NMS is done regardless of what detection unit generated

the predicted boxes, demonstrating that they are not independent

as they can remove each other based on their respective object-

ness. This is one of the main reasons to force all detection units to

have similar objectness distributions. The detection quality can

only be evaluated after the NMS, so searching for the best λ

b

void

factors is dependent on the L

fIoU

NMS

and respectively.

3. Dataset description and network training

In this section, we present the main aspects of the SDC1 data

along with the expected products and the associated metrics. A

complete description of the SDC1 challenge and its data prod-

ucts can be found in Bonaldi et al. (2021), while the under-

lying T-RECS simulation is detailed in (Bonaldi et al. 2019).

We also present the pre-processing of the data to construct our

training sample. From this, we describe our best-performing

network architecture and specify the corresponding setup and

hyper-parameters for our YOLO-CIANNA detector.

3.1. Sub-challenge definition

The SDC1 is a source detection and characterization task in sim-

ulated SKA-like data products (Sect. 1) that comprises nine 4GB

images (three frequencies, with three integration times each) of

the same field. The SDC1 is only modestly challenging regard-

ing data volume, especially compared to the SDC2 with its 1TB

data cube. Still, it represents significant challenges for detec-

tion methods in many other aspects. All the images have the

same size of 32768 square pixels. As the frequency increases,

the angular resolution improves while the field of view reduces.

Therefore, images at different frequencies only partially over-

lap, meaning that the problem to solve varies with the position

in the field. In addition, the number of detectable sources varies

significantly with the integration time and frequency (Table 2 in

Article number, page 8 of 40