Δinsert [K

1

, V

3

]

Δdelete [K

1

, V

1

]

Δinsert [K

1

, V

4

]

Δdelete [K

1

, V

4

]

V

4

V

4

V

1

V

4

V

3

V

1

V

4

V

2

V

3

V

1

V

4

Leaf node

Delta record

S

present

S

deleted

K

1

V

1

V

2

K

1

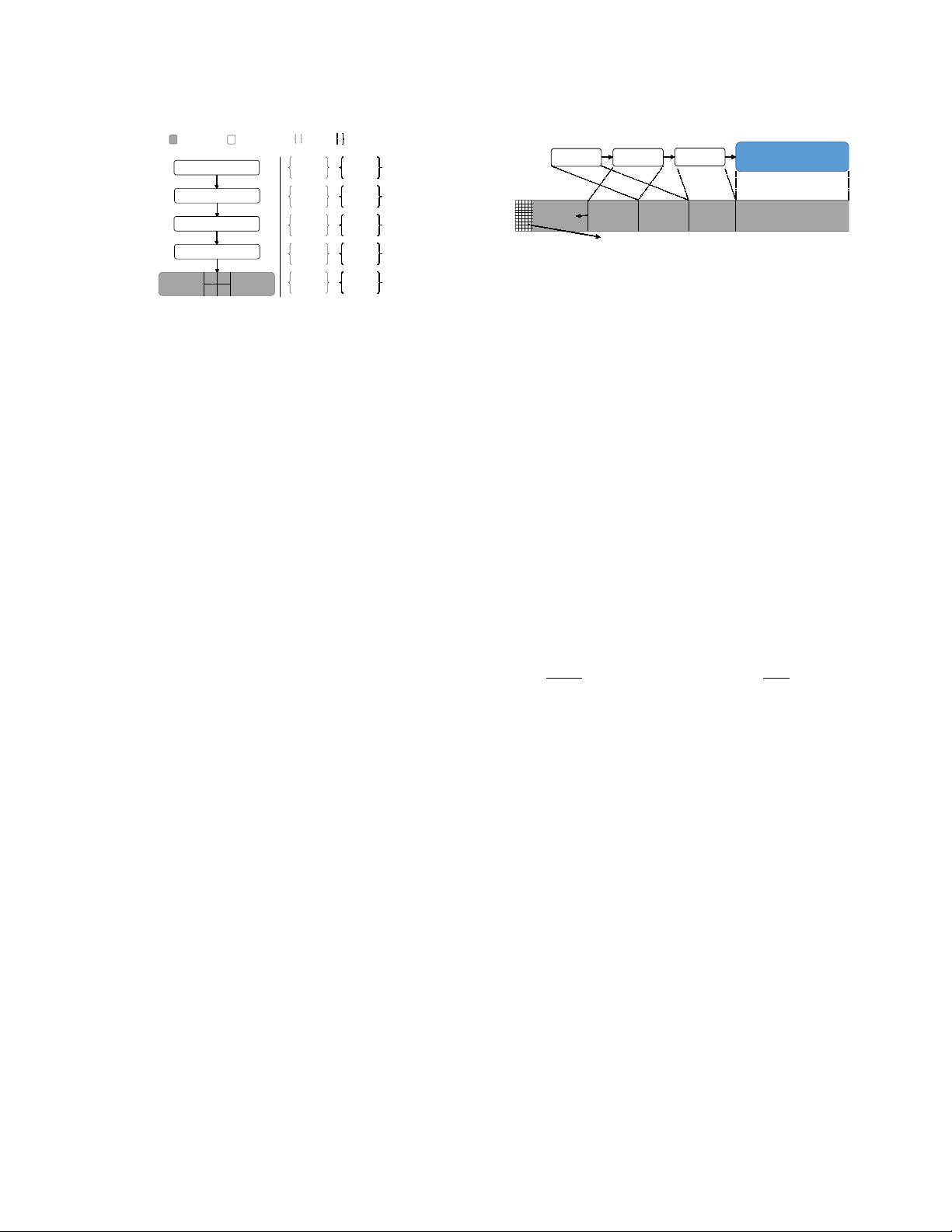

Figure 3: Non-unique Key Support

– The two sets (

S

present

,

S

deleted

) track

the visibility of ∆insert and ∆delete records in the Delta Chain.

a new traversal from the root, using the current low key or high

key to reach the previous or the next sibling node.

3.3 Mapping Table Expansion

Since every thread accesses the Bw-Tree’s Mapping Table multiple

times during traversal, it is important that it is not a bottleneck.

Storing the Mapping Table as an array of physical pointers indexed

by the node ID is the fastest data structure. But using a xed-size

array makes it dicult to dynamically resize the Mapping Table as

the number of items in the tree grows and shrinks. This last point

is the problem that we address here.

The OpenBw-Tree pre-allocates large virtual address space for

the Mapping Table without requesting backing physical pages. This

allows it to leverage the OS to lazily allocate physical memory

without using locks; this technique was previously used in the

KISS-Tree [

18

]. As the index grows, a thread may attempt to access

one of the Mapping Table’s pages that have not been mapped to

the physical memory, incurring a page fault. The OS then allocates

a new empty physical page for the virtual page. In practice, the

amount of virtual address space we reserve is estimated using the

total amount of physical memory and the lower bound of virtual

node size.

Although this approach makes it easy to increase the number of

entries in the Mapping Table as the index grows, it does not solve

the problem of shrinking the size of the Mapping Table. To the best

of our knowledge, there is no lock-free way of doing this. The only

way to shrink the Mapping Table is to block all worker threads and

rebuild the index.

4 COMPONENT OPTIMIZATION

A good-faith implementation of the data structure described in

original Bw-Tree paper design can further be improved. We present

our optimizations for the OpenBw-Tree’s key components to im-

prove its performance and scalability. As we show in Section 5,

these optimizations increase the index’s throughput by 1.1–2.5

×

for multi-threaded environments.

4.1 Delta Record Pre-allocation

As described in Section 2.1, the Delta Chain in Bw-Tree is a linked

list of delta records that is allocated on the heap. Traversing this

linked list is slow because a thread can incur a cache miss for

each pointer dereference. Additionally, excessive allocations of

small objects create contention in the allocator, which becomes a

scalability bottleneck as the number of cores increases.

Δ

1

Base Node

Δ

2

Δ

3

Base node storage

Δ

1

Δ

3

Δ

2

Free

Space

Low address

High address

Logical view

Physical view

Allocation metadata (incl. the marker)

Growing

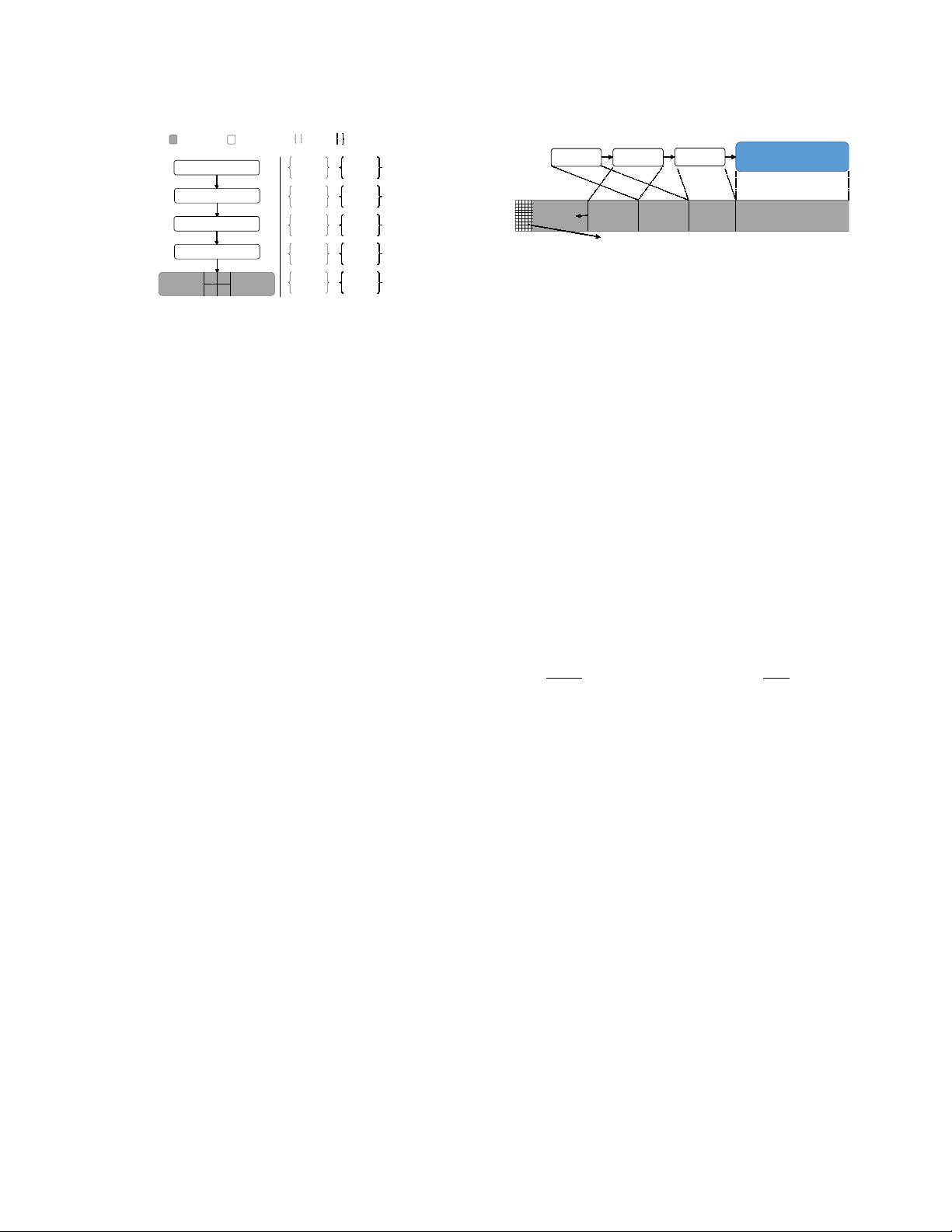

Figure 4: Pre-allocated Chunk

– This diagram depicts the logical view

and physical view of a OpenBw-Tree node. Slots are acquired by threads

using a CaS on the marker, which is part of the allocation metadata on

lower-address of the chunk.

To avoid these problems, the OpenBw-Tree pre-allocates the

delta records inside of each base node. As shown in Fig. 4, it stores

the base node in the high-address end of the pre-allocated chunk

and stores the delta records from high to low addresses (right-to-left

in the gure). Each chain also maintains an allocation marker that

points to the last delta record or the base node. When a worker

thread claims a slot, it decrements this marker by the number of

bytes for the new delta record using an atomic subtraction. If the

pre-allocated area is full, then this triggers a node consolidation.

This reverse-growth design is optimized for ecient Delta Chain

traversals. Reading delta records in the new-to-old order is likely

to (but not always) access memory linearly from low to high ad-

dresses, which is ideal for modern CPUs with hardware memory

prefetching. But threads must traverse a node’s Delta Chain by

following each delta record’s pointer to nd the next entry, rather

than just scanning from low to high addresses. This is because

the logical order of delta records may not match their physical

locations in memory. Slot allocations and Delta Chain appendings

are not atomic, permitting multiple threads to interleave them. For

example, Fig. 4 shows that delta record

∆

3

was logically added to

the node

before

delta record

∆

2

, but

∆

3

appears

after ∆

2

physically

in memory.

4.2 Garbage Collection

The OpenBw-Tree adopts a garbage collection (GC) scheme that

is similar to the one used in Silo [

34

] and Deuteronomy [

24

]. The

epoch-based GC scheme of the original Bw-Tree [

25

] provides

safe memory reclamation that prevents the index from reusing

memory when there may exist a thread that is accessing it. With

this approach, the index maintains a list of global epoch objects,

and appends new epoch objects to the end of this list at xed

intervals (e.g., every 40 ms). Every thread must enter the epoch

by enrolling itself in the current epoch object before it accesses

the index’s internal data structures (e.g., performing a key lookup).

When the thread completes its operation, it removes itself from the

epoch it has entered. Any objects that are marked for deletion by a

thread are added into the garbage list of the current epoch. Once all

threads exit an epoch, the index’s GC component can then reclaim

the objects in that epoch that are marked for deletion.

Fig. 5a illustrates the centralized GC scheme with three active

epochs, three worker threads (

t

1

,

t

2

,

t

3

), and a background GC

thread (

t

дc

). In this diagram,

t

2

adds a new node to the garbage list

of epoch 103. At the same time, the GC thread

t

дc

installs a new

epoch object to the epoch list. Since the counter inside epoch 101

has reached zero, t

дc

will reclaim all entries in its garbage list.