An End-to-End Spatio-Temporal Attention Model for Human Action Recognition from Skeleton Data

Sijie Song¹, Cuiling Lan²∗, Junliang Xing³, Wenjun Zeng², Jiaying Liu¹∗

¹Institute of Computer Science and Technology, Peking University, Beijing, China

²Microsoft Research Asia, Beijing, China

³Institute of Automation, Chinese Academy of Sciences, Beijing, China

{ssj940920, liujiaying}@pku.edu.cn, {culan,wezeng}@microsoft.com, jlxing@nlpr.ia.ac.cn

Abstract

Human action recognition is an important task in computer vision. Extracting discriminative spatial and temporal features to model the spatial and temporal evolutions of different actions plays a key role in accomplishing this task. In this work, we propose an end-to-end spatial and temporal attention model for human action recognition from skeleton data. We build our model on top of Recurrent Neural Networks (RNNs) with Long Short-Term Memory (LSTM). The model learns to selectively focus on discriminative joints of the skeleton within each input frame and pays different levels of attention to the outputs of different frames. Furthermore, to ensure effective training of the network, we propose a regularized cross-entropy loss to drive the model learning process and develop a joint training strategy accordingly. Experimental results demonstrate the effectiveness of the proposed model on both the small SBU human action recognition dataset and the NTU dataset, currently the largest.
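The two attention mechanisms named in the abstract can be illustrated with a minimal sketch. It is not the proposed network: the attention scores and frame weights are supplied by hand here, whereas in the model they are learned, and the softmax normalization over joints, the weighted sum over frames, and all function names are assumptions made for illustration only.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def spatial_attention(frame, joint_scores):
    """Scale each joint's (x, y, z) by its attention weight within one frame."""
    alpha = softmax(joint_scores)  # assumption: joint weights sum to 1
    return [[a * c for c in joint] for a, joint in zip(alpha, frame)]


def temporal_attention(frame_outputs, frame_weights):
    """Sum per-frame class scores, each scaled by its frame weight."""
    num_classes = len(frame_outputs[0])
    pooled = [0.0] * num_classes
    for beta, out in zip(frame_weights, frame_outputs):
        for k in range(num_classes):
            pooled[k] += beta * out[k]
    return pooled


# Toy frame with 3 joints; the second joint receives the highest score.
frame = [[1.0, 0.0, 0.0], [0.0, 2.0, 0.0], [0.0, 0.0, 3.0]]
weighted = spatial_attention(frame, joint_scores=[0.0, 2.0, 0.0])

# Toy per-frame scores for 2 classes over 3 frames; the middle frame dominates.
outputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
pooled = temporal_attention(outputs, frame_weights=[0.1, 0.8, 0.1])
predicted = max(range(len(pooled)), key=pooled.__getitem__)
```

In this toy setting the heavily weighted middle frame decides the predicted class, which mirrors the idea of paying different levels of attention to the outputs of different frames.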

1 Introduction

Recognition of human action is a fundamental yet challenging task in computer vision. It facilitates many applications such as intelligent video surveillance, human-computer interaction, and video summarization and understanding (Poppe 2010; Weinland, Ronfard, and Boyerc 2011). The key to the success of this task is how to extract discriminative spatio-temporal features to effectively model the spatial and temporal evolutions of different actions.

One general approach focuses on recognition from RGB videos (Weinland, Ronfard, and Boyerc 2011). Since each frame captures the highly articulated human body in a two-dimensional space, it loses some three-dimensional (3D) information and, with it, the flexibility to achieve invariance to human location and scale. The other general approach leverages the high-level information of skeleton data, which represents a person by the 3D coordinate positions of key joints (e.g., head, neck, ..., foot).

Such representation is robust to variations of locations and

∗

Corresponding author. This work was done at Microsoft Re-

search Asia. This work was supported by National Natural Sci-

ence Foundation of China under contract No. 61472011 and No.

61303178.

Copyright

c

2017, Association for the Advancement of Artificial

Intelligence (www.aaai.org). All rights reserved.

… ……

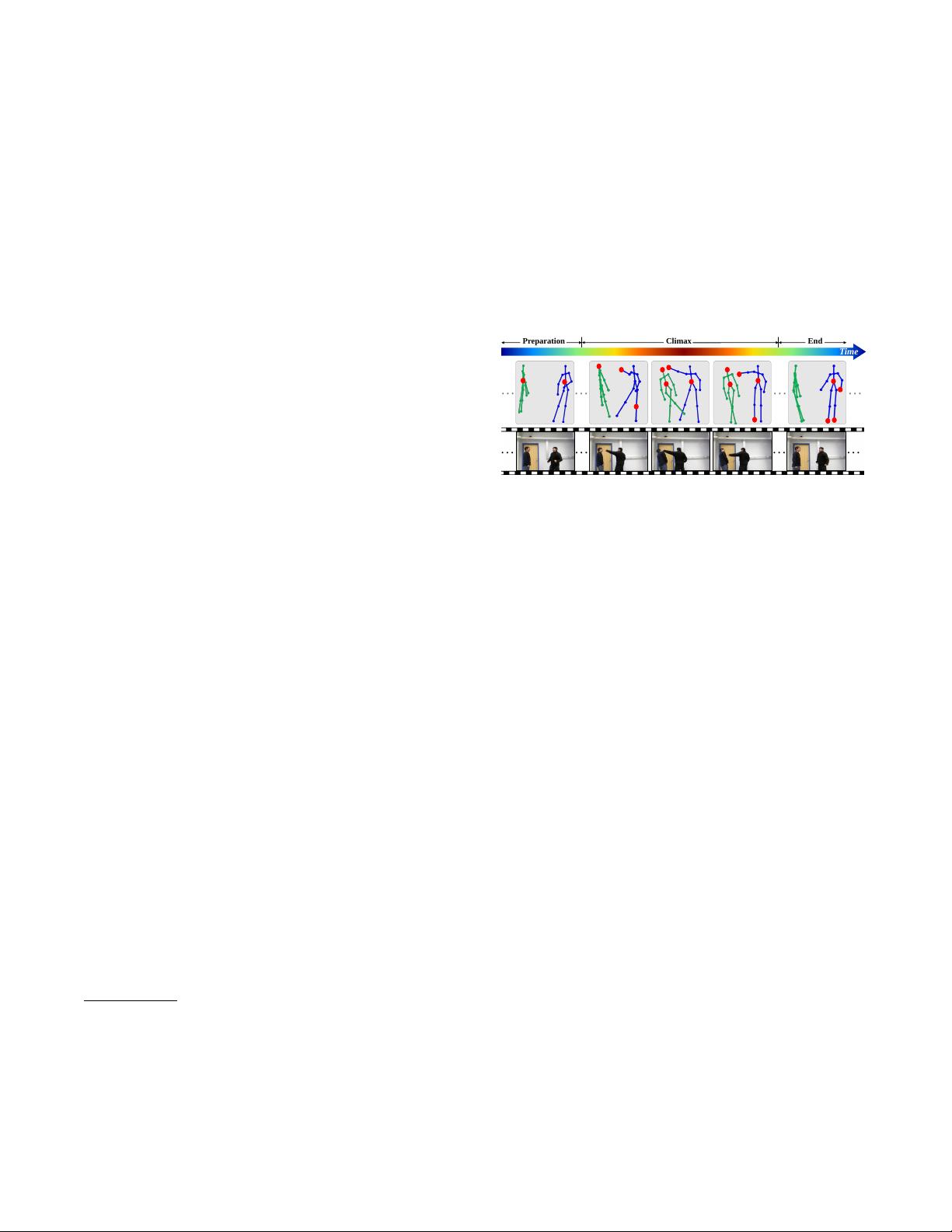

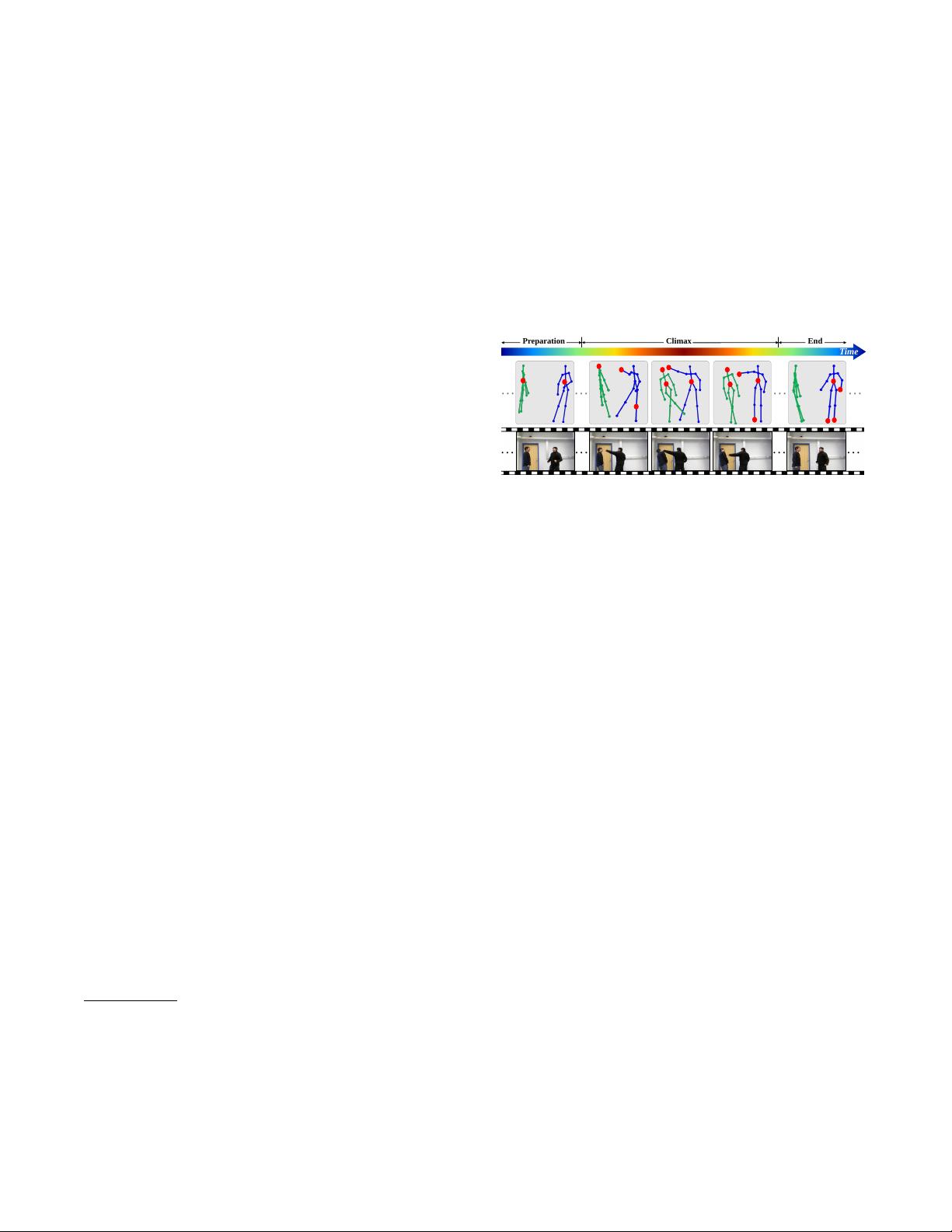

Preparation Climax End

Time

……

…

… …

Figure 1: Illustration of the procedure for an action “punch-

ing”. An action may experience different stages, and involve

different discriminative subsets of joints (as the red circles).

viewpoints. Without combining RGB information, there is a

lack of appearance information. Fortunately, biological ob-

servations from the early seminal work of Johansson suggest

that the positions of a small number of joints can effectively represent human behavior even without appearance information (Johansson 1973). Skeleton-based human representation has attracted increasing attention for recognizing human actions thanks to its high-level representation and robustness to variations of locations and appearances (Han et al. 2016). The prevalence of cost-effective depth cameras such as Microsoft Kinect (Zhang 2012) and the advance of a powerful human pose estimation technique from depth (Shotton et al. 2011) make 3D skeleton data easily accessible. This boosts research on skeleton-based human action recognition. In this work, we focus on recognition from skeleton data.
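To make the skeleton representation concrete, the sketch below stores a sequence as a list of frames, each frame being a list of 3D joint coordinates, and shows one way location invariance can be obtained: expressing every joint relative to a root joint of the same frame. The root-centering step, the choice of joint 0 as root, and the function names are assumptions for illustration; they are not described in this section.

```python
def center_on_root(sequence, root=0):
    """Express every joint relative to the root joint of the same frame."""
    centered = []
    for frame in sequence:
        rx, ry, rz = frame[root]
        centered.append([(x - rx, y - ry, z - rz) for x, y, z in frame])
    return centered


def translate(sequence, offset):
    """Shift the whole skeleton sequence by a fixed 3D offset."""
    dx, dy, dz = offset
    return [[(x + dx, y + dy, z + dz) for x, y, z in frame] for frame in sequence]


# Toy sequence: 2 frames, 3 joints each, coordinates as (x, y, z).
sequence = [
    [(0.0, 1.0, 2.0), (0.0, 1.5, 2.0), (0.5, 1.0, 2.0)],
    [(0.25, 1.0, 2.0), (0.25, 1.5, 2.0), (1.0, 1.25, 2.0)],
]

# The same motion performed at a different location in the scene.
moved = translate(sequence, (4.0, 0.0, -1.0))

# After root-centering, both sequences have identical coordinates.
same = center_on_root(sequence) == center_on_root(moved)
```

The toy coordinates are chosen to be exactly representable in floating point, so the equality check is exact; with real sensor data a tolerance-based comparison would be more appropriate.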

Fig. 1 shows an example of a series of skeleton frames (and RGB images) for the action “punching”. Each human body is represented by key joints in terms of coordinate positions in the 3D space. The articulated configurations of joints constitute various postures, and a series of postures in a certain time order identifies an action. With the skeleton as an explicit high-level representation of human pose, many works design algorithms that take the positions of joints as inputs. There are two basic components in these works. One is the design and mining of discriminative features from the skeleton, such as histograms of 3D joint locations (HOJ3D) (Xia, Chen, and Aggarwal 2012), pairwise relative position features (Wang, Liu, and Yuan 2012), and relative 3D geometry features (Vemulapalli, Arrate, and Chellappa 2016). The other is the modeling of temporal dynamics, such as Hidden Markov Models (Xia, Chen, and Aggarwal 2012), Conditional Random Fields (Sminchisescu, Kanaujia, and

arXiv:1611.06067v1 [cs.CV] 18 Nov 2016