0018-9340 (c) 2015 IEEE. Personal use is permitted, but republication/redistribution requires IEEE permission. See

http://www.ieee.org/publications_standards/publications/rights/index.html for more information.

This article has been accepted for publication in a future issue of this journal, but has not been fully edited. Content may change prior to final publication. Citation information: DOI

10.1109/TC.2015.2456015, IEEE Transactions on Computers

SUBMITTED TO IEEE TRANSACTIONS ON COMPUTERS, MANUSCRIPT ID XXXX 3

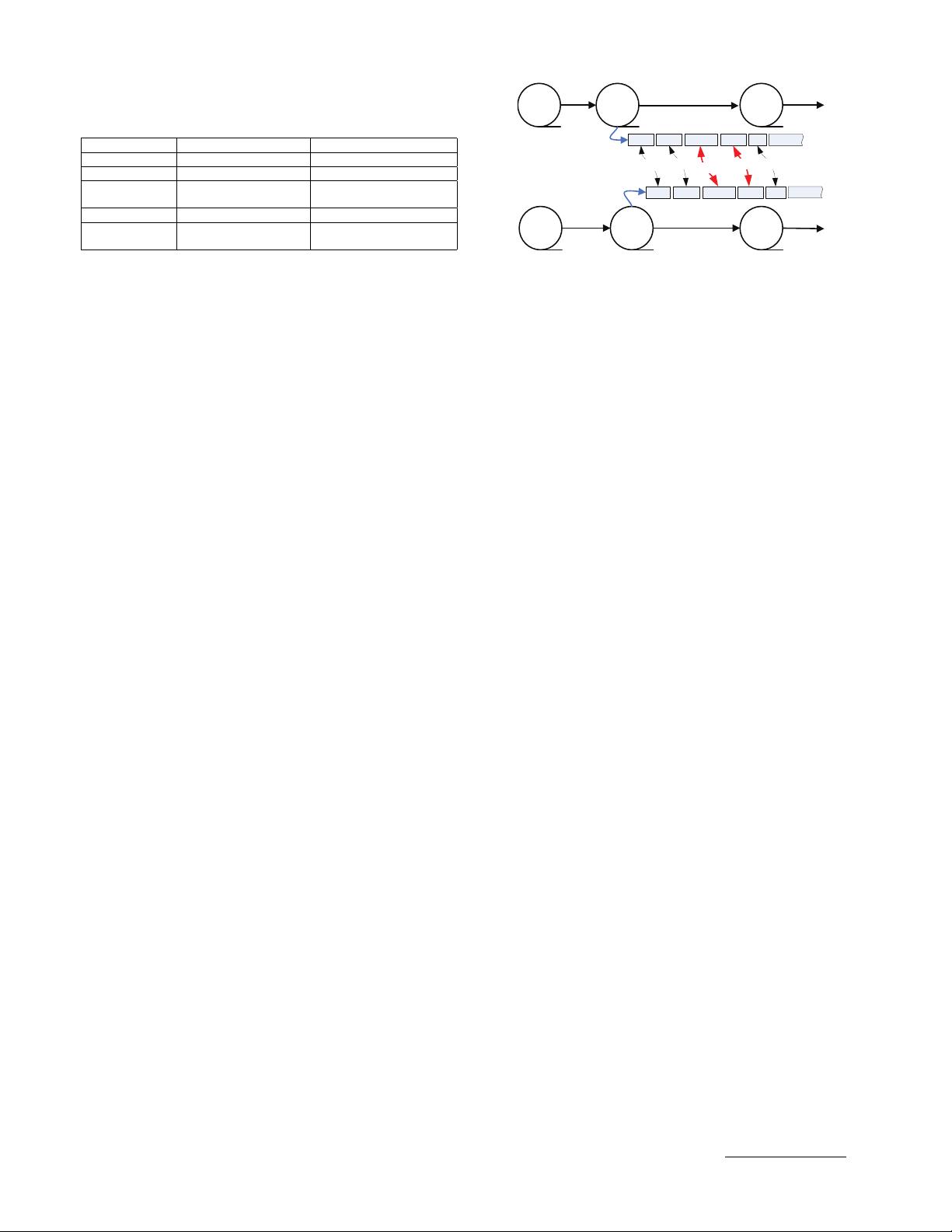

TABLE 1

Comparisons between duplicate detection and

resemblance detection for data reduction systems.

Duplicate Detection Resemblance Detection

Objects Duplicate data Similar data

Granularity Chunk-level Byte-level

Rep. Methods Secure-Fingerprint Super-Feature based

based Deduplication Delta Compression

Scalability Strong Weak

Rep. Systems LBFS [11], Venti[5], REBL[16], DERD[15],

DDFS [6] SIDC[12]

Another challenge for data deduplication is how to

maximally detect and eliminate data redundancy in stor-

age systems by determining appropriate data chunking

schemes. In order to find more redundant data, the

Content-Defined Chunking (CDC) approach was pro-

posed in LBFS to find the proper cut-point of each chunk

in the files and address the boundary-shift problem [11],

[30], [9]. Re-chunking approaches were also proposed to

divide those non-duplicate chunks into smaller ones to

expose and detect more redundancy [31], [32], [33].

Resemblance detection with delta compression[16],

[15], [26], as another approach to data reduction in

storage systems, was proposed more than ten years

ago but was later overshadowed by fingerprint-based

deduplication [6], [25], [24] due to the former’s scala-

bility issue. Table 1 compares these two data reduction

approaches. Resemblance detection detects redundancy

among similar data at the byte level while duplicate

detection finds totally identical data at the chunk level,

which makes the latter much more scalable than the

former in mass storage systems.

REBL[16] and DERD [15] are typical super-feature-

based resemblance detection approaches for data reduc-

tion. They compute the features of the data stream (e.g.,

Rabin Fingerprints [34]) and group features into super-

features to capture the resemblance of data and then

delta compress the data. TAPER [35] presents a Bloom-

Filter solution that measures the similar files based on

the chunk fingerprints recorded in Bloom Filters. All

these approaches require high computation and indexing

overheads for resemblance detection. As a result, the

simpler and faster deduplication method has become a

more popular data reduction approach in the last five

years [8], [6], [7].

Nevertheless, resemblance detection is gaining in-

creasing tractions in storage systems because of its ability

to capture and eliminate data redundancy among similar

but non-duplicate data chunks that effectively comple-

ments fingerprint-based deduplication. Difference En-

gine [20] employs Xdelta [23] to further eliminate mem-

ory redundancy and thus enlarge the logical RAM

space in VM environments. I-CASH [18] delta com-

presses similar data to enlarge the logical space of SSD

caches. Shilane et al. [12] proposed a stream-informed

delta compression approach to reducing similar data

transmission and thus accelerating data replication in a

WAN environment. This approach is super-feature based

and complements the chunk-level deduplication by only

detecting resemblance among non-duplicate chunks in

Similar

File BFile A File C

File EFile D File F

B

1

B

2

B

3

B

5

B

4

E

1

E

2

E

3

E

5

ĂĂ

ĂĂ

Similar

Duplicate

E

4

Data stream 1

Data stream 2

Duplicate

Similar

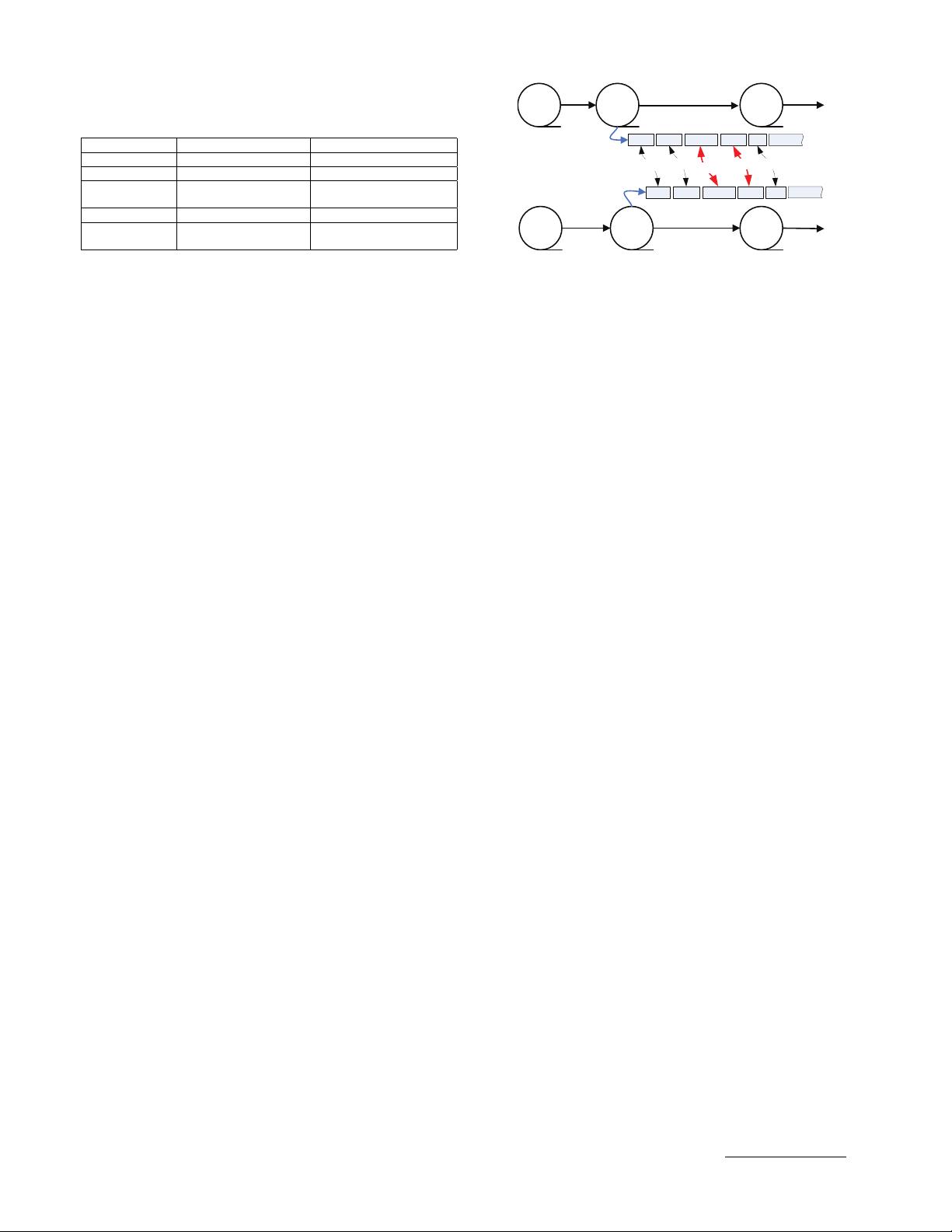

Fig. 1. A conceptual illustration of the duplicate adjacency. The

non-duplicate chunks adjacent to duplicate ones are considered

potentially similar and thus good delta compression candidates.

the cache that preserves the backup stream locality. It

avoids the costly global indexing, at a limited loss of

resemblance detection. While the combined detection of

duplicate and resemblance promises to achieve a supe-

rior data reduction performance, challenges of relatively

high computation and indexing overheads stemming

from resemblance detection remain [17].

Note that SIDC [12] is the most related work to DARE.

Different from SIDC that implements traditional super-

feature based delta compression in a stream-informed

(i.e., locality preserved) cache, DARE first employs a

duplicate-adjacency based resemblance detection scheme

(see Section 3.2) and then an improved super-feature

based approach (see Section 3.3) to jointly and more

effectively reduce the indexing and computation over-

heads for delta compression.

2.2 Fact of Duplicate Adjacency

As discussed in Section 2.1, the modified chunks may

be very similar to their previous versions in a backup

system while unmodified chunks will remain duplicate

and are easily identified by the deduplication process.

For those non-duplicate chunks that are location-adjacent to

known duplicate data chunks in a deduplication system, it

is intuitive and quite possible that only a few bytes of them

are modified from the last backup, making them potentially

excellent delta compression candidates.

Figure 1 illustrates a case of duplicate data chunks and

their immediate non-duplicate neighbors. As mentioned

above, our intuition is that the latter are highly likely to

be similar and thus good delta compression candidates.

Specifically, since chunks B

3

& B

4

are duplicates of

chunks E

3

& E

4

in Figure 1 respectively, their immediate

neighbors, the chunk-pairs B

1

& E

1

, B

2

& E

2

, and

B

5

& E

5

, are then considered good delta compression

candidates, which is consistent with the aforementioned

backup-stream locality [6], [12], [29], [25], [36].

If we can make full use of the existing knowledge

about duplicate data chunks in a deduplication system,

it is possible for us to detect similar chunks without the

overheads of computing and storing features & super-

features and then accessing their on-disk index. Figure

2 shows important preliminary results of this duplicate-

adjacency-based resemblance detection approach, called

DupAdj, on several real-world datasets whose workload

characteristics are detailed in Table 2 in Section 4.1.

First, the similarity degree (i.e.,

dela compressed size

chunk size

) of