PySpark入门到精通指南

需积分: 47 56 浏览量

更新于2024-07-14

1

收藏 14.79MB PDF 举报

"《Learning Apache Spark with Python》是由Wenqiang Feng编写的关于使用Python进行Spark开发的教程,旨在帮助读者从入门到精通。该书涵盖了Spark的基础知识、配置平台、Spark的核心概念、组件架构,以及使用RDD进行编程,并涉及统计学和线性代数的初步知识。"

在《pyspark从入门到精通》这本书中,作者首先介绍了为何选择Spark作为大数据处理的工具,强调了Spark的高效性和灵活性。Spark是为大规模数据处理设计的并行计算框架,它提供了一种快速、通用且可扩展的处理方式。对于Python开发者来说,PySpark提供了友好的接口,使得数据科学家和工程师可以利用Python丰富的库进行数据分析。

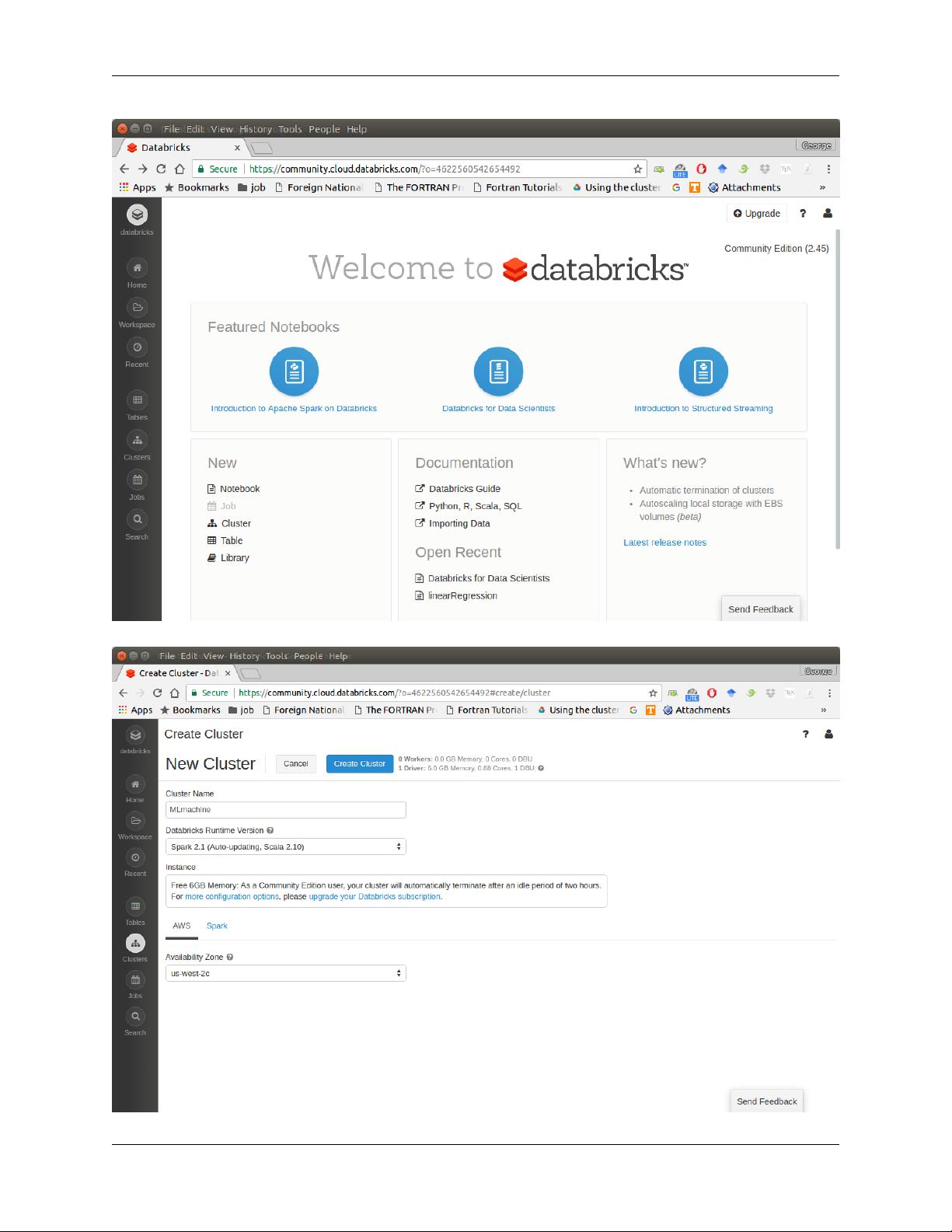

在配置运行平台的部分,书中详细讲解了如何在不同环境下设置Spark,包括在Databricks社区云上运行、在Mac和Ubuntu系统上的配置、在Windows系统上的安装,以及如何使用文本编辑器或IDE(如PyCharm)与PySpark配合工作。此外,还介绍了如何配置Sparkling Water(Spark与H2O的结合)和在云端部署Spark。特别地,书中提到了在Google Colaboratory上使用PySpark,这是一个免费的在线Jupyter Notebook环境,方便学习和实验。

接下来,作者深入浅出地讲解了Apache Spark的核心概念,包括RDD(弹性分布式数据集)、Spark的主要组件以及其层次化架构。Spark的工作原理通过简单的例子进行了阐述,帮助读者理解其并行计算的机制。

在编程与RDD的章节,读者将学习如何创建和操作RDD,包括基本的转换和动作操作。此外,还对比了RDD与DataFrame的区别,DataFrame提供了更高级别的抽象,更适合于数据建模和SQL查询。

统计学和线性代数是数据分析的基础,书中介绍了相关符号、线性代数的基本概念,以及用于评估模型性能的测量公式,如混淆矩阵。同时,简要概述了统计检验,这些都是数据科学中常用的方法。

《pyspark从入门到精通》是一本全面的指南,涵盖了从安装配置到实际编程的各个环节,适合初学者和有一定经验的Spark开发者。通过这本书,读者可以系统地学习和掌握使用PySpark进行大数据分析的技能。

2017-05-12 上传

2017-03-04 上传

点击了解资源详情

点击了解资源详情

点击了解资源详情

2021-11-22 上传

点击了解资源详情

点击了解资源详情

Mr_Hzz

- 粉丝: 7

- 资源: 9

最新资源

- 火炬连体网络在MNIST的2D嵌入实现示例

- Angular插件增强Application Insights JavaScript SDK功能

- 实时三维重建:InfiniTAM的ros驱动应用

- Spring与Mybatis整合的配置与实践

- Vozy前端技术测试深入体验与模板参考

- React应用实现语音转文字功能介绍

- PHPMailer-6.6.4: PHP邮件收发类库的详细介绍

- Felineboard:为猫主人设计的交互式仪表板

- PGRFileManager:功能强大的开源Ajax文件管理器

- Pytest-Html定制测试报告与源代码封装教程

- Angular开发与部署指南:从创建到测试

- BASIC-BINARY-IPC系统:进程间通信的非阻塞接口

- LTK3D: Common Lisp中的基础3D图形实现

- Timer-Counter-Lister:官方源代码及更新发布

- Galaxia REST API:面向地球问题的解决方案

- Node.js模块:随机动物实例教程与源码解析