USENIX Association 14th USENIX Conference on File and Storage Technologies (FAST ’16) 135

ports range queries, snapshots, and other features that are

useful in modern applications. In this section, we briefly

describe the core design of LevelDB.

The overall architecture of LevelDB is shown in Fig-

ure 1. The main data structures in LevelDB are an on-

disk log file, two in-memory sorted skiplists (memtable

and immutable memtable), and seven levels (L

0

to L

6

)

of on-disk Sorted String Table (SSTable) files. LevelDB

initially stores inserted key-value pairs in a log file and

the in-memory memtable. Once the memtable is full,

LevelDB switches to a new memtable and log file to

handle further inserts from the user. In the background,

the previous memtable is converted into an immutable

memtable, and a compaction thread then flushes it to the

disk, generating a new SSTable file (about 2 MB usually)

at level 0 (L

0

); the previous log file is discarded.

The size of all files in each level is limited, and in-

creases by a factor of ten with the level number. For

example, the size limit of all files at L

1

is 10 MB, while

the limit of L

2

is 100 MB. To maintain the size limit,

once the total size of a level L

i

exceeds its limit, the

compaction thread will choose one file from L

i

, merge

sort with all the overlapped files of L

i+1

, and generate

new L

i+1

SSTable files. The compaction thread con-

tinues until all levels are within their size limits. Also,

during compaction, LevelDB ensures that all files in a

particular level, except L

0

, do not overlap in their key-

ranges; keys in files of L

0

can overlap with each other

since they are directly flushed from memtable.

To serve a lookup operation, LevelDB searches the

memtable first, immutable memtable next, and then files

L

0

to L

6

in order. The number of file searches required to

locate a random key is bounded by the maximum number

of levels, since keys do not overlap between files within

a single level, except in L

0

. Since files in L

0

can con-

tain overlapping keys, a lookup may search multiple files

at L

0

. To avoid a large lookup latency, LevelDB slows

down the foreground write traffic if the number of files

at L

0

is bigger than eight, in order to wait for the com-

paction thread to compact some files from L

0

to L

1

.

2.3 Write and Read Amplification

Write and read amplification are major problems in

LSM-trees such as LevelDB. Write (read) amplification

is defined as the ratio between the amount of data writ-

ten to (read from) the underlying storage device and the

amount of data requested by the user. In this section, we

analyze the write and read amplification in LevelDB.

To achieve mostly-sequential disk access, LevelDB

writes more data than necessary (although still sequen-

tially), i.e., LevelDB has high write amplification. Since

the size limit of L

i

is 10 times that of L

i−1

, when merg-

ing a file from L

i−1

to L

i

during compaction, LevelDB

may read up to 10 files from L

i

in the worst case, and

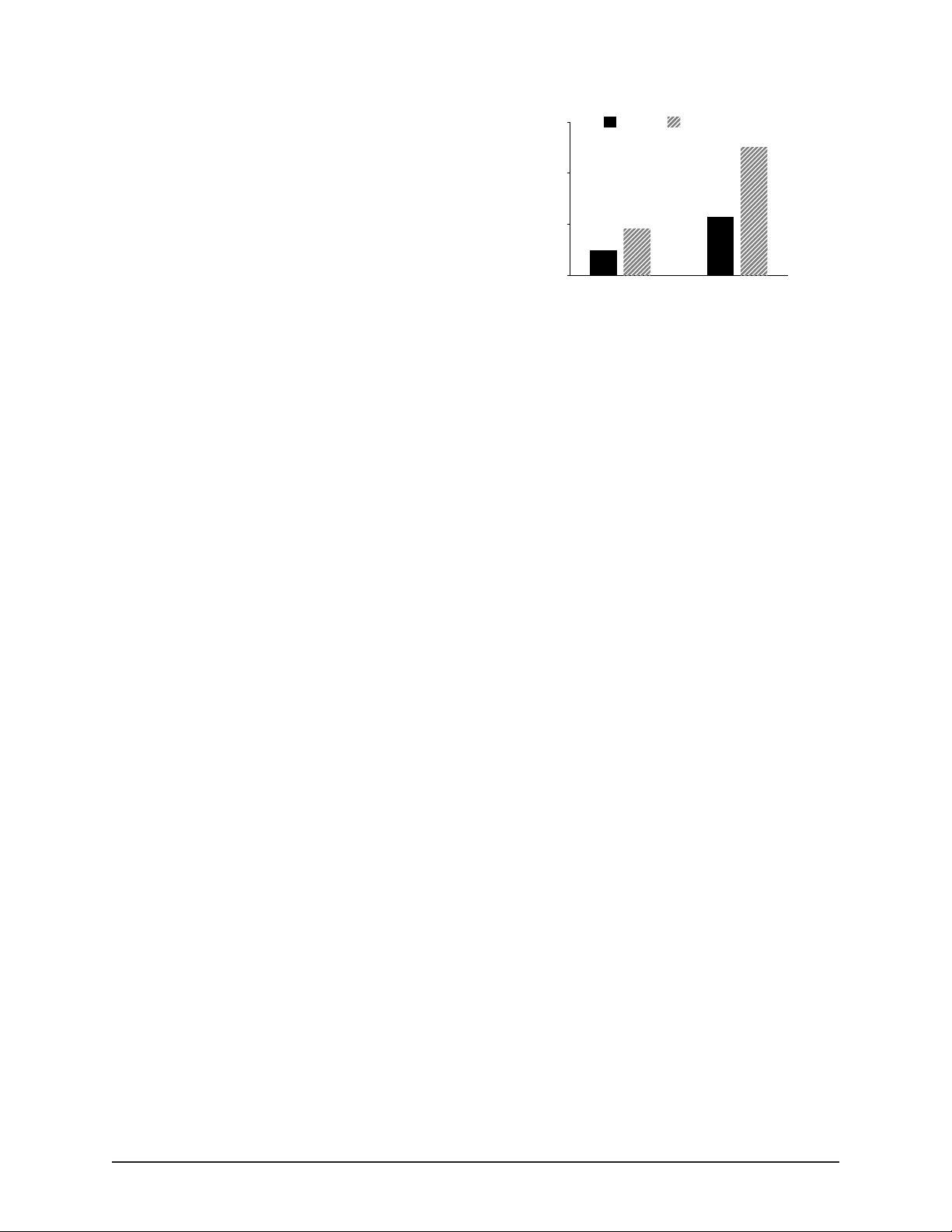

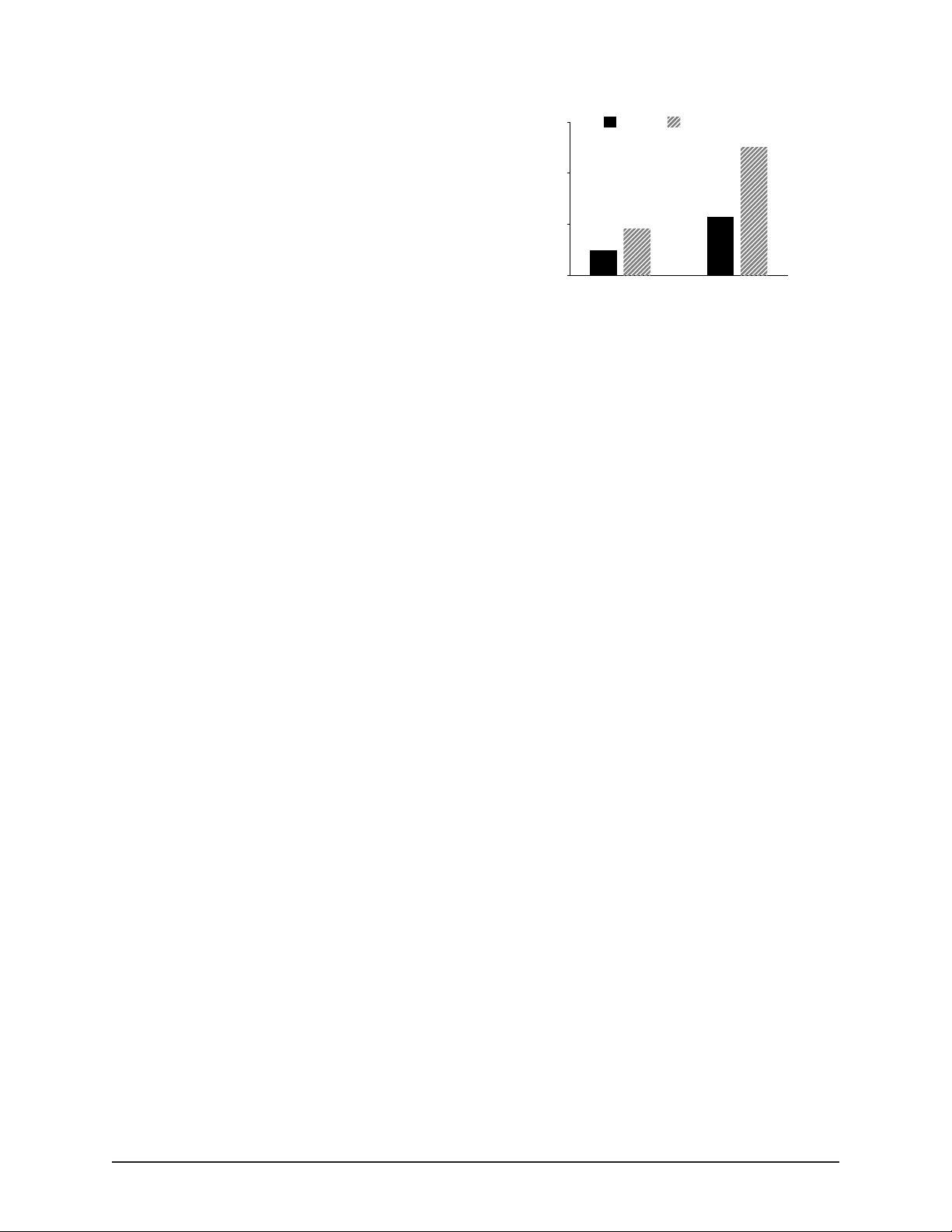

1

10

100

1000

Amplification Ratio

3.1

14

1 GB

8.2

327

100 GB

Write Read

Figure 2: Write and Read Amplification. This fig-

ure shows the write amplification and read amplification of

LevelDB for two different database sizes, 1 GB and 100 GB.

Key size is 16 B and value size is 1 KB.

write back these files to L

i

after sorting. Therefore, the

write amplification of moving a file across two levels can

be up to 10. For a large dataset, since any newly gen-

erated table file can eventually migrate from L

0

to L

6

through a series of compaction steps, write amplification

can be over 50 (10 for each gap between L

1

to L

6

).

Read amplification has been a major problem for

LSM-trees due to trade-offs made in the design. There

are two sources of read amplification in LevelDB. First,

to lookup a key-value pair, LevelDB may need to check

multiple levels. In the worst case, LevelDB needs

to check eight files in L

0

, and one file for each of

the remaining six levels: a total of 14 files. Sec-

ond, to find a key-value pair within a SSTable file,

LevelDB needs to read multiple metadata blocks within

the file. Specifically, the amount of data actually read

is given by

(index block + bloom-filter blocks +

data block)

. For example, to lookup a 1-KB key-value

pair, LevelDB needs to read a 16-KB index block, a 4-

KB bloom-filter block, and a 4-KB data block; in total,

24 KB. Therefore, considering the 14 SSTable files in

the worst case, the read amplification of LevelDB is 24

× 14 = 336. Smaller key-value pairs will lead to an even

higher read amplification.

To measure the amount of amplification seen in prac-

tice with LevelDB, we perform the following experi-

ment. We first load a database with 1-KB key-value

pairs, and then lookup 100,000 entries from the database;

we use two different database sizes for the initial load,

and choose keys randomly from a uniform distribution.

Figure 2 shows write amplification during the load phase

and read amplification during the lookup phase. For a 1-

GB database, write amplification is 3.1, while for a 100-

GB database, write amplification increases to 14. Read

amplification follows the same trend: 8.2 for the 1-GB

database and 327 for the 100-GB database. The rea-

son write amplification increases with database size is

straightforward. With more data inserted into a database,

the key-value pairs will more likely travel further along