Diffusion Models Surpass GANs in Image Generation


Updated 2024-06-25


"Diffusion Models Beat GANs on Image Synthesis.pdf"

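This article summarizes research showing that diffusion models surpass current state-of-the-art generative adversarial networks (GANs) at image synthesis. The paper, by Prafulla Dhariwal and Alex Nichol of OpenAI, shows how a series of ablation studies led to a better architecture that improves the quality of unconditional image synthesis. A diffusion model is a relatively recent kind of generative model that produces high-fidelity images by gradually adding noise to data and learning to reverse that process step by step.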
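To make the "gradually add noise" half of that description concrete, here is a minimal sketch of the forward (noising) process in closed form, where a noisy sample at step t is drawn from N(sqrt(abar_t) * x0, (1 - abar_t) * I). The linear schedule and its endpoint values are assumptions taken from common DDPM-style setups, not settings quoted in this summary, and all function names are hypothetical.

```python
import math
import random

def linear_betas(num_steps, beta_start=1e-4, beta_end=0.02):
    """Linear noise schedule; the endpoint values are assumed, not from the summary."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def alpha_bars(betas):
    """Cumulative product of (1 - beta_t): the share of signal variance left at step t."""
    out, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        out.append(prod)
    return out

def noise_sample(x0, t, abar, rng):
    """Draw x_t ~ N(sqrt(abar_t) * x0, (1 - abar_t) * I) for a flat list of pixel values."""
    scale = math.sqrt(abar[t])
    sigma = math.sqrt(1.0 - abar[t])
    return [scale * v + sigma * rng.gauss(0.0, 1.0) for v in x0]

rng = random.Random(0)
abar = alpha_bars(linear_betas(1000))
x0 = [0.5, -0.25, 1.0]                     # toy "image" with three pixels
x_early = noise_sample(x0, 10, abar, rng)  # still close to x0
x_late = noise_sample(x0, 999, abar, rng)  # almost pure Gaussian noise
```

The generative model is trained to undo these steps one at a time, so sampling starts from noise and walks the chain backwards.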

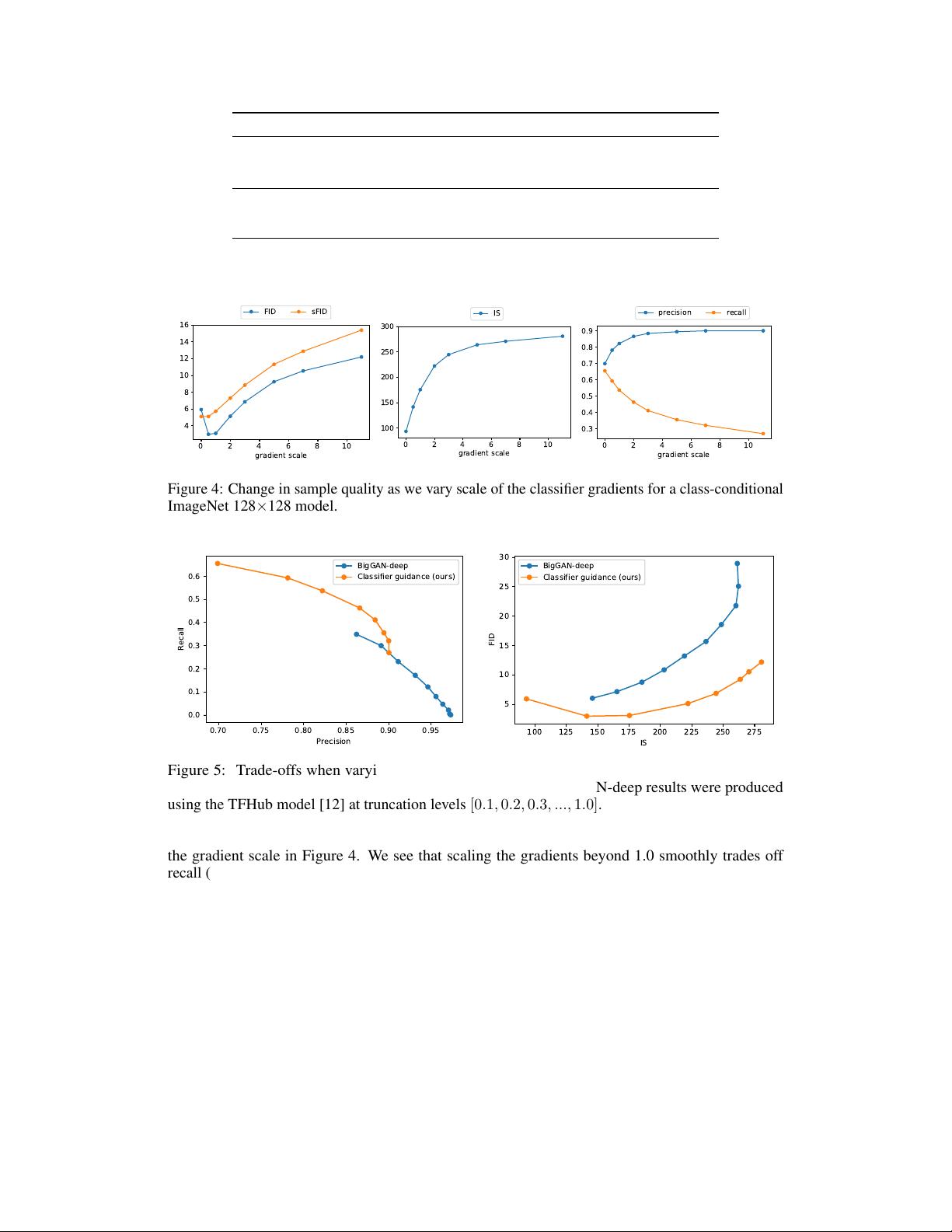

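For unconditional image synthesis, the authors' architectural improvements yield higher image quality than the previous best methods. For conditional image synthesis, they introduce classifier guidance, a simple, compute-efficient technique that uses the gradients of a classifier to trade off diversity against fidelity. The technique improves sample quality while the model still maintains better coverage of the distribution.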
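The core of classifier guidance can be sketched in one dimension: each reverse step samples from a Gaussian whose mean is shifted by the guidance scale times the step variance times the gradient of the classifier's log-probability for the target class. The logistic classifier below is a hypothetical stand-in for a real noise-aware image classifier, and the numeric values are purely illustrative.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def grad_log_prob_class1(x, w=2.0):
    """d/dx of log sigmoid(w * x) for a toy 1-D logistic classifier (hypothetical)."""
    return w * (1.0 - sigmoid(w * x))

def guided_mean(mu, variance, grad, scale):
    """Shift the reverse-step mean by scale * variance * grad log p(y | x_t)."""
    return mu + scale * variance * grad

x_t = 0.0                  # current noisy sample
mu, variance = 0.1, 0.25   # mean and variance the diffusion model predicts (made-up values)
grad = grad_log_prob_class1(x_t)

# A larger scale pulls samples harder toward the target class:
# higher fidelity, lower diversity.
shifted = {s: guided_mean(mu, variance, grad, s) for s in (0.0, 1.0, 4.0)}
```

With scale 0 the step is the unguided one; raising the scale moves the mean further along the classifier gradient, which is the diversity-for-fidelity knob described above.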

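The reported results are Fréchet Inception Distance (FID) scores of 2.97, 4.59, and 7.72 on ImageNet at 128x128, 256x256, and 512x512 resolution, respectively. FID measures how closely the distribution of generated images matches that of real images; lower is better. Notably, the model matches BigGAN-deep even with as few as 25 forward passes per sample, a notable result given that diffusion sampling normally requires many more network evaluations.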
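As a rough illustration of what FID computes, the sketch below evaluates the Fréchet distance between two Gaussians fitted to scalar features: the squared difference of the means plus v_r + v_g - 2 * sqrt(v_r * v_g). The real metric applies the multivariate form of this formula to Inception-v3 features of the images; the one-dimensional reduction and the toy numbers here are simplifying assumptions.

```python
import math
import statistics

def frechet_distance_1d(real, generated):
    """Fréchet distance between Gaussians fitted to two lists of scalar features."""
    m_r, m_g = statistics.fmean(real), statistics.fmean(generated)
    v_r, v_g = statistics.pvariance(real), statistics.pvariance(generated)
    return (m_r - m_g) ** 2 + v_r + v_g - 2.0 * math.sqrt(v_r * v_g)

real_feats = [0.0, 1.0, 2.0, 3.0]
same = frechet_distance_1d(real_feats, real_feats)               # identical statistics
shifted = frechet_distance_1d(real_feats, [1.0, 2.0, 3.0, 4.0])  # same spread, mean moved by 1
```

Identical feature statistics give a distance of zero, and the score grows as the means or the spreads drift apart, which is why lower FID indicates generated images that are statistically closer to real ones.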

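The authors also find that classifier guidance combines well with upsampling diffusion models, further improving FID to 3.94 at 256x256 and 3.85 at 512x512. These results indicate that diffusion models can exceed GANs in sample quality on these benchmarks while still covering more of the data distribution.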

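The paper notes that the code is open source and available at https://github.com/openai/guided-diffusion, giving other researchers and developers a starting point for studying and applying diffusion models.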

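In summary, the work demonstrates the strength of diffusion models for image generation, particularly when combined with a classifier: guidance improves the fidelity of generated images, and quality competitive with BigGAN-deep is reachable with as few as 25 forward passes per sample. It also points to a direction for future research: how to better optimize and apply diffusion models for diverse, high-quality image synthesis.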

