GOOSSENS ET AL. 3of15

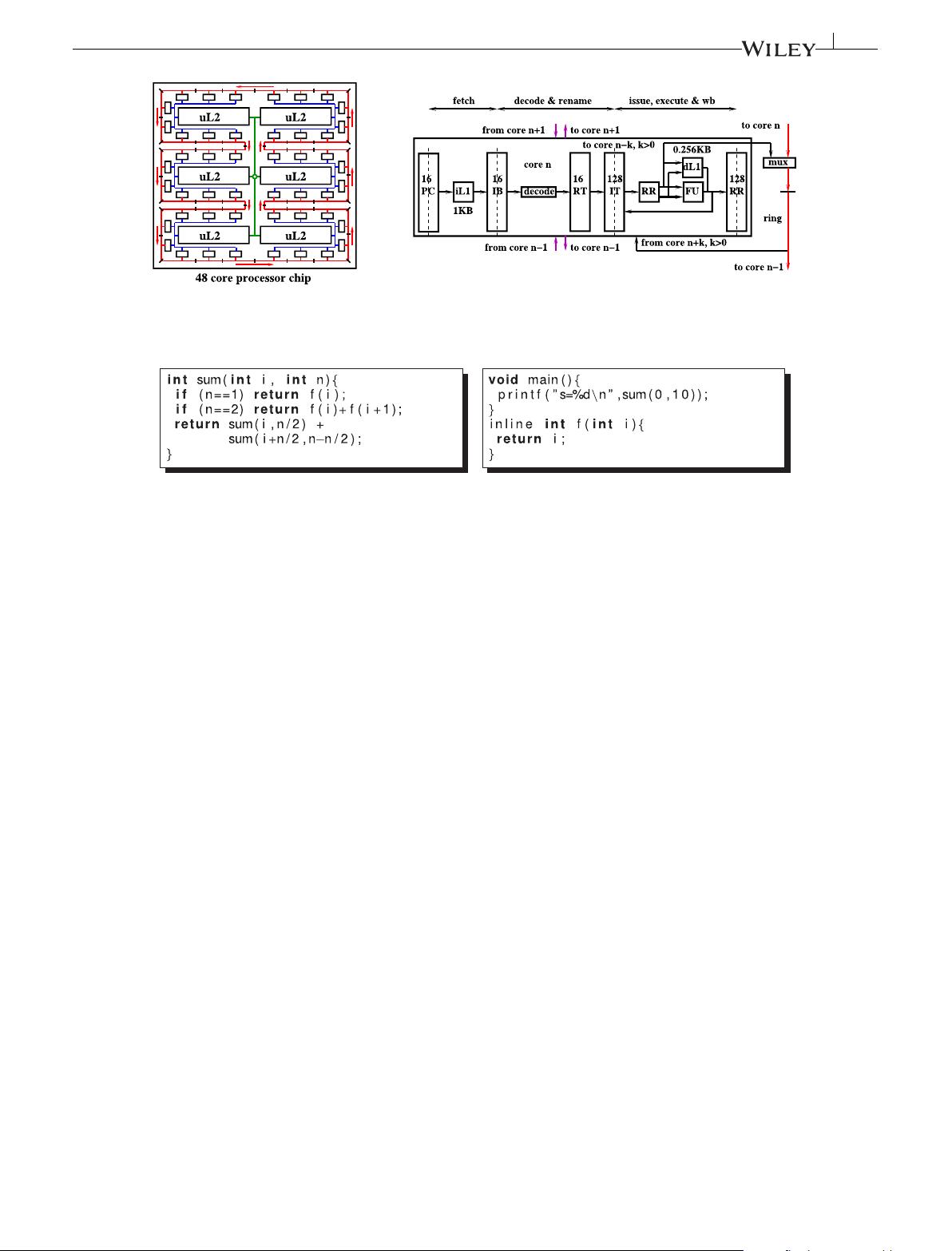

FIGURE 2 A many-core processor. dL1, data L1 cache; IB, instruction buffer; iL1, instruction L1 cache; IT, instruction table; PC, program counter; RR, renaming registers; RT, renaming table; uL2, L2 unified cache; FU, functional unit

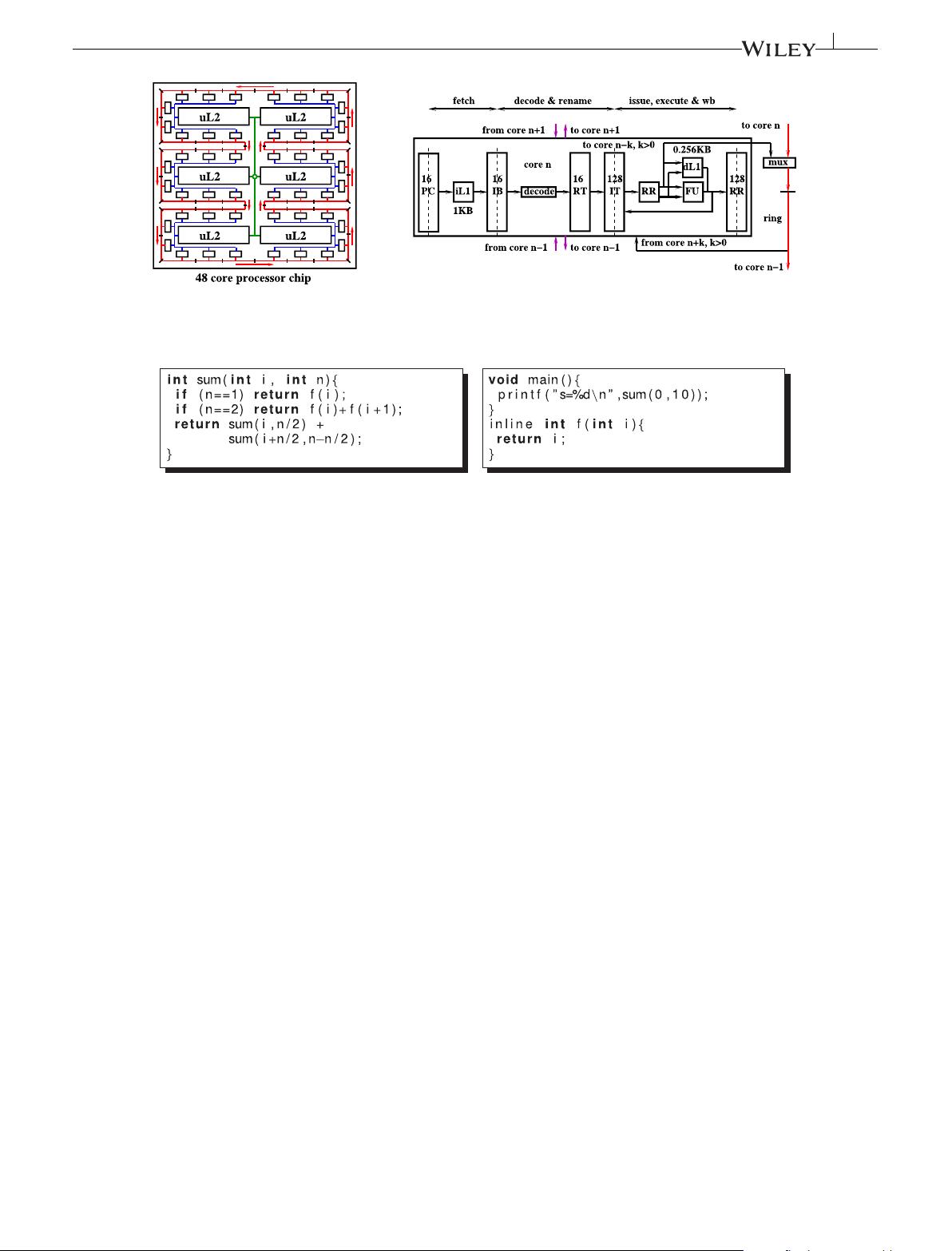

FIGURE 3 A sum reduction programmed in C

Once on the ring, the data moves nonstop to the destination, crossing 1 separator per cycle.

Each core hosts a set of thread slots (eg, 16 thread slots per core). A thread is represented by its program counter (PC) and its renaming table. The thread code is fetched from an instruction L1 cache (iL1). The fetch stage selects 1 ready thread PC and fetches 1 instruction from the iL1. The instruction is saved in the thread instruction buffer. The decode and rename stage selects 1 full instruction buffer; the instruction it holds is decoded and renamed through the thread renaming table. The renamed instruction is saved in the instruction table. The issue, execute, and write back stage selects 1 ready instruction in the instruction table and executes it: the instruction reads its sources from and writes its result to the renaming registers (RR). At full speed, the processor runs 1 instruction per cycle per core (eg, 1K instructions per cycle for a 1K-core processor).
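The fetch-stage selection described above can be sketched in C as follows. This is a minimal model, not the paper's hardware: the `thread_slot` layout, the round-robin scan, and the condition that the instruction buffer must be empty before fetching are all assumptions introduced for illustration.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

#define SLOTS_PER_CORE 16  /* "eg, 16 thread slots per core" in the text */

/* Hypothetical model of one thread slot (field names are assumptions). */
typedef struct {
    uint64_t pc;       /* program counter of the hosted thread */
    bool     ready;    /* the PC is valid and the thread may fetch */
    bool     ib_full;  /* the instruction buffer already holds an instruction */
} thread_slot;

/* Fetch-stage selection: return the index of one ready slot whose
   instruction buffer is empty, scanning round-robin from `start`.
   The round-robin policy is an assumption. Returns -1 if no slot qualifies. */
int select_fetch_slot(const thread_slot slots[SLOTS_PER_CORE], int start)
{
    assert(start >= 0 && start < SLOTS_PER_CORE);
    for (int k = 0; k < SLOTS_PER_CORE; k++) {
        int s = (start + k) % SLOTS_PER_CORE;
        if (slots[s].ready && !slots[s].ib_full)
            return s;
    }
    return -1;
}

/* Peak throughput in instructions per cycle: 1 per core. */
uint64_t peak_ipc(uint64_t cores)
{
    return cores;
}
```

Under this model, `peak_ipc(1024)` gives the 1K instructions per cycle quoted for a 1K-core processor.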

The instruction set architecture is restricted to register-register, control, and Input/Output (I/O) instructions. There are no memory-access instructions. I/O instructions use a data memory hierarchy starting from the data L1 cache (dL1). A register may be written to an output file, at a position given by a descriptor and an offset (eg, OUT %rdi, %rbp, %rbx: store rbx to file descriptor rdi at position rbp). A register may also be loaded from a file, given a descriptor and a read position (eg, IN %rdi, %rbp, %rbx: load rbx from file descriptor rdi at position rbp).
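The semantics of the 2 I/O instructions can be sketched in C. This is an illustrative model only: the names `io_out` and `io_in`, the fixed file count and size, and the word-granular positions are assumptions, not part of the instruction set definition.

```c
#include <assert.h>
#include <stdint.h>

#define MAX_FILES  4   /* hypothetical limits, chosen for the sketch */
#define FILE_WORDS 64

/* Each file is modeled as an array of 64-bit words, zero-initialized. */
static uint64_t files[MAX_FILES][FILE_WORDS];

/* OUT fd, pos, src: store the value of register src to file fd at position pos. */
void io_out(unsigned fd, unsigned pos, uint64_t src)
{
    assert(fd < MAX_FILES && pos < FILE_WORDS);
    files[fd][pos] = src;
}

/* IN fd, pos, dst: return the value to load into register dst
   from file fd at position pos. */
uint64_t io_in(unsigned fd, unsigned pos)
{
    assert(fd < MAX_FILES && pos < FILE_WORDS);
    return files[fd][pos];
}
```

With this model, `io_out(1, 5, 42)` followed by `io_in(1, 5)` yields 42, mirroring an OUT followed by an IN on the same descriptor and position.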

External accesses are organized as file accesses rather than random memory accesses to simplify memory coherency control. The file access pattern synchronizes a set of parallel file reads with a matching set of parallel file writes. We replace the individual memory read-after-write (mem-RAW) dependences of each read with each write by a single dependence of the read phase with the write phase. As we will see (Section 3.3), this single dependence is a control flow one, which is simpler for hardware to manage than memory dependences. A control between a parallel read and the next parallel write satisfies the mem-RAW dependence of each read with its matching write.
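The phase-level synchronization can be illustrated with a small C sketch. It is a sequential stand-in for the parallel hardware: each loop iteration represents 1 independent thread, and the `shared_file` type, the flag `write_phase_done`, and the written values are assumptions made for the example.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define N 8  /* hypothetical number of matching writers and readers */

typedef struct {
    uint64_t data[N];
    bool     write_phase_done;  /* the single phase-level dependence */
} shared_file;

/* Write phase: iteration i stands for writer thread i. */
void write_phase(shared_file *f)
{
    for (size_t i = 0; i < N; i++)
        f->data[i] = 10 * i;
    f->write_phase_done = true;
}

/* Read phase: iteration i stands for reader thread i. The N individual
   mem-RAW dependences are covered by 1 check on the phase flag. */
uint64_t read_phase(const shared_file *f)
{
    assert(f->write_phase_done);
    uint64_t total = 0;
    for (size_t i = 0; i < N; i++)
        total += f->data[i];
    return total;
}
```

The point of the sketch is that no reader tracks its own writer: ordering the 2 phases is enough to order every read after its matching write.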

The memory hierarchy design is out of the scope of this paper. However, it does not have the same constraints as a traditional multicore shared memory hierarchy. In a traditional memory, a reader consumes data that may be produced by any thread in any core. This requires keeping the hierarchy coherent to ensure memory consistency. In our design, a reading thread consumes memory data coming only from predecessor threads. We will see in this section that threads are ordered. Hence, a writer writes to its local cache and a reader gets its data from the closest writer. Strict consistency (a read operation always gets the data written by the most recent write operation) is obtained without the need to invalidate prior writes. Like mem-RAW dependences, the memory write-after-read and write-after-write dependences are enforced by serialization through a control flow instruction.
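The "closest writer" rule can be sketched in C under simplifying assumptions: threads are identified by their rank in the total order, each thread has its own `local_cache`, and a reader may also see its own writes. These names and the array-based lookup are illustrative, not the paper's memory hierarchy.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

#define THREADS 4  /* hypothetical sizes for the sketch */
#define WORDS   8

/* One local cache per thread, indexed by the thread's rank in the order. */
typedef struct {
    bool     written[WORDS];
    uint64_t value[WORDS];
} local_cache;

/* A writer only updates its own local cache; no other copy is invalidated. */
void thread_write(local_cache caches[THREADS], int t, int addr, uint64_t v)
{
    assert(t >= 0 && t < THREADS && addr >= 0 && addr < WORDS);
    caches[t].written[addr] = true;
    caches[t].value[addr] = v;
}

/* A reader scans backward from itself through its predecessors and takes
   the value of the closest writer. Returns false if nobody wrote addr. */
bool thread_read(const local_cache caches[THREADS], int reader, int addr,
                 uint64_t *out)
{
    assert(reader >= 0 && reader < THREADS && addr >= 0 && addr < WORDS);
    for (int t = reader; t >= 0; t--) {
        if (caches[t].written[addr]) {
            *out = caches[t].value[addr];
            return true;
        }
    }
    return false;
}
```

Because a reader never looks at successor threads, a later write to the same address cannot change what an earlier reader observes, which is why prior writes need no invalidation in this model.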

Figure 3 is a sum reduction programmed in C and Figure 4 is its translation into x86. The code does not use an array; instead, it calls a function returning 1 element (function f could return an array element read from an input file instead of providing it itself; in the example, f(i) returns i).
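For readers without access to the figure, a sum reduction of this kind might look like the following C sketch. It does not reproduce Figure 3: the divide-and-conquer recursion and the signature of `sum` are assumptions; only the behavior of f (f(i) returns i) comes from the text.

```c
#include <assert.h>

/* Element provider: in the text's example, f(i) returns i. */
unsigned long f(unsigned long i)
{
    return i;
}

/* Sum of f(first) .. f(first + n - 1) for n >= 1, computed by
   splitting the range in 2 halves (assumed shape of the reduction). */
unsigned long sum(unsigned long first, unsigned long n)
{
    assert(n >= 1);
    if (n == 1)
        return f(first);
    unsigned long half = n / 2;
    return sum(first, half) + sum(first + half, n - half);
}
```

The 2 recursive calls are independent of each other, which is the kind of parallelism a fork before a call can exploit.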

In Figure 4, the fork instruction at line 11 creates a new thread on the successor core. The local core decoding the fork instruction allocates a free thread slot in its successor. The local core initializes the allocated thread PC when the next call instruction at line 15 is decoded. The PC is set to the called function return address, ie, the instruction at line 16. Between the fork and the call are push instructions at lines 12 to 14. A push r instruction sends its local renaming of r to the new thread (when push r is issued, ie, once the value of r is known). For example, the 3 push instructions on lines 12 to 14 send RR identifiers for rdi, rsi, and rbx to the created thread.
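The fork, push, and call sequence can be summarized by a small C model of the successor core's thread slots. The `slot` structure and the function names `do_fork`, `do_push`, `do_call`, and `can_fetch` are hypothetical; the sketch only captures the ordering constraints stated in the text and ignores timing.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

#define SLOTS      16  /* thread slots in the successor core (example value) */
#define MAX_PUSHED 8   /* hypothetical bound on pushed registers */

typedef struct {
    bool     allocated;              /* set by fork */
    bool     pc_valid;               /* set by call */
    uint64_t pc;                     /* resume address sent by call */
    int      pushed_rr[MAX_PUSHED];  /* RR identifiers sent by push */
    int      n_pushed;
} slot;

/* fork: allocate a free slot in the successor core; -1 if none is free. */
int do_fork(slot succ[SLOTS])
{
    for (int s = 0; s < SLOTS; s++) {
        if (!succ[s].allocated) {
            succ[s].allocated = true;
            succ[s].pc_valid = false;
            succ[s].n_pushed = 0;
            return s;
        }
    }
    return -1;
}

/* push r: send the local renaming (an RR identifier) of r to the new thread. */
void do_push(slot *t, int rr_id)
{
    assert(t->allocated && t->n_pushed < MAX_PUSHED);
    t->pushed_rr[t->n_pushed++] = rr_id;
}

/* call: set the new thread's PC to the called function's return address. */
void do_call(slot *t, uint64_t return_address)
{
    assert(t->allocated);
    t->pc = return_address;
    t->pc_valid = true;
}

/* The new thread can start fetching only once it has received its PC. */
bool can_fetch(const slot *t)
{
    return t->allocated && t->pc_valid;
}
```

In terms of Figure 4, line 11 corresponds to `do_fork`, lines 12 to 14 to 3 calls of `do_push`, and line 15 to `do_call` with the address of line 16.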

The call instruction sends the resume code address. Once the

new thread has received the PC value, it starts fetching. Its first