ISSCC 2019 / February 18, 2019 / 8:45 AM

DIGEST OF TECHNICAL PAPERS • 17

1

(differentiable) associative-memory modules; (6) networks that manipulate annotated graphs instead of tensors. However, chances are that the bulk of the computation in future DL systems will still consist of convolutions.
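One way to see why convolutions tend to dominate the arithmetic is to count multiply-accumulate (MAC) operations. A convolution kernel is reused at every output position, so each weight takes part in many MACs. A fully connected layer, by contrast, uses each weight once per input vector. The Python sketch below makes these assumptions: a dense 2-D convolution with no grouping, an output size that already accounts for stride and padding, and no biases. The layer sizes are hypothetical, and the helper names (`conv2d_macs`, `conv2d_params`, `linear_macs`) are illustrative rather than taken from the paper.

```python
# Rough multiply-accumulate (MAC) counting for two layer types.
# Assumptions: dense convolution (groups=1), output size already reflects
# stride/padding, biases and activation functions ignored.

def conv2d_macs(h_out: int, w_out: int, c_in: int, c_out: int, k_h: int, k_w: int) -> int:
    """MACs for one 2-D convolution: each of the h_out*w_out*c_out outputs
    needs c_in*k_h*k_w multiply-accumulates."""
    return h_out * w_out * c_out * c_in * k_h * k_w


def conv2d_params(c_in: int, c_out: int, k_h: int, k_w: int) -> int:
    """Weights in the same layer; one kernel is shared by every output position."""
    return c_out * c_in * k_h * k_w


def linear_macs(n_in: int, n_out: int) -> int:
    """MACs for a fully connected layer on one input vector: one per weight."""
    return n_in * n_out


# Hypothetical layer sizes, for illustration only.
conv = conv2d_macs(56, 56, 64, 64, 3, 3)
conv_w = conv2d_params(64, 64, 3, 3)
fc = linear_macs(4096, 1000)

print(f"conv: {conv:,} MACs from {conv_w:,} weights ({conv // conv_w:,} MACs per weight)")
print(f"fc:   {fc:,} MACs from {fc:,} weights (1 MAC per weight)")
```

Consider a 3×3 convolution with 64 input and 64 output channels on a 56×56 map. It performs 115,605,504 MACs with only 36,864 weights, which is 3,136 MACs per weight (one per output position). A 4096-to-1000 fully connected layer performs 4,096,000 MACs with one MAC per weight. Real networks mix many layer shapes, so this illustrates the arithmetic rather than measuring any particular model.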

References

[1] L.D. Jackel, R.E. Howard, H.P. Graf, B. Straughn, J.S. Denker, “Artificial Neural Networks for Computing”, Journal of Vacuum Science & Technology B: Microelectronics Processing and Phenomena, 4(1), pp. 61-63, 1986.

[2] H. Graf, P. de Vegvar, “A CMOS Associative Memory Chip Based on Neural Networks”, ISSCC, pp. 304-305, 1987.

[3] G. Indiveri, et al., “Neuromorphic Silicon Neuron Circuits”, Frontiers in Neuroscience, 5, p. 73, 2011.

[4] S.B. Furber, F. Galluppi, S. Temple, L. Plana, “The SpiNNaker Project”, Proceedings of the IEEE, 102(5), pp. 652-665, 2014.

[5] F. Rosenblatt, “The Perceptron, A Perceiving and Recognizing Automaton (Project Para)”, Cornell Aeronautical Laboratory, 1957.

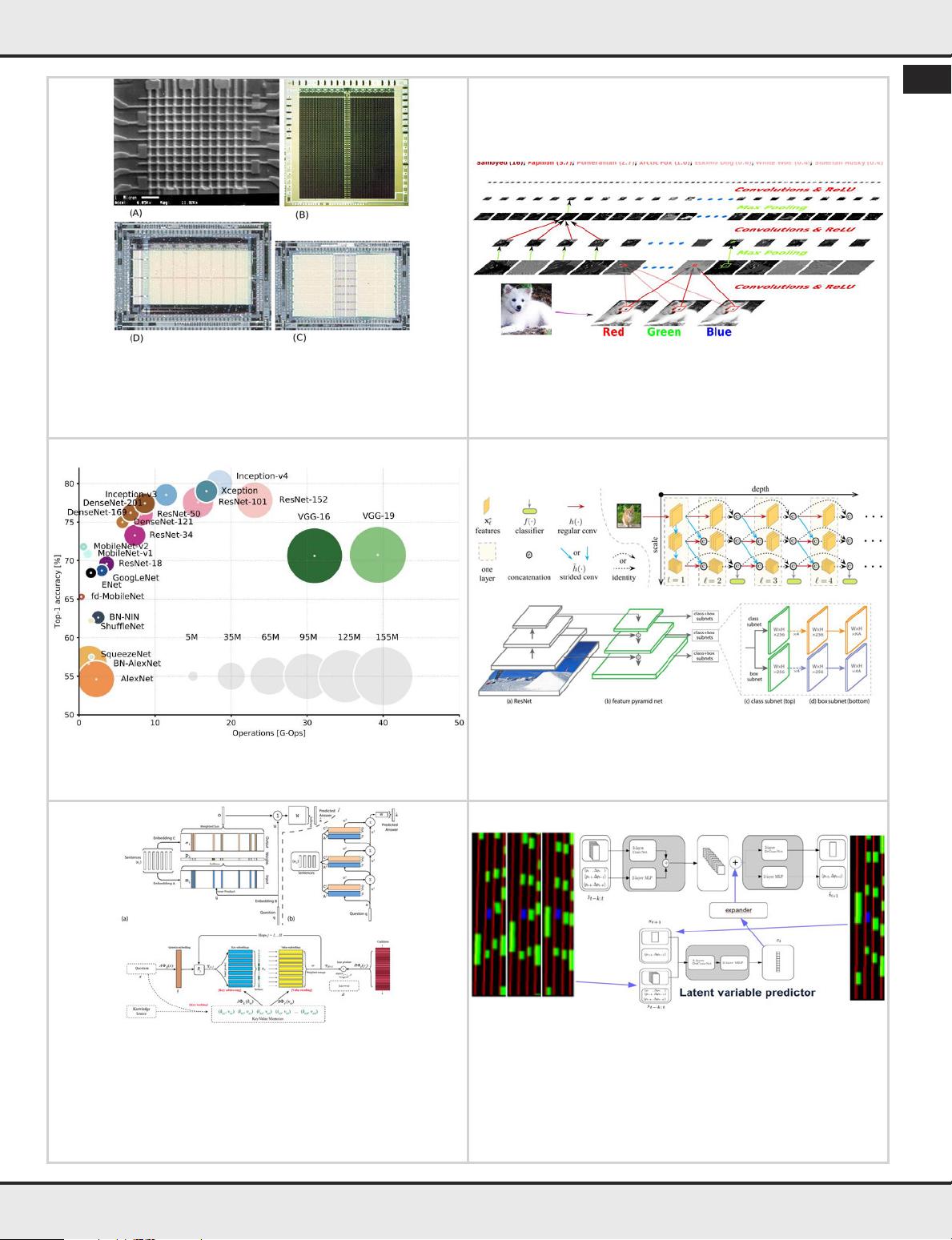

[6] B. Widrow, W.H. Pierce, J.B. Angell, “Birth, Life, and Death in Microelectronic Systems”, IRE Trans. Mil. Electron., 1051(3), pp. 191-201, 1961.

[7] R.W. Lucky, “Automatic Equalization for Digital Communication”, Bell System Technical Journal, 44(4), pp. 547-588, 1965.

[8] M. Minsky, S.A. Papert, “Perceptrons: An Introduction to Computational Geometry”, MIT Press, 1969.

[9] J.J. Hopfield, “Neural Networks and Physical Systems with Emergent Collective Computational Abilities”, Proceedings of the National Academy of Sciences, 79(8), pp. 2554-2558, 1982.

[10] G.E. Hinton, T.J. Sejnowski, “Optimal Perceptual Inference”, Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 448-453, June 1983.

[11] D.E. Rumelhart, G.E. Hinton, R.J. Williams, “Learning Representations by Back-Propagating Errors”, Nature, 323(6088), pp. 533-536, 1986.

[12] Y. LeCun, B.E. Boser, J.S. Denker, D. Henderson, R.E. Howard, W.E. Hubbard, L.D. Jackel, “Backpropagation Applied to Handwritten Zip Code Recognition”, Neural Computation, 1(4), pp. 541-551, 1989.

[13] Y. LeCun, B.E. Boser, J.S. Denker, D. Henderson, R.E. Howard, W.E. Hubbard, L.D. Jackel, “Handwritten Digit Recognition with a Back-Propagation Network”, NIPS, pp. 396-404, 1989.

[14] H.P. Graf, R. Janow, D. Henderson, R. Lee, “Reconfigurable Neural Net Chip with 32K Connections”, Advances in Neural Information Processing Systems, pp. 1032-1038, 1991.

[15] B.E. Boser, E. Säckinger, J. Bromley, Y. LeCun, L.D. Jackel, “An Analog Neural Network Processor with Programmable Topology”, IEEE Journal of Solid-State Circuits, 26(12), pp. 2017-2025, 1991.

[16] E. Säckinger, B.E. Boser, J. Bromley, Y. LeCun, L.D. Jackel, “Application of the ANNA Neural Network Chip to High-Speed Character Recognition”, IEEE Transactions on Neural Networks, 3(3), pp. 498-505, 1992.

[17] J. Cloutier, E. Cosatto, S. Pigeon, F.R. Boyer, P.Y. Simard, “VIP: An FPGA-Based Processor for Image Processing and Neural Networks”, Proc. of Int. Conf. Microelectronics for Neural Networks, pp. 330-336, 1996.

[18] Y. LeCun, L. Bottou, Y. Bengio, P. Haffner, “Gradient-Based Learning Applied to Document Recognition”, Proceedings of the IEEE, 86(11), pp. 2278-2324, 1998.

[19] L. Bottou, P. Gallinari, “A Framework for the Cooperation of Learning Algorithms”, Advances in Neural Information Processing Systems, pp. 781-788, 1991.

[20] C. Farabet, C. Poulet, Y. LeCun, “An FPGA-Based Stream Processor for Embedded Real-Time Vision with Convolutional Networks”, ICCV Workshops, pp. 878-885, September 2009.

[21] C. Farabet, Y. LeCun, K. Kavukcuoglu, E. Culurciello, B. Martini, P. Akselrod, S. Talay, “Large-Scale FPGA-Based Convolutional Networks”, in R. Bekkerman, M. Bilenko, J. Langford (Eds.), “Scaling up Machine Learning: Parallel and Distributed Approaches”, pp. 399-419, Cambridge University Press, 2011.

[22] A. Canziani, A. Paszke, E. Culurciello, “An Analysis of Deep Neural Network Models for Practical Applications”, arXiv:1605.07678, 2017.

[23] Y.H. Chen, T. Krishna, J.S. Emer, V. Sze, “Eyeriss: An Energy-Efficient Reconfigurable Accelerator for Deep Convolutional Neural Networks”, IEEE Journal of Solid-State Circuits, 52(1), pp. 127-138, 2017.

[24] Y.H. Chen, J. Emer, V. Sze, “Eyeriss v2: A Flexible and High-Performance Accelerator for Emerging Deep Neural Networks”, arXiv:1807.07928, 2018.

[25] G.E. Hinton, R.R. Salakhutdinov, “Reducing the Dimensionality of Data with Neural Networks”, Science, 313(5786), pp. 504-507, 2006.

[26] G.E. Hinton, L. Deng, D. Yu, G.E. Dahl, A.R. Mohamed, N. Jaitly, A. Senior, V. Vanhoucke, P. Nguyen, T.N. Sainath, B. Kingsbury, “Deep Neural Networks for Acoustic Modeling in Speech Recognition: The Shared Views of Four Research Groups”, IEEE Signal Processing Magazine, 29(6), pp. 82-97, 2012.

[27] R. Collobert, J. Weston, “A Unified Architecture for Natural Language Processing: Deep Neural Networks with Multitask Learning”, ICML, pp. 160-167, 2008.

[28] R. Collobert, J. Weston, L. Bottou, M. Karlen, K. Kavukcuoglu, P. Kuksa, “Natural Language Processing (Almost) from Scratch”, Journal of Machine Learning Research, pp. 2493-2537, August 2011.

[29] C. Farabet, C. Couprie, L. Najman, Y. LeCun, “Scene Parsing with Multiscale Feature Learning, Purity Trees, and Optimal Covers”, ICML, arXiv:1202.2160, 2012.

[30] P. Sermanet, K. Kavukcuoglu, S. Chintala, Y. LeCun, “Pedestrian Detection with Unsupervised Multi-Stage Feature Learning”, CVPR, pp. 3626-3633, 2013.

[31] A. Krizhevsky, I. Sutskever, G.E. Hinton, “ImageNet Classification with Deep Convolutional Neural Networks”, Advances in Neural Information Processing Systems, pp. 1097-1105, 2012.

[32] A. Joulin, E. Grave, P. Bojanowski, T. Mikolov, “Bag of Tricks for Efficient Text Classification”, Proc. 15th Conference of the European Chapter of the Association for Computational Linguistics: Volume 2, Short Papers, pp. 427-431, 2017.

[33] D. Tran, H. Wang, L. Torresani, J. Ray, Y. LeCun, M. Paluri, “A Closer Look at Spatiotemporal Convolutions for Action Recognition”, Proc. Computer Vision and Pattern Recognition, pp. 6450-6459, 2018.

[34] M. Ott, S. Edunov, D. Grangier, M. Auli, “Scaling Neural Machine Translation”, arXiv:1806.00187, 2018.

[35] J. Johnson, “Rethinking Floating Point for Deep Learning”, arXiv:1811.01721, 2018.

[36] A. Vaswani, N. Shazeer, N. Parmar, J. Uszkoreit, L. Jones, A.N. Gomez, L. Kaiser, I. Polosukhin, “Attention Is All You Need”, NIPS, pp. 5998-6008, 2017.

[37] P. Sermanet, D. Eigen, X. Zhang, M. Mathieu, R. Fergus, Y. LeCun, “OverFeat: Integrated Recognition, Localization and Detection Using Convolutional Networks”, Proc. ICLR, arXiv:1312.6229, 2014.

[38] K. He, G. Gkioxari, P. Dollár, R. Girshick, “Mask R-CNN”, Proc. ICCV, pp. 2980-2988, October 2017.

[39] T.Y. Lin, P. Dollár, R.B. Girshick, K. He, B. Hariharan, S.J. Belongie, “Feature Pyramid Networks for Object Detection”, CVPR, Vol. 1, No. 2, p. 4, 2017.

[40] T.Y. Lin, P. Goyal, R. Girshick, K. He, P. Dollár, “Focal Loss for Dense Object Detection”, Proc. ICCV, arXiv:1708.02002, 2017.

[41] O. Ronneberger, P. Fischer, T. Brox, “U-Net: Convolutional Networks for Biomedical Image Segmentation”, International Conference on Medical Image Computing and Computer-Assisted Intervention, pp. 234-241, October 2015.

[42] P. Jaeger, S. Kohl, S. Bickelhaupt, F. Isensee, T.A. Kuder, H.-P. Schlemmer, K. Maier-Hein, “Retina U-Net: Embarrassingly Simple Exploitation of Segmentation Supervision for Medical Object Detection”, arXiv:1811.08661, 2018.

[43] M. Mathieu, C. Couprie, Y. LeCun, “Deep Multi-Scale Video Prediction Beyond Mean Square Error”, ICLR, arXiv:1511.05440, 2016.

[44] C. Finn, I. Goodfellow, S. Levine, “Unsupervised Learning for Physical Interaction Through Video Prediction”, Advances in Neural Information Processing Systems, pp. 64-72, 2016.

[45] M. Henaff, A. Canziani, Y. LeCun, “Model-Predictive Policy Learning with Uncertainty Regularization for Driving in Dense Traffic”, to appear, 2019.

[46] P. Luc, C. Couprie, Y. LeCun, J. Verbeek, “Predicting Future Instance Segmentations by Forecasting Convolutional Features”, ECCV, arXiv:1803.11496, 2018.
