SMO算法:训练支持向量机的高效方法

需积分: 10 106 浏览量

更新于2024-07-23

收藏 88KB PDF 举报

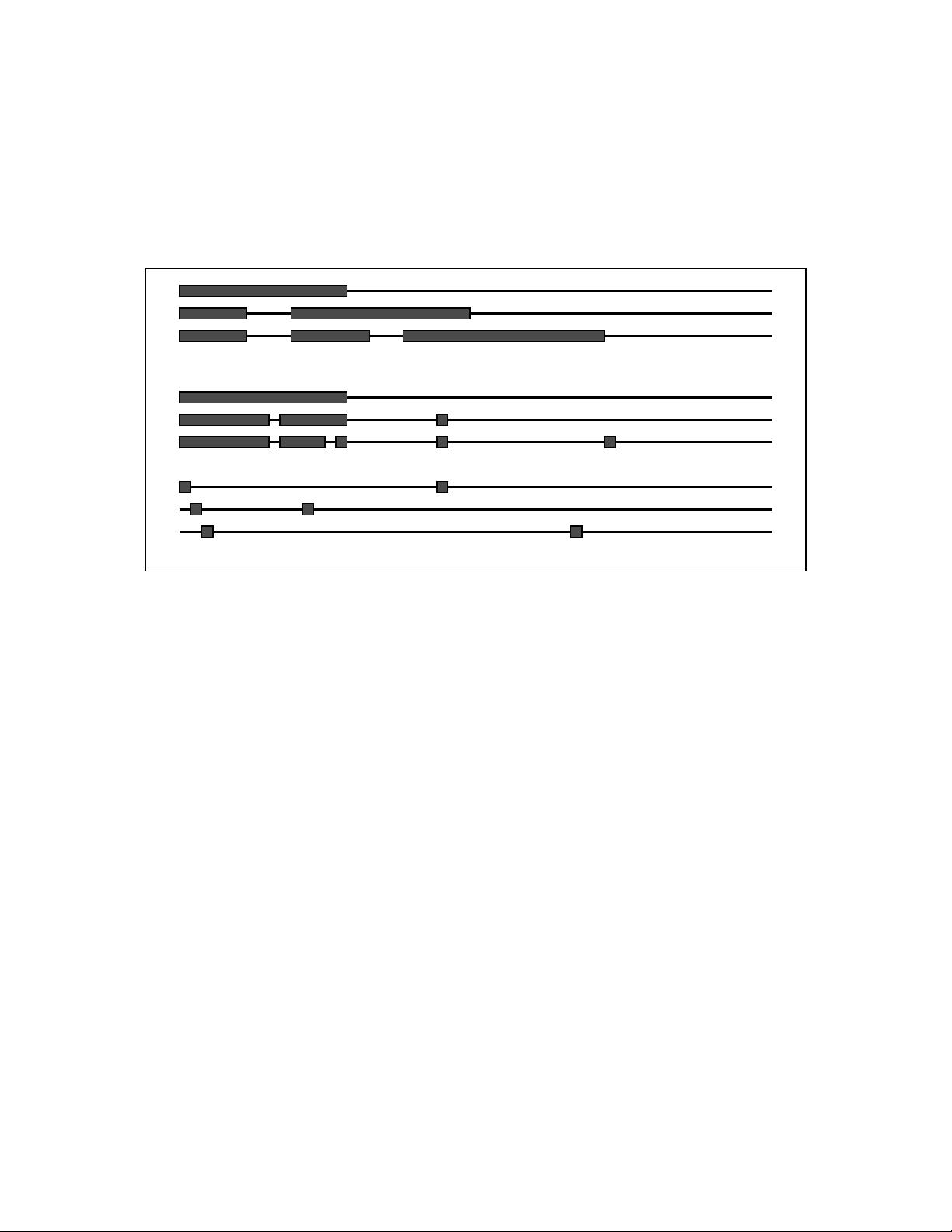

本文档主要探讨了"SMO优化算法",一种针对支持向量机(SVM)训练的高效方法。Sequential Minimal Optimization,或简称SMO,是John Platt在1998年提出的一种创新算法,发表于Microsoft Research的技术报告MSR-TR-98-14中。SMO算法的核心在于它将原本的大规模二次规划(QP)问题分解为一系列最小子问题,这些子问题可以通过解析解法解决,避免了耗时的数值优化循环。这显著提高了算法的计算效率。

SMO算法的优势在于内存需求与训练集大小成线性关系,这意味着它可以处理非常庞大的数据集,这是传统SVM算法难以实现的。由于SMO算法减少了矩阵运算,其时间复杂度在不同测试问题中表现良好,对于线性SVM和稀疏数据集尤其表现出色。相比之下,传统的分块SVM算法在训练集大小上的计算开销更大,可能达到线性到立方的增长。

SMO的计算瓶颈主要在于支持向量的评估,但这正是其速度优势的来源。因此,当数据集易于处理且问题为线性时,SMO算法可以提供显著的性能提升。总结来说,这篇文章不仅阐述了SMO算法的原理和设计,还强调了它在实际应用中的高效性和对大型数据集的友好性,为SVM模型的训练提供了一种重要的优化手段。

2020-03-06 上传

2021-06-04 上传

点击了解资源详情

点击了解资源详情

2021-05-26 上传

2019-09-07 上传

2019-09-11 上传

keaiwenwen

- 粉丝: 12

- 资源: 3

最新资源

- c#基础教程(pdf)

- Addison.Wesley.Modern.C++.Design-.Generic.Programming.and.Design.Patterns.Applied.pdf

- Addison.Wesley.Efficient.C++.Performance.Programming.Techniques.pdf

- JSP如何连接mysql

- cookies的注入方法和原理.doc

- Addison.Wesley.Effective.Stl.50.Specific.Ways.To.Improve.Your.Use.Of.Stl.pdf

- 2007年上半年程序员上午题

- Addison.Wesley.Concrete.Mathematics.A.Foundation.for.Computer.Science.pdf

- 《网络攻击透视与防范》上机指导书

- Addison.Wesley.Bjarne.Stroustrup.The.C++.Programming.Language.Third.Edition.pdf

- A R M 开 发 详 解

- autorun.inf文件夹如何删除

- the art of designing embedded system

- TOAD快速入门中文教程

- windows DOS命令大全

- 西门子S7-300编程手册