
M. I. JORDAN


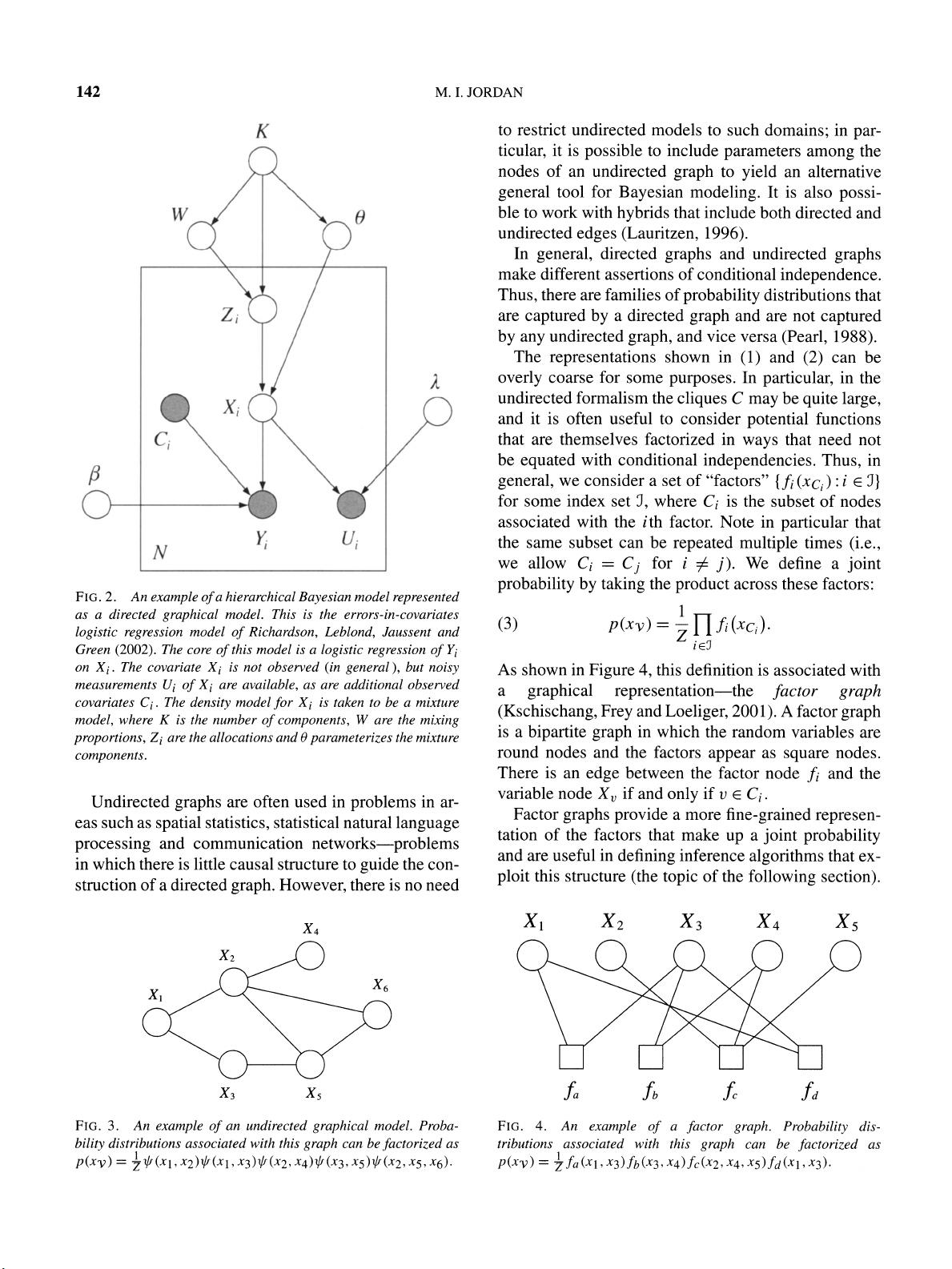

FIG. 2. An example of a hierarchical Bayesian model represented as a directed graphical model. This is the errors-in-covariates logistic regression model of Richardson, Leblond, Jaussent and Green (2002). The core of this model is a logistic regression of $Y_i$ on $X_i$. The covariate $X_i$ is not observed (in general), but noisy measurements $U_i$ of $X_i$ are available, as are additional observed covariates $C_i$. The density model for $X_i$ is taken to be a mixture model, where $K$ is the number of components, $W$ are the mixing proportions, $Z_i$ are the allocations and $\theta$ parameterizes the mixture components.
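To make the caption's description concrete, the following Python sketch simulates data forward from a model with the same dependency structure. The specific distributions are illustrative assumptions and are not taken from Richardson et al. (2002): a symmetric Dirichlet prior on $W$, Gaussian mixture components whose (mean, standard deviation) pairs play the role of $\theta$, Gaussian measurement error for $U_i$ given $X_i$, a standard normal $C_i$, and fixed regression coefficients. The function names `sample_dirichlet` and `simulate_errors_in_covariates` are hypothetical.

```python
import math
import random


def sample_dirichlet(alpha, rng):
    """Draw one probability vector from Dirichlet(alpha) via normalized Gamma variates."""
    draws = [rng.gammavariate(a, 1.0) for a in alpha]
    total = sum(draws)
    return [d / total for d in draws]


def simulate_errors_in_covariates(n, K=3, seed=0):
    """Forward-simulate a hypothetical instance of the Fig. 2 structure.

    Every distributional choice below is an illustrative assumption.
    """
    rng = random.Random(seed)
    W = sample_dirichlet([1.0] * K, rng)                     # mixing proportions W
    theta = [(rng.gauss(0.0, 3.0), 1.0) for _ in range(K)]   # theta: (mean, sd) per component
    beta0, beta_x, beta_c = -0.5, 1.2, 0.8                   # assumed regression coefficients
    sigma_u = 0.5                                            # assumed measurement-error sd
    data = []
    for _ in range(n):
        z = rng.choices(range(K), weights=W)[0]              # allocation Z_i
        mean, sd = theta[z]
        x = rng.gauss(mean, sd)                              # latent covariate X_i
        u = rng.gauss(x, sigma_u)                            # noisy measurement U_i of X_i
        c = rng.gauss(0.0, 1.0)                              # additional observed covariate C_i
        eta = beta0 + beta_x * x + beta_c * c                # linear predictor
        prob = 1.0 / (1.0 + math.exp(-eta))                  # logistic link
        y = 1 if rng.random() < prob else 0                  # response Y_i
        data.append({"Z": z, "X": x, "U": u, "C": c, "Y": y})
    return W, theta, data


W, theta, data = simulate_errors_in_covariates(n=200)
```

In an actual analysis only $U_i$, $C_i$ and $Y_i$ would be observed; $Z_i$ and $X_i$ are returned here only so that the simulated structure can be inspected.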

Undirected graphs are often used in problems in areas such as spatial statistics, statistical natural language processing and communication networks: problems in which there is little causal structure to guide the construction of a directed graph. However, there is no need to restrict undirected models to such domains; in particular, it is possible to include parameters among the nodes of an undirected graph to yield an alternative general tool for Bayesian modeling. It is also possible to work with hybrids that include both directed and undirected edges (Lauritzen, 1996).

In general, directed graphs and undirected graphs make different assertions of conditional independence. Thus, there are families of probability distributions that are captured by a directed graph and are not captured by any undirected graph, and vice versa (Pearl, 1988).
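A standard illustration of this difference is the "explaining away" configuration $a \to c \leftarrow b$. In the Python sketch below, chosen here for illustration rather than taken from the text, $a$ and $b$ are independent fair coins and $c = a \lor b$. Exact rational arithmetic confirms that $a$ and $b$ are marginally independent but become dependent once $c = 1$ is observed. No undirected graph can encode exactly that pattern, because separation in an undirected graph is monotone: if the empty set separates $a$ from $b$, then so does $\{c\}$. The helper names are hypothetical.

```python
from fractions import Fraction
from itertools import product


def explaining_away_joint():
    """p(a, b, c) for a -> c <- b with a, b independent fair coins and c = a OR b."""
    p = {}
    for a, b in product((0, 1), repeat=2):
        for c in (0, 1):
            p[(a, b, c)] = Fraction(1, 4) if c == (a | b) else Fraction(0)
    return p


def marginal(p, keep):
    """Sum out every coordinate whose index is not in `keep`."""
    out = {}
    for x, px in p.items():
        key = tuple(x[i] for i in keep)
        out[key] = out.get(key, Fraction(0)) + px
    return out


def independent_marginally(p):
    """Check p(a, b) == p(a) p(b) for every (a, b)."""
    pab, pa, pb = marginal(p, (0, 1)), marginal(p, (0,)), marginal(p, (1,))
    return all(pab[(a, b)] == pa[(a,)] * pb[(b,)] for a, b in product((0, 1), repeat=2))


def independent_given_c(p, c):
    """Check p(a, b | c) == p(a | c) p(b | c) for the given value of c."""
    pc = marginal(p, (2,))[(c,)]
    pac, pbc = marginal(p, (0, 2)), marginal(p, (1, 2))
    return all(
        p[(a, b, c)] / pc == (pac[(a, c)] / pc) * (pbc[(b, c)] / pc)
        for a, b in product((0, 1), repeat=2)
    )


p = explaining_away_joint()
print(independent_marginally(p), independent_given_c(p, 1))  # True False
```

Given $c = 1$, for instance, $p(a = 1, b = 1 \mid c = 1) = 1/3$ while $p(a = 1 \mid c = 1)\,p(b = 1 \mid c = 1) = 4/9$.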

The representations shown in (1) and (2) can be overly coarse for some purposes. In particular, in the undirected formalism the cliques $C$ may be quite large, and it is often useful to consider potential functions that are themselves factorized in ways that need not be equated with conditional independencies. Thus, in general, we consider a set of "factors" $\{f_i(x_{C_i}) : i \in \mathcal{J}\}$ for some index set $\mathcal{J}$, where $C_i$ is the subset of nodes associated with the $i$th factor. Note in particular that the same subset can be repeated multiple times (i.e., we allow $C_i = C_j$ for $i \neq j$). We define a joint probability by taking the product across these factors:

$$p(x_V) = \frac{1}{Z} \prod_{i \in \mathcal{J}} f_i(x_{C_i}). \tag{3}$$
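Equation (3) can be evaluated directly on a small problem by enumerating every configuration, multiplying the factors and dividing by $Z$. The Python sketch below does this for binary variables; the three factors are arbitrary illustrative choices, and two of them share the scope $(0, 1)$, so $C_1 = C_3$, to show that repeated subsets are permitted. Brute-force enumeration is exponential in $|V|$ and is meant only to make (3) concrete, not as an inference method; the name `normalized_joint` is hypothetical.

```python
from itertools import product
from math import prod


def normalized_joint(factors, n_vars):
    """Evaluate p(x_V) = (1/Z) * prod_i f_i(x_{C_i}) over all binary x_V.

    `factors` is a list of (C_i, f_i) pairs: C_i is a tuple of variable
    indices and f_i maps the sub-configuration x_{C_i} to a nonnegative number.
    """
    unnormalized = {
        x: prod(f(tuple(x[v] for v in C)) for C, f in factors)
        for x in product((0, 1), repeat=n_vars)
    }
    Z = sum(unnormalized.values())  # normalizing constant
    return {x: val / Z for x, val in unnormalized.items()}, Z


# Hypothetical factors; the first and third both have scope (0, 1), i.e. C_1 = C_3.
factors = [
    ((0, 1), lambda xc: 2.0 if xc[0] == xc[1] else 1.0),
    ((1, 2), lambda xc: 3.0 if xc == (1, 1) else 1.0),
    ((0, 1), lambda xc: 1.0 + xc[0]),
]
p, Z = normalized_joint(factors, n_vars=3)
print(Z)  # 28.0
```

Because multiplication is commutative, the two factors on $(0, 1)$ could be merged into one without changing $p$; keeping them separate is the finer-grained bookkeeping that (3) allows.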

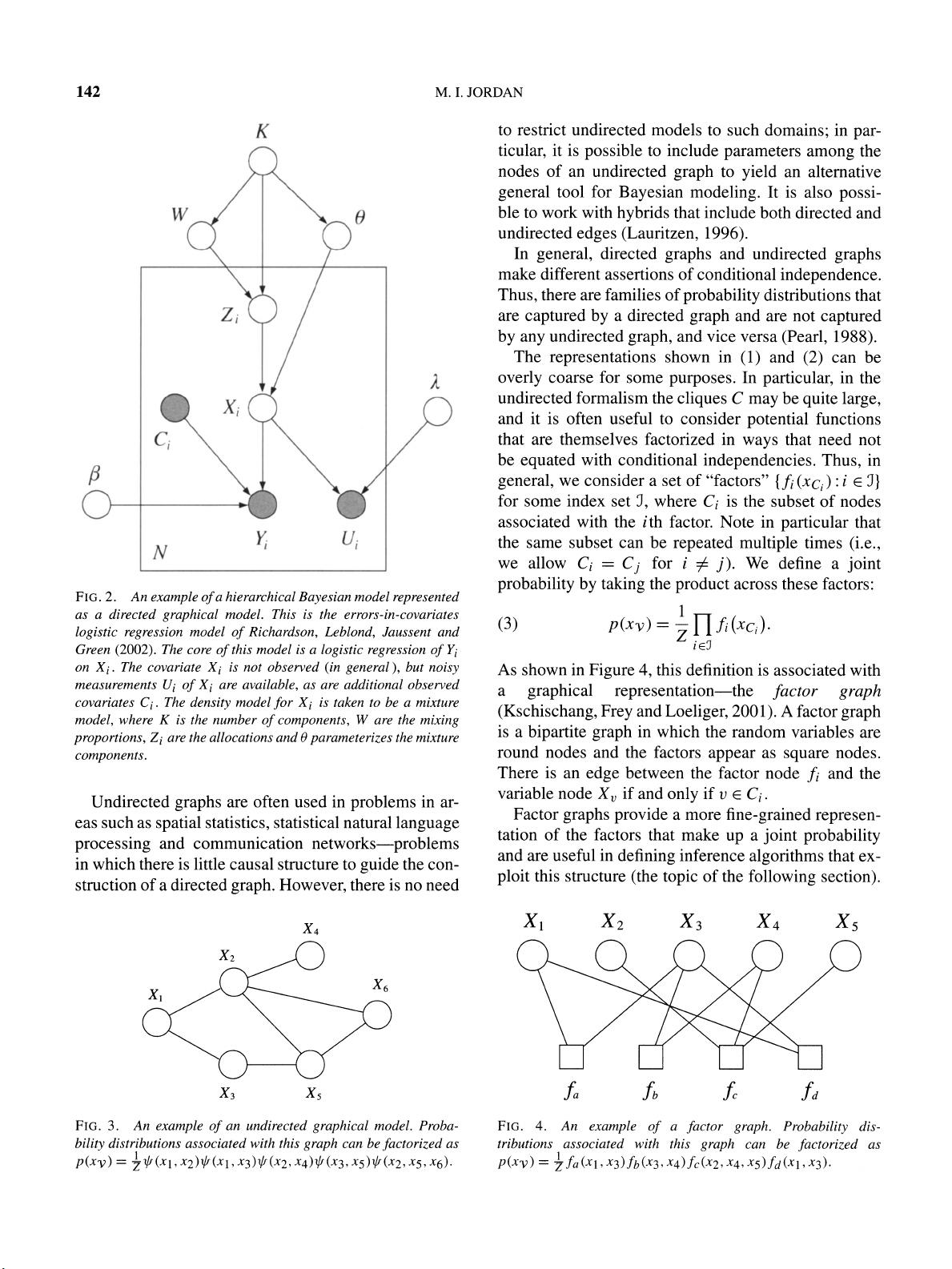

As shown in Figure 4, this definition is associated with a graphical representation, the factor graph (Kschischang, Frey and Loeliger, 2001). A factor graph is a bipartite graph in which the random variables are round nodes and the factors appear as square nodes. There is an edge between the factor node $f_i$ and the variable node $X_v$ if and only if $v \in C_i$. Factor graphs provide a more fine-grained representation of the factors that make up a joint probability and are useful in defining inference algorithms that exploit this structure (the topic of the following section).
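The edge rule for a factor graph ($f_i$ is joined to $X_v$ exactly when $v \in C_i$) translates directly into code. The Python sketch below builds the bipartite edge set from a dictionary of factor scopes, using the scopes read off the factorization in the caption of Figure 4; representing factors and variables by string names, and the function name `factor_graph`, are assumptions made for illustration.

```python
def factor_graph(scopes):
    """Build the bipartite factor graph for factors with the given scopes.

    `scopes` maps a factor name to the tuple of variable names C_i it touches.
    There is an edge (f_i, X_v) exactly when v is in C_i.
    """
    edges = {(f, v) for f, C in scopes.items() for v in C}
    # The neighbors of a factor node are just its scope; collect the
    # neighbors of each variable node as well.
    variable_nbrs = {}
    for f, v in sorted(edges):
        variable_nbrs.setdefault(v, []).append(f)
    return edges, variable_nbrs


# Scopes read off the factorization in the Fig. 4 caption.
fig4 = {
    "f_a": ("x1", "x3"),
    "f_b": ("x3", "x4"),
    "f_c": ("x2", "x4", "x5"),
    "f_d": ("x1", "x3"),
}
edges, variable_nbrs = factor_graph(fig4)
print(variable_nbrs["x3"])  # ['f_a', 'f_b', 'f_d']
```

Note that $f_a$ and $f_d$ remain distinct square nodes even though they have the same scope.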


FIG. 3. An example of an undirected graphical model. Probability distributions associated with this graph can be factorized as $p(x_V) = \frac{1}{Z}\,\psi(x_1, x_2)\,\psi(x_1, x_3)\,\psi(x_2, x_4)\,\psi(x_3, x_5)\,\psi(x_2, x_5, x_6)$.
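The factorization in the caption of Figure 3 implies conditional independencies that can be checked numerically. In the Python sketch below, the variables are binary and the potentials are drawn at random (both illustrative assumptions), and $p(x_4 = 1 \mid \text{all other variables})$ is computed from the product of potentials, in which $Z$ cancels. Since $x_4$ appears only in $\psi(x_2, x_4)$, this conditional should depend on $x_2$ alone; that is, $X_4$ is conditionally independent of $\{X_1, X_3, X_5, X_6\}$ given $X_2$. All function names are hypothetical.

```python
import random
from itertools import product

# Cliques read off the Fig. 3 factorization; variables are numbered 1..6.
CLIQUES = [(1, 2), (1, 3), (2, 4), (3, 5), (2, 5, 6)]


def random_potentials(seed=0):
    """Assign an arbitrary positive value to every configuration of every clique."""
    rng = random.Random(seed)
    return {
        C: {xc: rng.uniform(0.1, 2.0) for xc in product((0, 1), repeat=len(C))}
        for C in CLIQUES
    }


def unnormalized(x, psi):
    """Product of clique potentials for a full assignment x (dict: node -> 0/1)."""
    val = 1.0
    for C in CLIQUES:
        val *= psi[C][tuple(x[v] for v in C)]
    return val


def conditional_x4(x_rest, psi):
    """p(x4 = 1 | x_rest); the normalizing constant Z cancels in this ratio."""
    on = unnormalized({**x_rest, 4: 1}, psi)
    off = unnormalized({**x_rest, 4: 0}, psi)
    return on / (on + off)


def depends_only_on_x2(psi, tol=1e-12):
    """True if p(x4 = 1 | rest) agrees across all settings of rest that share x2."""
    by_x2 = {}
    for vals in product((0, 1), repeat=5):
        x_rest = dict(zip((1, 2, 3, 5, 6), vals))
        by_x2.setdefault(x_rest[2], []).append(conditional_x4(x_rest, psi))
    return all(max(qs) - min(qs) < tol for qs in by_x2.values())


print(depends_only_on_x2(random_potentials(seed=0)))  # True
```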


FIG. 4. An example of a factor graph. Probability distributions associated with this graph can be factorized as $p(x_V) = \frac{1}{Z}\,f_a(x_1, x_3)\,f_b(x_3, x_4)\,f_c(x_2, x_4, x_5)\,f_d(x_1, x_3)$.
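Projecting a factor graph onto its variables shows what the finer-grained representation adds. The Python sketch below (the name `variable_graph` is hypothetical) joins every pair of variables that share a factor; each scope then forms a clique, so the same distribution also factorizes over this undirected graph. Applied to the scopes of Figure 4, $f_a$ and $f_d$ collapse onto the single edge $\{x_1, x_3\}$, and the three-way factor $f_c(x_2, x_4, x_5)$ becomes a triangle that the undirected graph cannot distinguish from three pairwise factors.

```python
from itertools import combinations


def variable_graph(scopes):
    """Undirected edges obtained by joining every pair of variables that share a factor."""
    edges = set()
    for C in scopes.values():
        for u, v in combinations(sorted(set(C)), 2):
            edges.add((u, v))
    return edges


# Scopes read off the factorization in the Fig. 4 caption.
fig4 = {
    "f_a": ("x1", "x3"),
    "f_b": ("x3", "x4"),
    "f_c": ("x2", "x4", "x5"),
    "f_d": ("x1", "x3"),
}
print(sorted(variable_graph(fig4)))
# [('x1', 'x3'), ('x2', 'x4'), ('x2', 'x5'), ('x3', 'x4'), ('x4', 'x5')]
```

Four factors yield five undirected edges, and the distinction between $f_a$ and $f_d$ is lost in the projection.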