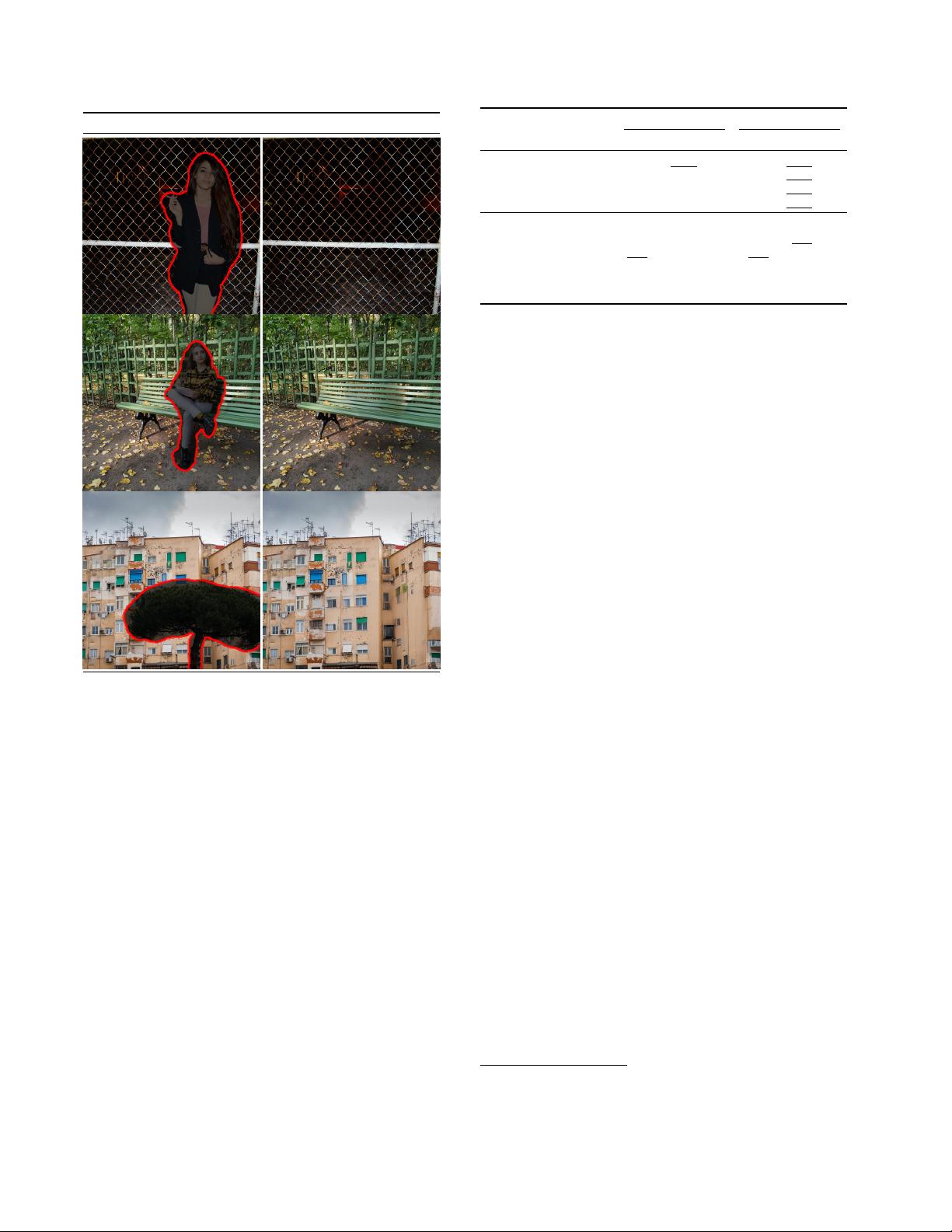

bicubic LDM-SR SR3

Figure 10. ImageNet 64→256 super-resolution on ImageNet-Val.

LDM-SR has advantages at rendering realistic textures but SR3

can synthesize more coherent fine structures. See appendix for

additional samples and cropouts. SR3 results from [72].

[72] and fix the image degradation to a bicubic interpola-

tion with 4×-downsampling and train on ImageNet follow-

ing SR3’s data processing pipeline. We use the f = 4 au-

toencoding model pretrained on OpenImages (VQ-reg., cf .

Tab. 8) and concatenate the low-resolution conditioning y

and the inputs to the UNet, i.e. τ

θ

is the identity. Our quali-

tative and quantitative results (see Fig. 10 and Tab. 5) show

competitive performance and LDM-SR outperforms SR3

in FID while SR3 has a better IS. A simple image regres-

sion model achieves the highest PSNR and SSIM scores;

however these metrics do not align well with human per-

ception [106] and favor blurriness over imperfectly aligned

high frequency details [72]. Further, we conduct a user

study comparing the pixel-baseline with LDM-SR. We fol-

low SR3 [72] where human subjects were shown a low-res

image in between two high-res images and asked for pref-

erence. The results in Tab. 4 affirm the good performance

of LDM-SR. PSNR and SSIM can be pushed by using a

post-hoc guiding mechanism [15] and we implement this

image-based guider via a perceptual loss, see Sec. D.6.

SR on ImageNet Inpainting on Places

User Study Pixel-DM (f1) LDM-4 LAMA [88] LDM-4

Task 1: Preference vs GT ↑ 16.0% 30.4% 13.6% 21.0%

Task 2: Preference Score ↑ 29.4% 70.6% 31.9% 68.1%

Table 4. Task 1: Subjects were shown ground truth and generated

image and asked for preference. Task 2: Subjects had to decide

between two generated images. More details in E.3.6

Since the bicubic degradation process does not generalize

well to images which do not follow this pre-processing, we

also train a generic model, LDM-BSR, by using more di-

verse degradation. The results are shown in Sec. D.6.1.

Method FID ↓ IS ↑ PSNR ↑ SSIM ↑ N

params

[

samples

s

](

∗

)

Image Regression [72] 15.2 121.1 27.9 0.801 625M N/A

SR3 [72] 5.2 180.1 26.4 0.762 625M N/A

LDM-4 (ours, 100 steps) 2.8

†

/4.8

‡

166.3 24.4±3.8 0.69±0.14 169M 4.62

emphLDM-4 (ours, big, 100 steps) 2.4

†

/4.3

‡

174.9 24.7±4.1 0.71±0.15 552M 4.5

LDM-4 (ours, 50 steps, guiding) 4.4

†

/6.4

‡

153.7 25.8±3.7 0.74±0.12 184M 0.38

Table 5. ×4 upscaling results on ImageNet-Val. (256

2

);

†

: FID

features computed on validation split,

‡

: FID features computed

on train split;

∗

: Assessed on a NVIDIA A100

train throughput sampling throughput

†

train+val FID@2k

Model (reg.-type) samples/sec. @256 @512 hours/epoch epoch 6

LDM-1 (no first stage) 0.11 0.26 0.07 20.66 24.74

LDM-4 (KL, w/ attn) 0.32 0.97 0.34 7.66 15.21

LDM-4 (VQ, w/ attn) 0.33 0.97 0.34 7.04 14.99

LDM-4 (VQ, w/o attn) 0.35 0.99 0.36 6.66 15.95

Table 6. Assessing inpainting efficiency.

†

: Deviations from Fig. 7

due to varying GPU settings/batch sizes cf . the supplement.

4.5. Inpainting with Latent Diffusion

Inpainting is the task of filling masked regions of an im-

age with new content either because parts of the image are

are corrupted or to replace existing but undesired content

within the image. We evaluate how our general approach

for conditional image generation compares to more special-

ized, state-of-the-art approaches for this task. Our evalua-

tion follows the protocol of LaMa [88], a recent inpainting

model that introduces a specialized architecture relying on

Fast Fourier Convolutions [8]. The exact training & evalua-

tion protocol on Places [108] is described in Sec. E.2.2.

We first analyze the effect of different design choices for

the first stage. In particular, we compare the inpainting ef-

ficiency of LDM-1 (i.e. a pixel-based conditional DM) with

LDM-4, for both KL and VQ regularizations, as well as VQ-

LDM-4 without any attention in the first stage (see Tab. 8),

where the latter reduces GPU memory for decoding at high

resolutions. For comparability, we fix the number of param-

eters for all models. Tab. 6 reports the training and sampling

throughput at resolution 256

2

and 512

2

, the total training

time in hours per epoch and the FID score on the validation

split after six epochs. Overall, we observe a speed-up of at

least 2.7× between pixel- and latent-based diffusion models

while improving FID scores by a factor of at least 1.6×.

The comparison with other inpainting approaches in

Tab. 7 shows that our model with attention improves the

overall image quality as measured by FID over that of [88].

LPIPS between the unmasked images and our samples is

slightly higher than that of [88]. We attribute this to [88]

only producing a single result which tends to recover more

of an average image compared to the diverse results pro-

duced by our LDM cf . Fig. 21. Additionally in a user study

(Tab. 4) human subjects favor our results over those of [88].

Based on these initial results, we also trained a larger dif-

fusion model (big in Tab. 7) in the latent space of the VQ-

regularized first stage without attention. Following [15],

the UNet of this diffusion model uses attention layers on

three levels of its feature hierarchy, the BigGAN [3] residual

block for up- and downsampling and has 387M parameters

8

我的内容管理

收起

我的内容管理

收起

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

信息提交成功

信息提交成功