3

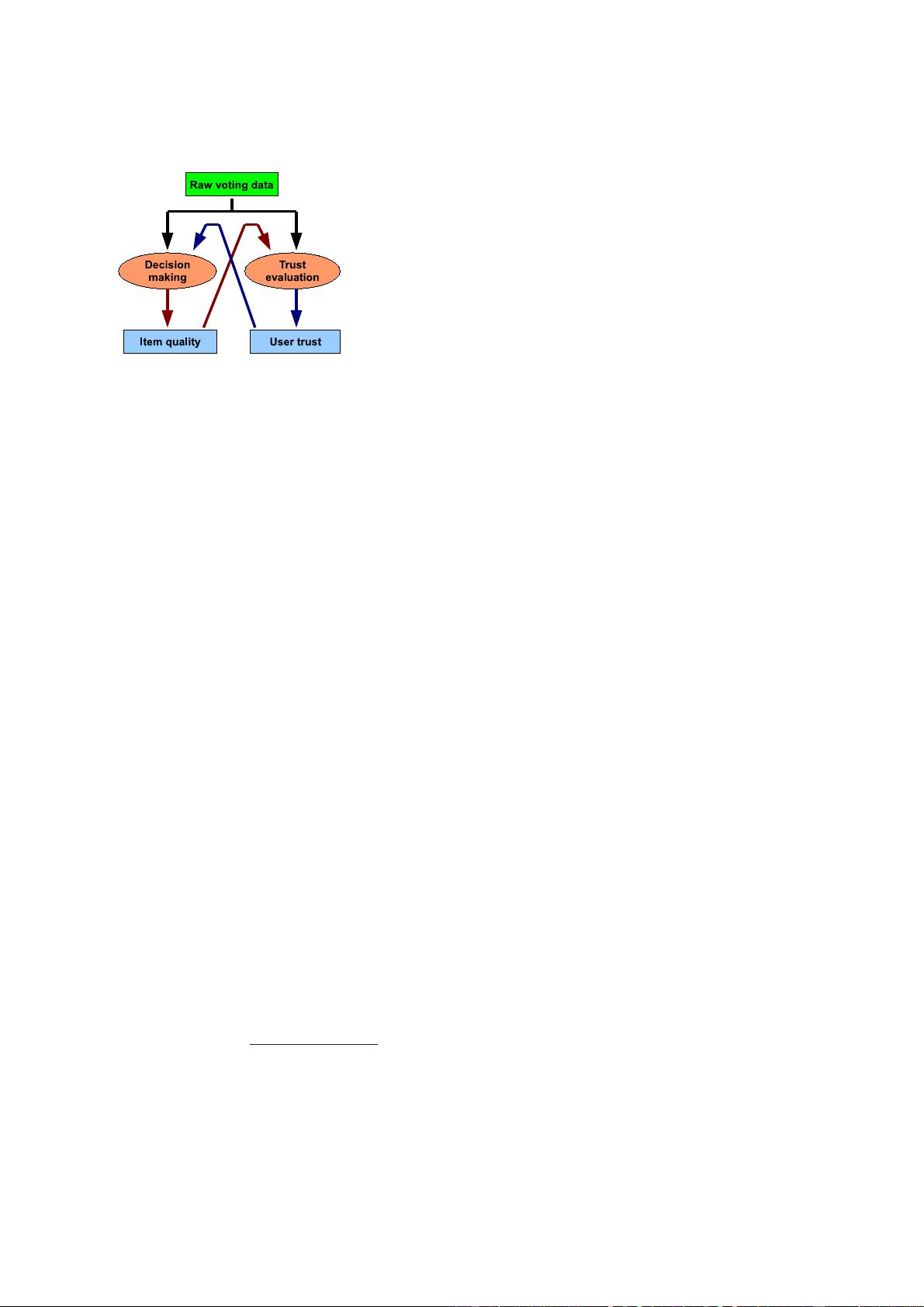

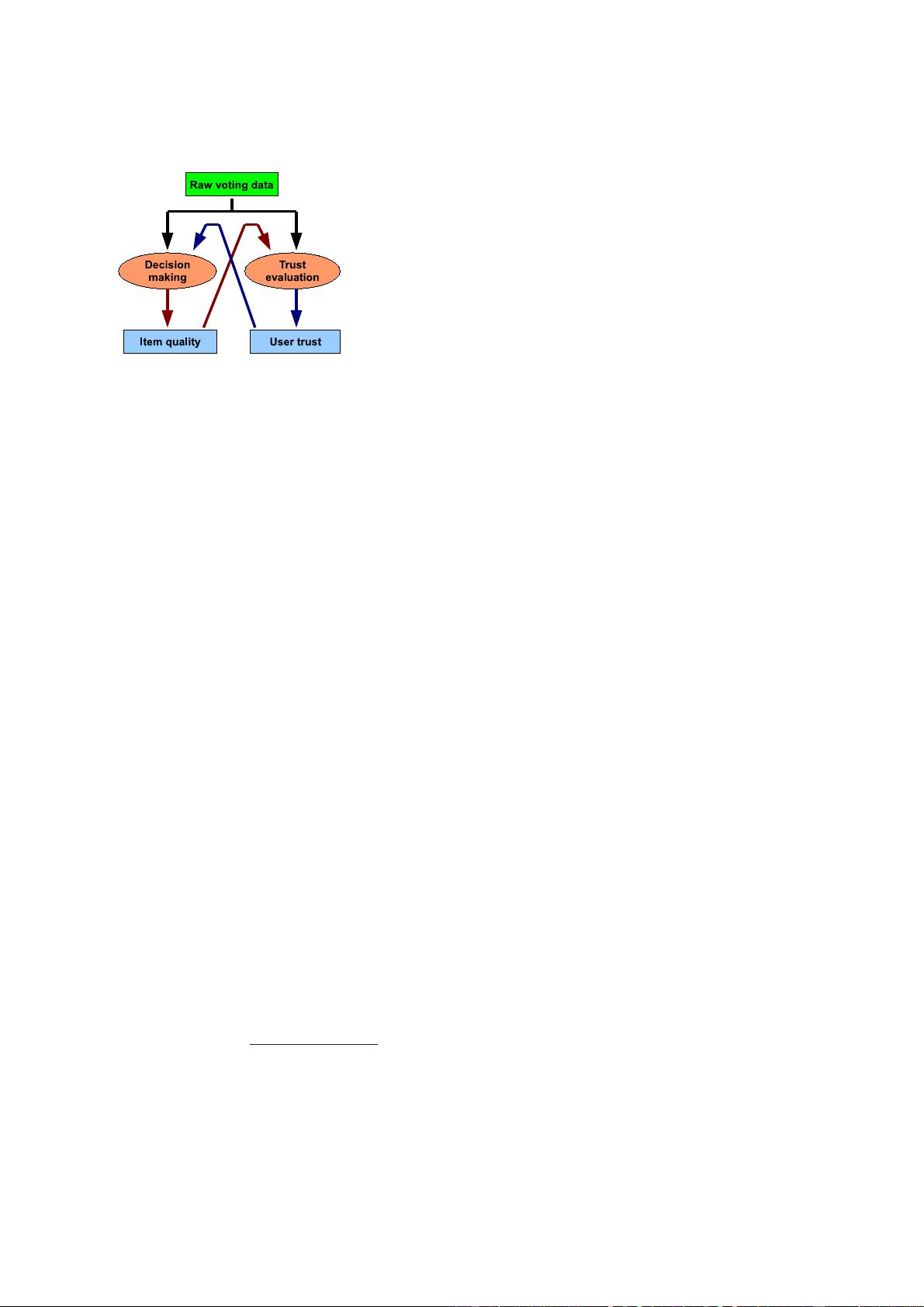

Fig. 2. Architecture of voting systems with trust mechanisms

by the system should agree with the majority’s opinion.

Specifically, for each item, let n

0

denote the number of

users who vote 0 and n

1

denote the number of users who

vote 1. The decision is 0 if n

0

> n

1

and 1 if n

0

< n

1

.

For trust mechanisms, one of the most popular design

is based on beta function. It first counts the number of

honest and dishonest behaviors a user has conducted,

and then calculates the trust value with beta function [3].

If we combine them together, the system is as follows.

• Item quality algorithm: Let U

1

k

and U

0

k

denote

the set of users who vote item I

k

with 1 and 0,

respectively. The trust value of user u

j

is denoted

by T (u

j

). Then, the quality of item I

k

, denoted by

Q(I

k

), is calculated with majority voting as

Q(I

k

) =

(

1 if G(I

k

) ≥ B(I

k

),

0 if G(I

k

) < B(I

k

).

(1)

where

G(I

k

) =

X

u

j

∈U

1

k

T (u

j

) ; B(I

k

) =

X

u

i

∈U

0

k

T (u

i

). (2)

In this calculation, the system utilizes trust values

as weight factors, and compares the summation of

the weights of the users who vote 0 and that of the

users who vote 1.

• User trust algorithm: When a vote is given to item

I

k

by user u

j

, if the vote value is the same as the

item quality Q(I

k

) identified by the item quality

algorithm, this vote is considered as an honest vote.

Otherwise, it is considered as a dishonest vote. For a

user u

j

, the system calculates the number of honest

votes given by this user, denoted by H(u

j

), and

the number of dishonest votes given by this user,

denoted by D(u

j

). The trust value of user u

j

is

calculated with beta function [3] as:

T (u

j

) =

H(u

j

) + 1

H(u

j

) + D(u

j

) + 2

. (3)

User trust and item quality is calculated in an iterative

way as follows.

1) For each user u

j

, initialize T (u

j

) as 0.5.

2) For each item I

j

, update G(I

j

) and B(I

j

) using (2),

and calculate Q(I

j

) using (1).

3) For each user u

j

, update H(u

j

) and D(u

j

), and

calculate T (u

j

) using (3).

4) If T (u

j

) for any user u

j

changes in step 3, go to

step 2; else END.

2.3 Related work

Online voting systems have been adopted in many real-

life systems, including Google, Digg, eBay, Amazon,

IMDB and so on. Most of them simply present raw

data or conduct straightforward aggregation (e.g. the

average voting scores). Two types of attacks have been

identified in current online voting systems. In the first

type of attacks, an attacker can register many user IDs

[4] and then manipulate the quality of the target item by

inserting dishonest votes directly. This is often referred to

as the bad-mouthing attack [20] [27], and we use RepBad

to denote this attack.

Trust mechanisms are developed to defeat the bad-

mouthing attack by calculating and utilizing the trust

values of the voters. Reputation and trust have been

well studied in P2P area. Representative schemes include

EigenTrust [6] for reputation management in P2P net-

works, PET [15] for reputation and risk evaluation in P2P

resource sharing, Credence [16] for identifying fake files

in P2P file-sharing systems, PeerTrust [12] for supporting

reputation-based trust for P2P electronic communities,

PowerTrust [29] and GossipTrust [33] for trust aggrega-

tion in P2P networks. Ooi et al. [5] examined the issue

of managing trust in P2P systems. Vu and Aberer [30]

proposed a probabilistic framework in the computation

of quality and trust in decentralized systems. Damiani

et al. [9] proposed digital reputations that can be seen as

the P2P counterparts of client-server digital certificates.

Sensoy et al. [22] proposed an ontology-based service

representation and selection approach. Khambatti et al.

[11] proposed a role-based trust model f or P2P commu-

nities and dynamic coalitions. Fan et al. [14] proposed

a reputation system based on exponential smoothing

that can serve as a sustained incentive mechanism for

the sellers. A good survey about trust and reputation

systems for online service provision can be found in

[26] and a comparison of different rating aggregation

algorithms can be found in [31]. In addition, a lot of

work [13], [21], [24], [25] has discussed t rust negotiation

as an approach for establishing trust in open systems.

Though trust mechanisms make an adversary’s attack

more difficult to succeed, these mechanisms do not pre-

vent the attackers to misuse and abuse the trust evalua-

tion schemes. For example, the attackers can strategically

give honest votes to the items they are not interested

in for the purpose of boosting their trust value, before

giving dishonest votes to the items they really want to

attack. This is the second type of attacks and it is often

referred to as self-promoting [27] or reputation recovery

[20]. In this paper, we use RepSelf to denote it.

To counter RepSelf, the key is an effective identity

management scheme to prevent Sybil attack [4]. It means

limiting the resources can be used by the attackers. Two

kinds of defense schemes are designed. The first kind is

trying to introduce a process to judge whether a user is

a human or a machine. In this process, a task which is

easy to human but hard to machine is introduced, such

as t he reCAPTCHA. The second kind is based on the