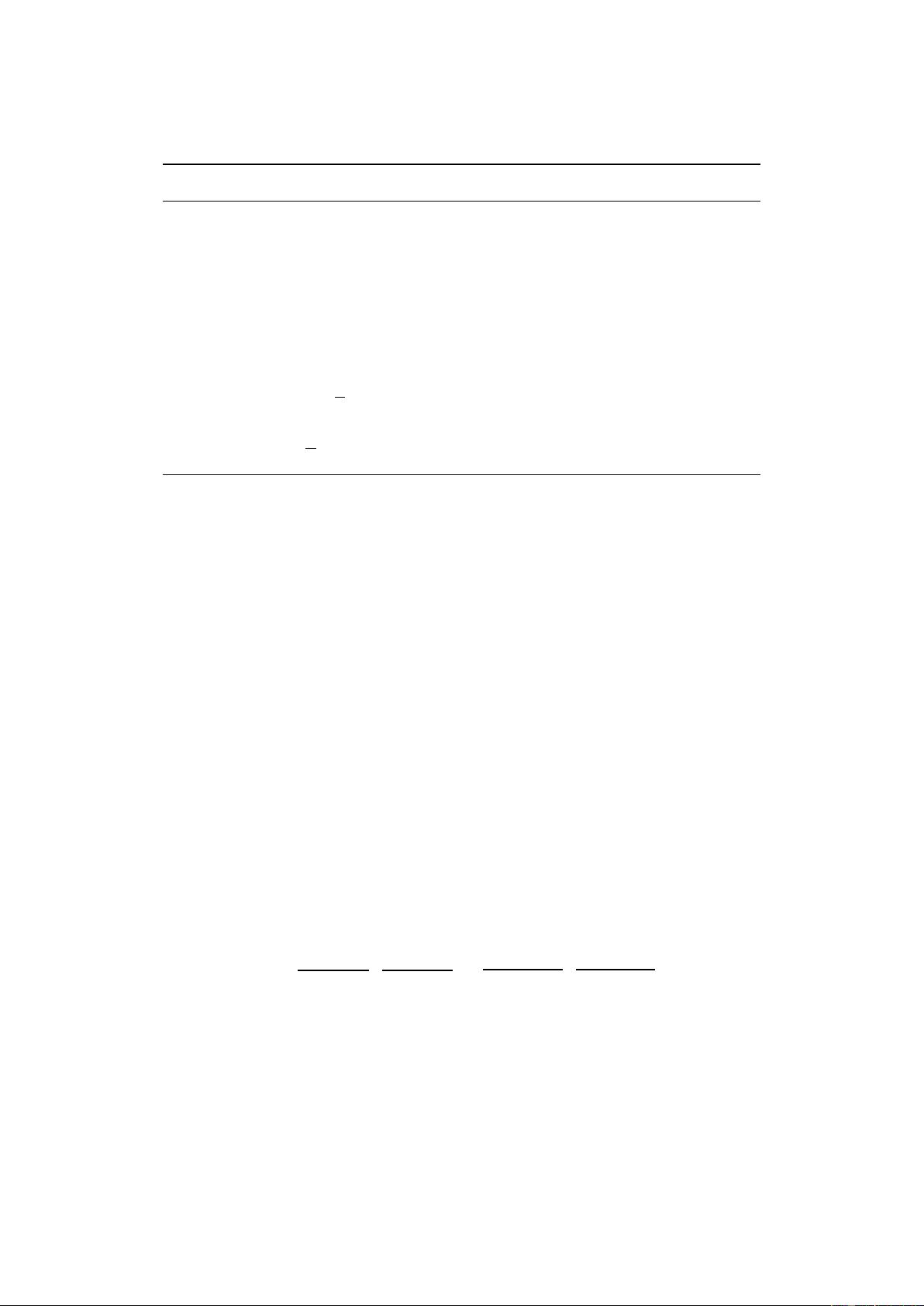

Algorithm 1 WGAN with gradient penalty. We use default values of $\lambda = 10$, $n_{\text{critic}} = 5$, $\alpha = 0.0001$, $\beta_1 = 0$, $\beta_2 = 0.9$.

Require: The gradient penalty coefficient $\lambda$, the number of critic iterations per generator iteration $n_{\text{critic}}$, the batch size $m$, Adam hyperparameters $\alpha, \beta_1, \beta_2$.

Require: initial critic parameters $w_0$, initial generator parameters $\theta_0$.

1: while $\theta$ has not converged do

2: for $t = 1, \ldots, n_{\text{critic}}$ do

3: for $i = 1, \ldots, m$ do

4: Sample real data $x \sim P_r$, latent variable $z \sim p(z)$, a random number $\epsilon \sim U[0, 1]$.

5: $\tilde{x} \leftarrow G_\theta(z)$

6: $\hat{x} \leftarrow \epsilon x + (1 - \epsilon)\tilde{x}$

7: $L^{(i)} \leftarrow D_w(\tilde{x}) - D_w(x) + \lambda(\|\nabla_{\hat{x}} D_w(\hat{x})\|_2 - 1)^2$

8: end for

9: $w \leftarrow \text{Adam}(\nabla_w \frac{1}{m}\sum_{i=1}^{m} L^{(i)}, w, \alpha, \beta_1, \beta_2)$

10: end for

11: Sample a batch of latent variables $\{z^{(i)}\}_{i=1}^{m} \sim p(z)$.

12: $\theta \leftarrow \text{Adam}(\nabla_\theta \frac{1}{m}\sum_{i=1}^{m} -D_w(G_\theta(z)), \theta, \alpha, \beta_1, \beta_2)$

13: end while
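
The inner loop of Algorithm 1 (lines 3 to 8, plus the batch average that line 9 differentiates) can be sketched in plain Python. This is a minimal illustration and not the reference implementation: the function name `critic_batch_loss` and the callables `critic`, `critic_grad`, and `generator` are hypothetical, and the critic's input gradient is assumed to be available in closed form, whereas a real implementation would obtain it by automatic differentiation.

```python
import math
import random


def critic_batch_loss(critic, critic_grad, generator, real_batch, latent_batch,
                      lam=10.0, rng=random):
    """Mean of the per-sample losses L^(i) from lines 3-8 of Algorithm 1.

    critic(x) returns a scalar, critic_grad(x) returns the gradient of the
    critic with respect to its input (assumed analytic here), and
    generator(z) returns a sample. All three are illustrative stand-ins.
    """
    losses = []
    for x, z in zip(real_batch, latent_batch):
        eps = rng.random()                      # line 4: eps ~ U[0, 1]
        x_tilde = generator(z)                  # line 5: generated sample
        # line 6: random point on the segment between x and x_tilde
        x_hat = [eps * a + (1.0 - eps) * b for a, b in zip(x, x_tilde)]
        grad_norm = math.sqrt(sum(g * g for g in critic_grad(x_hat)))
        # line 7: Wasserstein term plus the two-sided gradient penalty
        losses.append(critic(x_tilde) - critic(x) + lam * (grad_norm - 1.0) ** 2)
    # the average whose gradient w.r.t. w is passed to Adam on line 9
    return sum(losses) / len(losses)
```

In a full training loop this quantity would be differentiated with respect to the critic parameters $w$ and passed to Adam $n_{\text{critic}}$ times before each generator update; the optimizer step is deliberately left out of the sketch.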

critic. In each case, the critic trained with weight clipping ignores higher moments of the data distribution and instead models very simple approximations to the optimal functions. In contrast, our approach does not suffer from this behavior.

3.2 Exploding and vanishing gradients

We observe that the WGAN optimization process is difficult because of interactions between the weight constraint and the cost function, which result in either vanishing or exploding gradients without careful tuning of the clipping threshold $c$.

To demonstrate this, we train WGAN on the Swiss Roll toy dataset, varying the clipping threshold $c$ in $[10^{-1}, 10^{-2}, 10^{-3}]$, and plot the norm of the gradient of the critic loss with respect to successive layers of activations. Both generator and critic are 12-layer ReLU MLPs without batch normalization. Figure 1b shows that for each of these values, the gradient either grows or decays exponentially as we move farther back in the network. We find our method results in more stable gradients that neither vanish nor explode, allowing training of more complicated networks.
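
The exponential growth or decay can be reproduced in a deliberately crude toy model. The sketch below is not the Swiss Roll experiment: it assumes, purely for illustration, a 12-layer linear network (ReLUs ignored) of hypothetical width 64 in which every weight sits exactly at the clipping bound $+c$. Under those assumptions each backward step multiplies the gradient norm by $64c$, so the norm grows for $c = 10^{-1}$ and shrinks for $c = 10^{-2}$ and $c = 10^{-3}$.

```python
import math


def backward_gradient_norms(c, width=64, depth=12):
    """Gradient norms w.r.t. activations, from the last hidden layer back to the input.

    Toy assumptions (not from the paper's experiment): linear layers only,
    every weight equal to +c, scalar output, all layers of the same width.
    """
    # Gradient of the scalar output w.r.t. the last hidden layer is the output weight row.
    grad = [c] * width
    norms = [math.sqrt(sum(g * g for g in grad))]
    for _ in range(depth - 1):
        # Backpropagate through one layer: multiply by W^T, where every entry of W is c.
        s = c * sum(grad)
        grad = [s] * width
        norms.append(math.sqrt(sum(g * g for g in grad)))
    return norms


for c in (1e-1, 1e-2, 1e-3):
    norms = backward_gradient_norms(c)
    print(f"c={c:g}: last hidden layer {norms[0]:.3e}, input {norms[-1]:.3e}")
```

The per-layer factor depends on the product of $c$ and the layer width, which is one way to see why a single clipping threshold is hard to tune across architectures.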

4 Gradient penalty

We now propose an alternative way to enforce the Lipschitz constraint. A differentiable function is 1-Lipschitz if and only if it has gradients with norm at most 1 everywhere, so we consider directly constraining the gradient norm of the critic’s output with respect to its input. To circumvent tractability issues, we enforce a soft version of the constraint with a penalty on the gradient norm for random samples $\hat{x} \sim P_{\hat{x}}$. Our new objective is

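$$L = \underbrace{\mathbb{E}_{\tilde{x} \sim P_g}[D(\tilde{x})] - \mathbb{E}_{x \sim P_r}[D(x)]}_{\text{Original critic loss}} + \underbrace{\lambda\, \mathbb{E}_{\hat{x} \sim P_{\hat{x}}}\left[(\|\nabla_{\hat{x}} D(\hat{x})\|_2 - 1)^2\right]}_{\text{Our gradient penalty}}. \tag{3}$$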
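
To make the penalty term of Eq. (3) concrete, the following sketch estimates it by Monte Carlo for an arbitrary scalar critic. It is an illustration under stated assumptions: the helper names `grad_norm_fd`, `gradient_penalty`, and `make_scaled_norm` are hypothetical, and the input gradient is approximated by central finite differences only to keep the example dependency-free, where a practical implementation would use automatic differentiation.

```python
import math


def grad_norm_fd(critic, x, h=1e-5):
    """L2 norm of the gradient of `critic` at x, via central finite differences."""
    sq = 0.0
    for i in range(len(x)):
        up = list(x)
        up[i] += h
        dn = list(x)
        dn[i] -= h
        sq += ((critic(up) - critic(dn)) / (2.0 * h)) ** 2
    return math.sqrt(sq)


def gradient_penalty(critic, x_hats, lam=10.0):
    """Monte Carlo estimate of lam * E[(||grad D(x_hat)||_2 - 1)^2]."""
    total = sum((grad_norm_fd(critic, x) - 1.0) ** 2 for x in x_hats)
    return lam * total / len(x_hats)


def make_scaled_norm(k):
    """Toy critic D(x) = k * ||x||_2, whose gradient norm is k away from the origin."""
    return lambda x: k * math.sqrt(sum(v * v for v in x))


points = [[1.0, 2.0], [-3.0, 0.5], [0.2, -0.7]]
for k in (0.5, 1.0, 2.0):
    print(k, gradient_penalty(make_scaled_norm(k), points))
```

Because the penalty is two-sided, the toy critic with gradient norm 0.5 is penalized (about $10 \cdot 0.25 = 2.5$) as well as the one with gradient norm 2 (about $10$), while the unit-norm critic incurs a penalty close to zero.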

Sampling distribution. We implicitly define $P_{\hat{x}}$ by sampling uniformly along straight lines between pairs of points sampled from the data distribution $P_r$ and the generator distribution $P_g$. This is motivated by the fact that the optimal critic contains straight lines with gradient norm 1 connecting coupled points from $P_r$ and $P_g$ (see Proposition 1). Given that enforcing the unit gradient norm constraint everywhere is intractable, enforcing it only along these straight lines seems sufficient and experimentally results in good performance.

Penalty coefficient. All experiments in this paper use $\lambda = 10$, which we found to work well across a variety of architectures and datasets ranging from toy tasks to large ImageNet CNNs.
