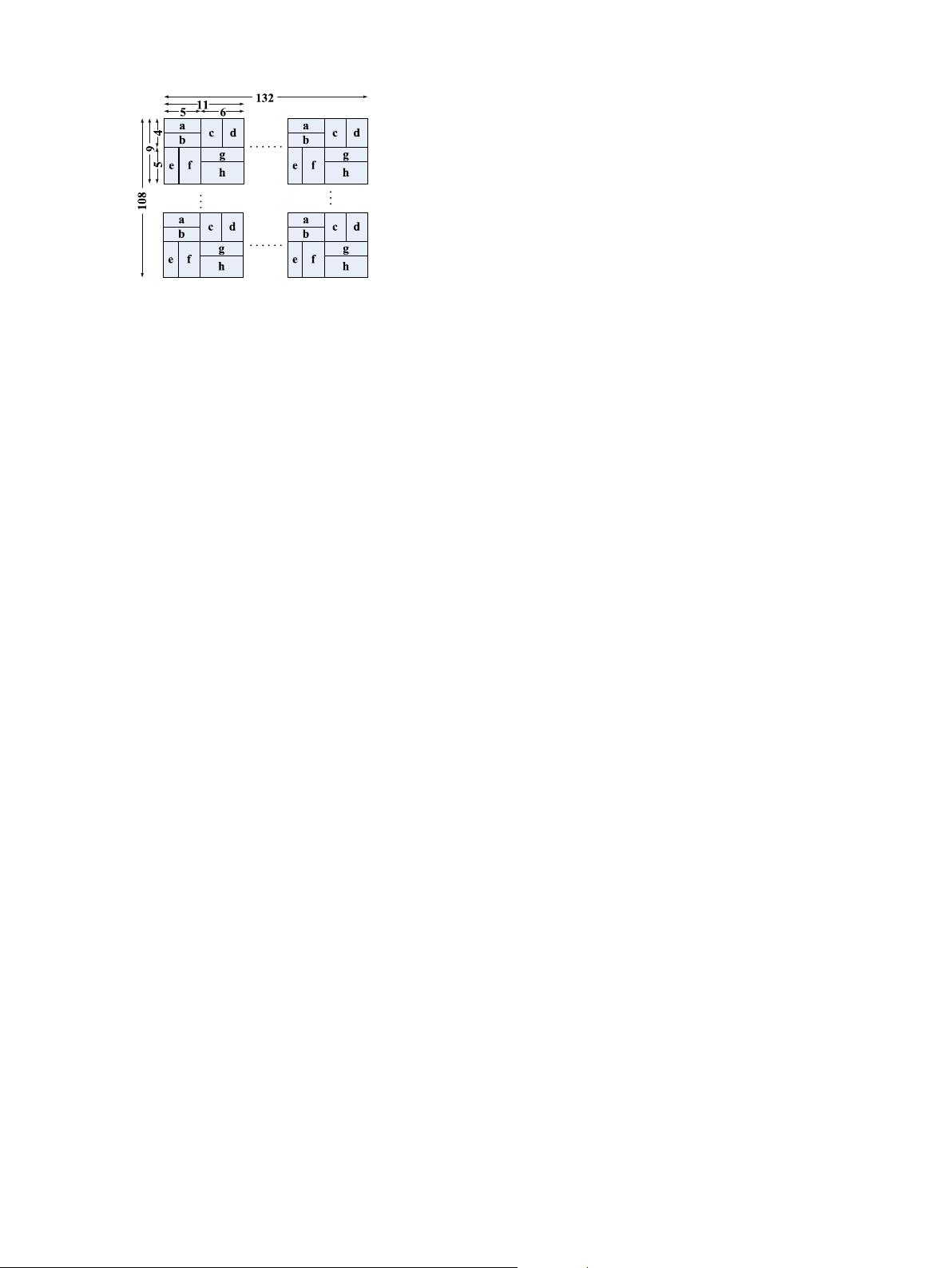

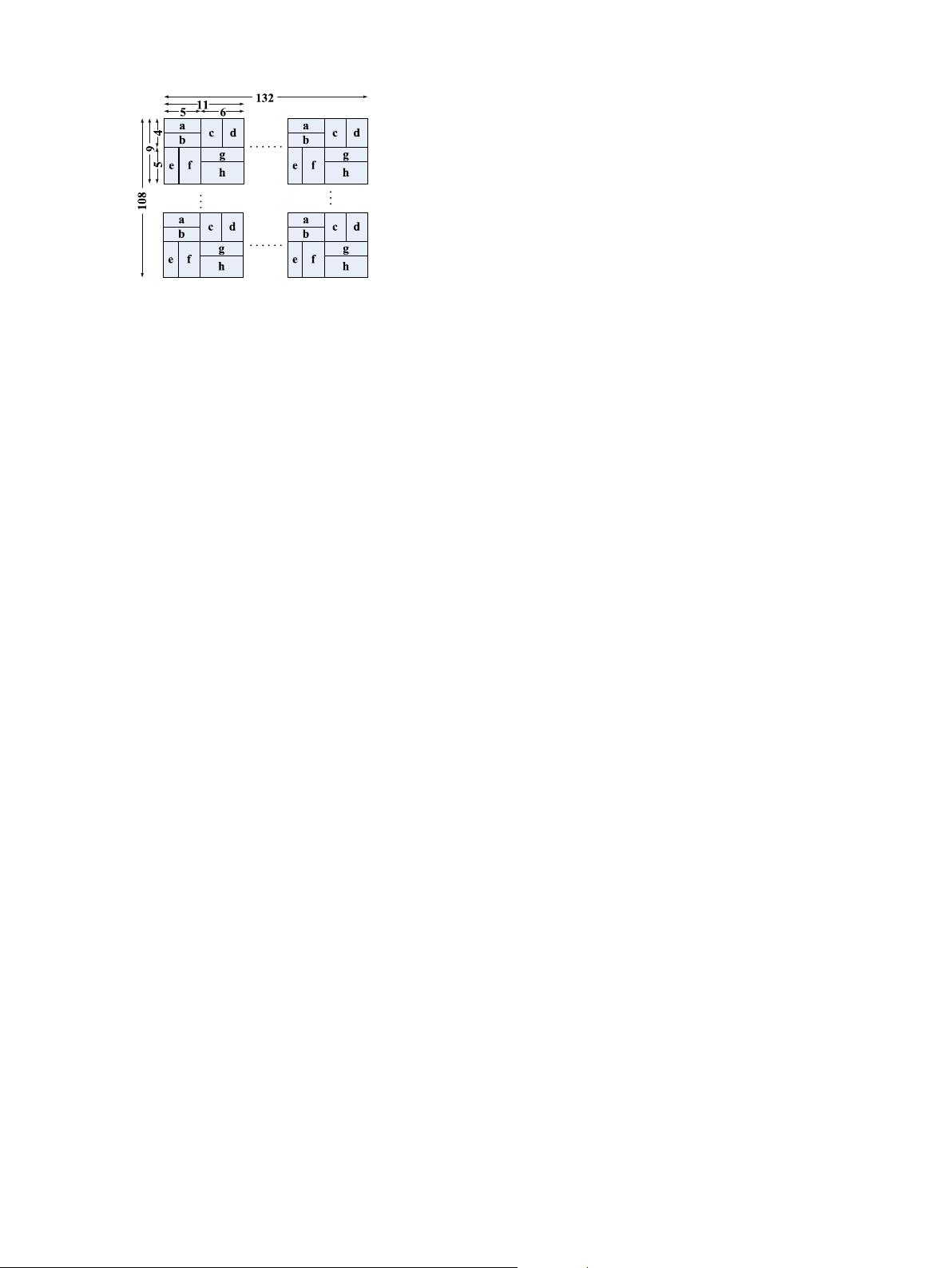

into 3/4 QCIF (108 × 132). The QCIF size has been widely used in the video processing field.

In Refs. [10–12], down-sampling is used to reduce the image resolution to 32 × 32. The fingerprint of a frame consists of two parts: (1) local characteristics and (2) a global characteristic, the confidence of the frame. The first part comprises the average values of local luminance and the multi-resolution differentials; each average value or differential is called a frame element. However, we believe that down-sampling the whole image to 32 × 32 eliminates or obscures most of the information, so the extracted fingerprint is insufficient to represent the whole image. We therefore borrow the ideas from the above references but extract the fingerprint from the 3/4 QCIF image. The details are shown in Fig. 2 (within each 9 × 11 sub-region, a to h are the average values of local pixels). The frame elements of each sub-region are extracted as follows: (1) the mean element of the 9 × 11 sub-region; (2) four differential elements, a − b, c − d, e − f and g − h. Since the 108 × 132 image contains 12 × 12 = 144 such sub-regions, there are 720 elements in total: 144 mean elements, marked as A elements, and 576 differential elements, marked as D elements.
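The element layout can be sketched in Python as follows. This is a minimal sketch, assuming the 3/4 QCIF luminance frame is given as a 108 × 132 list of lists. Fig. 2 defines where the local averages a to h are taken; because that figure is not reproduced in this text, the half and quarter rectangles used below are a hypothetical placement, chosen only to show how 144 A elements and 576 D elements arise. The function names are likewise illustrative.

```python
from statistics import fmean

ROWS, COLS = 108, 132      # 3/4 QCIF frame: rows x columns
SUB_H, SUB_W = 9, 11       # one sub-region: 9 x 11 pixels -> 12 x 12 = 144 sub-regions

def region_mean(img, r0, r1, c0, c1):
    """Average luminance of the rectangle rows r0..r1-1, columns c0..c1-1."""
    return fmean(img[r][c] for r in range(r0, r1) for c in range(c0, c1))

def frame_elements(img):
    """Return (A, D): 144 mean elements and 576 differential elements.

    HYPOTHETICAL placement of a..h (the real layout is defined in Fig. 2):
      a/b = left/right halves, c/d = top/bottom halves,
      e/f = top-left/bottom-right quarters, g/h = top-right/bottom-left quarters.
    """
    A, D = [], []
    for r0 in range(0, ROWS, SUB_H):
        for c0 in range(0, COLS, SUB_W):
            r1, c1 = r0 + SUB_H, c0 + SUB_W
            rm, cm = r0 + SUB_H // 2, c0 + SUB_W // 2    # split row / column
            A.append(region_mean(img, r0, r1, c0, c1))   # mean (A) element
            a, b = region_mean(img, r0, r1, c0, cm), region_mean(img, r0, r1, cm, c1)
            c, d = region_mean(img, r0, rm, c0, c1), region_mean(img, rm, r1, c0, c1)
            e, f = region_mean(img, r0, rm, c0, cm), region_mean(img, rm, r1, cm, c1)
            g, h = region_mean(img, r0, rm, cm, c1), region_mean(img, rm, r1, c0, cm)
            D.extend([a - b, c - d, e - f, g - h])       # differential (D) elements
    return A, D

# Usage: a synthetic horizontal ramp stands in for a real luminance frame.
img = [[(2 * col) % 256 for col in range(COLS)] for _ in range(ROWS)]
A, D = frame_elements(img)
print(len(A), len(D), len(A) + len(D))   # 144 576 720
```

Whatever the exact positions of a to h, the counts follow from the grid alone: one mean and four differences per sub-region, times 144 sub-regions.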

For a large video database, the elements are normally stored in floating-point format. This consumes a lot of storage space and is therefore unfavorable for video copyright authentication, tracing and retrieval. To improve the situation, the elements are commonly converted to integers. In Refs. [8,16,17], the hash value is obtained by binarizing these elements. In Refs. [10–12], these elements are processed by ternary quantization. Although these methods save storage and improve computational efficiency, they correspondingly reduce the accuracy of authentication. Compared with those methods, the quaternary (four-level) quantization proposed in this paper can deliver more accurate results.

Let $A_i$ denote the value of the $i$-th A element, where $i = 1, 2, \ldots, 144$ is the dimension index of A. $A_i$ is quantized into a quaternary value $x_i$ by Eq. (1):

$$
x_i =
\begin{cases}
3, & A_i - 128 > \mathrm{Th}_A \\
2, & 0 < A_i - 128 \le \mathrm{Th}_A \\
1, & -\mathrm{Th}_A < A_i - 128 \le 0 \\
0, & A_i - 128 \le -\mathrm{Th}_A
\end{cases}
\tag{1}
$$

The threshold $\mathrm{Th}_A$ could simply be defined as $(\max(A_i) - \min(A_i))/4$. However, this approach merely divides the range between the maximum and minimum of $A_i$ into four equal intervals. If an abrupt value occurs among the A elements, such as a large gap between the maximum and the second-largest value, or between the minimum and the second-smallest value, $\mathrm{Th}_A$ becomes large and almost all A elements fall into the two middle levels of Eq. (1); that is, they are effectively quantized into binary values. To avoid this situation, the threshold $\mathrm{Th}_A$ in this paper is set dynamically following Refs. [11,12]:

1. Let $a_i = \mathrm{abs}(A_i - 128)$, where $\mathrm{abs}(\cdot)$ returns the absolute value, and sort $a_i$ in ascending order. Let $a_k = \{a_1, a_2, \ldots, a_N\}$ denote the values sorted in ascending order. Note that $k = 1, 2, \ldots, 144$ is an index representing the order of the sorted values and differs from the dimension index $i$.
2. The threshold is $\mathrm{Th}_A = a_k$, where $k = \lfloor 0.25 N \rfloor$ and $N = 144$ (i.e., $k = 36$).
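The effect of the two threshold rules on Eq. (1) can be illustrated with a short Python sketch. The synthetic A vector (143 moderate values plus one bright outlier) and the function names are hypothetical; the adaptive rule follows steps 1 and 2 above, with the 1-based index $k$ converted to a 0-based list index.

```python
import math
from collections import Counter

def fixed_threshold(A):
    """Naive rule: Th_A = (max(A_i) - min(A_i)) / 4."""
    return (max(A) - min(A)) / 4

def adaptive_threshold(A):
    """Dynamic rule: Th_A = a_k, with a sorted ascending and k = floor(0.25 * N)."""
    a = sorted(abs(v - 128) for v in A)   # a_i = abs(A_i - 128), ascending
    k = math.floor(0.25 * len(a))         # 1-based index as in the text
    return a[k - 1]                       # 0-based list access

def quantize_A(A, th):
    """Eq. (1): map each A_i to a quaternary value in {0, 1, 2, 3}."""
    levels = []
    for v in A:
        d = v - 128
        if d > th:
            levels.append(3)
        elif d > 0:          # 0 < d <= th
            levels.append(2)
        elif d > -th:        # -th < d <= 0
            levels.append(1)
        else:                # d <= -th
            levels.append(0)
    return levels

# Hypothetical A vector: 143 values in [100, 156] plus one bright outlier (255).
A = [100 + (i % 57) for i in range(143)] + [255]

for rule in (fixed_threshold, adaptive_threshold):
    th = rule(A)
    print(rule.__name__, th, sorted(Counter(quantize_A(A, th)).items()))
# fixed_threshold 38.75 [(1, 87), (2, 56), (3, 1)]
# adaptive_threshold 7 [(0, 66), (1, 21), (2, 14), (3, 43)]
```

With the fixed rule, the single outlier inflates the threshold to 38.75, so every other value lands on level 1 or 2 (a sign-only, effectively binary code); the adaptive rule yields a threshold of 7 and uses all four levels.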

Similarly, the D elements, with dimension indices $i = 145, \ldots, 720$, are quantized into quaternary values $x_i$:

$$
x_i =
\begin{cases}
3, & D_i > \mathrm{Th}_D \\
2, & 0 < D_i \le \mathrm{Th}_D \\
1, & -\mathrm{Th}_D < D_i \le 0 \\
0, & D_i \le -\mathrm{Th}_D
\end{cases}
\tag{2}
$$

The threshold $\mathrm{Th}_D$ is obtained as follows:

1. Let $d_i = \mathrm{abs}(D_i)$, sort $d_i$ in ascending order, and let $d_k = \{d_1, d_2, \ldots, d_N\}$ denote the values sorted in ascending order. Note that $k = 1, 2, \ldots, 576$ is an index representing the order of the sorted values and differs from the dimension index $i$.
2. The threshold is $\mathrm{Th}_D = d_k$, where $k = \lfloor 0.25 N \rfloor$ and $N = 576$ (i.e., $k = 144$).
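Because Eqs. (1) and (2) differ only in the center (128 for A, 0 for D) and in $N$, both can share one helper. The following is a minimal sketch, assuming A and D are plain Python lists in the dimension order described above; `quaternary` and `quaternary_fingerprint` are hypothetical names.

```python
import math

def quaternary(values, center=0.0):
    """Quantize values as in Eqs. (1)/(2): center=128 for A elements, 0 for D elements.

    The threshold is the k-th smallest |v - center|, k = floor(0.25 * N) (1-based).
    """
    if len(values) < 4:
        raise ValueError("need at least 4 values so that k >= 1")
    dev = [v - center for v in values]
    s = sorted(abs(d) for d in dev)
    th = s[math.floor(0.25 * len(s)) - 1]
    # 3: d > th; 2: 0 < d <= th; 1: -th < d <= 0; 0: d <= -th
    return [3 if d > th else 2 if d > 0 else 1 if d > -th else 0 for d in dev]

def quaternary_fingerprint(A, D):
    """Dimensions 1..144 come from A (center 128), 145..720 from D (center 0)."""
    if len(A) != 144 or len(D) != 576:
        raise ValueError("expected 144 A elements and 576 D elements")
    return quaternary(A, 128) + quaternary(D, 0)

print(quaternary([-3, -2, -1, 0, 1, 2, 3, 4]))  # [0, 0, 0, 1, 2, 3, 3, 3]
```

In the small example, $N = 8$ gives $k = 2$, so the threshold is the second-smallest absolute value, 1; for the D elements, $N = 576$ selects the 144th smallest $|D_i|$.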

If each of the 720 elements is stored directly as a quaternary value in {3, 2, 1, 0}, one byte per element, a scene frame occupies 720 bytes, and the storage required for fingerprint data becomes huge for a large video database used in video authentication. Since a quaternary value needs only 2 bits, every four consecutive values are packed into 8 bits, called a word, by storing the four values in binary form. Each frame therefore yields 720/4 = 180 words (1440 bits).

Let $\mathrm{word}_i$, $i = 1, 2, \ldots, 180$, denote the encoded value of the $i$-th four-dimensional unit, calculated as

$$
\mathrm{word}_i = 4^3 x_{4(i-1)+1} + 4^2 x_{4(i-1)+2} + 4 x_{4(i-1)+3} + x_{4(i-1)+4}
\tag{3}
$$

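Obviously, $\mathrm{word}_i \in [0, 255]$, since its maximum is $3 \cdot (4^3 + 4^2 + 4 + 1) = 255$. Because four quaternary values are encoded into one byte, the method saves $3/4 = 75\%$ of the storage space. Thus, 180 words are extracted from each scene frame.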
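Eq. (3) is a base-4 packing (2 bits per value), so it can be written with shifts and reversed losslessly. The sketch below assumes the 720 quaternary values are already ordered by dimension index; `pack_words` and `unpack_words` are hypothetical helpers, not part of the original method.

```python
def pack_words(x):
    """Eq. (3): word_i = 4^3 x_{4(i-1)+1} + 4^2 x_{4(i-1)+2} + 4 x_{4(i-1)+3} + x_{4(i-1)+4}."""
    if len(x) % 4 != 0:
        raise ValueError("length must be a multiple of 4")
    if any(v not in (0, 1, 2, 3) for v in x):
        raise ValueError("values must be quaternary (0..3)")
    # j is the 0-based position of x_{4(i-1)+1}; each group of four fills one byte.
    return bytes(64 * x[j] + 16 * x[j + 1] + 4 * x[j + 2] + x[j + 3]
                 for j in range(0, len(x), 4))

def unpack_words(words):
    """Inverse of pack_words: read four 2-bit fields per byte, most significant first."""
    return [(w >> shift) & 0b11 for w in words for shift in (6, 4, 2, 0)]

x = [3, 2, 1, 0] * 180            # a 720-value quaternary fingerprint
words = pack_words(x)
print(len(words), words[0])       # 180 228
assert unpack_words(words) == x   # lossless round trip
```

The first word is $3 \cdot 64 + 2 \cdot 16 + 1 \cdot 4 + 0 = 228$, and the 720 values fit in 180 bytes instead of 720.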

3. Video fingerprint extraction based on scene frame

To compare our fingerprint extraction method with other extraction methods, we first review existing video fingerprint extraction methods.

3.1. Existing extraction methods for video fingerprints

Recently, fingerprinting technology has been widely used in copyright authentication, copy detection, multimedia indexing and similar fields. The video fingerprint algorithms proposed by researchers can be classified into four categories [8,14,18]: color-space-based, temporal, spatial and spatial–temporal.

The color-space-based approach extracts the fingerprint from the statistics of color characteristics, using the color histogram over the spatial–temporal domain of the video [14]. With 24-bit true color (2^24 = 16,777,216 possible colors), these statistics are too large to achieve a reasonable fingerprint extraction speed. Moreover, colors can change considerably across different video formats [8]. Furthermore, color-space-based fingerprint extraction cannot be applied to black-and-white videos. For these reasons, this approach is not widely used.

The temporal approach extracts temporal characteristics from the video frame sequence [13]. It can be applied to long videos but not to short segments. Consequently, it is not suitable for online applications, which are dominated by short videos.

Fig. 2. Extraction of frame fingerprint elements: various average and difference elements are used to create the complete frame fingerprint.

J. Mao et al. / Neurocomputing 173 (2016) 2022–2032