Theorem 1. Let L

1

, L

2

, L

3

and L

4

be the number of examples of Type I,

Type II, Type III and Type IV respectively in the up-to-date chunk.

Then

L

1

p

g

c

1

l n

L

2

pð1

g

c

1

Þl n

L

3

p

g

c

1

ð1lÞn

L

4

pð1

g

c

1

Þð1lÞn ð1Þ

where

g

4 0 is a constant coefficient.

Proof. Recall that stream S flows at a speed of n examples

per second, the concept drifting probability is c, and the labeling

rate is l. The number of target domain examples is inversely

proportional to the concept drifting rate c with a coefficient of

g

,

so it can be easily estimated that

g

c

1

n examples in the up-to-

date chunk have the same distribution as the testing data.

The remaining ð1

g

c

1

Þn examples have a similar distribution

to the testing examples. From the estimates the theorem follows

immediately. &

Learning priority: Not all the four types of training examples

have to be used in model construction. For example, consider a

data stream where the concept drifting is low and the labeling

rate is high, the training chunk will have a large portion of Type I

examples. In this case, we are able to build a satisfactory model by

training only on the Type I examples. We observe that the four

types of training examples have the following learning priorities.

Observation 1. The learning priority of the four types of training

examples is

Type I 4 Type III4 Type II 4 Type IV ð2Þ

What is the intuition behind Observation 1? Generally, exam-

ples from the target domain (Types I and III) are capable of

capturing the genuine concept of the testing data, and thus have a

high priority than examples from similar domains (Types II

and IV). Besides, since Type I examples are labeled, they have a

higher priority than Type III examples. Similarly, Type II examples

have a higher priority than Type IV examples.

Based on Observation 1, when a particular type dominates the

training examples, examples with lower priorities will not be

used for training. For example, if Type III dominates the training

examples, only Type I and Type III examples will be used for

training. This is because Type I examples have a higher priority

than Type III examples, and the remaining two types have lower

priorities. By doing so, we gain in efficiency by building a simple

model, comparing to a very complex model if we have to learn

from all four types of training examples. On the other hand, the

most informative examples are utilized in model construction and

the learning accuracy is not sacrificed.

Learning cases: Aiming at both accuracy and efficiency in

learning prediction models, we categorize learning from data

streams into the following four cases:

Case 1: Type I dominates. When labeling rate is high and the

concept drifting probability is low, Type I dominates the

training examples. In this case, we can train a satisfactory

model by using only Type I examples.

Case 2: Type III dominates. When both labeling rate and

concept drifting probability are low, Type III dominates the

training examples. According to the learning priority, it is

necessary to combine both Type I and Type III examples for

training.

Case 3: Type II dominates. When both labeling rate and

concept drifting probability are high, Type II dominates the

training examples, and we will use Type I, Type II and Type III

examples for training.

Case 4: Type IV dominates. When labeling rate is low and the

concept drifting probability is high, Type IV dominates the

training examples. This is the most difficult case because most

examples are unlabeled and not from the target domain.

According to the learning priority, we need to use all the four

types of training examples for training.

These learning cases are further illustrated in Fig. 3.

3. Learning models

We have introduced the four learning cases. In this section, we

present their corresponding learning models.

Throughout the section, T

1

¼ðx

1

, y

1

Þ, ..., ðx

L

1

, y

L

1

Þ denotes the

set of Type I examples. T

2

¼fðx

L

1

þ 1

, y

L

1

þ 1

Þ, ..., ðx

L

, y

L

Þg denotes the

set of Type II examples, where L ¼ L

1

þL

2

. T

3

¼fx

L þ 1

, ..., x

L þ U

g

denotes the set of Type III examples, where U is the set of

unlabeled examples. T

4

¼fx

L þ U þ 1

, ..., x

L þ U þ N

g denotes the set of

Type IV examples, where N is the set of unlabeled examples.

3.1. Case 1: Type I dominates

In this case, Type I examples T

1

dominate the training chunk

and has the highest learning priority. Thus, only T

1

will be used

for training. Formally, to learn from T

1

¼fðx

1

, y

1

Þ, ..., ðx

L

1

, y

L

1

Þg,

a generic SVM model can be trained by maximizing the margin

II

IV

III

IV III

III

IV III

I

II

IV

III

I

III

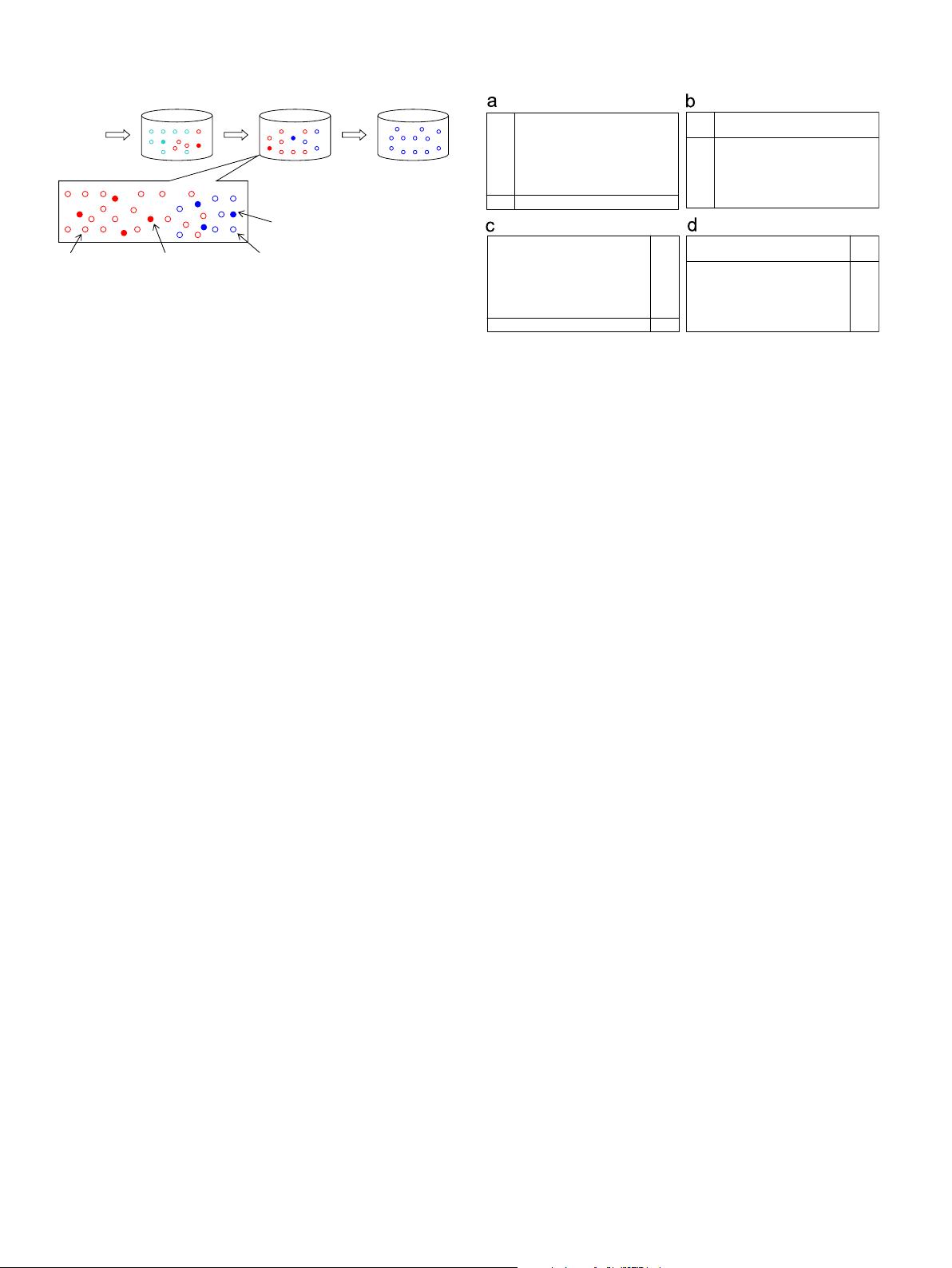

Fig. 3. The proportion of the four types of training examples with respect to

different labeling rate l and concept drifting probability c. (a) l is high and c is low.

Case 1, (b) both l and c are low. Case 2, (c) Both l and l are high. Case 3 and (d) l is

low and c is high. Case 4.

……

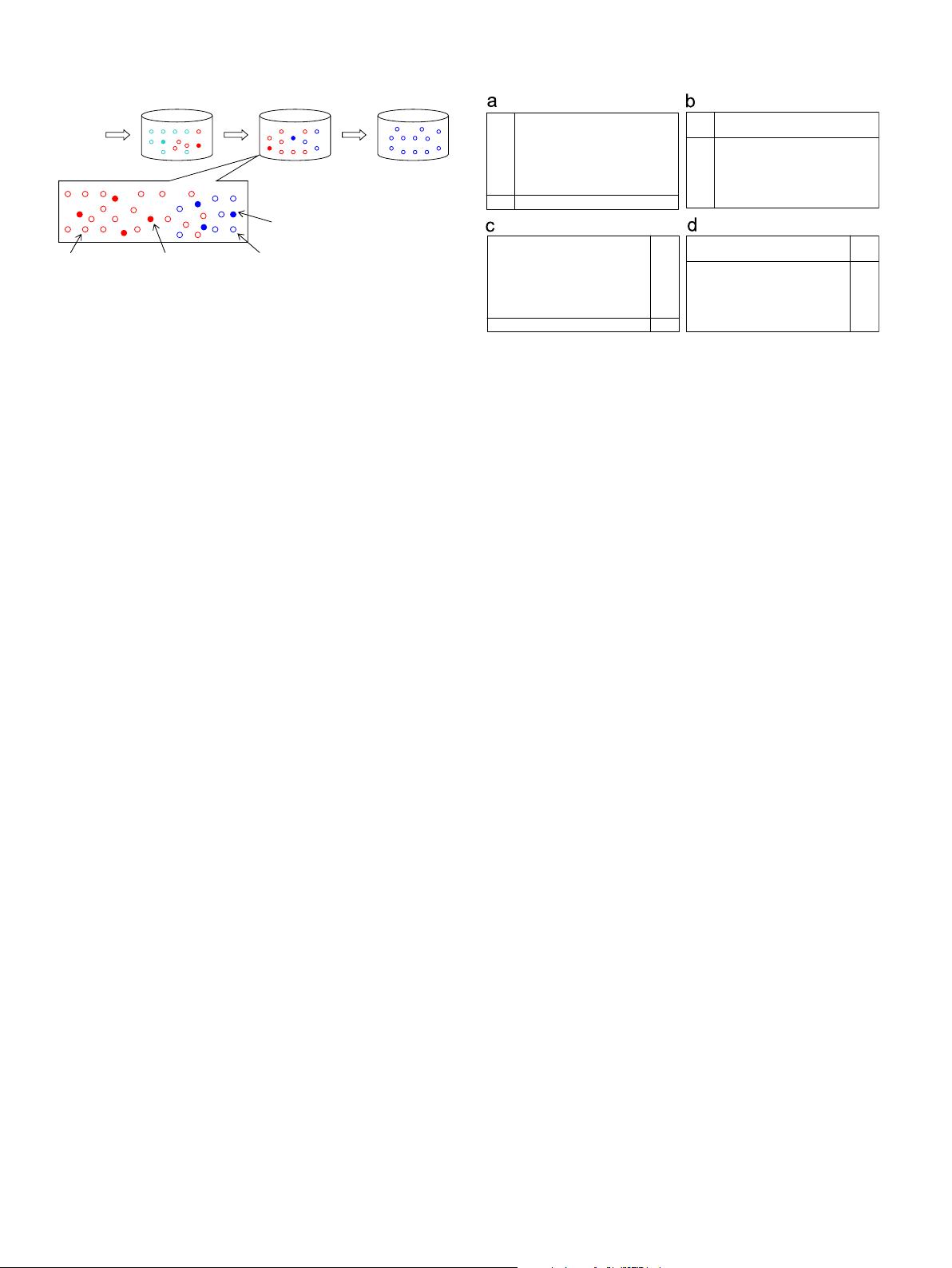

Type I

Type III

Type IV

T

pe II

Test chunk Training chunk

Up-to-date chunk Yet-to-come chunk Historical stream data

Fig. 2. An illustration of the four types of training examples in an up-to-date

training chunk. (For interpretation of the references to color in this figure legend,

the reader is referred to the web version of this article.)

P. Zhang et al. / Neurocomputing 92 (2012) 170–182172