Defeats GAN: A Simpler Model Outperforms in Knowledge Representation Learning

Heng Wang

School of Data and Computer Science

Sun Yat-sen University

Guangzhou, China

e-mail: wangh376@mail2.sysu.edu.cn

Mingzhi Mao

School of Data and Computer Science

Sun Yat-sen University

Guangzhou, China

e-mail: mcsmmz@mail.sysu.edu.cn

Abstract—The goal of knowledge representation learning is to embed entities and relations into a low-dimensional, continuous vector space. How to push a model to its limit and obtain better results is of great significance for applications of knowledge graphs. We propose a simple and elegant method, Trans-DLR, whose main idea is dynamic learning rate control during training. Our method achieves a remarkable improvement over a recent GAN-based method. Moreover, we introduce a new negative sampling trick that corrupts not only entities but also relations, with different probabilities. We also develop an efficient way to speed up the evaluation of the model in link prediction tasks by fully utilizing multiprocessing and parallel computing. Experiments show that our method is effective.

Keywords-knowledge representation; dynamic learning rate; negative sampling; multiprocessing; parallel computing

I. INTRODUCTION

A knowledge graph is a directed graph composed of various kinds of entities in the real world and the relations between them. Typical knowledge graphs include WordNet [1], Freebase [2], and YAGO [3], to name a few. Knowledge graphs play a pivotal role in many NLP applications, such as relation extraction [4], question answering [5], and social network mining [6].

Facts in a knowledge graph are commonly represented as triples (head entity, relation, tail entity), abbreviated as (h, r, t). They are obtained by human labor, rules, or distant supervision [7], and are usually far from complete. Knowledge graph representation aims to represent entities and relations as symbols, numbers, or vectors, which helps in completing missing links and finding new facts for a knowledge graph. Inspired by [8], a great deal of effort has been made to embed entities and relations into a low-dimensional, continuous vector space, such as [9-12], with different loss functions adopted. Let $S$ denote all the triples in a knowledge base. A triple $(h, r, t)$ is positive if $(h, r, t) \in S$, and negative if $(h, r, t) \notin S$. The basic idea behind these models is that the loss of a negative triple should exceed the loss of a positive triple by at least a margin, which is known as margin loss. Readers can refer to Section II for a more detailed introduction.
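The margin idea can be illustrated with a minimal sketch. The function name `margin_loss`, the argument names, and the default margin of 1.0 are assumptions made for illustration; the actual per-triple loss functions of the cited models are described in Section II.

```python
def margin_loss(pos_loss: float, neg_loss: float, margin: float = 1.0) -> float:
    """Hinge-style margin loss for one (positive, negative) triple pair.

    The result is zero once the negative triple's loss exceeds the
    positive triple's loss by at least `margin` (an assumed default).
    """
    return max(0.0, margin + pos_loss - neg_loss)


# A negative triple that is already outside the margin contributes nothing.
outside = margin_loss(pos_loss=0.5, neg_loss=2.0)
# A negative triple inside the margin is penalized.
inside = margin_loss(pos_loss=0.5, neg_loss=1.0)
print(outside, inside)
```

Only negative triples that fall inside the margin produce a gradient, which is why the balance of triples entering and leaving the margin matters during training.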

During training, all the models mentioned above suffer

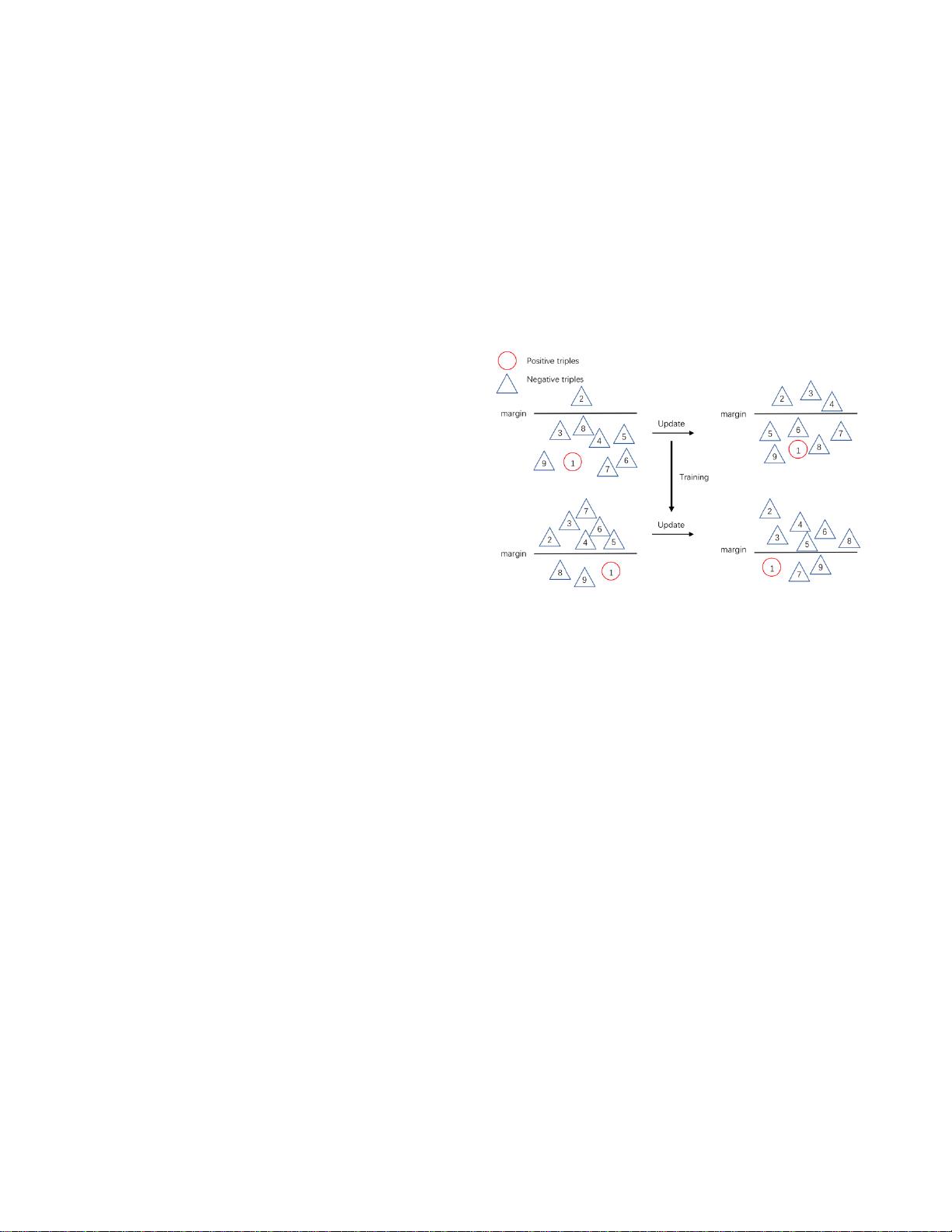

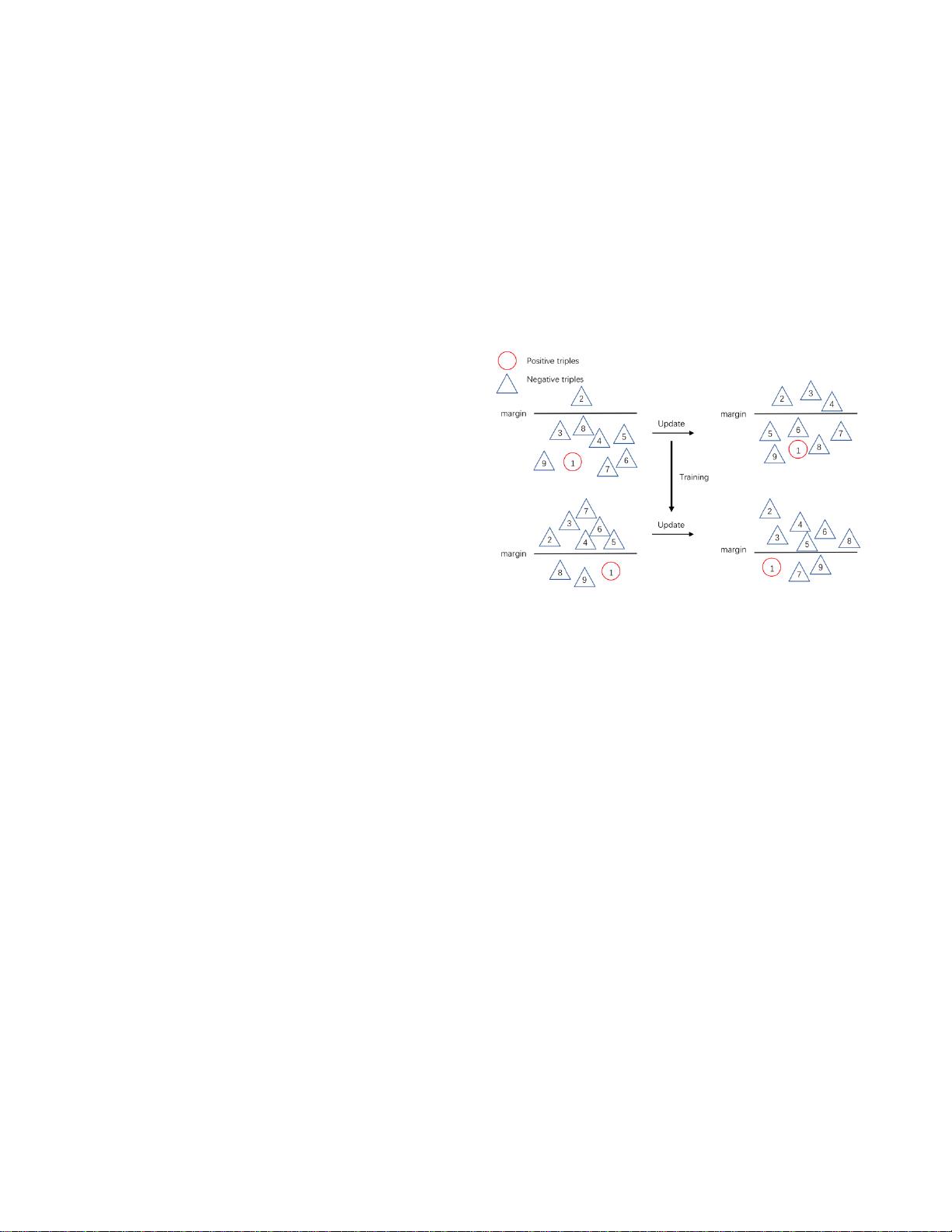

Figure 1. Illustration of local optimum in training. After training for a while,

the model pushes nearly the same number of negative triples out of and into

the margin, resulting in no improvement of performance.

from the problem of local optimum and inability to step

forward in performance (See Fig. 1). How to push a model to

its limit and learn a better representation is of great

significance in knowledge graph's downstream applications.

Recently, [13] proposed a knowledge embedding framework, called Trans-GAN, which utilizes a GAN in negative sampling to exploit the potential of models by generating high-quality negative samples. However, it has several drawbacks. First, GANs often suffer from non-convergence or collapse during training, which leads to poor results when it happens. Second, a GAN consists of generator and discriminator networks, which require more parameters.

In this paper, we propose a simpler and more elegant method whose main idea is a dynamic learning rate (DLR). Experiments show that our DLR-based methods remarkably outperform GAN-based methods under most circumstances.
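This section does not specify the rule by which the learning rate is adjusted, so the following is only a sketch of one common form of dynamic learning rate control: plateau-triggered decay. The class name `PlateauLearningRate` and the parameters `factor`, `patience`, and `min_lr` are all assumptions made for illustration and should not be read as the Trans-DLR schedule itself.

```python
class PlateauLearningRate:
    """Shrink the learning rate when a validation metric stops improving.

    Assumed rule (not taken from the paper): after `patience` consecutive
    evaluations without improvement, multiply the rate by `factor`.
    """

    def __init__(self, lr=0.01, factor=0.5, patience=2, min_lr=1e-5):
        self.lr = lr
        self.factor = factor
        self.patience = patience
        self.min_lr = min_lr
        self.best = float("-inf")
        self.stale = 0  # evaluations since the last improvement

    def step(self, metric: float) -> float:
        """Record a new validation metric (higher is better) and return the rate."""
        if metric > self.best:
            self.best = metric
            self.stale = 0
        else:
            self.stale += 1
            if self.stale >= self.patience:
                self.lr = max(self.min_lr, self.lr * self.factor)
                self.stale = 0
        return self.lr


scheduler = PlateauLearningRate(lr=0.01, factor=0.5, patience=2)
for hits_at_10 in [0.40, 0.45, 0.45, 0.44, 0.46]:
    current_lr = scheduler.step(hits_at_10)
print(current_lr)
```

In this run the metric stalls for two evaluations, so the rate is halved once; a smaller step size is one way a model stuck in the situation of Fig. 1 can settle into a better optimum.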

Our contributions in this paper are as follows:

• We incorporate DLR into knowledge representation learning, which dynamically adjusts the learning rate of a model and pushes the model to a better optimum.

• We propose a new negative sampling method that corrupts not only entities but also relations, with different probabilities, so that the model can learn better representations for both entities and relations.
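The negative sampling idea can be sketched as follows. The function name `corrupt_triple`, the probability `p_relation`, and the even split between head and tail corruption are assumptions made for illustration; the probabilities actually used are not given in this section.

```python
import random


def corrupt_triple(triple, entities, relations, p_relation=0.1, rng=random):
    """Return a corrupted copy of (h, r, t) with exactly one element replaced.

    With probability `p_relation` (an assumed value) the relation is replaced;
    otherwise the head or the tail is replaced with equal probability.
    Needs at least two entities and two relations to choose a replacement.
    """
    h, r, t = triple
    if rng.random() < p_relation:
        r = rng.choice([x for x in relations if x != r])
    elif rng.random() < 0.5:
        h = rng.choice([e for e in entities if e != h])
    else:
        t = rng.choice([e for e in entities if e != t])
    return (h, r, t)


entities = ["Guangzhou", "China", "Beijing"]
relations = ["located_in", "capital_of"]
rng = random.Random(0)  # fixed seed so the sketch is reproducible
negative = corrupt_triple(("Guangzhou", "located_in", "China"), entities, relations, 0.3, rng)
print(negative)
```

This sketch does not check whether the corrupted triple happens to be a true fact in the knowledge base; a practical sampler would typically filter such cases out.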