1318 IEEE TRANSACTIONS ON ROBOTICS, VOL. 34, NO. 5, OCTOBER 2018

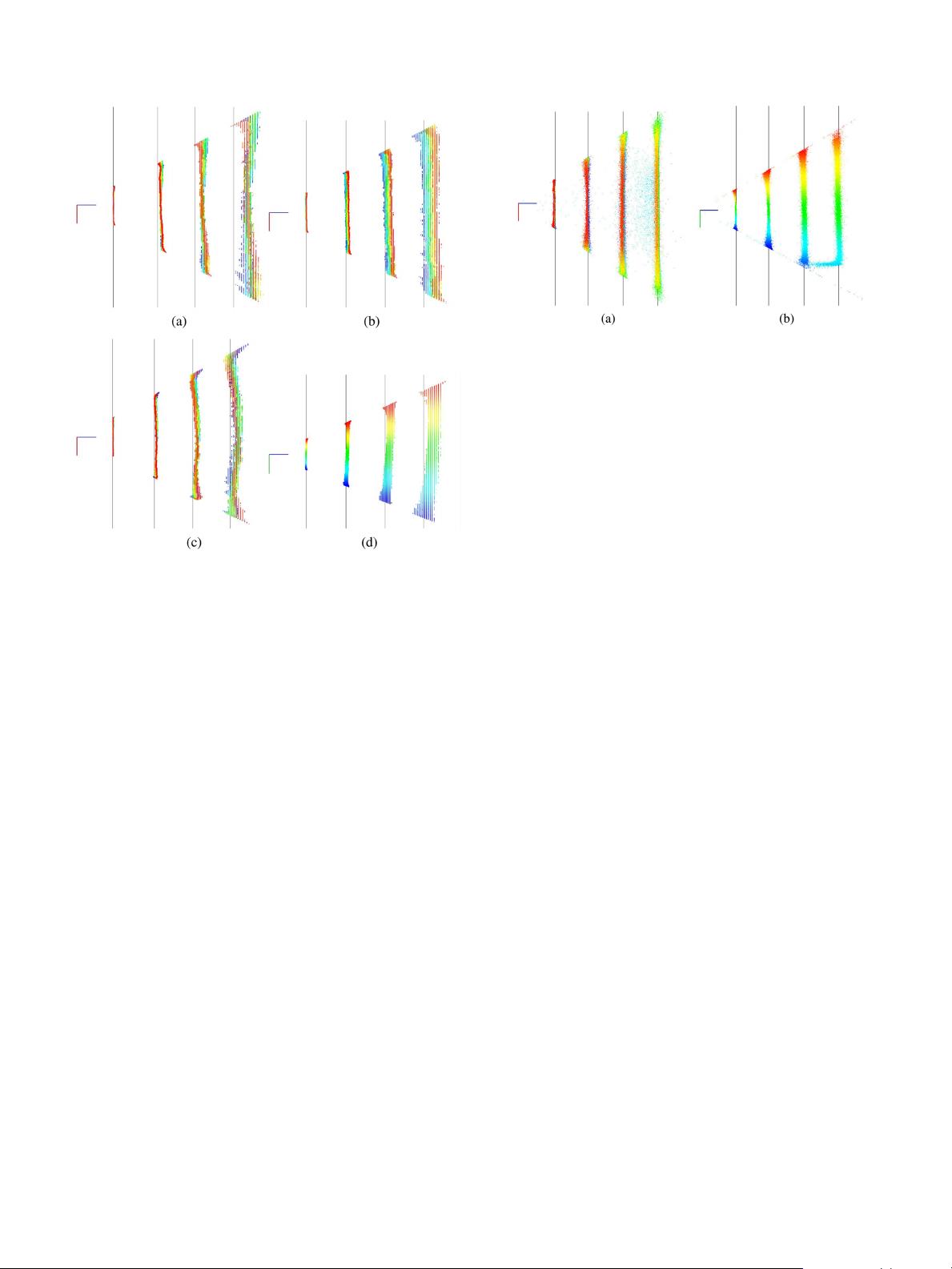

Fig. 1. Top and side views of the point clouds generated by different SL depth sensors: two Kinects (KINECT1A, KINECT1B) and an Asus Xtion Pro Live (ASUS). The gray lines represent the ground truth measured with the laser distance meters; points with different y-coordinates are drawn with different colors. (a) KINECT1A, top. (b) KINECT1B, top. (c) ASUS, top. (d) KINECT1B, side.

method is inspired by the work in [24] and [30], while our error model has been designed taking inspiration from both the error models presented in [14] and [29].

III. DEPTH ERROR ANALYSIS

In this section, we introduce our depth error correction model, derived from an experimental analysis performed using two popular SL-based depth sensors (the Microsoft Kinect and the Asus Xtion Pro Live RGB-D cameras) and a ToF sensor (the Microsoft Kinect 2 RGB-D camera).

To analyze the systematic and random errors in the depth measurements, we positioned each sensor parallel to a flat wall at increasing distances. For each position, we collected the sensor's readings (i.e., the depth images and the generated point clouds) while measuring the real distances (i.e., the ground truth) using two high-precision laser distance meters [e.g., Fig. 12(a)]. Some qualitative results of this analysis are reported in Figs. 1 and 2. From our analysis, we observed the following.

1) In the case of the SL sensors, the surfaces defined by

the point clouds are not properly planar as they should

be, and this “local distortion” effect becomes more

accentuated for increasing distances (this is the myopic

property defined in [30]). Moreover, each sensor has

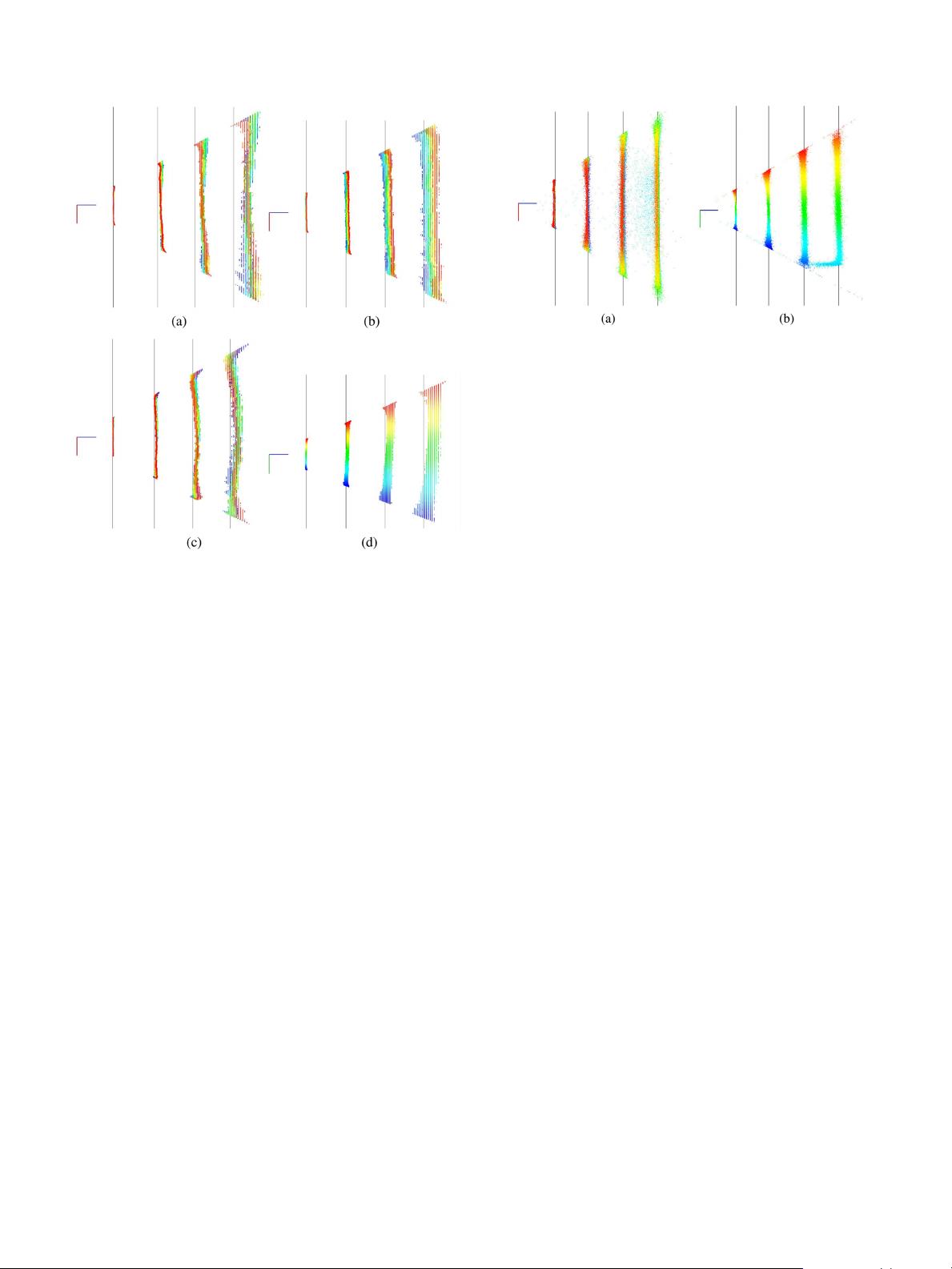

a different “distortion pattern.” The ToF sensor is also

affected by similar local distortion effect, but to a lesser

extent (typically less than 1 cm).

Fig. 2. Top and side views of the point clouds generated by a Kinect 2 ToF camera (KINECT2). The gray lines represent the ground truth measured with the laser distance meters; points with different y-coordinates are drawn with different colors. Note that the cloud on the right frames a part of the floor too. (a) KINECT2, top. (b) KINECT2, side.

2) The average depth of each point cloud is not generally correct and, in the case of SL sensors, sometimes even the average orientation is wrong.

3) The quantization error for the SL sensors becomes non-negligible for increasing distances. ToF sensors are affected by a negligible quantization error (see footnote 3).

The effect of 1) is to produce a local alteration of an object's shape, while 2) is a systematic bias in the measurements. In this paper, we aim to remove both 1) and 2), while it is usually not possible to remove the quantization error 3) of SL sensors (see footnote 4).
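To illustrate why the quantization error of SL sensors grows with distance, the following minimal sketch assumes the standard pinhole triangulation relation z = f * b / d between depth and disparity, with the disparity stored in fixed steps. The focal length, baseline, and disparity step are hypothetical, Kinect-like values chosen only for illustration; they are not taken from this paper.

```python
def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Triangulated depth z = f * b / d (pinhole stereo model, assumed here)."""
    return focal_px * baseline_m / disparity_px


def quantization_step(depth_m, focal_px, baseline_m, disparity_step_px):
    """Depth gap between two adjacent disparity levels around depth_m."""
    # Disparity that corresponds to the requested depth.
    disparity = focal_px * baseline_m / depth_m
    # Depth of the next representable (smaller) disparity level.
    next_depth = depth_from_disparity(
        disparity - disparity_step_px, focal_px, baseline_m
    )
    return next_depth - depth_m


# Hypothetical values for illustration only (not from the paper).
FOCAL_PX = 580.0           # focal length in pixels
BASELINE_M = 0.075         # projector-camera baseline in meters
DISPARITY_STEP_PX = 0.125  # smallest stored disparity increment in pixels

for z in (1.0, 2.0, 4.0):
    step = quantization_step(z, FOCAL_PX, BASELINE_M, DISPARITY_STEP_PX)
    print(f"depth {z:.1f} m -> quantization step {step * 1000:.1f} mm")
```

Under this model the step grows roughly with the square of the depth, which is consistent with observation 3) above.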

In the following, we refer to error components 1) and 2) as distortion error and global error, respectively. In the case of SL sensors, these errors arise from a combination of two main sources: the radial and tangential lens distortion of the camera used for stereo triangulation [21], and the misalignment between the pattern projector and the camera. In the case of ToF cameras, several sources of error contribute to the distortion error, among them the built-in pixel-related errors and the amplitude-related errors, while the global error arises from the so-called wiggling error, which appears due to irregularities in the internal modulation process [8]. Our method does not directly address other ToF-related sources of error, such as multipath interference and reflectivity-related deviations.

In order to analyze the distortion error trend, we compared the measured point clouds with the planes that best fit them (some results are reported in Fig. 3(a), where we used three SL sensors and one ToF sensor). In particular, for each incoming point cloud, we computed the root-mean-square (RMS) error on the (signed) distance between the plane and the points. It can be

3

Continuous-wave-based ToF sensors like the Kinect 2 measure the depth by means of the phase difference between an emitted sinusoidal light wave signal and the backscattered signal received. This phase is evaluated in closed form using four equally spaced samples [10]. The quantization error, which is mainly due to the precision used in storing the samples, is usually neglected.
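The closed-form phase evaluation mentioned in this footnote can be sketched as follows. This is a minimal illustration of the common four-sample (0, 90, 180, 270 degree) scheme, assuming samples of the form A*cos(phase + offset_i) + B; the sampling convention, the helper names, and the 16 MHz modulation frequency are assumptions made for the example and are not details given in this paper.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # m/s


def simulate_samples(phase, amplitude=1.0, offset=2.0):
    """Hypothetical helper: four samples taken 90 degrees apart."""
    return [
        amplitude * math.cos(phase + i * math.pi / 2.0) + offset
        for i in range(4)
    ]


def four_sample_phase(a0, a1, a2, a3):
    """Closed-form phase in [0, 2*pi) from four equally spaced samples."""
    # With the convention above: a3 - a1 = 2A*sin(phase), a0 - a2 = 2A*cos(phase).
    return math.atan2(a3 - a1, a0 - a2) % (2.0 * math.pi)


def depth_from_phase(phase, modulation_hz):
    """The light travels to the target and back, hence the factor 4*pi."""
    return SPEED_OF_LIGHT * phase / (4.0 * math.pi * modulation_hz)


# Round trip with an assumed modulation frequency (illustrative only).
MODULATION_HZ = 16e6
true_depth = 1.5
true_phase = 4.0 * math.pi * MODULATION_HZ * true_depth / SPEED_OF_LIGHT
samples = simulate_samples(true_phase)
recovered = depth_from_phase(four_sample_phase(*samples), MODULATION_HZ)
print(f"recovered depth: {recovered:.6f} m")
```

The constant offset B and the amplitude A cancel in the two differences, which is why only the stored precision of the samples limits the result in this idealized model.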

4

In SL-based depth sensors, the quantization error originates from the discrete nature of the disparity map used to extract the depth. This error is commonly mitigated by means of subpixel stereo matching algorithms; unfortunately, these algorithms require access to low-level data that is usually not accessible from the user side.
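Returning to the plane-fit comparison described in this section, the RMS of the signed point-to-plane distance can be sketched as below. This is only an illustration under stated assumptions: the plane is parameterized as z = a*x + b*y + c (reasonable when the sensor roughly faces the wall) and fitted by ordinary least squares on z, which may differ from the fitting procedure actually used by the authors. All function names are hypothetical.

```python
import math


def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (
        m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
        - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
        + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0])
    )


def fit_plane(points):
    """Least-squares fit of z = a*x + b*y + c to (x, y, z) points."""
    sx = sum(x for x, _, _ in points)
    sy = sum(y for _, y, _ in points)
    sz = sum(z for _, _, z in points)
    sxx = sum(x * x for x, _, _ in points)
    syy = sum(y * y for _, y, _ in points)
    sxy = sum(x * y for x, y, _ in points)
    sxz = sum(x * z for x, _, z in points)
    syz = sum(y * z for _, y, z in points)
    normal_matrix = [[sxx, sxy, sx], [sxy, syy, sy], [sx, sy, len(points)]]
    rhs = [sxz, syz, sz]
    d = det3(normal_matrix)
    if abs(d) < 1e-12:
        raise ValueError("degenerate point configuration")
    coeffs = []
    for col in range(3):  # Cramer's rule, one unknown per column
        m = [row[:] for row in normal_matrix]
        for r in range(3):
            m[r][col] = rhs[r]
        coeffs.append(det3(m) / d)
    return tuple(coeffs)


def rms_plane_error(points):
    """RMS of the signed orthogonal distances to the fitted plane."""
    a, b, c = fit_plane(points)
    norm = math.sqrt(a * a + b * b + 1.0)
    signed = [(z - (a * x + b * y + c)) / norm for x, y, z in points]
    return math.sqrt(sum(e * e for e in signed) / len(signed))


# Synthetic "wall" at z = 2 m with a +/- 1 cm checkerboard ripple.
wall = [
    (float(i), float(j), 2.0 + 0.01 * (-1) ** (i + j))
    for i in range(4)
    for j in range(4)
]
print(f"RMS plane-fit error: {rms_plane_error(wall):.4f} m")
```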