284 K. Liu, X. Yang, H. Yu et al. / Knowledge-Based Systems 165 (2019) 282–296

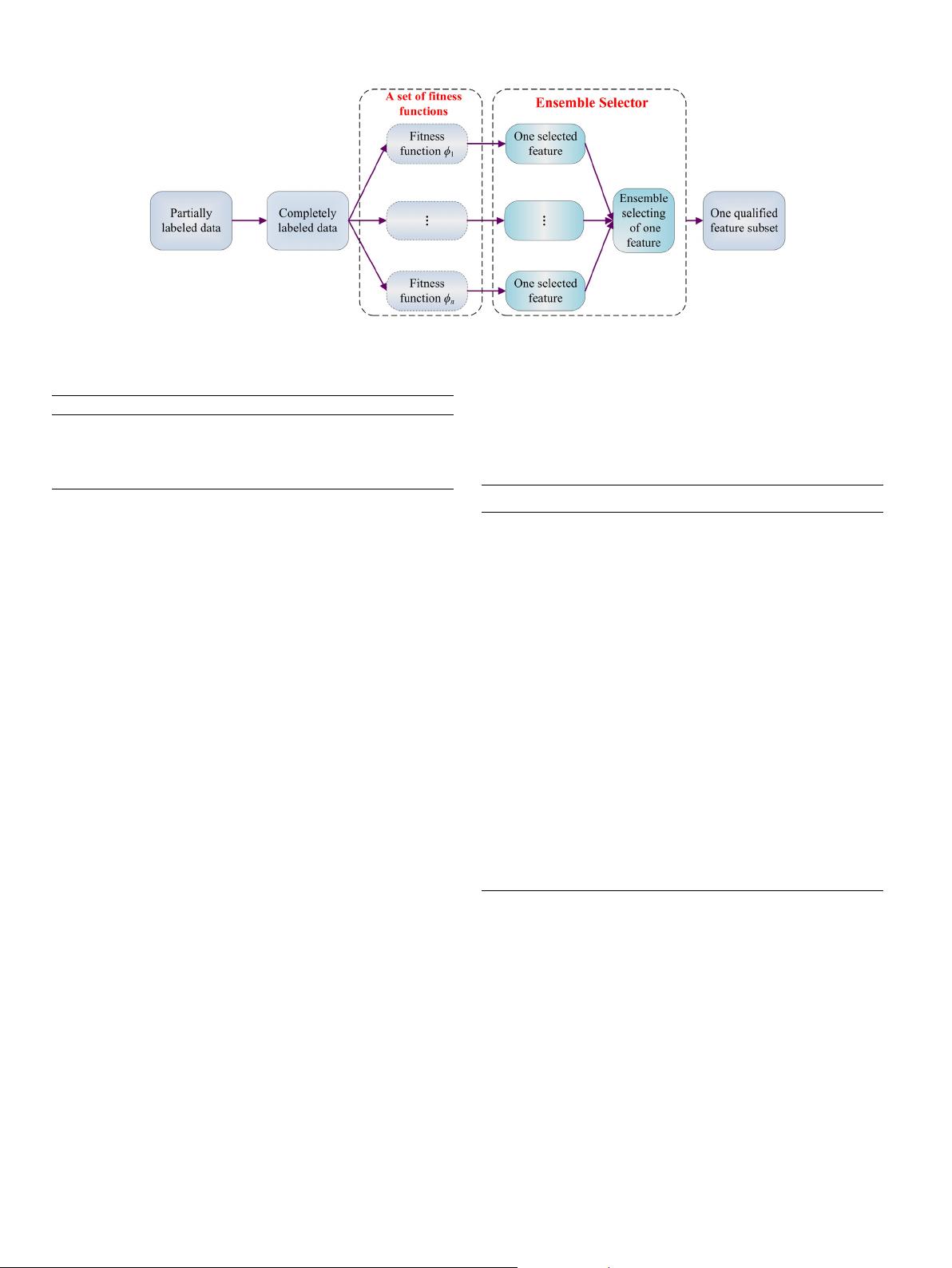

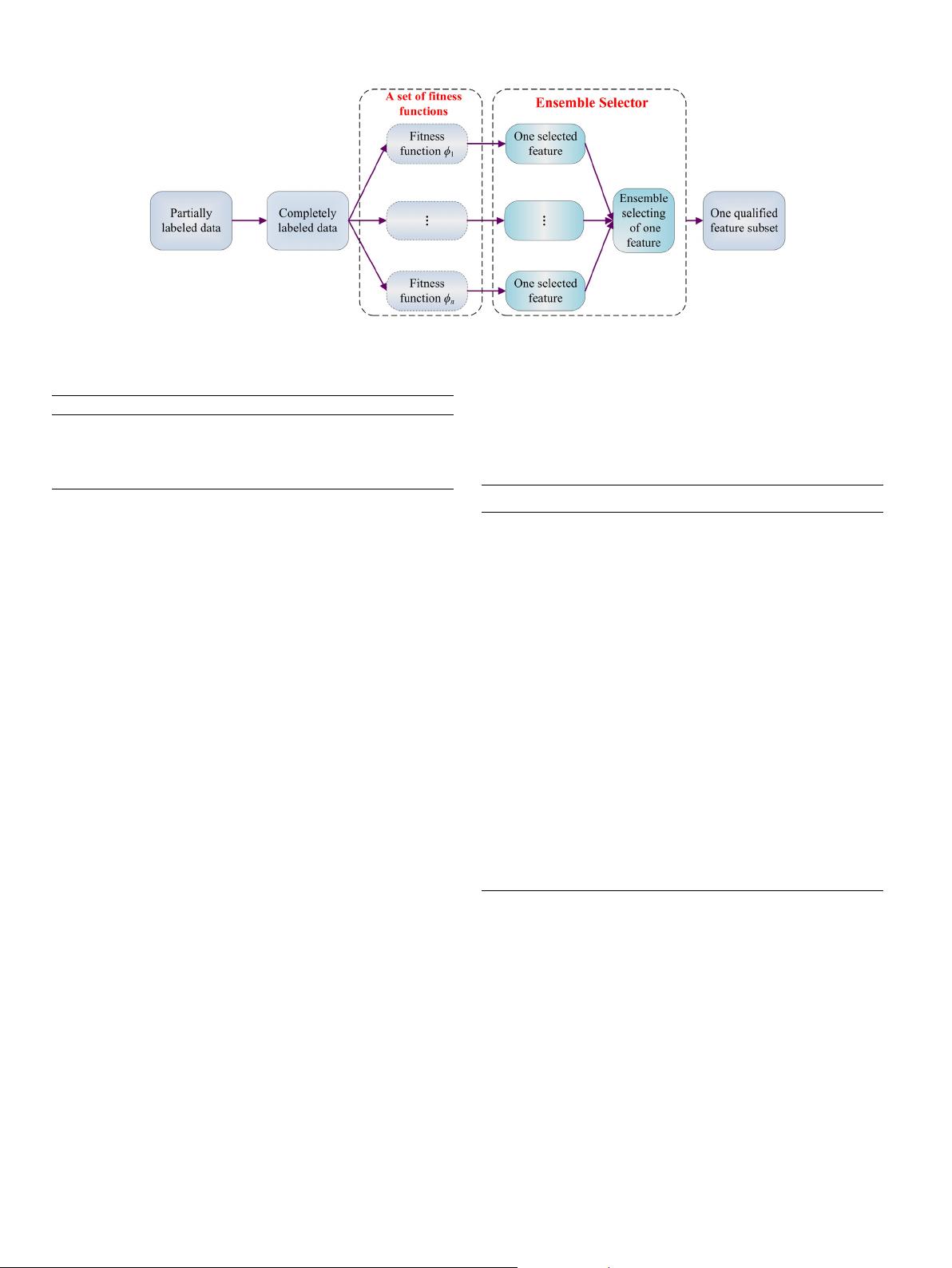

Fig. 2. Rough set based semi-supervised feature selection via ensemble selector.

Table 1

A decision system for partially labeled data.

U

l

∪ U

u

a

1

a

2

a

3

d

x

1

0.1 0.3 0.1 1

x

2

0.1 0.3 0.2 2

x

3

0.3 0.1 0.2 1

x

4

0.2 0.3 0.1 ∗

x

5

0.2 0.1 0.3 ∗

2.2. Semi-supervised feature selection methods for partially labeled

data

It is universally acknowledged that most of the traditional

feature selection methods are not suitable for dealing with par-

tially labeled data. To solve such problem, recently, various semi-

supervised feature selection methods have been proposed to han-

dle partially labeled data. As what have been pointed out in Sec-

tion 1, two frameworks of semi-supervised feature selections have

been addressed in partially labeled data. In the first framework, the

unlabeled samples should be predicted before feature selection,

and it follows that partially labeled data can be transformed into

completely labeled data. For example, in Refs. [16,19,20,52], var-

ious approaches included in this framework have been explored.

And in the second framework, the measurements for evaluating

the significance of each candidate feature are constructed by con-

sidering both labeled and unlabeled samples simultaneously. For

instance, a rough set based approach for measuring the significance

of feature has been reported by Dai et al. in Ref. [15].

2.2.1. Forward semi-supervised feature selection

As a representative method in the first framework, through

assigning labels to the unlabeled samples in partially labeled data,

Forward Semi-supervised Feature Selection (FSFS) proposed by

Ren et al. in Ref. [16], has been paid much attention by many

researchers.

The main process of FSFS includes the following four steps:

1. Supervised-sequential Forward Feature Selection (SFFS), one

of the most widely adopted supervised feature selection

methods, is used to select some features initially over the

labeled samples;

2. The selected features obtained in the first step are used to

train a classifier, and then the raw unlabeled samples can be

predicted based on such classifier;

3. Some updated labeled samples are randomly selected based

on a given sampling rate and combined with the raw labeled

samples, the new joint labeled samples are formed, and then

a feature subset is obtained over such joint labeled samples

through using SFFS;

4. The above step is repeated several times and then several

feature subsets have been obtained, the most frequently

selected feature which is not in the feature subset derived

by the first step is added into such subset.

The detailed algorithm of FSFS is described as follows.

Algorithm 1. Forward Semi-supervised Feature Selection (FSFS)

Inputs: DS, sampling rate ρ, sampling times s and entire itera-

tion times t;

Outputs: A qualified feature subset A.

1. A ← ∅;

2. Obtain the initial feature subset R in U

l

by using SFFS and

then A ← R;

3. For p =1 to t

(1) Train a classifier over U

l

with respect to A;

(2) ∀x

u

∈ U

u

, obtain the predicted labels of x

u

as d(x

u

);

(3) For q = 1 to s

(i) By ratio ρ, randomly select a set of samples in

U

u

, it is denoted by U

u

ρ

;

(ii) U

l∗

← U

l

∪ (U

u

ρ

);

(iii) Obtain the random feature subset A

q

in U

l∗

;

End For

(4) Obtain random feature subsets A

1

, A

2

, . . . , A

s

, find

the feature b which is the most frequently selected

in these random feature subsets while it is not in

A;

(5) A ← A ∪ {b};

End For

4. Return A.

Remark 1. The time complexity of SFFS is O(|U

l

|

2

× |AT |

2

). More-

over, the neighborhood classifier [53] is used to predict the unla-

beled samples in this paper and then the time complexity of the

third step is O(|U

l

|

2

× |AT | × t + |U

l∗

|

2

× |AT |

2

× s × t). Finally,

the overall complexity of Algorithm 1 is O(|U

l

|

2

× |AT |

2

+ |U

l

|

2

×

|AT | × t + |U

l∗

|

2

× |AT |

2

× s × t).

2.2.2. Rough set based semi-supervised feature selection

The above approach aims to transform the unlabeled samples

into labeled samples by some techniques and then execute feature

selection over the updated labeled samples. Another natural think-

ing is to execute feature selection over the whole data without

predicting the unlabeled samples. Such strategy requires the evalu-

ation of the significance of feature by considering both labeled and

unlabeled samples, simultaneously. From this point of view, Dai

et al. [15] have proposed a typical Rough set based Semi-supervised