1.2: Error-correcting codes for the binary symmetric channel 7

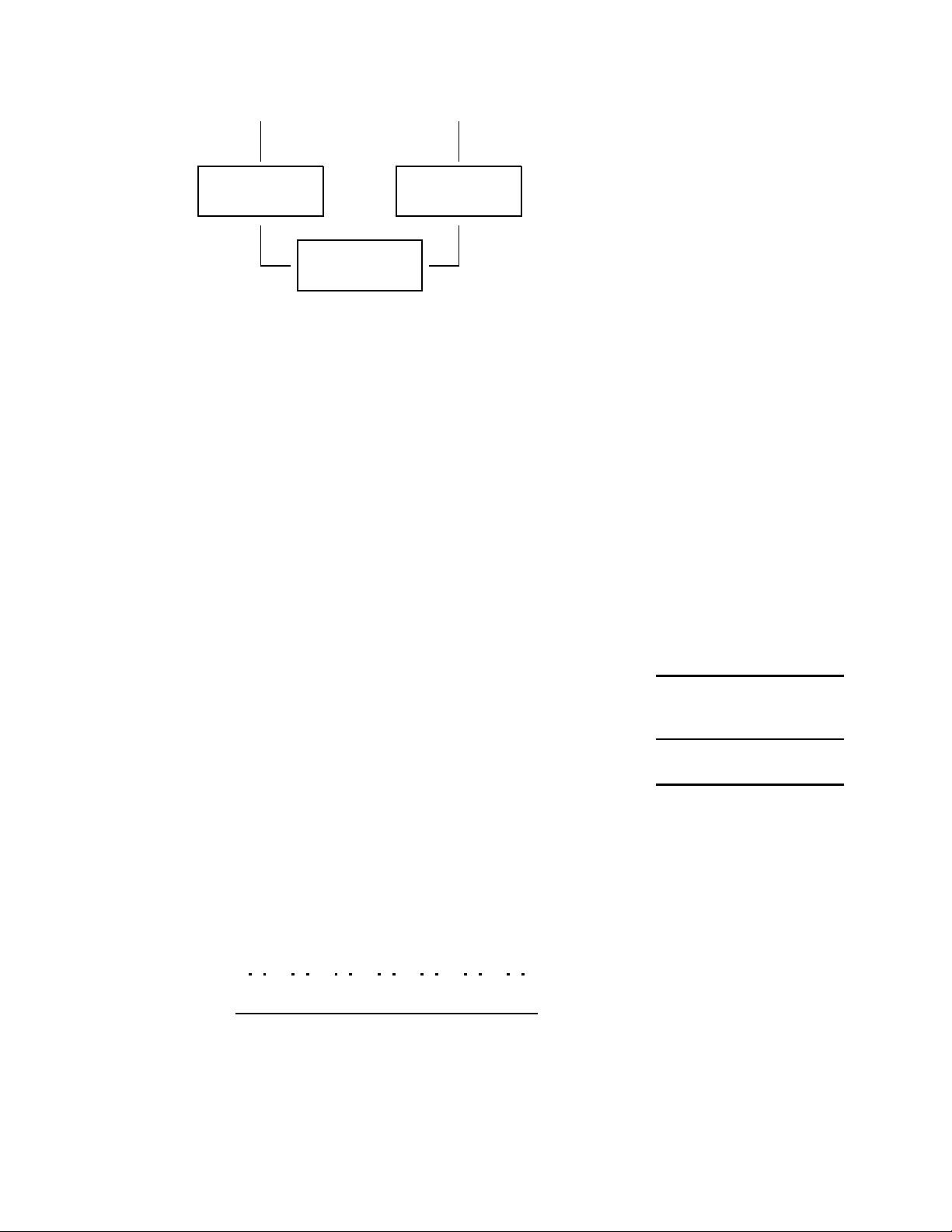

as a 0 – which in this case corrects the error. Not all errors are corrected,

however. If we are unlucky and two errors fall in a single block, as in the fifth

triplet of figure 1.8, then the decoding rule gets the wrong answer, as shown

in figure 1.10.

s 0 0 1 0 1 1 0

t

z

}|{

0 0 0

z}|{

0 0 0

z}|{

1 1 1

z}|{

0 0 0

z}|{

1 1 1

z}|{

1 1 1

z}|{

0 0 0

n 0 0 0 0 0 1 0 0 0 0 0 0 1 0 1 0 0 0 0 0 0

r 0 0 0

|

{z}

0 0 1

|{z}

1 1 1

|{z}

0 0 0

|{z}

0 1 0

|{z}

1 1 1

|{z}

0 0 0

|{z}

ˆ

s 0 0 1 0 0 1 0

corrected errors

undetected errors

Figure 1.10. Decoding the received

vector from figure 1.8.

Exercise 1.2.

[2, p.16]

Show that the error probability is reduced by the use of The exercise’s rating, e.g.‘[2 ]’, indi-

cates its difficulty: ‘1’ exercises are

the easiest. Exercises that are accom-

panied by a marginal rat are espe-

cially recommended. If a solution or

partial solution is provided, the page

is indicated after the difficulty rating;

for example, this exercise’s solution is

on page 16.

R

3

by computing the error probability of this code for a binary symmetric

channel with noise level f .

The error probability is dominated by the probability that two bits in

a block of three are flipped, which scales as f

2

. In the case of the binary

symmetric channel with f = 0.1, the R

3

code has a probability of error, after

decoding, of p

b

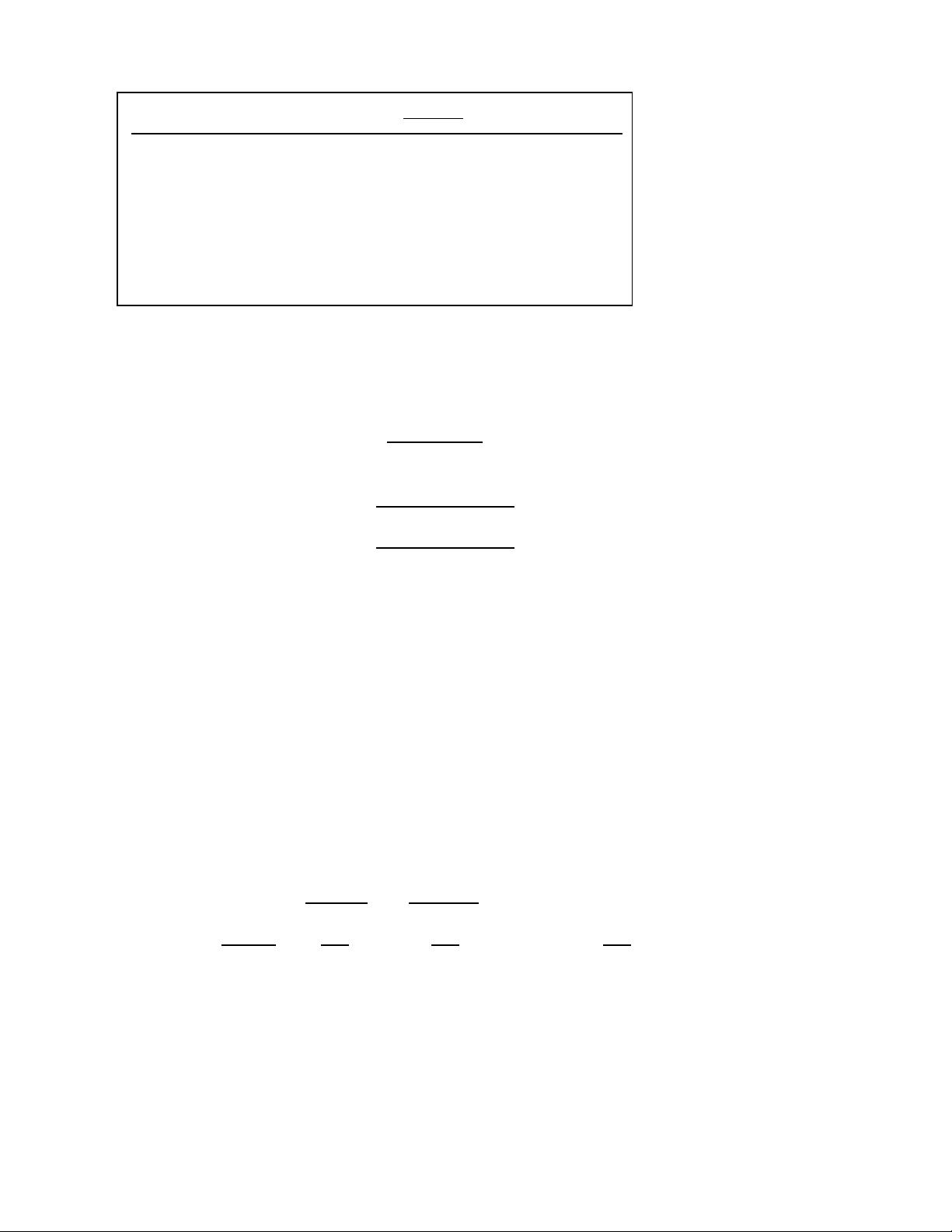

' 0.03 per bit. Figure 1.11 shows the result of transmitting a

binary image over a binary symmetric channel using the repetition code.

The repetition code R

3

has therefore reduced the probability of error, as

desired. Yet we have lost something: our rate of information transfer has

fallen by a factor of three. So if we use a repetition code to communicate data

over a telephone line, it will reduce the error frequency, but it will also reduce

our communication rate. We will have to pay three times as much for each

phone call. Similarly, we would need three of the original noisy gigabyte disk

drives in order to create a one-gigabyte disk drive with p

b

= 0.03.

Can we push the error probability lower, to the values required for a sell-

able disk drive – 10

−15

? We could achieve lower error probabilities by using

repetition codes with more repetitions.

Exercise 1.3.

[3, p.16]

(a) Show that the probability of error of R

N

, the repe-

tition code with N repetitions, is

p

b

=

N

X

n=(N+1)/2

N

n

!

f

n

(1 − f)

N−n

, (1.24)

for odd N.

(b) Assuming f = 0.1, which of the terms in this sum is the biggest?

How much bigger is it than the second-biggest term?

(c) Use Stirling’s approximation (p.2) to approximate the

N

n

in the

largest term, and find, approximately, the probability of error of

the repetition code with N repetitions.

(d) Assuming f = 0.1, find how many repetitions are required to get

the probability of error down to 10

−15

. [Answer: about 60.]

So to build a single gigabyte disk drive with the required reliability from noisy

gigabyte drives with f = 0.1, we would need sixty of the noisy disk drives.

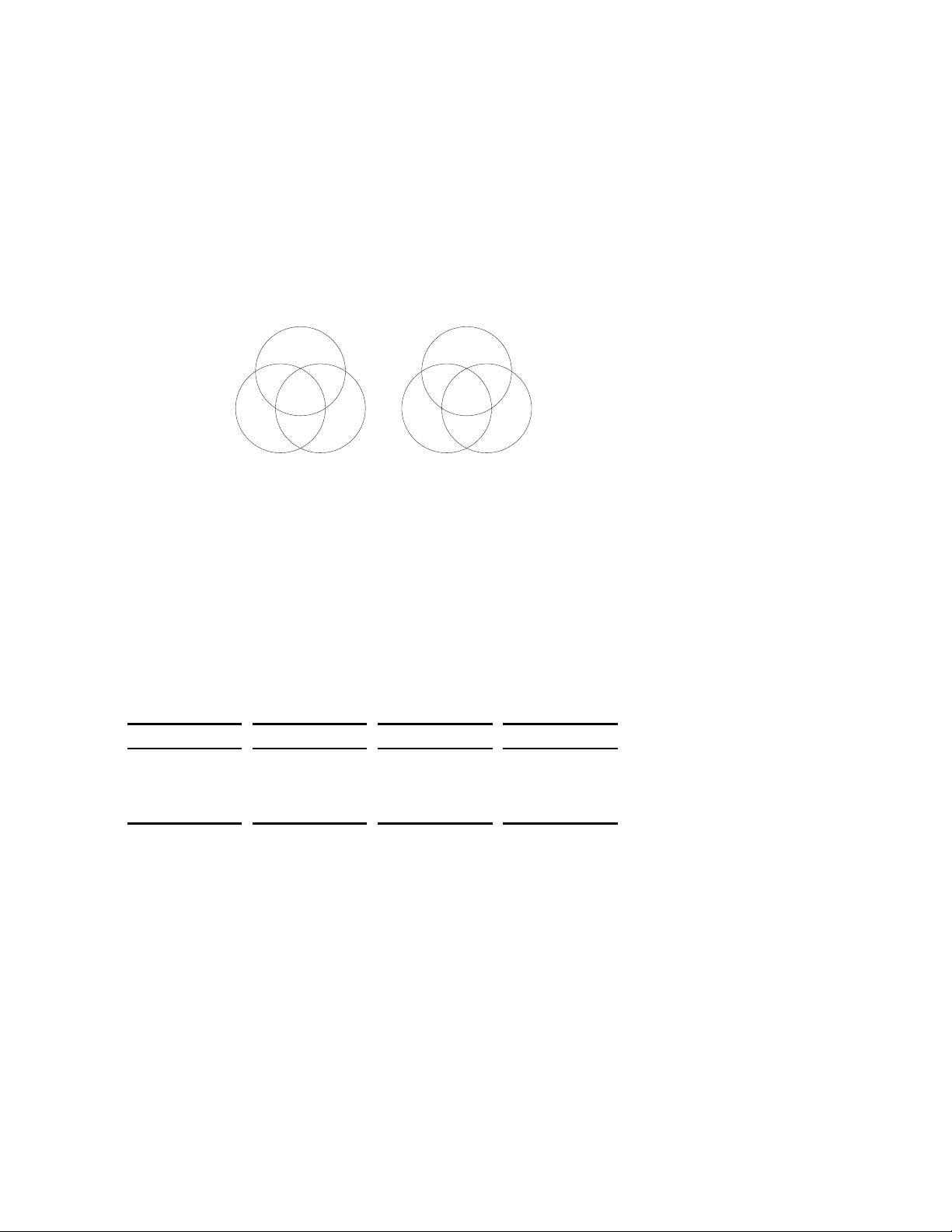

The tradeoff between error probability and rate for repetition codes is shown

in figure 1.12.