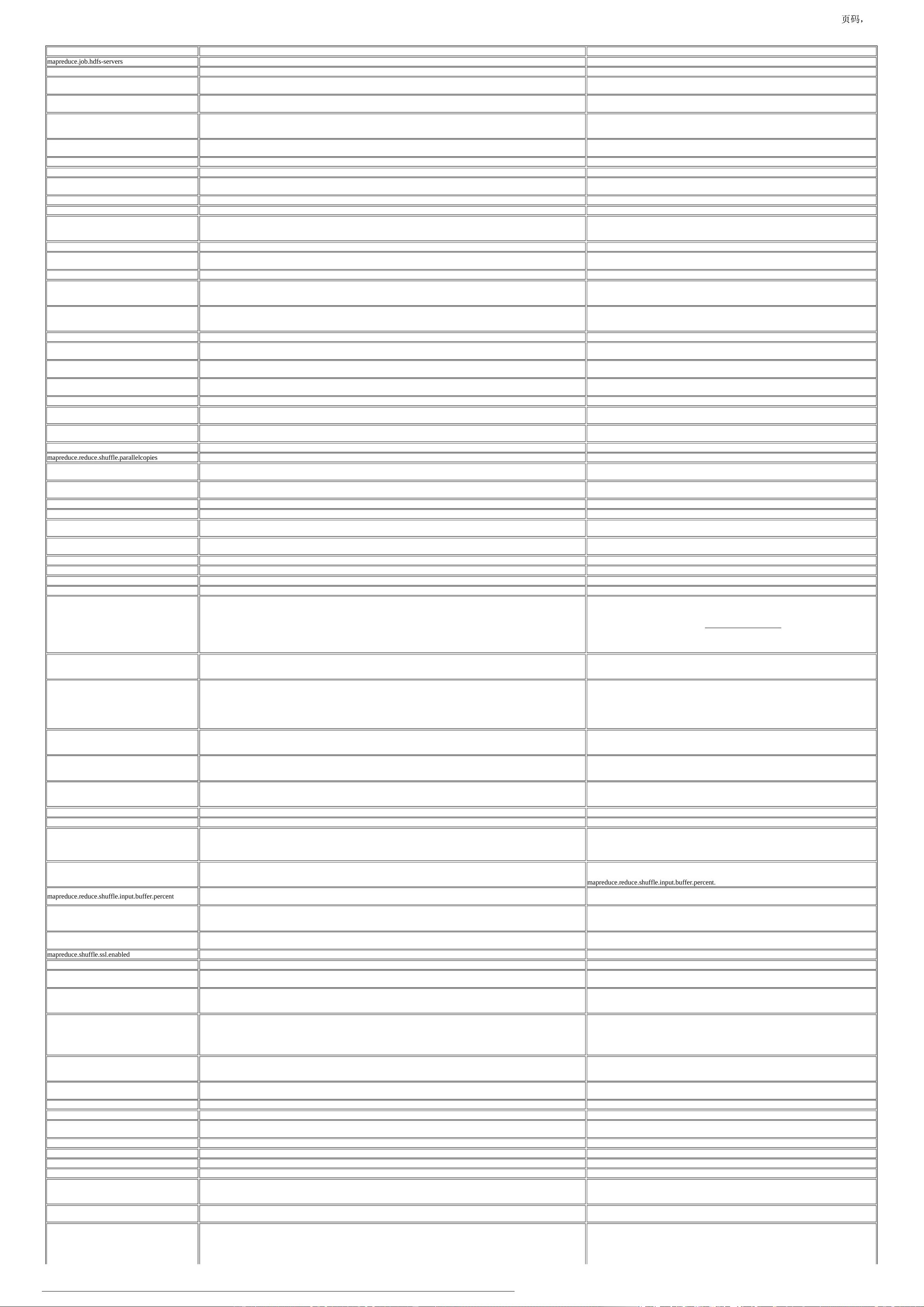

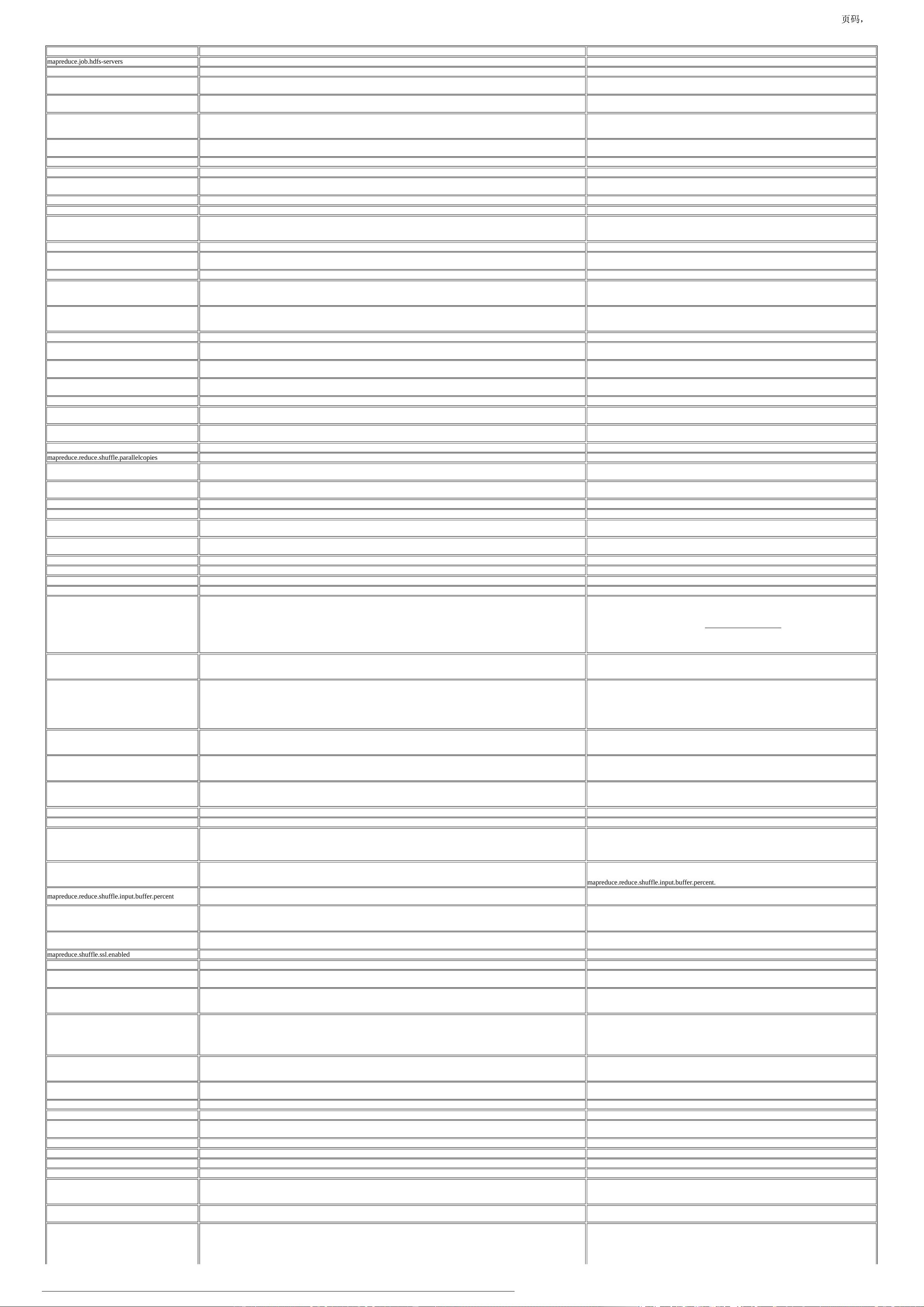

name value description

mapreduce.job.hdfs-servers ${fs.defaultFS}

mapreduce.job.committer.setup.cleanup.needed true Set to true if the job needs job-setup and job-cleanup; false otherwise.

mapreduce.task.io.sort.factor 10

The number of streams to merge at once while sorting files. This determines the number of open file

handles.

mapreduce.task.io.sort.mb 100

The total amount of buffer memory to use while sorting files, in megabytes. By default, gives each

merge stream 1MB, which should minimize seeks.

mapreduce.map.sort.spill.percent 0.80

The soft limit in the serialization buffer. Once reached, a thread will begin to spill the contents to disk in

the background. Note that collection will not block if this threshold is exceeded while a spill is already

in progress, so spills may be larger than this threshold when it is set to less than 0.5.
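As a rough worked example of how mapreduce.task.io.sort.mb and mapreduce.map.sort.spill.percent interact, the sketch below computes the buffer fill level at which a background spill would start. It is a simplification under stated assumptions: the function name is hypothetical, and it treats the whole buffer as payload, ignoring any per-record accounting the real collector performs.

```python
def spill_threshold_bytes(sort_mb: int = 100, spill_percent: float = 0.80) -> int:
    """Approximate fill level (in bytes) at which a background spill begins.

    Assumption: the soft limit is simply sort_mb (converted to bytes)
    multiplied by spill_percent; real bookkeeping is more detailed.
    """
    buffer_bytes = sort_mb * 1024 * 1024
    return int(buffer_bytes * spill_percent)


if __name__ == "__main__":
    # With the defaults listed above (100 MB buffer, 0.80 soft limit).
    print(spill_threshold_bytes())
```

With the defaults, the soft limit works out to 80 MB of the 100 MB buffer, leaving the remainder for records collected while the spill runs.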

mapreduce.jobtracker.address local

The host and port that the MapReduce job tracker runs at. If "local", then jobs are run in-process as a

single map and reduce task.

mapreduce.local.clientfactory.class.name org.apache.hadoop.mapred.LocalClientFactory This is the client factory responsible for creating the local job runner client.

mapreduce.jobtracker.system.dir ${hadoop.tmp.dir}/mapred/system The directory where MapReduce stores control files.

mapreduce.jobtracker.staging.root.dir ${hadoop.tmp.dir}/mapred/staging

The root of the staging area for users' job files. In practice, this should be the directory where users' home directories are located (usually /user).

mapreduce.cluster.temp.dir ${hadoop.tmp.dir}/mapred/temp A shared directory for temporary files.

mapreduce.job.maps 2 The default number of map tasks per job. Ignored when mapreduce.framework.name is "local".

mapreduce.job.reduces 1

The default number of reduce tasks per job. Typically set to 99% of the cluster's reduce capacity, so that

if a node fails the reduces can still be executed in a single wave. Ignored when

mapreduce.framework.name is "local".

mapreduce.job.running.map.limit 0 The maximum number of simultaneous map tasks per job. There is no limit if this value is 0 or negative.

mapreduce.job.running.reduce.limit 0

The maximum number of simultaneous reduce tasks per job. There is no limit if this value is 0 or

negative.

mapreduce.job.max.map -1 Limit on the number of map tasks allowed per job. There is no limit if this value is negative.

mapreduce.job.reducer.preempt.delay.sec 0

The threshold (in seconds) after which an unsatisfied mapper request triggers reducer preemption when

there is no anticipated headroom. If set to 0 or a negative value, the reducer is preempted as soon as lack

of headroom is detected. Default is 0.

mapreduce.job.reducer.unconditional-preempt.delay.sec 300

The threshold (in seconds) after which an unsatisfied mapper request triggers a forced reducer

preemption irrespective of the anticipated headroom. By default, it is set to 5 mins. Setting it to 0 leads

to immediate reducer preemption. Setting to -1 disables this preemption altogether.

mapreduce.job.max.split.locations 10 The max number of block locations to store for each split for locality calculation.

mapreduce.job.split.metainfo.maxsize 10000000

The maximum permissible size of the split metainfo file. The MapReduce ApplicationMaster won't

attempt to read submitted split metainfo files bigger than this configured value. No limits if set to -1.

mapreduce.map.maxattempts 4

Expert: The maximum number of attempts per map task. In other words, the framework will try to execute a map task this many times before giving up on it.

mapreduce.reduce.maxattempts 4

Expert: The maximum number of attempts per reduce task. In other words, the framework will try to execute a reduce task this many times before giving up on it.

mapreduce.reduce.shuffle.fetch.retry.enabled ${yarn.nodemanager.recovery.enabled} Set to enable fetch retry during host restart.

mapreduce.reduce.shuffle.fetch.retry.interval-ms 1000

The interval, in milliseconds, at which the fetcher retries a fetch after a non-fatal failure caused by events such as a NodeManager (NM) restart.

mapreduce.reduce.shuffle.fetch.retry.timeout-ms 30000

The timeout, in milliseconds, after which the fetcher stops retrying a fetch following a non-fatal failure caused by events such as a NodeManager (NM) restart.

mapreduce.reduce.shuffle.retry-delay.max.ms 60000 The maximum number of ms the reducer will delay before retrying to download map data.

mapreduce.reduce.shuffle.parallelcopies 5 The default number of parallel transfers run by reduce during the copy (shuffle) phase.

mapreduce.reduce.shuffle.connect.timeout 180000

Expert: The maximum amount of time (in milliseconds) a reduce task spends trying to connect to a remote node to get map output.

mapreduce.reduce.shuffle.read.timeout 180000

Expert: The maximum amount of time (in milliseconds) a reduce task waits for map output data to be available for reading after obtaining a connection.

mapreduce.shuffle.listen.queue.size 128 The length of the shuffle server listen queue.

mapreduce.shuffle.connection-keep-alive.enable false Set to true to support keep-alive connections.

mapreduce.shuffle.connection-keep-alive.timeout 5

The number of seconds a shuffle client attempts to retain an HTTP connection. Refer to the "Keep-Alive: timeout=" header in the HTTP specification.

mapreduce.task.timeout 600000

The number of milliseconds before a task will be terminated if it neither reads an input, writes an

output, nor updates its status string. A value of 0 disables the timeout.

mapreduce.map.memory.mb 1024 The amount of memory to request from the scheduler for each map task.

mapreduce.map.cpu.vcores 1 The number of virtual cores to request from the scheduler for each map task.

mapreduce.reduce.memory.mb 1024 The amount of memory to request from the scheduler for each reduce task.

mapreduce.reduce.cpu.vcores 1 The number of virtual cores to request from the scheduler for each reduce task.

mapred.child.java.opts -Xmx200m

Java opts for the task processes. The following symbol, if present, will be interpolated: @taskid@ is

replaced by current TaskID. Any other occurrences of '@' will go unchanged. For example, to enable

verbose gc logging to a file named for the taskid in /tmp and to set the heap maximum to be a gigabyte,

pass a 'value' of: -Xmx1024m -verbose:gc -Xloggc:/tmp/@taskid@.gc Usage of -Djava.library.path can

cause programs to no longer function if hadoop native libraries are used. These values should instead be

set as part of LD_LIBRARY_PATH in the map / reduce JVM env using the mapreduce.map.env and

mapreduce.reduce.env config settings.
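The @taskid@ substitution described above can be illustrated with a small sketch. This is not the framework's own code: the function name and the sample task ID are hypothetical, and the only behavior modeled is what the description states, namely that @taskid@ is replaced and every other '@' is left alone.

```python
def interpolate_task_id(java_opts: str, task_id: str) -> str:
    """Replace each @taskid@ token with the task ID; other '@' characters stay as-is."""
    return java_opts.replace("@taskid@", task_id)


if __name__ == "__main__":
    # The option string is the example value from the description above;
    # the task ID is a made-up placeholder.
    opts = "-Xmx1024m -verbose:gc -Xloggc:/tmp/@taskid@.gc"
    print(interpolate_task_id(opts, "attempt_0001_m_000000_0"))
```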

mapred.child.env

User-added environment variables for the task processes. Examples: 1) A=foo sets the env variable A to foo. 2) B=$B:c inherits the NodeManager's B env variable on Unix. 3) B=%B%;c inherits the NodeManager's B env variable on Windows.

mapreduce.admin.user.env

Expert: Additional execution environment entries for map and reduce task processes. This is not an

additive property. You must preserve the original value if you want your map and reduce tasks to have

access to native libraries (compression, etc). When this value is empty, the command to set execution

environment will be OS dependent: For Linux, use LD_LIBRARY_PATH=$HADOOP_COMMON_HOME/lib/native. For Windows, use PATH = %PATH%;%HADOOP_COMMON_HOME%\bin.

yarn.app.mapreduce.am.log.level INFO

The logging level for the MR ApplicationMaster. The allowed levels are: OFF, FATAL, ERROR,

WARN, INFO, DEBUG, TRACE and ALL. The setting here could be overridden if

"mapreduce.job.log4j-properties-file" is set.

mapreduce.map.log.level INFO

The logging level for the map task. The allowed levels are: OFF, FATAL, ERROR, WARN, INFO,

DEBUG, TRACE and ALL. The setting here could be overridden if "mapreduce.job.log4j-properties-file" is set.

mapreduce.reduce.log.level INFO

The logging level for the reduce task. The allowed levels are: OFF, FATAL, ERROR, WARN, INFO,

DEBUG, TRACE and ALL. The setting here could be overridden if "mapreduce.job.log4j-properties-file" is set.

mapreduce.map.cpu.vcores 1 The number of virtual cores required for each map task.

mapreduce.reduce.cpu.vcores 1 The number of virtual cores required for each reduce task.

mapreduce.reduce.merge.inmem.threshold 1000

The threshold, in terms of the number of files, for the in-memory merge process. When the threshold number of files has accumulated, the in-memory merge is initiated and the result is spilled to disk. A value of 0 or less means there is no threshold, and the merge is triggered only by the ramfs's memory consumption.

mapreduce.reduce.shuffle.merge.percent 0.66

The usage threshold at which an in-memory merge will be initiated, expressed as a percentage of the

total memory allocated to storing in-memory map outputs, as defined by

mapreduce.reduce.shuffle.input.buffer.percent.

mapreduce.reduce.shuffle.input.buffer.percent 0.70

The percentage of memory to be allocated from the maximum heap size to storing map outputs during

the shuffle.

mapreduce.reduce.input.buffer.percent 0.0

The percentage of memory, relative to the maximum heap size, to retain map outputs during the reduce.

When the shuffle is concluded, any remaining map outputs in memory must consume less than this

threshold before the reduce can begin.

mapreduce.reduce.shuffle.memory.limit.percent 0.25

Expert: Maximum percentage of the in-memory limit that a single shuffle can consume. Range of valid

values is [0.0, 1.0]. If the value is 0.0, map outputs are shuffled directly to disk.
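The three shuffle percentages above build on one another, so a worked calculation may help. The sketch below is only an illustration: the function name is hypothetical, and it assumes the "in-memory limit" referred to by mapreduce.reduce.shuffle.memory.limit.percent is the buffer sized by mapreduce.reduce.shuffle.input.buffer.percent.

```python
def shuffle_memory_budget(max_heap_bytes: int,
                          input_buffer_percent: float = 0.70,
                          merge_percent: float = 0.66,
                          memory_limit_percent: float = 0.25) -> dict:
    """Derive approximate shuffle memory figures from the reducer's maximum heap size."""
    # Share of the heap set aside for holding map outputs during the shuffle.
    input_buffer = int(max_heap_bytes * input_buffer_percent)
    return {
        "input_buffer_bytes": input_buffer,
        # Usage level at which an in-memory merge would be initiated.
        "merge_trigger_bytes": int(input_buffer * merge_percent),
        # Assumed ceiling for a single map output held in memory.
        "single_shuffle_limit_bytes": int(input_buffer * memory_limit_percent),
    }


if __name__ == "__main__":
    # Example: a reducer with a 1 GiB maximum heap and the default percentages.
    for name, value in shuffle_memory_budget(1024 ** 3).items():
        print(name, value)
```

For a 1 GiB heap with the defaults, roughly 717 MiB is available for map outputs, a merge starts at roughly 473 MiB of usage, and a single shuffle is capped at roughly 179 MiB.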

mapreduce.shuffle.ssl.enabled false Whether to use SSL for the Shuffle HTTP endpoints.

mapreduce.shuffle.ssl.file.buffer.size 65536 Buffer size for reading spills from file when using SSL.

mapreduce.shuffle.max.connections 0

Max allowed connections for the shuffle. Set to 0 (zero) to indicate no limit on the number of

connections.

mapreduce.shuffle.max.threads 0

Max allowed threads for serving shuffle connections. Set to zero to indicate the default of 2 times the

number of available processors (as reported by Runtime.availableProcessors()). Netty is used to serve

requests, so a thread is not needed for each connection.
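The zero-means-default rule for mapreduce.shuffle.max.threads can be written out as a small sketch. The function name is hypothetical, and Python's os.cpu_count() stands in for Java's Runtime.availableProcessors(), so treat this as an illustration of the rule rather than the shuffle handler's own logic.

```python
import os
from typing import Optional


def effective_shuffle_threads(configured: int, processors: Optional[int] = None) -> int:
    """Return the thread count implied by the setting: 0 means 2 x available processors."""
    if processors is None:
        # os.cpu_count() may return None on unusual platforms; fall back to 1.
        processors = os.cpu_count() or 1
    return 2 * processors if configured == 0 else configured


if __name__ == "__main__":
    print(effective_shuffle_threads(0))    # depends on the machine
    print(effective_shuffle_threads(12))   # an explicit value is used as-is
```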

mapreduce.shuffle.transferTo.allowed

This option enables or disables use of the NIO transferTo method in the shuffle phase. NIO transferTo does not perform well on Windows in the shuffle phase. Thus, with this configuration property it is possible to disable it, in which case a custom transfer method will be used. Recommended value is false when

running Hadoop on Windows. For Linux, it is recommended to set it to true. If nothing is set then the

default value is false for Windows, and true for Linux.

mapreduce.shuffle.transfer.buffer.size 131072

This property is used only if mapreduce.shuffle.transferTo.allowed is set to false. In that case, this

property defines the size of the buffer used in the buffer copy code for the shuffle phase. The size of this

buffer determines the size of the IO requests.

mapreduce.reduce.markreset.buffer.percent 0.0

The percentage of memory, relative to the maximum heap size, to be used for caching values when

using the mark-reset functionality.

mapreduce.map.speculative true If true, then multiple instances of some map tasks may be executed in parallel.

mapreduce.reduce.speculative true If true, then multiple instances of some reduce tasks may be executed in parallel.

mapreduce.job.speculative.speculative-cap-running-tasks 0.1 The max percent (0-1) of running tasks that can be speculatively re-executed at any time.

mapreduce.job.speculative.speculative-cap-total-tasks 0.01 The max percent (0-1) of all tasks that can be speculatively re-executed at any time.

mapreduce.job.speculative.minimum-allowed-tasks 10 The minimum allowed tasks that can be speculatively re-executed at any time.
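The descriptions above do not say how the two caps and the minimum combine. One plausible reading, shown here purely as an assumption, is that the number of tasks allowed to run speculatively is the largest of the three figures. The function name and the sample job sizes are hypothetical.

```python
def allowed_speculative_tasks(total_tasks: int,
                              running_tasks: int,
                              cap_running: float = 0.1,
                              cap_total: float = 0.01,
                              minimum_allowed: int = 10) -> int:
    """Assumed combination rule: take the largest of the minimum and the two caps."""
    return int(max(minimum_allowed,
                   cap_total * total_tasks,
                   cap_running * running_tasks))


if __name__ == "__main__":
    # Large job: the running-task cap dominates under this assumption.
    print(allowed_speculative_tasks(total_tasks=2000, running_tasks=300))
    # Small job: the minimum dominates.
    print(allowed_speculative_tasks(total_tasks=50, running_tasks=5))
```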

mapreduce.job.speculative.retry-after-no-speculate 1000 The waiting time (ms) before the next round of speculation if no task was speculated in this round.

mapreduce.job.speculative.retry-after-speculate 15000 The waiting time (ms) before the next round of speculation if tasks were speculated in this round.

mapreduce.job.map.output.collector.class org.apache.hadoop.mapred.MapTask$MapOutputBuffer

The MapOutputCollector implementation(s) to use. This may be a comma-separated list of class names,

in which case the map task will try to initialize each of the collectors in turn. The first to successfully

initialize will be used.

mapreduce.job.speculative.slowtaskthreshold 1.0

The number of standard deviations by which a task's average progress rate must be lower than the average of all running tasks' for the task to be considered too slow.

mapreduce.job.ubertask.enable false Whether to enable the small-jobs "ubertask" optimization, which runs "sufficiently small" jobs

sequentially within a single JVM. "Small" is defined by the following maxmaps, maxreduces, and

maxbytes settings. Note that configurations for application masters also affect the "Small" definition -

yarn.app.mapreduce.am.resource.mb must be larger than both mapreduce.map.memory.mb and

mapreduce.reduce.memory.mb, and yarn.app.mapreduce.am.resource.cpu-vcores must be larger than


2018/3/15file:///F:/Hadoop/hadoop-2.9.0/share/doc/hadoop/hadoop-mapreduce-client/hadoop-mapreduce-client-core/mapred-default.xml
