438 IEEE TRANSACTIONS ON IMAGE PROCESSING, VOL. 28, NO. 1, JANUARY 2019

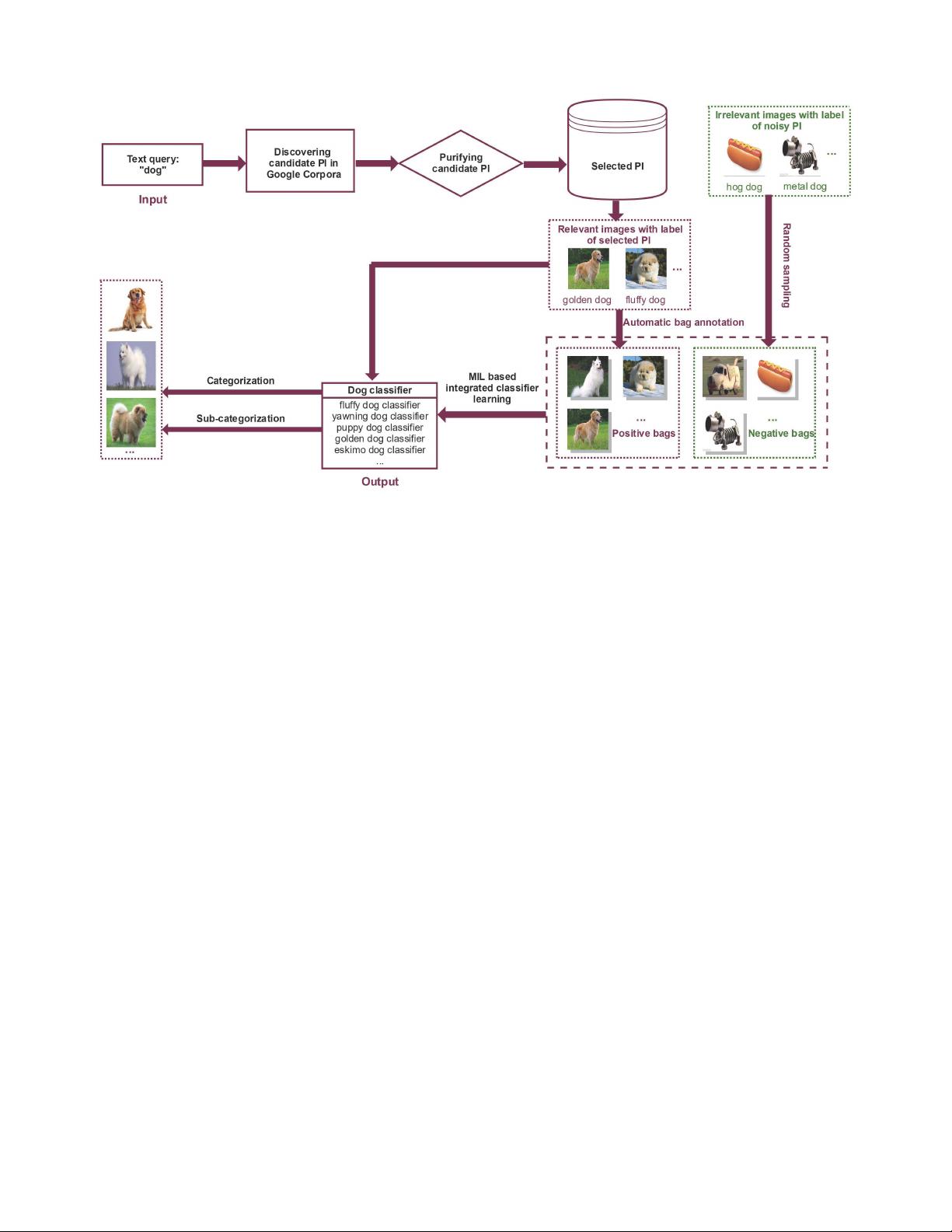

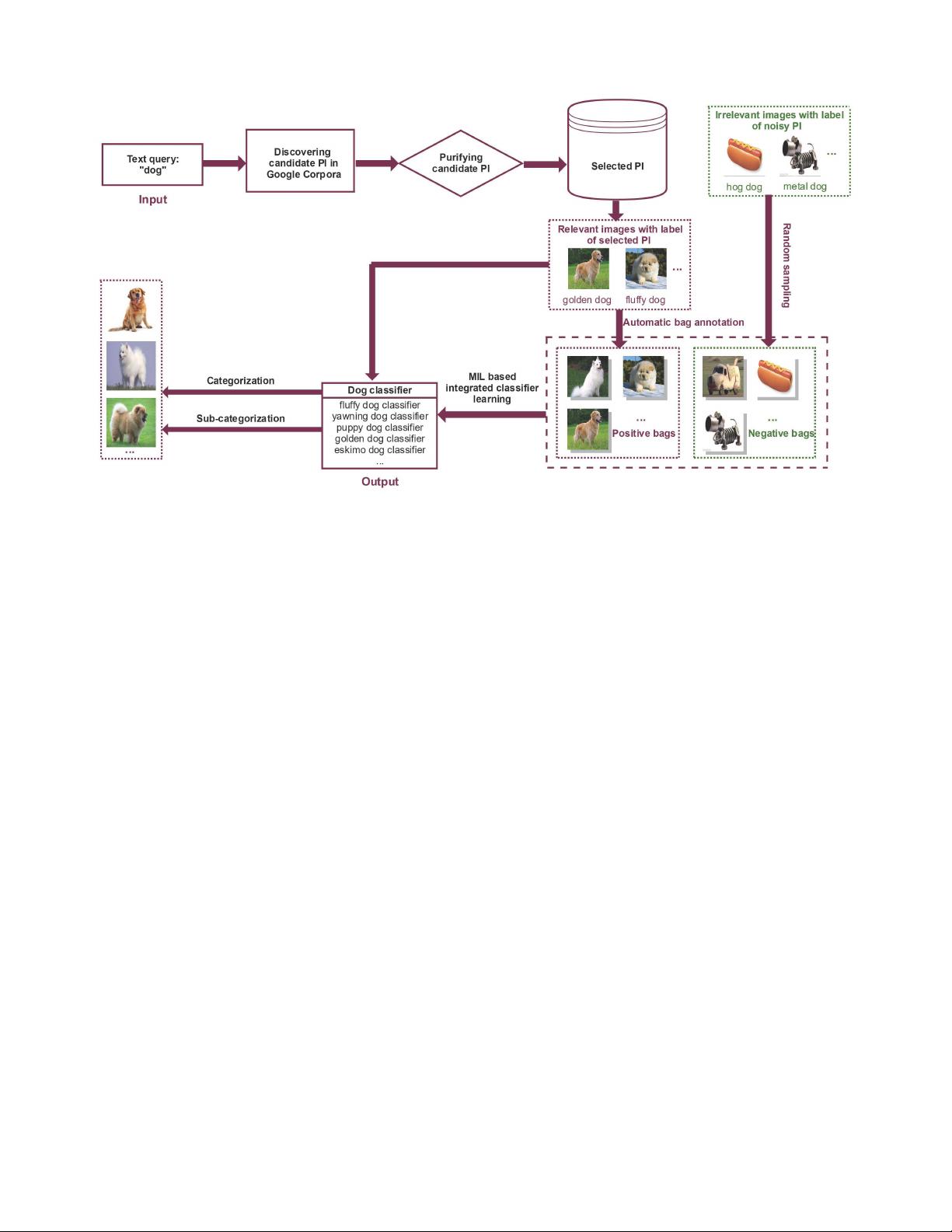

Fig. 2. System overview. Our proposed approach consists of three major steps: discovering PI, purifying PI, and learning the integrated classifier.

proposed in [12]. However, both [7] and [12] use only visual features. In our work, we associate the “bags” with textual privileged information and propose a new MIL model to select images and learn the optimal classifiers.

Our work is primarily inspired by [15], which recently proposed a visual concept learning system that achieved impressive accuracy for object detection. It discovers, from the Google Books Corpora, an exhaustive vocabulary explaining all the appearance variations of a concept, and trains full-fledged detection models for it. Our work differs from [15] in two aspects. First, we adopt a different approach to filtering out noisy privileged information. Second, we leverage a different strategy to purify the collected web images: whereas [15] prunes noisy images with an iterative mechanism, our method formulates noisy image removal as an instance-level multi-instance learning problem. In this way, images from different distributions can be retained while noise is filtered out.

III. FRAMEWORK AND METHODS

We seek to automate the process of learning robust classifiers directly from web data without human intervention. As shown in Fig. 2, our proposed approach consists of three major steps: discovering privileged information, purifying privileged information, and learning the integrated classifier. We describe each step in detail below.

For ease of presentation, we denote each instance as $x_i$ with its label $y_i$, and each bag as $G_m$ with its label $Y_m$. A matrix/vector is denoted by an uppercase/lowercase letter in boldface, and the transpose of a vector or matrix is denoted by a superscript. Moreover, we denote the indicator function as $\lambda(a \neq b)$, where $\lambda(a \neq b) = 0$ if $a = b$, and $\lambda(a \neq b) = 1$ otherwise.
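To make the notation concrete, the following minimal sketch mirrors it in plain Python data structures. The `Bag` class and the `disagreements` helper are hypothetical illustrations, not part of the method; they only show how a bag $G_m$, its instances $x_i$ with labels $y_i$, the bag label $Y_m$, and the indicator $\lambda(a \neq b)$ relate to one another.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Bag:
    """A bag G_m: instance feature vectors x_i, their labels y_i, and the bag label Y_m."""
    instances: List[List[float]]  # each x_i as a plain feature vector
    instance_labels: List[int]    # y_i, one per instance
    label: int                    # Y_m


def indicator_neq(a, b):
    """The indicator lambda(a != b): 0 if a == b, 1 otherwise."""
    return 0 if a == b else 1


def disagreements(bag):
    """Hypothetical helper: number of instances whose label differs from the
    bag label, i.e. the sum over i in G_m of lambda(y_i != Y_m)."""
    return sum(indicator_neq(y, bag.label) for y in bag.instance_labels)


bag = Bag([[0.1, 0.2], [0.3, 0.4], [0.5, 0.6]], [1, 0, 1], 1)
print(disagreements(bag))  # one instance (label 0) disagrees with the bag label 1
```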

A. Discovering Privileged Information

Inspired by recent works [15], [21], we use the Google Books Corpora [27] to discover an exhaustive vocabulary explaining all the appearance variations of a given category. Compared with the manually labeled WordNet [44] and ConceptNet [49], this vocabulary is not only much richer but also more general and exhaustive.

Following [27] (see Section 4.3 of [27]), we treat the dependency gram data with part-of-speech (POS) tags as the privileged information. For example, given a category (e.g., “horse”) and its corresponding POS tag (e.g., ‘jumping, VERB’), we find all of its POS-annotated occurrences within the dependency gram data. Of all the n-gram dependencies retrieved for the given category, we keep those whose modifiers are tagged NOUN, VERB, ADJECTIVE, or ADVERB as the discovered privileged information.
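The selection rule above can be sketched as a simple filter. This is a minimal illustration under stated assumptions: the `Dependency` record is a hypothetical, simplified stand-in for one POS-annotated dependency n-gram (the real corpus files use their own layout and may abbreviate the tags, e.g. ADJ/ADV), and aggregating and ranking by frequency is an illustrative choice rather than a step described in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dependency:
    """Hypothetical, simplified record for one POS-annotated dependency n-gram."""
    head: str          # the category word, e.g. "horse"
    modifier: str      # the modifying word, e.g. "jumping"
    modifier_pos: str  # POS tag of the modifier, e.g. "VERB"
    count: int         # corpus frequency of this dependency


# Modifier POS tags kept as privileged information (labels as written in the text).
KEPT_POS = {"NOUN", "VERB", "ADJECTIVE", "ADVERB"}


def discover_pi(category, dependencies):
    """Return (modifier, POS) pairs whose head is `category` and whose modifier
    carries one of the kept POS tags, most frequent first."""
    counts = {}
    for dep in dependencies:
        if dep.head == category and dep.modifier_pos in KEPT_POS:
            key = (dep.modifier, dep.modifier_pos)
            counts[key] = counts.get(key, 0) + dep.count
    # Sort by descending frequency; ties are broken alphabetically for determinism.
    return sorted(counts, key=lambda k: (-counts[k], k))


deps = [
    Dependency("horse", "jumping", "VERB", 120),
    Dependency("horse", "the", "DET", 9000),     # dropped: DET is not a kept tag
    Dependency("horse", "racing", "NOUN", 300),
    Dependency("dog", "jumping", "VERB", 50),    # dropped: different category
    Dependency("horse", "jumping", "VERB", 30),  # merged with the first record
]
print(discover_pi("horse", deps))
```

Here the determiner “the” is discarded despite its high frequency, since only content-word modifiers are kept as candidate privileged information.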

B. Purifying Privileged Information

Not all of the discovered privileged information is useful, and some of it may be noise. In this second step of purifying PI, “noise” refers to textual PI from untagged corpora (e.g., the privileged information shown in bold in Table I). Using noisy privileged information to enhance classifier learning hurts both accuracy and robustness. We therefore need to separate useful privileged information from noise before learning the classifiers.

Our basic idea is to filter out noisy privileged information from the perspective of relevance. Specifically, we represent the semantic distances of all discovered privileged information as a graph whose center $y$ is the given category (e.g., “dog”). Each other piece of discovered privileged information, denoted $x$, has a score $S_{xy}$ that corresponds to the Normalized Google Distance (NGD) [2]