[40], [41], [42], or learn better relative distances of triplet training samples [43], [44], [45], [46], or learn better similarity metrics of any pairs [23], [29], [47], [48]. To cope with the data sparsity problem, Xiao et al. [49] proposed a single deep network built upon the inception module [50], combined multiple re-id datasets for training, and introduced a domain guided dropout strategy to adapt the network to each individual dataset. More recently, variants of the Siamese network have been studied for person re-id [51], [52]. Pairwise and triplet comparison objectives were used to combine several sub-networks for person re-id in [27]. Zhong et al. [29] proposed a method that performs online hard sample mining with the triplet loss for person re-identification. Similarly, Chen et al. [43] improved the triplet loss and proposed a deep quadruplet network. Following the success of generative adversarial networks (GANs) in image generation, Wei et al. [53] and Zhong et al. [26] applied GANs to the re-id task to reduce the domain gap and to compensate for the lack of labeled data in new domains. Several of these existing approaches are closely related to our model and are worth mentioning and differentiating from it.

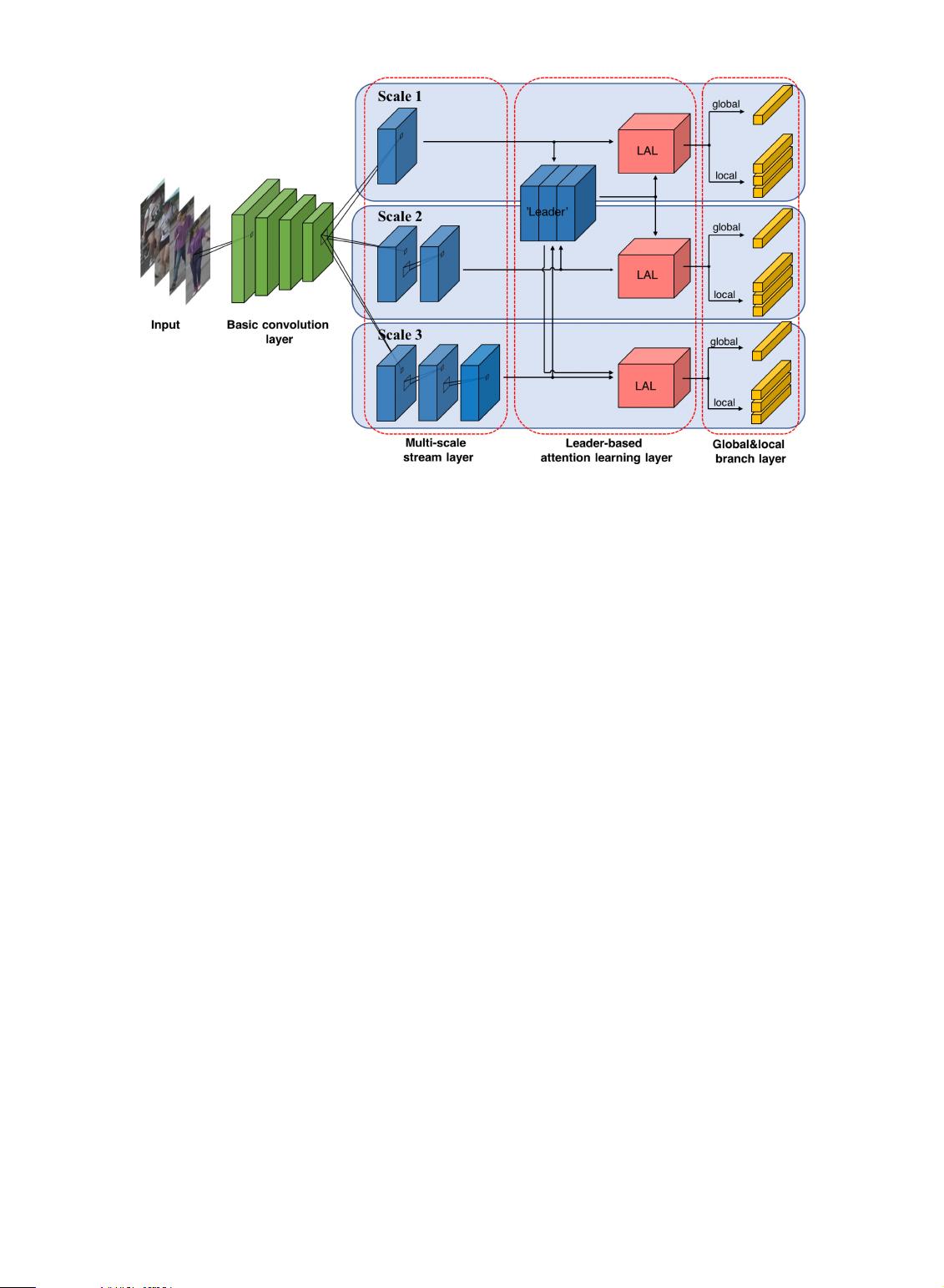

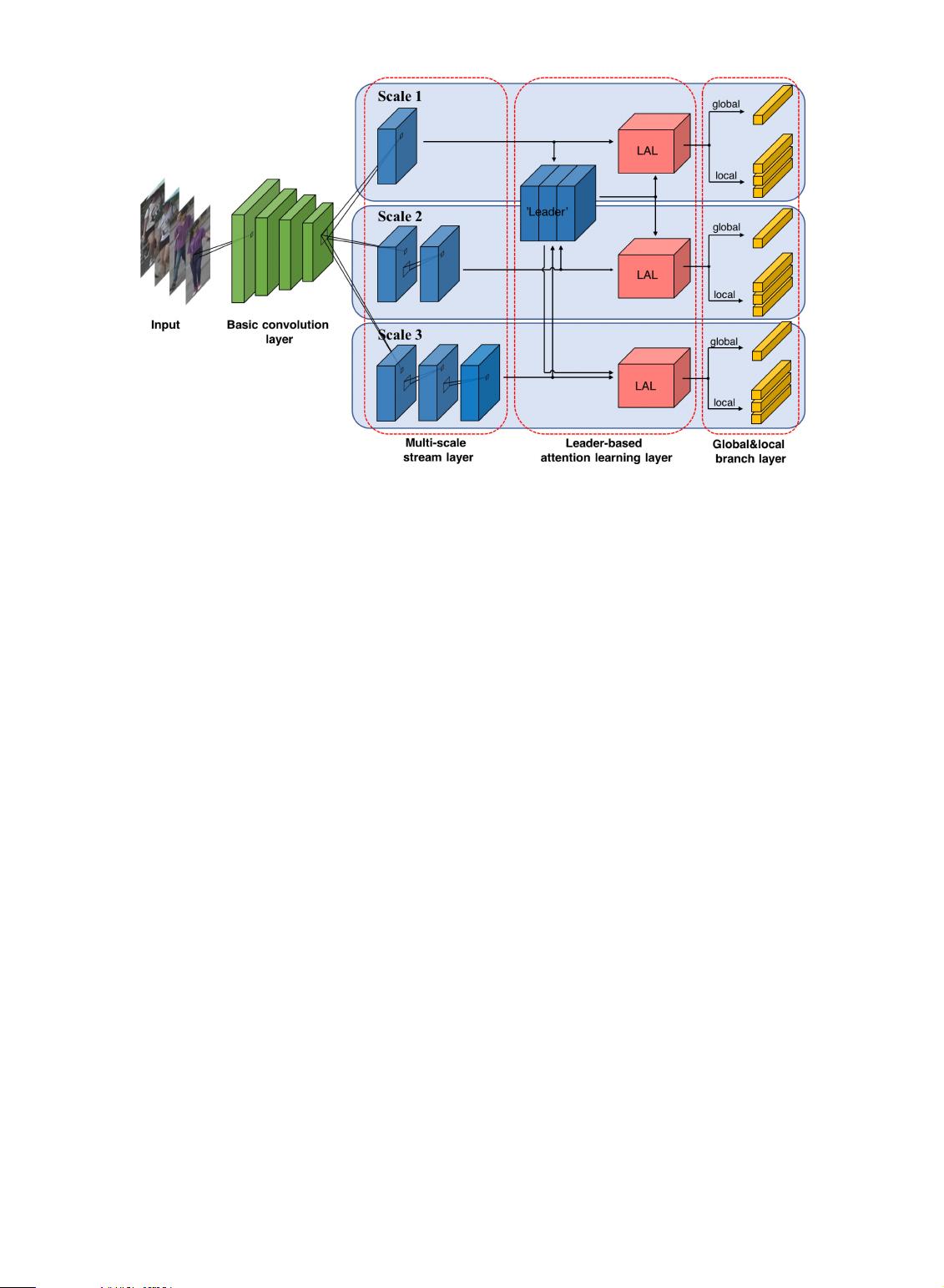

1) Our MuDeep generalizes convolutional layers with multi-scale representation learning. In particular, we propose a multi-scale stream layer and a leader-based attention learning layer for multi-scale learning, which is clearly different from the ideas of combining multiple sub-networks [27] or channels [30] with the pairwise or triplet loss.

2) He et al. [54] proposed a multi-branch deep network to obtain global and local feature representations with multiple granularities. In contrast, our work applies a multi-branch architecture not only to learn the feature representations of person images at multiple scales, but also to share the weights of previous layers so as to exploit the complementarity between multi-scale feature representations.

3) Both Shen et al. [55] and Guo et al. [51] improved the accuracy of person re-id by using a multi-level similarity computed from multi-level features. Although multi-level features are related to analyzing person images at multiple scales, our MuDeep is specifically designed for multi-scale feature learning with multiple branches. Importantly, features are extracted using convolutional filters of different receptive fields at the same abstraction level, i.e., in the same convolution block/layer rather than across different layers. Moreover, in order to learn scale-specific feature representations, the weights of the multiple branches are not shared between any two of them in our network.
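To make the distinction in the points above concrete, the following is a minimal NumPy sketch of one multi-branch block: the same input feature map is filtered in parallel by branches with different receptive fields, and each branch holds its own unshared weights. It is an illustration only and not the MuDeep implementation; the kernel sizes (1, 3, 5), the channel counts, the ReLU, and the names `conv2d_same` and `MultiScaleBlock` are assumptions introduced for this example.

```python
import numpy as np


def conv2d_same(x, w):
    """Naive stride-1, zero-padded 2D cross-correlation.

    x: feature map of shape (C_in, H, W).
    w: filters of shape (C_out, C_in, k, k) with odd k.
    Returns an array of shape (C_out, H, W).
    """
    c_out, _, k, _ = w.shape
    pad = k // 2
    xp = np.pad(x, ((0, 0), (pad, pad), (pad, pad)))  # zero padding
    _, h, wd = x.shape
    out = np.zeros((c_out, h, wd))
    for i in range(h):
        for j in range(wd):
            patch = xp[:, i:i + k, j:j + k]  # (C_in, k, k)
            # Contract (C_in, k, k) of every filter with the patch.
            out[:, i, j] = np.tensordot(w, patch, axes=3)
    return out


class MultiScaleBlock:
    """Hypothetical block: parallel branches at ONE abstraction level.

    Each branch sees the same input but uses a different receptive
    field and its own weight tensor (no sharing between branches).
    """

    def __init__(self, c_in, c_out, kernel_sizes=(1, 3, 5), seed=0):
        rng = np.random.default_rng(seed)
        # One independent weight tensor per scale (assumed init scheme).
        self.weights = [
            0.1 * rng.standard_normal((c_out, c_in, k, k))
            for k in kernel_sizes
        ]

    def __call__(self, x):
        # Convolution followed by ReLU, one output per scale.
        return [np.maximum(conv2d_same(x, w), 0.0) for w in self.weights]


if __name__ == "__main__":
    x = np.ones((2, 6, 4))            # toy input: 2 channels, 6 x 4
    block = MultiScaleBlock(c_in=2, c_out=3)
    for k, f in zip((1, 3, 5), block(x)):
        print(k, f.shape)             # each branch keeps the spatial size
```

Because every branch reads the same input tensor, the scale-specific outputs sit at the same depth of the network. This is the contrast with multi-level approaches, which would instead collect features from different layers.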

2.2 Multi-Scale re-id

The idea of multi-scale learning for re-id was first exploited in [56]. However, the definition of scale is different: there, scale refers to different input resolutions, whereas in our definition it refers to applying multi-scale filters. Despite the similar terminology, the two approaches tackle very different problems. Among previous multi-scale re-id methods, Chen et al. [57] adopted m scale-specific networks to learn deep pyramidal features from images at different scales; in contrast, our MuDeep has a much simpler architecture and uses only one network with multiple branches to extract m scale-specific representations of one person image. Wang et al. [58] extracted multi-resolution embeddings at different stages of one network and fused them with a simple weighted sum to address person re-identification under resource constraints. Our MuDeep concentrates on

Fig. 2. Overview of the MuDeep architecture. The multi-scale stream layer first analyzes feature maps at multiple scales. The leader-based attention learning layer then follows to automatically discover and emphasize important spatial locations. Finally, the global and local branch layer extracts discriminative features from global and local parts. Note that the parameters of each scale are not shared. 'LAL' denotes the Leader-based Attention Learning layer, with further details shown in Fig. 4.
