methods of network pruning [12, 13, 14, 15, 16, 17] and connectivity learning [18, 19]. A substantial amount of work has also been dedicated to changing the connectivity structure of the internal convolutional blocks, such as in ShuffleNet [20], or introducing sparsity [21] and others [22].

Recently, [23, 24, 25, 26] opened up a new direction of bringing optimization methods, including genetic algorithms and reinforcement learning, to architectural search. However, one drawback is that the resulting networks end up very complex. In this paper, we pursue the goal of developing better intuition about how neural networks operate and use that to guide the simplest possible network design. Our approach should be seen as complementary to the one described in [23] and related work. In this vein, our approach is similar to those taken by [20, 22] and allows us to further improve performance while providing a glimpse into the network's internal operation. Our network design is based on MobileNetV1 [27]. It retains its simplicity and does not require any special operators while significantly improving its accuracy, achieving state of the art on multiple image classification and detection tasks for mobile applications.

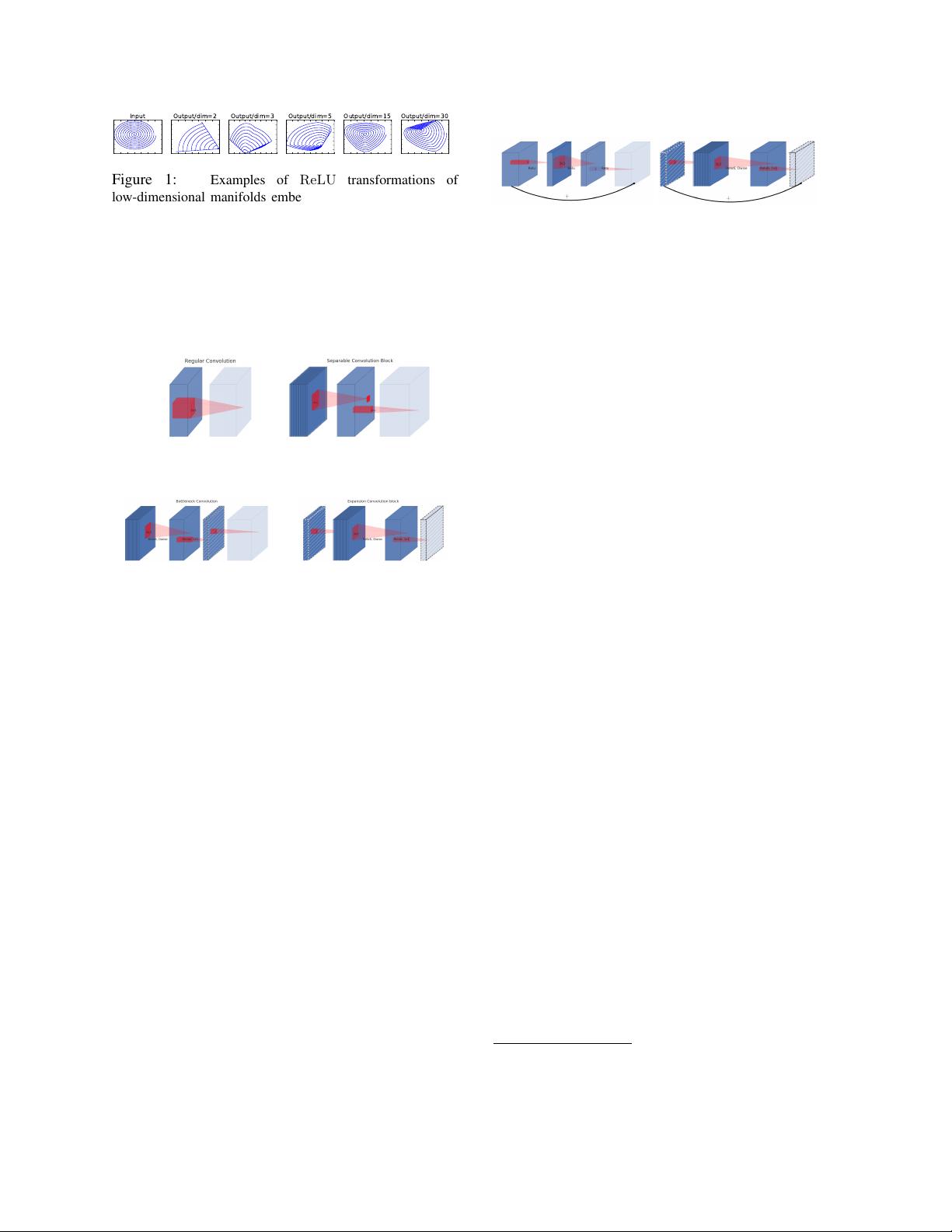

3. Preliminaries, discussion and intuition

3.1. Depthwise Separable Convolutions

Depthwise separable convolutions are a key building block for many efficient neural network architectures [27, 28, 20], and we use them in the present work as well. The basic idea is to replace a full convolutional operator with a factorized version that splits convolution into two separate layers. The first layer, called a depthwise convolution, performs lightweight filtering by applying a single convolutional filter per input channel. The second layer is a 1 × 1 convolution, called a pointwise convolution, which is responsible for building new features by computing linear combinations of the input channels.
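The two-layer factorization described above can be sketched in NumPy. This is a minimal illustration, not the paper's implementation: it assumes stride 1, zero "same" padding, an odd kernel size, no bias, and cross-correlation (the convention used by most deep learning frameworks). The function names and tensor layouts are hypothetical choices made for this example.

```python
import numpy as np


def depthwise_conv(x, dw_kernels):
    """Apply one k x k filter per input channel (stride 1, zero 'same' padding).

    x:          (h, w, d_in) input tensor
    dw_kernels: (k, k, d_in) one spatial filter per channel, k odd
    """
    h, w, _ = x.shape
    k = dw_kernels.shape[0]
    pad = k // 2
    xp = np.pad(x, ((pad, pad), (pad, pad), (0, 0)))
    out = np.zeros_like(x, dtype=float)
    for dy in range(k):
        for dx in range(k):
            # Channels are filtered independently: no mixing across channels.
            out += xp[dy:dy + h, dx:dx + w, :] * dw_kernels[dy, dx, :]
    return out


def pointwise_conv(x, pw_weights):
    """1 x 1 convolution: a linear combination of channels at every pixel.

    pw_weights: (d_in, d_out)
    """
    return x @ pw_weights


def depthwise_separable_conv(x, dw_kernels, pw_weights):
    """Depthwise filtering followed by pointwise channel mixing."""
    return pointwise_conv(depthwise_conv(x, dw_kernels), pw_weights)
```

For an input of shape `(h, w, d_in)`, the result has shape `(h, w, d_out)`, the same as a standard convolution with `d_out` filters would produce under these assumptions.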

Standard convolution takes an $h_i \times w_i \times d_i$ input tensor $L_i$, and applies convolutional kernel $K \in \mathbb{R}^{k \times k \times d_i \times d_j}$ to produce an $h_i \times w_i \times d_j$ output tensor $L_j$. Standard convolutional layers have the computational cost of $h_i \cdot w_i \cdot d_i \cdot d_j \cdot k \cdot k$.

Depthwise separable convolutions are a drop-in replacement for standard convolutional layers. Empirically they work almost as well as regular convolutions but only cost:

$$h_i \cdot w_i \cdot d_i \, (k^2 + d_j) \tag{1}$$

which is the sum of the depthwise and 1 × 1 pointwise convolutions. Effectively, depthwise separable convolution reduces computation compared to traditional layers by almost a factor of $k^2$ ¹. MobileNetV2 uses $k = 3$ (3 × 3 depthwise separable convolutions), so the computational cost is 8 to 9 times smaller than that of standard convolutions at only a small reduction in accuracy [27].
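The cost comparison above can be checked numerically. The sketch below simply evaluates the two cost expressions from the text; the layer sizes are hypothetical values chosen for illustration and are not taken from the paper.

```python
def standard_conv_cost(h, w, d_in, d_out, k):
    """Cost of a standard k x k convolution: h * w * d_in * d_out * k * k."""
    return h * w * d_in * d_out * k * k


def separable_conv_cost(h, w, d_in, d_out, k):
    """Cost of depthwise (k * k per channel) plus 1 x 1 pointwise (d_out per channel)."""
    return h * w * d_in * (k * k + d_out)


def reduction_factor(d_out, k):
    """Ratio of the two costs; h, w and d_in cancel out."""
    return (k * k * d_out) / (k * k + d_out)


# Hypothetical layer sizes, chosen only for illustration.
h, w, d_in, k = 56, 56, 64, 3
for d_out in (64, 128, 512):
    std = standard_conv_cost(h, w, d_in, d_out, k)
    sep = separable_conv_cost(h, w, d_in, d_out, k)
    print(f"d_out={d_out}: standard/separable = {std / sep:.2f}")
```

The ratio depends only on $k$ and $d_j$, and it approaches $k^2 = 9$ from below as $d_j$ grows.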

3.2. Linear Bottlenecks

Consider a deep neural network consisting of $n$ layers $L_i$, each of which has an activation tensor of dimensions $h_i \times w_i \times d_i$. Throughout this section we will be discussing the basic properties of these activation tensors, which we will treat as containers of $h_i \times w_i$ “pixels” with $d_i$ dimensions. Informally, for an input set of real images, we say that the set of layer activations (for any layer $L_i$) forms a “manifold of interest”. It has long been assumed that manifolds of interest in neural networks could be embedded in low-dimensional subspaces. In other words, when we look at all individual $d$-channel pixels of a deep convolutional layer, the information encoded in those values actually lies in some manifold, which in turn is embeddable into a low-dimensional subspace ².

At first glance, such a fact could then be captured and exploited by simply reducing the dimensionality of a layer, thus reducing the dimensionality of the operating space. This has been successfully exploited by MobileNetV1 [27] to effectively trade off between computation and accuracy via a width multiplier parameter, and has been incorporated into efficient model designs of other networks as well [20]. Following that intuition, the width multiplier approach allows one to reduce the dimensionality of the activation space until the manifold of interest spans this entire space. However, this intuition breaks down when we recall that deep convolutional neural networks actually have non-linear per-coordinate transformations, such as ReLU. For example, ReLU applied to a line in 1D space produces a “ray”, whereas in $\mathbb{R}^n$ space it generally results in a piecewise linear curve with $n$ joints.
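The ReLU example above can be illustrated with a short NumPy sketch. It parameterizes a line in $\mathbb{R}^n$ as $a + t\,b$ (the names `a`, `b`, and `t`, and the helper functions, are assumptions made for this example) and finds the parameter values at which a coordinate crosses zero. These are the only places where the ReLU image of the line can bend, so there are at most $n$ joints.

```python
import numpy as np


def relu(x):
    return np.maximum(x, 0.0)


def line_point(a, b, t):
    """Point a + t * b on a line in R^n."""
    return np.asarray(a, dtype=float) + t * np.asarray(b, dtype=float)


def relu_line_joints(a, b):
    """Sorted t values where some coordinate of a + t * b crosses zero.

    Each coordinate with b_i != 0 contributes one candidate joint,
    so there are at most n of them.
    """
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    moving = b != 0
    return np.sort(-a[moving] / b[moving])


def is_linear_on(a, b, t0, t1):
    """Midpoint check: is ReLU(a + t * b) affine in t on [t0, t1]?"""
    mid = relu(line_point(a, b, 0.5 * (t0 + t1)))
    avg = 0.5 * (relu(line_point(a, b, t0)) + relu(line_point(a, b, t1)))
    return bool(np.allclose(mid, avg))
```

For instance, with `a = [1, -2, 0.5]` and `b = [1, 1, -1]` in $\mathbb{R}^3$, the curve is affine on any interval between two consecutive joints but not on an interval that contains a joint in its interior.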

It is easy to see that, in general, if the result of a layer transformation $\mathrm{ReLU}(Bx)$ has a non-zero volume $S$, the points mapped to the interior of $S$ are obtained via a linear transformation $B$ of the input, thus indicating that the part of the input space corresponding to the full-dimensional output is limited to a linear transformation. In other words, deep networks only have the power of a linear classifier on the non-zero volume part of the

¹ More precisely, by a factor $k^2 d_j / (k^2 + d_j)$.

² Note that the dimensionality of the manifold differs from the dimensionality of a subspace that could be embedded via a linear transformation.
