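This paper is one of the better recent surveys on facial expression recognition. It gives a comprehensive summary of the field, covering FER-related datasets, the strengths and weaknesses of deep-neural-network FER algorithms for static images and dynamic image sequences (videos), and the opportunities and challenges that FER faces.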

Deep Facial Expression Recognition: A Survey

Shan Li and Weihong Deng∗, Member, IEEE

Abstract—With the transition of facial expression recognition (FER) from laboratory-controlled to challenging in-the-wild conditions

and the recent success of deep learning techniques in various fields, deep neural networks have increasingly been leveraged to learn

discriminative representations for automatic FER. Recent deep FER systems generally focus on two important issues: overfitting

caused by a lack of sufficient training data and expression-unrelated variations, such as illumination, head pose and identity bias. In this

paper, we provide a comprehensive survey on deep FER, including datasets and algorithms that provide insights into these intrinsic

problems. First, we introduce the available datasets that are widely used in the literature and provide accepted data selection and

evaluation principles for these datasets. We then describe the standard pipeline of a deep FER system with the related background

knowledge and suggestions of applicable implementations for each stage. For the state of the art in deep FER, we review existing

novel deep neural networks and related training strategies that are designed for FER based on both static images and dynamic image

sequences, and discuss their advantages and limitations. Competitive performances on widely used benchmarks are also summarized

in this section. We then extend our survey to additional related issues and application scenarios. Finally, we review the remaining

challenges and corresponding opportunities in this field as well as future directions for the design of robust deep FER systems.

Index Terms—Facial Expressions Recognition, Facial expression datasets, Affect, Deep Learning, Survey.

1 INTRODUCTION

FACIAL expression is one of the most powerful, natural and

universal signals for human beings to convey their emotional

states and intentions [1], [2]. Numerous studies have been con-

ducted on automatic facial expression analysis because of its

practical importance in sociable robotics, medical treatment, driver

fatigue surveillance, and many other human-computer interaction

systems. In the field of computer vision and machine learning,

various facial expression recognition (FER) systems have been

explored to encode expression information from facial represen-

tations. As early as the twentieth century, Ekman and Friesen [3]

defined six basic emotions based on cross-culture study [4], which

indicated that humans perceive certain basic emotions in the same

way regardless of culture. These prototypical facial expressions

are anger, disgust, fear, happiness, sadness, and surprise. Contempt

was subsequently added as one of the basic emotions [5]. Recently,

advanced research on neuroscience and psychology argued that the

model of six basic emotions is culture-specific and not universal

[6].

Although the affect model based on basic emotions is limited

in the ability to represent the complexity and subtlety of our

daily affective displays [7], [8], [9], and other emotion description

models, such as the Facial Action Coding System (FACS) [10] and

the continuous model using affect dimensions [11], are considered

to represent a wider range of emotions, the categorical model

that describes emotions in terms of discrete basic emotions is

still the most popular perspective for FER, due to its pioneering

investigations along with the direct and intuitive definition of facial

expressions. In this survey, we limit our discussion to

FER based on the categorical model.

FER systems can be divided into two main categories according to the feature representations: static image FER and dynamic sequence FER. In static-based methods [12], [13], [14], the feature representation is encoded with only spatial information from the current single image, whereas dynamic-based methods [15], [16], [17] consider the temporal relation among contiguous frames in the input facial expression sequence. Based on these two vision-based methods, other modalities, such as audio and physiological channels, have also been used in multimodal systems [18] to assist the recognition of expression.

• The authors are with the Pattern Recognition and Intelligent System Laboratory, School of Information and Communication Engineering, Beijing University of Posts and Telecommunications, Beijing, 100876, China. E-mail: {ls1995, whdeng}@bupt.edu.cn.

The majority of the traditional methods have used handcrafted

features or shallow learning (e.g., local binary patterns (LBP) [12],

LBP on three orthogonal planes (LBP-TOP) [15], non-negative

matrix factorization (NMF) [19] and sparse learning [20]) for FER.

However, since 2013, emotion recognition competitions such as

FER2013 [21] and Emotion Recognition in the Wild (EmotiW)

[22], [23], [24] have collected relatively sufficient training data

from challenging real-world scenarios, which implicitly promote

the transition of FER from lab-controlled to in-the-wild settings.

Meanwhile, due to the dramatically increased chip processing

abilities (e.g., GPU units) and well-designed network architecture,

studies in various fields have begun to transfer to deep learning

methods, which have achieved the state-of-the-art recognition ac-

curacy and exceeded previous results by a large margin (e.g., [25],

[26], [27], [28]). Likewise, given more effective training data

of facial expression, deep learning techniques have increasingly

been implemented to handle the challenging factors for emotion

recognition in the wild. Figure 1 illustrates this evolution of FER

in terms of algorithms and datasets.

Exhaustive surveys on automatic expression analysis have

been published in recent years [7], [8], [29], [30]. These surveys

have established a set of standard algorithmic pipelines for FER.

However, they focus on traditional methods, and deep learning

has rarely been reviewed. Very recently, FER based on deep

learning has been surveyed in [31], which is a brief review without

introductions on FER datasets and technical details on deep FER.

Therefore, in this paper, we conduct a systematic survey of deep learning for FER tasks based on both static images and videos (image sequences). We aim to give a newcomer to this field an overview of the systematic framework and prime skills for deep FER.

arXiv:1804.08348v2 [cs.CV] 22 Oct 2018

[Figure 1: timeline from 2007 to 2017 with an Algorithm track and a Dataset track. Algorithms: Shan et al. [12] (LBP, AdaBoost); Zhao et al. [15] (LBP-TOP, SVM); Zhi et al. [19] (NMF); Zhong et al. [20] (Sparse learning); Tang [130] (CNN, winner of FER2013); Kahou et al. [57] (CNN, DBN, DAE, winner of EmotiW 2013); Fan et al. [108] (CNN-LSTM, C3D, winner of EmotiW 2016); HoloNet, PPDN, IACNN, FaceNet2ExpNet; LP loss, tuplet cluster loss, Island loss. Datasets: CK+, MMI, FER2013, EmotiW, EmotioNet, RAF-DB, AffectNet.]

Fig. 1. The evolution of facial expression recognition in terms of datasets and methods.

Despite the powerful feature learning ability of deep learning,

problems remain when applied to FER. First, deep neural networks

require a large amount of training data to avoid overfitting.

However, the existing facial expression databases are not sufficient

to train the well-known neural network with deep architecture that

achieved the most promising results in object recognition tasks.

Additionally, high inter-subject variations exist due to different

personal attributes, such as age, gender, ethnic backgrounds and

level of expressiveness [32]. In addition to subject identity bias,

variations in pose, illumination and occlusions are common in

unconstrained facial expression scenarios. These factors are non-

linearly coupled with facial expressions and therefore strengthen

the requirement of deep networks to address the large intra-class

variability and to learn effective expression-specific representa-

tions.

In this paper, we introduce recent advances in research on

solving the above problems for deep FER. We examine the state-

of-the-art results that have not been reviewed in previous survey

papers. The rest of this paper is organized as follows. Frequently

used expression databases are introduced in Section 2. Section 3

identifies three main steps required in a deep FER system and

describes the related background. Section 4 provides a detailed

review of novel neural network architectures and special network

training tricks designed for FER based on static images and

dynamic image sequences. We then cover additional related issues

and other practical scenarios in Section 5. Section 6 discusses

some of the challenges and opportunities in this field and identifies

potential future directions.

2 FACIAL EXPRESSION DATABASES

Having sufficient labeled training data that include as many

variations of the populations and environments as possible is

important for the design of a deep expression recognition system.

In this section, we discuss the publicly available databases that

contain basic expressions and that are widely used in our reviewed

papers for deep learning algorithms evaluation. We also introduce

newly released databases that contain a large amount of affective

images collected from the real world to benefit the training of

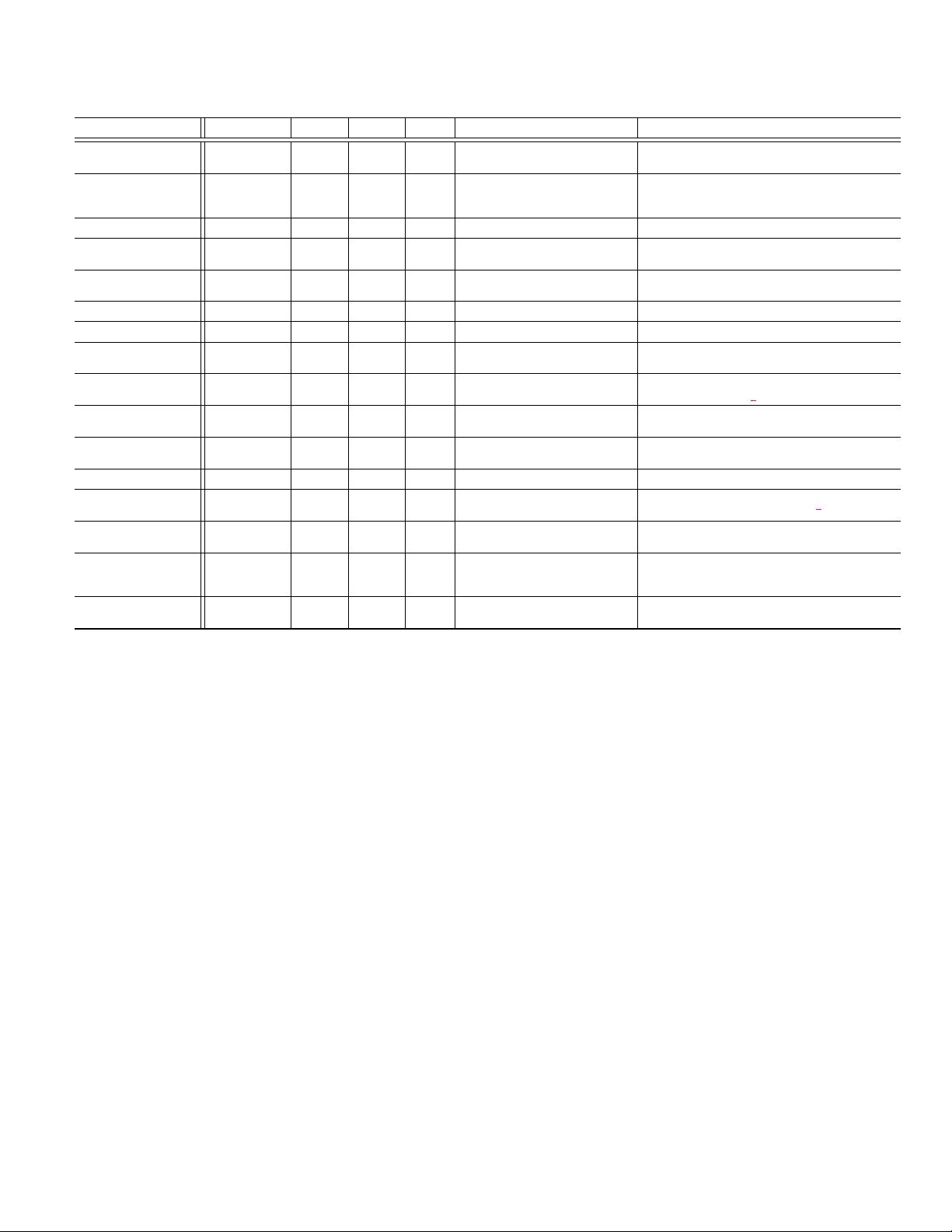

deep neural networks. Table 1 provides an overview of these

datasets, including the main reference, number of subjects, number

of image or video samples, collection environment, expression

distribution and additional information.

CK+ [33]: The Extended Cohn-Kanade (CK+) database is the

most extensively used laboratory-controlled database for evaluat-

ing FER systems. CK+ contains 593 video sequences from 123

subjects. The sequences vary in duration from 10 to 60 frames

and show a shift from a neutral facial expression to the peak

expression. Among these videos, 327 sequences from 118 subjects

are labeled with seven basic expression labels (anger, contempt,

disgust, fear, happiness, sadness, and surprise) based on the Facial

Action Coding System (FACS). Because CK+ does not provide

specified training, validation and test sets, the algorithms evaluated

on this database are not uniform. For static-based methods, the

most common data selection method is to extract the last one to

three frames with peak formation and the first frame (neutral face)

of each sequence. Then, the subjects are divided into n groups for

person-independent n-fold cross-validation experiments, where

commonly selected values of n are 5, 8 and 10.
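The person-independent protocol described above can be sketched as follows. This is a minimal illustration, not the procedure of any particular paper: the function names, the round-robin assignment of subjects to folds, and the input format (one subject identifier per sample) are all assumptions.

```python
import random
from collections import defaultdict


def subject_independent_folds(subject_ids, n_folds=10, seed=0):
    """Assign every sample to a fold so that no subject spans two folds.

    subject_ids: one subject identifier per sample (hypothetical input format).
    Returns a list of n_folds lists of sample indices.
    """
    by_subject = defaultdict(list)
    for index, subject in enumerate(subject_ids):
        by_subject[subject].append(index)

    subjects = sorted(by_subject)
    random.Random(seed).shuffle(subjects)

    folds = [[] for _ in range(n_folds)]
    # Round-robin over the shuffled subjects keeps the folds roughly balanced.
    for position, subject in enumerate(subjects):
        folds[position % n_folds].extend(by_subject[subject])
    return folds


def train_test_splits(folds):
    """Yield (train_indices, test_indices), holding out one fold at a time."""
    for held_out, test in enumerate(folds):
        train = [i for k, fold in enumerate(folds) if k != held_out for i in fold]
        yield train, test
```

Grouping by subject before splitting is what makes the evaluation person-independent: all frames of one person land in the same fold, so a model is never tested on a face it saw during training. Libraries such as scikit-learn offer comparable group-aware splitters (for example `GroupKFold`).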

MMI [34], [35]: The MMI database is laboratory-controlled

and includes 326 sequences from 32 subjects. A total of 213

sequences are labeled with six basic expressions (without “con-

tempt”), and 205 sequences are captured in frontal view. In

contrast to CK+, sequences in MMI are onset-apex-offset labeled,

i.e., the sequence begins with a neutral expression and reaches

peak near the middle before returning to the neutral expression.

Furthermore, MMI has more challenging conditions, i.e., there are

large inter-personal variations because subjects perform the same

expression non-uniformly and many of them wear accessories

(e.g., glasses, mustache). For experiments, the most common

method is to choose the first frame (neutral face) and the three peak

frames in each frontal sequence to conduct person-independent

10-fold cross-validation.

JAFFE [36]: The Japanese Female Facial Expression (JAFFE)

database is a laboratory-controlled image database that contains

213 samples of posed expressions from 10 Japanese females. Each

person has 3 to 4 images with each of six basic facial expressions

(anger, disgust, fear, happiness, sadness, and surprise) and one

image with a neutral expression. The database is challenging be-

cause it contains few examples per subject/expression. Typically,

all the images are used for the leave-one-subject-out experiment.

TFD [37]: The Toronto Face Database (TFD) is an amalgama-

tion of several facial expression datasets. TFD contains 112,234

images, 4,178 of which are annotated with one of seven expres-

sion labels: anger, disgust, fear, happiness, sadness, surprise and

neutral. The faces have already been detected and normalized to a

size of 48×48 such that all the subjects' eyes are the same distance

apart and have the same vertical coordinates. Five official folds are

provided in TFD; each fold contains a training, validation, and test

set consisting of 70%, 10%, and 20% of the images, respectively.

FER2013 [21]: The FER2013 database was introduced during

the ICML 2013 Challenges in Representation Learning. FER2013

is a large-scale and unconstrained database collected automati-

cally by the Google image search API. All images have been

registered and resized to 48×48 pixels after rejecting wrongfully

labeled frames and adjusting the cropped region. FER2013 con-

tains 28,709 training images, 3,589 validation images and 3,589

test images with seven expression labels (anger, disgust, fear,

happiness, sadness, surprise and neutral).

TABLE 1
An overview of the facial expression datasets. P = posed; S = spontaneous; Condit. = Collection condition; Elicit. = Elicitation method.

| Database | Samples | Subject | Condit. | Elicit. | Expression distribution | Access |
|---|---|---|---|---|---|---|
| CK+ [33] | 593 image sequences | 123 | Lab | P & S | 6 basic expressions plus contempt and neutral | http://www.consortium.ri.cmu.edu/ckagree/ |
| MMI [34], [35] | 740 images and 2,900 videos | 25 | Lab | P | 6 basic expressions plus neutral | https://mmifacedb.eu/ |
| JAFFE [36] | 213 images | 10 | Lab | P | 6 basic expressions plus neutral | http://www.kasrl.org/jaffe.html |
| TFD [37] | 112,234 images | N/A | Lab | P | 6 basic expressions plus neutral | josh@mplab.ucsd.edu |
| FER-2013 [21] | 35,887 images | N/A | Web | P & S | 6 basic expressions plus neutral | https://www.kaggle.com/c/challenges-in-representation-learning-facial-expression-recognition-challenge |
| AFEW 7.0 [24] | 1,809 videos | N/A | Movie | P & S | 6 basic expressions plus neutral | https://sites.google.com/site/emotiwchallenge/ |
| SFEW 2.0 [22] | 1,766 images | N/A | Movie | P & S | 6 basic expressions plus neutral | https://cs.anu.edu.au/few/emotiw2015.html |
| Multi-PIE [38] | 755,370 images | 337 | Lab | P | Smile, surprised, squint, disgust, scream and neutral | http://www.flintbox.com/public/project/4742/ |
| BU-3DFE [39] | 2,500 images | 100 | Lab | P | 6 basic expressions plus neutral | http://www.cs.binghamton.edu/~lijun/Research/3DFE/3DFE_Analysis.html |
| Oulu-CASIA [40] | 2,880 image sequences | 80 | Lab | P | 6 basic expressions | http://www.cse.oulu.fi/CMV/Downloads/Oulu-CASIA |
| RaFD [41] | 1,608 images | 67 | Lab | P | 6 basic expressions plus contempt and neutral | http://www.socsci.ru.nl:8180/RaFD2/RaFD |
| KDEF [42] | 4,900 images | 70 | Lab | P | 6 basic expressions plus neutral | http://www.emotionlab.se/kdef/ |
| EmotioNet [43] | 1,000,000 images | N/A | Web | P & S | 23 basic expressions or compound expressions | http://cbcsl.ece.ohio-state.edu/dbform_emotionet.html |
| RAF-DB [44], [45] | 29,672 images | N/A | Web | P & S | 6 basic expressions plus neutral and 12 compound expressions | http://www.whdeng.cn/RAF/model1.html |
| AffectNet [46] | 450,000 images (labeled) | N/A | Web | P & S | 6 basic expressions plus neutral | http://mohammadmahoor.com/databases-codes/ |
| ExpW [47] | 91,793 images | N/A | Web | P & S | 6 basic expressions plus neutral | http://mmlab.ie.cuhk.edu.hk/projects/socialrelation/index.html |

AFEW [48]: The Acted Facial Expressions in the Wild (AFEW) database was first established and introduced in [49] and has served as an evaluation platform for the annual Emotion Recognition In The Wild Challenge (EmotiW) since 2013. AFEW contains video clips collected from different movies with spontaneous expressions, various head poses, occlusions and illuminations. AFEW is a temporal and multimodal database that provides vastly different environmental conditions in both audio and video. Samples are labeled with seven expressions: anger, disgust, fear, happiness, sadness, surprise and neutral. The annotations of expressions have been continuously updated, and reality TV show data have been continuously added. The AFEW 7.0 in EmotiW 2017 [24] is divided into three data partitions in an independent manner in terms of subject and movie/TV source: Train (773 samples), Val (383 samples) and Test (653 samples), which ensures data in the three sets belong to mutually exclusive movies and actors.

SFEW [50]: The Static Facial Expressions in the Wild

(SFEW) was created by selecting static frames from the AFEW

database by computing key frames based on facial point clustering.

The most commonly used version, SFEW 2.0, was the bench-

marking data for the SReco sub-challenge in EmotiW 2015 [22].

SFEW 2.0 has been divided into three sets: Train (958 samples),

Val (436 samples) and Test (372 samples). Each of the images is

assigned to one of seven expression categories, i.e., anger, disgust,

fear, neutral, happiness, sadness, and surprise. The expression

labels of the training and validation sets are publicly available,

whereas those of the testing set are held back by the challenge

organizer.

Multi-PIE [38]: The CMU Multi-PIE database contains

755,370 images from 337 subjects under 15 viewpoints and 19

illumination conditions in up to four recording sessions. Each facial

image is labeled with one of six expressions: disgust, neutral,

scream, smile, squint and surprise. This dataset is typically used

for multiview facial expression analysis.

BU-3DFE [39]: The Binghamton University 3D Facial Ex-

pression (BU-3DFE) database contains 606 facial expression se-

quences captured from 100 people. For each subject, six universal

facial expressions (anger, disgust, fear, happiness, sadness and

surprise) are elicited in various manners with multiple intensities.

Similar to Multi-PIE, this dataset is typically used for multiview

3D facial expression analysis.

Oulu-CASIA [40]: The Oulu-CASIA database includes 2,880

image sequences collected from 80 subjects labeled with six

basic emotion labels: anger, disgust, fear, happiness, sadness, and

surprise. Each of the videos is captured with one of two imaging

systems, i.e., near-infrared (NIR) or visible light (VIS), under

three different illumination conditions. Similar to CK+, the first

frame is neutral and the last frame has the peak expression.

Typically, only the last three peak frames and the first frame

(neutral face) from the 480 videos collected by the VIS System

under normal indoor illumination are employed for 10-fold cross-

validation experiments.

RaFD [41]: The Radboud Faces Database (RaFD) is

laboratory-controlled and has a total of 1,608 images from 67

subjects with three different gaze directions, i.e., front, left and

right. Each sample is labeled with one of eight expressions: anger,

contempt, disgust, fear, happiness, sadness, surprise and neutral.

KDEF [42]: The laboratory-controlled Karolinska Directed

Emotional Faces (KDEF) database was originally developed for

use in psychological and medical research. KDEF consists of

images from 70 actors with five different angles labeled with six

basic facial expressions plus neutral.

In addition to these commonly used datasets for basic emo-

tion recognition, several well-established and large-scale publicly

available facial expression databases collected from the Internet

that are suitable for training deep neural networks have emerged

in the last two years.

EmotioNet [43]: EmotioNet is a large-scale database with one

million facial expression images collected from the Internet. A

total of 950,000 images were annotated by the automatic action

unit (AU) detection model in [43], and the remaining 25,000

images were manually annotated with 11 AUs. The second track of

the EmotioNet Challenge [51] provides six basic expressions and

ten compound expressions [52], and 2,478 images with expression

labels are available.

RAF-DB [44], [45]: The Real-world Affective Face Database

(RAF-DB) is a real-world database that contains 29,672 highly

diverse facial images downloaded from the Internet. With man-

ually crowd-sourced annotation and reliable estimation, seven

basic and eleven compound emotion labels are provided for the

samples. Specifically, 15,339 images from the basic emotion set

are divided into two groups (12,271 training samples and 3,068

testing samples) for evaluation.

AffectNet [46]: AffectNet contains more than one million

images from the Internet that were obtained by querying different

search engines using emotion-related tags. It is by far the largest

database that provides facial expressions in two different emotion

models (categorical model and dimensional model), of which

450,000 images have manually annotated labels for eight basic

expressions.

ExpW [47]: The Expression in-the-Wild Database (ExpW)

contains 91,793 faces downloaded using Google image search.

Each of the face images was manually annotated as one of the

seven basic expression categories. Non-face images were removed

in the annotation process.

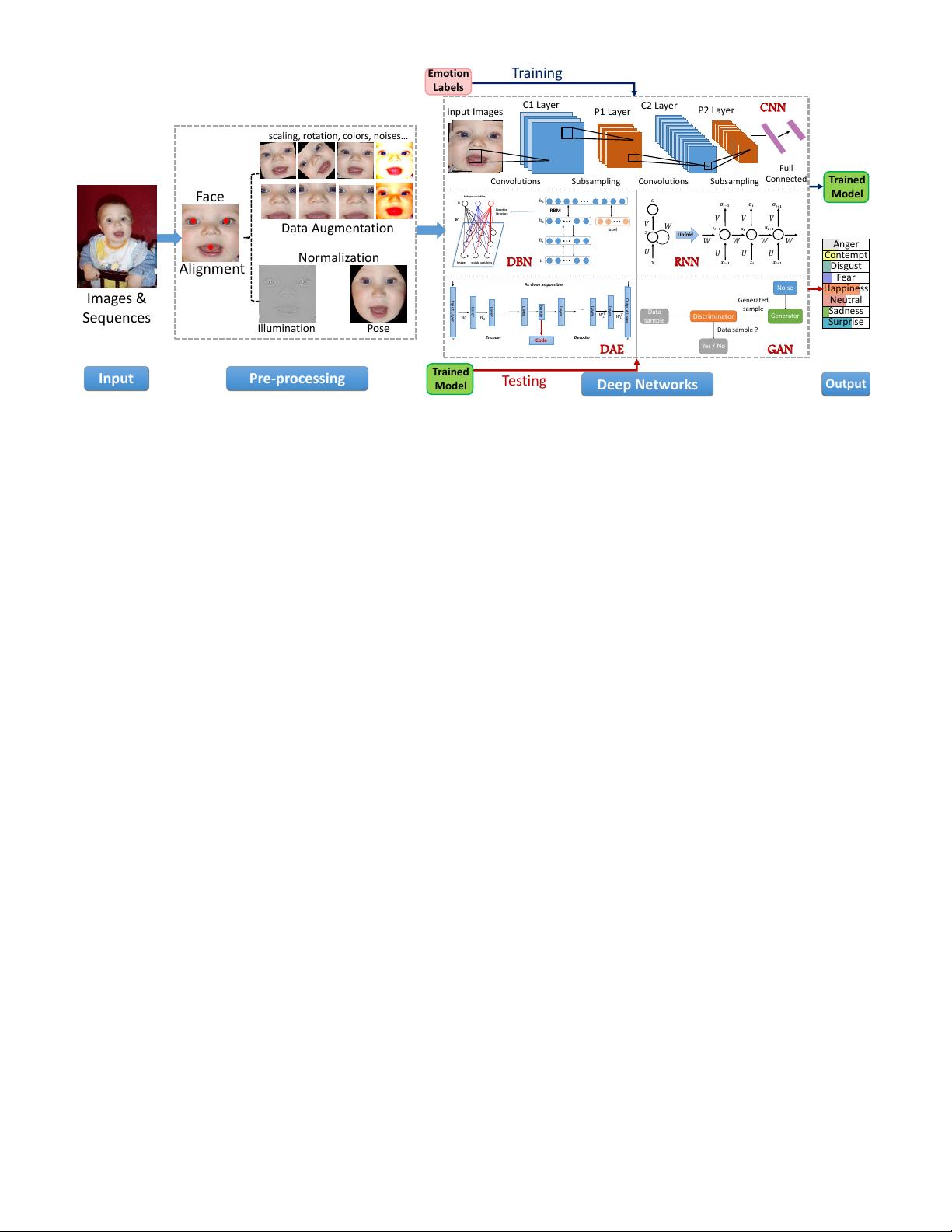

3 DEEP FACIAL EXPRESSION RECOGNITION

In this section, we describe the three main steps that are common

in automatic deep FER, i.e., pre-processing, deep feature learning

and deep feature classification. We briefly summarize the widely

used algorithms for each step and recommend the existing state-of-

the-art best practice implementations according to the referenced

papers.

3.1 Pre-processing

Variations that are irrelevant to facial expressions, such as different

backgrounds, illuminations and head poses, are fairly common in

unconstrained scenarios. Therefore, before training the deep neural

network to learn meaningful features, pre-processing is required

to align and normalize the visual semantic information conveyed

by the face.

3.1.1 Face alignment

Face alignment is a traditional pre-processing step in many face-related recognition tasks. We list some well-known approaches and publicly available implementations that are widely used in deep FER.

TABLE 2
Summary of different types of face alignment detectors that are widely used in deep FER models.

| type | detector | # points | real-time | speed | performance | used in |
|---|---|---|---|---|---|---|
| Holistic | AAM [53] | 68 | ✗ | fair | poor generalization | [54], [55] |
| Part-based | MoT [56] | 39/68 | ✗ | slow/fast | good | [57], [58] |
| Part-based | DRMF [59] | 66 | ✗ | slow/fast | good | [60], [61] |
| Cascaded regression | SDM [62] | 49 | ✓ | fast/very fast | good/very good | [16], [63] |
| Cascaded regression | 3000 fps [64] | 68 | ✓ | fast/very fast | good/very good | [55] |
| Cascaded regression | Incremental [65] | 49 | ✓ | fast/very fast | good/very good | [66] |
| Deep learning | cascaded CNN [67] | 5 | ✓ | fast | good/very good | [68] |
| Deep learning | MTCNN [69] | 5 | ✓ | fast | good/very good | [70], [71] |

Given a series of training data, the first step is to detect the

face and then to remove background and non-face areas. The

Viola-Jones (V&J) face detector [72] is a classic and widely

employed implementation for face detection, which is robust and

computationally simple for detecting near-frontal faces.

Although face detection is the only indispensable procedure to

enable feature learning, further face alignment using the coordi-

nates of localized landmarks can substantially enhance the FER

performance [14]. This step is crucial because it can reduce the

variation in face scale and in-plane rotation. Table 2 investigates

facial landmark detection algorithms widely-used in deep FER and

compares them in terms of efficiency and performance. The Active

Appearance Model (AAM) [53] is a classic generative model that

optimizes the required parameters from holistic facial appearance

and global shape patterns. In discriminative models, the mixtures

of trees (MoT) structured models [56] and the discriminative

response map fitting (DRMF) [59] use part-based approaches that

represent the face via the local appearance information around

each landmark. Furthermore, a number of discriminative models

directly use a cascade of regression functions to map the image

appearance to landmark locations and have shown better results,

e.g., the supervised descent method (SDM) [62] implemented

in IntraFace [73], the face alignment 3000 fps [64], and the

incremental face alignment [65]. Recently, deep networks have

been widely exploited for face alignment. Cascaded CNN [67]

is an early work that predicts landmarks in a cascaded way.

Based on this, Tasks-Constrained Deep Convolutional Network

(TCDCN) [74] and Multi-task CNN (MTCNN) [69] further leverage

multi-task learning to improve the performance. In general,

cascaded regression has become the most popular state-of-the-art

method for face alignment owing to its high speed and accuracy.
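To make concrete how landmark coordinates reduce variation in scale and in-plane rotation, the sketch below builds a similarity transform from two eye centers. It is a simplified illustration under stated assumptions: only two landmarks are used, the function names are hypothetical, and the target eye position (`eye_y`, `eye_dist`) and the 48-pixel output size are illustrative choices rather than values prescribed by the survey.

```python
import numpy as np


def eye_alignment_matrix(left_eye, right_eye, out_size=48, eye_y=0.35, eye_dist=0.5):
    """Return a 2x3 similarity transform mapping the eye centers to fixed positions.

    left_eye, right_eye: (x, y) landmark coordinates in the source image, where
    left_eye is the eye that appears on the left side of the image.
    eye_y and eye_dist are fractions of out_size (illustrative assumptions).
    """
    left_eye = np.asarray(left_eye, dtype=float)
    right_eye = np.asarray(right_eye, dtype=float)
    delta = right_eye - left_eye

    # In-plane rotation of the eye line, and the scale to the target eye distance.
    angle = np.arctan2(delta[1], delta[0])
    scale = (eye_dist * out_size) / np.hypot(delta[0], delta[1])

    # Rotate by -angle (making the eye line horizontal) and scale.
    cos_a, sin_a = np.cos(-angle) * scale, np.sin(-angle) * scale
    linear = np.array([[cos_a, -sin_a], [sin_a, cos_a]])

    # Translate so the midpoint between the eyes lands at the target midpoint.
    src_mid = (left_eye + right_eye) / 2.0
    dst_mid = np.array([out_size / 2.0, eye_y * out_size])
    offset = dst_mid - linear @ src_mid
    return np.hstack([linear, offset[:, None]])


def apply_affine(matrix, points):
    """Apply a 2x3 affine matrix to an array of (x, y) points."""
    points = np.asarray(points, dtype=float)
    return points @ matrix[:, :2].T + matrix[:, 2]
```

The resulting 2x3 matrix maps source coordinates to output coordinates, so it could be handed to an image-warping routine such as OpenCV's `cv2.warpAffine` to produce the aligned crop. Detectors that return more landmarks would typically fit the transform to all points by least squares instead.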

In contrast to using only one detector for face alignment,

some methods proposed to combine multiple detectors for better

landmark estimation when processing faces in challenging uncon-

strained environments. Yu et al. [75] concatenated three different

facial landmark detectors to complement each other. Kim et al.

[76] considered different inputs (original image and histogram

equalized image) and different face detection models (V&J [72]

and MoT [56]), and the landmark set with the highest confidence

provided by the IntraFace [73] was selected.

3.1.2 Data augmentation

Deep neural networks require sufficient training data to ensure generalizability to a given recognition task. However, most publicly available databases for FER do not have a sufficient quantity of images for training. Therefore, data augmentation is a vital step for deep FER. Data augmentation techniques can be divided into two groups: on-the-fly data augmentation and offline data augmentation.

[Figure 2: pipeline diagram. Input (images and sequences) → Pre-processing (face alignment; data augmentation: scaling, rotation, colors, noises…; normalization: illumination, pose) → Deep networks (CNN, DBN, DAE, RNN, GAN) → Training and testing with the trained model → Output emotion labels (anger, contempt, disgust, fear, happiness, sadness, surprise, neutral).]

Fig. 2. The general pipeline of deep facial expression recognition systems.

Usually, the on-the-fly data augmentation is embedded in deep

learning toolkits to alleviate overfitting. During the training step,

the input samples are randomly cropped from the four corners and

center of the image and then flipped horizontally, which can result

in a dataset that is ten times larger than the original training data.

Two common prediction modes are adopted during testing: only

the center patch of the face is used for prediction (e.g., [61], [77])

or the prediction value is averaged over all ten crops (e.g., [76],

[78]).
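The ten-crop scheme described above can be sketched in a few lines of NumPy. This is an illustrative sketch, not a toolkit's implementation: the function names are made up, and `predict_fn` is a hypothetical callable standing in for a trained network.

```python
import numpy as np


def ten_crop(image, crop_size):
    """Return the four corner crops, the center crop, and their horizontal flips.

    image: array of shape (H, W) or (H, W, C); crop_size: side of the square crop.
    """
    height, width = image.shape[:2]
    if crop_size > min(height, width):
        raise ValueError("crop_size must not exceed the image size")

    max_y, max_x = height - crop_size, width - crop_size
    origins = [(0, 0), (0, max_x), (max_y, 0), (max_y, max_x), (max_y // 2, max_x // 2)]

    crops = [image[y:y + crop_size, x:x + crop_size] for y, x in origins]
    crops += [crop[:, ::-1] for crop in crops]  # horizontal flips of the five crops
    return np.stack(crops)


def predict_ten_crop(predict_fn, image, crop_size):
    """Average class scores over the ten crops (the second testing mode).

    predict_fn is a hypothetical callable mapping a batch of crops to an array
    of per-class scores with shape (batch, num_classes).
    """
    scores = predict_fn(ten_crop(image, crop_size))
    return np.mean(scores, axis=0)
```

During training, a toolkit would usually sample one of these crops at random per iteration rather than materialize all ten; the function above enumerates them mainly to show where the tenfold factor comes from and to support averaged prediction at test time.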

Besides the elementary on-the-fly data augmentation, various

offline data augmentation operations have been designed to further

expand data on both size and diversity. The most frequently used

operations include random perturbations and transforms, e.g., rota-

tion, shifting, skew, scaling, noise, contrast and color jittering. For

example, common noise models, salt & pepper and speckle noise

[79] and Gaussian noise [80], [81] are employed to enlarge the data

size. For contrast transformation, the saturation and value (S and

V components of the HSV color space) of each pixel are changed

[70] for data augmentation. Combinations of multiple operations

can generate more unseen training samples and make the network

more robust to deviated and rotated faces. In [82], the authors

applied five image appearance filters (disk, average, Gaussian,

unsharp and motion filters) and six affine transform matrices that

were formalized by adding slight geometric transformations to the

identity matrix. In [75], a more comprehensive affine transform

matrix was proposed to randomly generate images that varied in

terms of rotation, skew and scale. Furthermore, deep learning

based technology can be applied for data augmentation. For

example, a synthetic data generation system with 3D convolutional

neural network (CNN) was created in [83] to confidentially create

faces with different levels of saturation in expression. The

generative adversarial network (GAN) [84] can also be applied to

augment data by generating diverse appearances varying in poses

and expressions (see Section 4.1.7).
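A few of the offline operations mentioned above (Gaussian noise, salt & pepper noise, and a random affine matrix combining rotation, skew and scale) can be sketched as follows. The function names and all parameter ranges are illustrative assumptions; they are not the settings used in [75], [79], [80], [81] or [82].

```python
import numpy as np


def add_gaussian_noise(image, sigma, rng):
    """Add zero-mean Gaussian noise to a float image with values in [0, 1]."""
    noisy = image + rng.normal(0.0, sigma, size=image.shape)
    return np.clip(noisy, 0.0, 1.0)


def add_salt_and_pepper(image, amount, rng):
    """Set a random fraction `amount` of pixels to 0 (pepper) or 1 (salt)."""
    noisy = image.copy()
    mask = rng.random(image.shape[:2])
    noisy[mask < amount / 2.0] = 0.0
    noisy[mask > 1.0 - amount / 2.0] = 1.0
    return noisy


def random_affine_matrix(rng, max_rotation_deg=10.0, max_skew=0.1, scale_range=(0.9, 1.1)):
    """Sample a 2x2 matrix combining rotation, horizontal skew and isotropic scale.

    The default ranges are assumptions chosen for illustration only.
    """
    theta = np.deg2rad(rng.uniform(-max_rotation_deg, max_rotation_deg))
    skew = rng.uniform(-max_skew, max_skew)
    scale = rng.uniform(*scale_range)

    rotation = np.array([[np.cos(theta), -np.sin(theta)],
                         [np.sin(theta), np.cos(theta)]])
    shear = np.array([[1.0, skew], [0.0, 1.0]])
    return scale * rotation @ shear
```

Sampling the matrix close to the identity keeps the perturbed faces plausible while still exposing the network to slightly rotated, skewed and rescaled inputs. Passing a seeded `np.random.default_rng` makes an offline augmentation run reproducible.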

3.1.3 Face normalization

Variations in illumination and head poses can introduce large

changes in images and hence impair the FER performance.

Therefore, we introduce two typical face normalization methods

to ameliorate these variations: illumination normalization and

pose normalization (frontalization).

Illumination normalization: Illumination and contrast can

vary in different images even from the same person with the same

expression, especially in unconstrained environments, which can

result in large intra-class variances. In [60], several frequently

used illumination normalization algorithms, namely, isotropic

diffusion (IS)-based normalization, discrete cosine transform

(DCT)-based normalization [85] and difference of Gaussian

(DoG), were evaluated for illumination normalization. In addition, [86]

employed homomorphic filtering based normalization, which has

been reported to yield the most consistent results among all other

techniques, to remove illumination variation. Furthermore,

related studies have shown that histogram equalization combined

with illumination normalization results in better face recognition

performance than that achieved using illumination normalization

on its own. Many studies in the literature of deep FER (e.g.,

[75], [79], [87], [88]) have employed histogram equalization to

increase the global contrast of images for pre-processing. This

method is effective when the brightness of the background and

foreground are similar. However, directly applying histogram

equalization may overemphasize local contrast. To solve this

problem, [89] proposed a weighted summation approach to

combine histogram equalization and linear mapping. In

[79], the authors compared three different methods: global

contrast normalization (GCN), local normalization, and histogram

equalization. GCN and histogram equalization were reported

to achieve the best accuracy for the training and testing steps,

respectively.
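Two of the normalization methods compared above, histogram equalization and global contrast normalization (GCN), can be sketched in NumPy as follows. These are common textbook formulations written for illustration; the exact variants, regularization terms and parameters used in [79] may differ, and the function names are assumptions.

```python
import numpy as np


def histogram_equalization(image):
    """Equalize an 8-bit grayscale image (dtype uint8) via its cumulative histogram."""
    hist = np.bincount(image.ravel(), minlength=256)
    cdf = hist.cumsum()
    cdf_min = cdf[cdf > 0][0]
    total = image.size
    if total == cdf_min:  # constant image: nothing to equalize
        return image.copy()
    # Map each intensity so that the cumulative distribution becomes roughly linear.
    lut = np.round((cdf - cdf_min) / (total - cdf_min) * 255.0)
    lut = np.clip(lut, 0, 255).astype(np.uint8)
    return lut[image]


def global_contrast_normalization(image, scale=1.0, eps=1e-8):
    """Subtract the image mean and divide by the image standard deviation."""
    image = image.astype(np.float64)
    centered = image - image.mean()
    return scale * centered / max(centered.std(), eps)
```

Histogram equalization stretches the global intensity distribution of a single image, whereas GCN standardizes each image to zero mean and unit contrast, which is why the two can behave differently when foreground and background brightness diverge.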

Pose normalization: Considerable pose variation is another

common and intractable problem in unconstrained settings. Some

studies have employed pose normalization techniques to yield
