Introduction to SMT (Statistical Machine Translation)

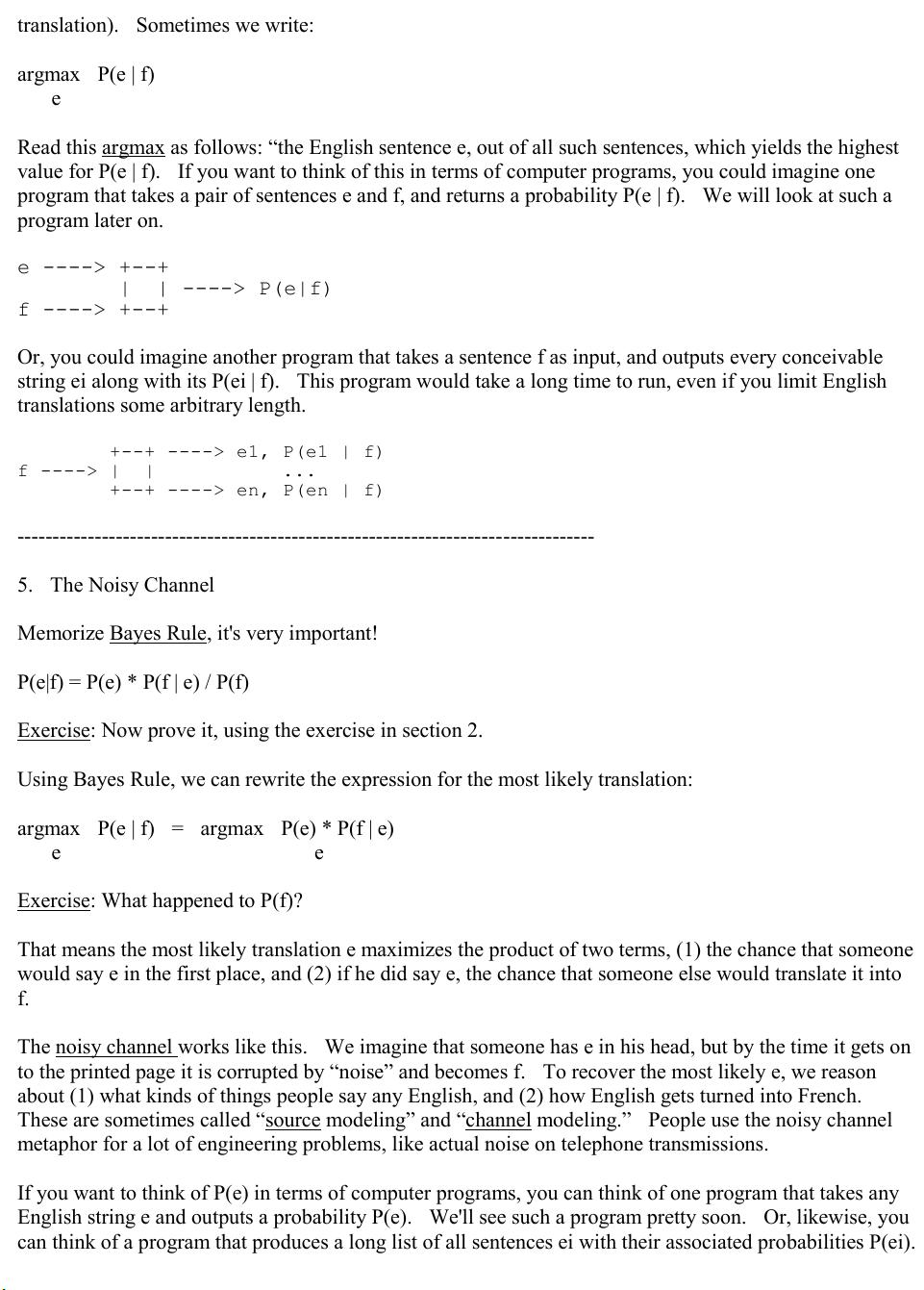

PDF version, convenient for printing.

A Statistical MT Tutorial Workbook

Kevin Knight

prepared in connection with the JHU summer workshop

April 30, 1999

----------------------------------------------------------------------------------

1. The Overall Plan

We want to automatically analyze existing human sentence translations, with an eye toward building general translation rules -- we will use these rules to translate new texts automatically.

I know this looks like a thick workbook, but if you take a day to work through it, you will know almost as much about statistical machine translation as anybody! The basic text that this tutorial relies on is Brown et al., “The Mathematics of Statistical Machine Translation”, Computational Linguistics, 1993. On top of this excellent presentation, I can only add some perspective and perhaps some sympathy for the poor reader, who has (after all) done nothing wrong.

Important terms are underlined throughout!

