
Latest survey paper on Transformer-based pretrained models


Updated on 2023-04-28


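Transformer-based pretrained language models (T-PTLMs) have achieved great success in almost every natural language processing task. The development of these models began with GPT and BERT. They are built on transformers, self-supervised learning and transfer learning. T-PTLMs learn universal language representations from large volumes of text data through self-supervised learning and transfer this knowledge to downstream tasks. These models provide good background knowledge for downstream tasks and avoid training downstream models from scratch.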


AMMUS: A Survey of Transformer-based Pretrained Models in Natural Language Processing

Katikapalli Subramanyam Kalyan, Ajit Rajasekharan, and Sivanesan Sangeetha

Abstract: Transformer-based pretrained language models (T-PTLMs) have achieved great success in almost every NLP task. The evolution of these models started with GPT and BERT. These models are built on top of transformers, self-supervised learning and transfer learning. T-PTLMs learn universal language representations from large volumes of text data using self-supervised learning and transfer this knowledge to downstream tasks. These models provide good background knowledge to downstream tasks, which avoids training downstream models from scratch. In this comprehensive survey paper, we first give a brief overview of self-supervised learning. Next, we explain various core concepts like pretraining, pretraining methods, pretraining tasks, embeddings and downstream adaptation methods. We then present a new taxonomy of T-PTLMs and give a brief overview of various benchmarks, both intrinsic and extrinsic. We present a summary of various useful libraries to work with T-PTLMs. Finally, we highlight some future research directions which will further improve these models. We strongly believe that this comprehensive survey paper will serve as a good reference to learn the core concepts as well as to stay updated with the recent happenings in T-PTLMs.

Index Terms—Self-Supervised Learning, Transformers, Pretrained Language Models, Survey.


CONTENTS

1 Introduction

2 Self-Supervised Learning (SSL)

2.1 Why Self-Supervised Learning?

2.2 What is Self-Supervised Learning?

2.3 Types of Self-Supervised Learning

3 T-PTLM Core Concepts

3.1 Pretraining

3.1.1 Pretraining Steps

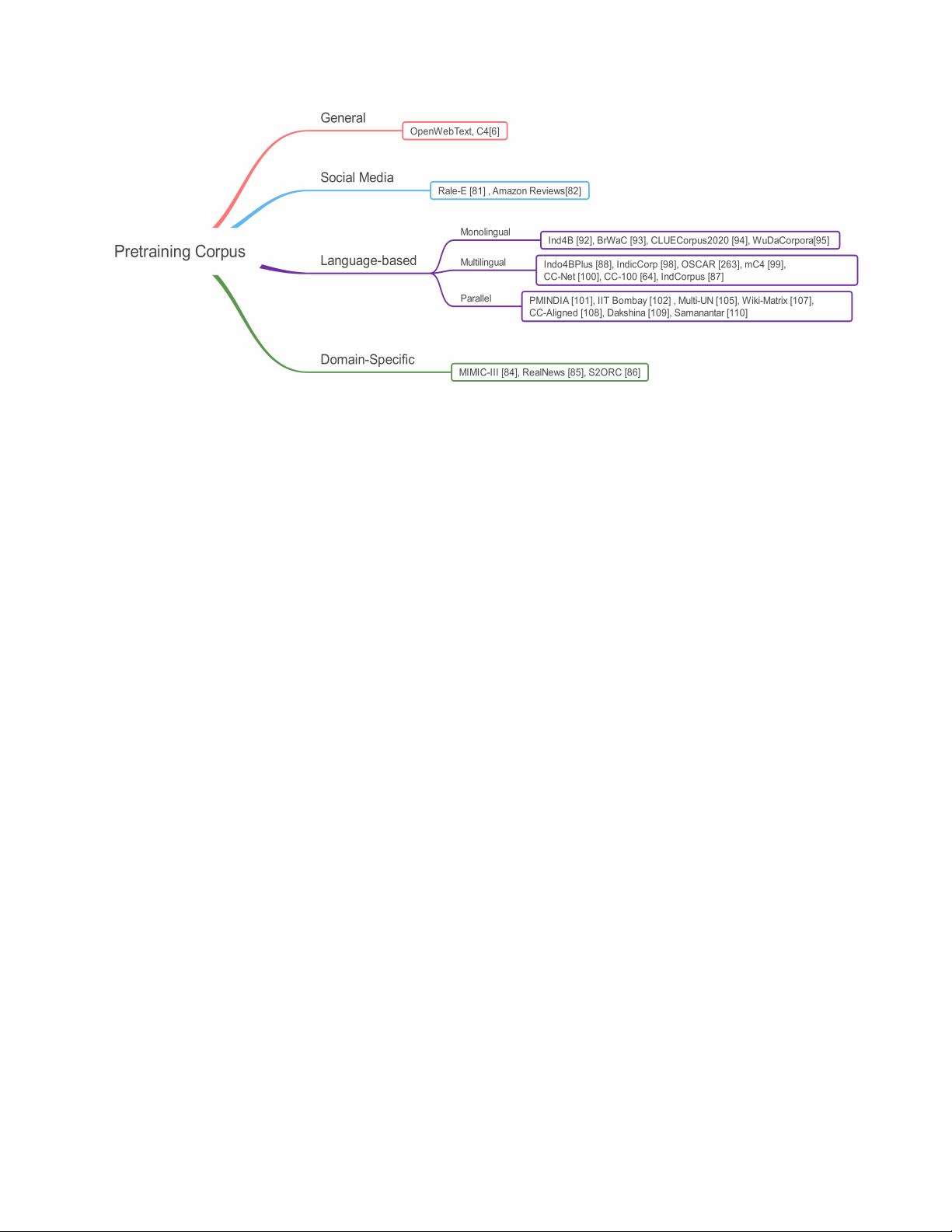

3.1.2 Pretraining Corpus

3.2 Types of Pretraining Methods

3.2.1 Pretraining from Scratch (PTS)

3.2.2 Continual Pretraining (CPT)

3.2.3 Simultaneous Pretraining (SPT)

3.2.4 Task Adaptive Pretraining (TAPT)

• K.S.Kalyan is with the Department of Computer Applications, National Institute of Technology Trichy, Trichy, Tamil Nadu, India, 620015. E-mail: kalyan.ks@yahoo.com, Website: https://mr-nlp.github.io

• Ajit Rajasekharan is with Nference.ai as CTO, Cambridge, MA, USA, 02142.

• S.Sangeetha is with the Department of Computer Applications, National Institute of Technology Trichy, Trichy, Tamil Nadu, India, 620015.

Preprint under review - The paper is named (AMMUS - AMMU Smiles) in the memory of one of the close friends of K.S.Kalyan (https://mr-nlp.github.io).

3.2.5 Knowledge Inherited Pretraining (KIPT)

3.3 Pretraining Tasks

3.4 Embeddings

3.4.1 Main Embeddings

3.4.2 Auxiliary Embeddings

4 Taxonomy

4.1 Pretraining Corpus-based

4.1.1 General

4.1.2 Social Media-based

4.1.3 Language-based

4.1.4 Domain-Specific Models

4.2 Architecture

4.2.1 Encoder-based

4.2.2 Decoder-based

4.2.3 Encoder-Decoder based

4.3 SSL

4.3.1 Generative SSL

4.3.2 Contrastive SSL

4.3.3 Adversarial SSL

4.3.4 Hybrid SSL

4.4 Extensions

4.4.1 Compact T-PTLMs

4.4.2 Character-based T-PTLMs

4.4.3 Green T-PTLMs

4.4.4 Sentence-based T-PTLMs

4.4.5 Tokenization-Free T-PTLMs

4.4.6 Large Scale T-PTLMs

4.4.7 Knowledge Enriched T-PTLMs

arXiv:2108.05542v1 [cs.CL] 12 Aug 2021


4.4.8 Long-Sequence T-PTLMs

4.4.9 Efficient T-PTLMs

5 Downstream Adaptation Methods

5.1 Feature-based

5.2 Fine-tuning

5.2.1 Vanilla Fine-Tuning

5.2.2 Intermediate Fine-Tuning (IFT)

5.2.3 Multi-task Fine-Tuning (MTFT)

5.2.4 Parameter Efficient Fine-Tuning

5.3 Prompt-based Tuning

6 Evaluation

6.1 Intrinsic Evaluation

6.2 Extrinsic Evaluation

7 Useful Libraries

8 Discussions and Future Directions

8.1 Better Pretraining Methods

8.2 Sample Efficient Pretraining Tasks

8.3 Efficient Models

8.4 Better Position Encoding Mechanisms

8.5 Improving existing T-PTLMs

8.6 Beyond Vanilla Fine-tuning

8.7 Benchmarks

8.8 Compact Models

8.9 Robustness to Noise

8.10 Novel Adaptation Methods

8.11 Privacy Issues

8.12 Mitigating Bias

8.13 Mitigating Fine-Tuning Instabilities

9 Conclusion

References

1 INTRODUCTION

Transformer-based pretrained language models (T-PTLMs) like GPT-1 [1], BERT [2], XLNet [3], RoBERTa [4], ELECTRA [5], T5 [6], ALBERT [7], BART [8] and PEGASUS [9] have achieved tremendous success in NLP because of their ability to learn universal language representations from large volumes of unlabeled text data and then transfer this knowledge to downstream tasks. In the early days, NLP systems were mostly rule-based; these were later replaced by machine-learned models. Machine learning models require feature engineering, which demands domain expertise and is time-consuming. The evolution of better computer hardware like GPUs and of word embeddings like Word2Vec [10] and GloVe [11] increased the use of deep learning models like CNN [12] and RNN [13], [14] for building NLP systems. The main drawback of these deep learning models is that, except for the word embeddings, the model has to be trained from scratch. Training a model from scratch requires a large number of labeled instances, which are expensive to generate. However, we expect the model to perform well using only a few labeled instances. Transfer learning [15] allows the reuse of knowledge learned in source tasks to perform well in the target task. Here the target task should be similar to the source task. Based on the idea of transfer learning, researchers in Computer Vision trained large CNN models [16]–[19] using large-scale labeled datasets like ImageNet [20], [21]. These models learn image representations which are common across all the tasks. The large pretrained CNN models are adapted to downstream tasks by adding a few task-specific layers and then fine-tuning on the target datasets [22]. As the pretrained CNN models provide good background knowledge to the downstream models, they have enjoyed tremendous success in many CV tasks [18], [23].

Deep learning models like CNN and RNN have difficulties in modelling long-term contexts and learn word representations with a locality bias [24]. Moreover, as RNNs process the input sequentially, i.e., word by word, the utilization of parallel computer hardware is limited. To overcome these drawbacks in existing deep learning models, Vaswani et al. [25] proposed a deep learning model called the Transformer, which is based entirely on self-attention. Self-attention allows for more parallelization compared to RNNs and can easily model long-term contexts, as every token attends to all the tokens in the input sequence [25]. The Transformer contains a stack of encoder and decoder layers. With the help of this stack of encoder and decoder layers, transformers can learn complex language information. Generating a large amount of labeled data in the NLP domain is very expensive and time-consuming. However, it is very easy to get large volumes of unlabeled text data. The NLP research community, impressed with the success of CNN-based pretrained models in Computer Vision, has developed T-PTLMs by combining the power of transformers and self-supervised learning. Self-supervised learning allows the transformers to learn based on the pseudo supervision provided by one or more pretraining tasks.

GPT and BERT are the first T-PTLMs, developed based on transformer decoder and encoder layers respectively. Following GPT and BERT, models like XLNet, RoBERTa, ELECTRA, ALBERT, T5, BART and PEGASUS were proposed. Here XLNet, RoBERTa, ELECTRA and ALBERT are improvements over the BERT model, while T5, BART and PEGASUS are encoder-decoder based models. Kaplan et al. [26] showed that the performance of T-PTLMs can be increased just by increasing the size of the model. This observation triggered the development of large-scale T-PTLMs like GPT-3 (175B) [27], PANGU-α (200B) [28] and GShard (600B) [29], which contain billions of parameters, and Switch-Transformers (1.6T) [30], which contains trillions of parameters. Following the success of T-PTLMs in the general English domain, T-PTLMs have also been developed for other domains like Finance [31], Legal [32], [33], News [34], Programming [35]–[39], Dialogue [40], Networking [41], Academic [42]–[44] and Biomedical [45]–[48]. T-PTLMs also support transfer learning, as these models can be adapted to downstream tasks by fine-tuning or prompt-tuning on target datasets. In this survey paper, we present a comprehensive review of recent research works related to T-PTLMs. We summarize the highlights of our survey as

• We present a brief overview of SSL, the backbone behind developing T-PTLMs (Section 2).

• We explain various core concepts related to T-PTLMs like pretraining, pretraining methods, pretraining tasks, embeddings and downstream adaptation methods (Section 3).

• We present a new taxonomy to categorize various T-PTLMs. This taxonomy is based on four perspectives, namely pretraining corpus, architecture, type of SSL and extensions (Section 4).

• We present a new taxonomy to categorize various downstream adaptation methods and explain each in detail (Section 5).

• We present a brief overview of various benchmarks, both intrinsic and extrinsic, which evaluate the progress of T-PTLMs (Section 6).

• We present a brief overview of various libraries, from Huggingface Transformers to Transformer-interpret, which are useful to work with T-PTLMs (Section 7).

• We briefly discuss some of the future research directions which will drive the research community to further improve the models (Section 8).

2 SELF-SUPERVISED LEARNING (SSL)

Self-supervised learning, a relatively new learning paradigm, has gained attention in the Artificial Intelligence (AI) research community due to its ability to make use of unlabeled data to inject universal knowledge about language, image or speech into pretrained models. Due to its data efficiency and generalization ability, SSL finds applications in various AI fields like Robotics [49], Speech [50], [51], Natural Language Processing [24], [52] and Computer Vision [53], [54].

2.1 Why Self-Supervised Learning?

Supervised learning has played a crucial part in AI progress by allowing models to learn from human-annotated instances. Models trained using supervised learning over labeled instances perform well on a specific task. However, a model trained using supervised learning requires a large number of labeled instances to achieve good performance. Data collection and labelling is a time-consuming and expensive process. Moreover, it is difficult to obtain labeled data in specific domains like Medical and Legal. Further, the model learns only what is available in the training data and suffers from generalization error and spurious correlations. Although supervised learning has been the dominant learning paradigm in developing AI models over the last two decades, its bottlenecks have forced the research community to look for alternative learning paradigms like Self-Supervised Learning (SSL). SSL does not require human-labeled data and helps the model to gain more generalization ability by learning from large amounts of unlabeled data. We summarize the drawbacks of supervised learning as

• heavy dependence on human-labeled instances, which are expensive and time-consuming to generate.

• lack of generalization ability and susceptibility to spurious correlations.

• scarcity of labeled data in many domains like Medical and Legal, which limits the application of AI models in these domains.

• inability to learn from the large amount of freely available unlabeled data.

2.2 What is Self-Supervised Learning?

Self-Supervised Learning (SSL) is a new learning paradigm which helps the model to learn universal knowledge based on the pseudo supervision provided by pretraining tasks. In SSL, the labels are automatically generated based on data attributes and the definition of the pretraining task. Let $X = (x_1, p_1), (x_2, p_2), (x_3, p_3), \ldots, (x_n, p_n)$ represent the pseudo-labeled instances. The pretraining loss ($L_{SSL}$) of the SSL learning paradigm can be defined as

$$L_{SSL} = \lambda_1 L_{PT-1} + \lambda_2 L_{PT-2} + \ldots + \lambda_m L_{PT-m} \quad (1)$$

Here $L_{PT-1}, L_{PT-2}, \ldots, L_{PT-m}$ represent the loss functions of the $m$ pretraining tasks and $\lambda_1, \lambda_2, \ldots, \lambda_m$ represent the corresponding weights. In general, pretraining using the SSL paradigm can involve more than one pretraining task. For example, RoBERTa is pretrained using only masked language modelling (MLM), while the BERT model is pretrained using two pretraining tasks, namely masked language modelling (MLM) and next sentence prediction (NSP). In the case of MLM, the loss function used is cross-entropy loss and in the case of NSP, it is sigmoid loss. By solving the pretraining tasks over vast amounts of unlabeled data, the model learns general language representations which can encode both syntactic and semantic information. These representations are useful in downstream tasks and help the model to achieve much better performance using only a few labeled instances. We can say that pretraining over vast amounts of unlabeled data using SSL helps the model to gain basic common sense or background knowledge, without which the model requires more labeled instances to achieve good performance.
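As a minimal illustration of Equation (1), the sketch below combines per-task losses with task weights. The function names, the toy probabilities and the equal weights are assumptions made for illustration and are not taken from the survey. The NSP term is computed here as a two-class cross-entropy, which is a simplification chosen for the sketch.

```python
import math

def cross_entropy(probs, target_index):
    """Negative log-likelihood of the target class under a probability vector."""
    return -math.log(probs[target_index])

def ssl_loss(task_losses, task_weights):
    """Weighted sum of pretraining-task losses, following Equation (1)."""
    if len(task_losses) != len(task_weights):
        raise ValueError("one weight is required per pretraining task")
    return sum(w * loss for w, loss in zip(task_weights, task_losses))

# Hypothetical model outputs for a single training instance.
mlm_probs = [0.7, 0.2, 0.1]  # distribution over a toy 3-token vocabulary
nsp_probs = [0.9, 0.1]       # [is_next, not_next]

mlm_loss = cross_entropy(mlm_probs, target_index=0)
nsp_loss = cross_entropy(nsp_probs, target_index=0)

# BERT-style setup with two pretraining tasks and (assumed) equal weights.
total = ssl_loss([mlm_loss, nsp_loss], [1.0, 1.0])
print(round(total, 4))
```

A model pretrained with a single task, as RoBERTa is with MLM, corresponds to calling `ssl_loss` with one loss and one weight.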

SSL has similarities with other popular learning paradigms like supervised and unsupervised learning. SSL is like unsupervised learning as it does not require human-labeled instances. However, it is different from unsupervised learning because a) SSL requires supervision, unlike unsupervised learning, and b) the objective of unsupervised learning is to identify hidden patterns while the objective of SSL is to learn meaningful representations. SSL is like supervised learning as both learning paradigms require supervision. However, it is different from supervised learning because a) SSL generates labels automatically without any human involvement and b) the goal of supervised learning is to provide task-specific knowledge while SSL aims to provide the model with universal knowledge. We summarize the goals of SSL as

• learn universal language representations which provide a good background to the downstream model.

• gain better generalization ability by learning over vast amounts of freely available unlabeled text data.

2.3 Types of Self-Supervised Learning

Self-Supervised Learning can be classified into Generative SSL, Contrastive SSL and Adversarial SSL. Generative SSL allows the model to learn by decoding the encoded input. Generative SSL can use autoregressive, autoencoding or hybrid language models. An autoregressive language model predicts the next tokens based on the previous tokens. GPT-1 [1] is the first PTLM that is based on the autoregressive language model. An autoencoding language model predicts the masked tokens based on the unmasked tokens (bidirectional context). For example, masked language modelling (MLM) involves two steps. The first step is to encode the masked tokens using bidirectional context and the second step is to decode (predict) the original tokens based on the encoded masked token representations. Models like BERT [2], RoBERTa [4] and ALBERT [7] are pretrained using MLM. Hybrid language models combine the advantages of autoregressive and autoencoding language models. For example, permutation language modelling (PLM) in XLNet [3] is a hybrid language model. Contrastive SSL allows the model to learn by comparing. Next sentence prediction (NSP) in BERT and sentence order prediction (SOP) in ALBERT are examples of contrastive SSL. NSP involves identifying whether the given sentence pair consists of consecutive sentences or not, while SOP involves identifying whether the sentences in the given pair are swapped or not. Adversarial SSL allows the model to learn by identifying whether the tokens in the input sentence are replaced, shuffled or randomly substituted. Replaced token detection (RTD) in ELECTRA [5], shuffled token detection (STD) [55] and random token substitution (RTS) [56] are examples of Adversarial SSL. For detailed information about SSL and its types, please refer to the survey paper on SSL [49].
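To make the difference between these objectives concrete, the sketch below shows how pseudo-labeled instances could be built from raw text for an autoregressive objective, a masked (autoencoding) objective and an SOP-style contrastive objective. All function names, the `[MASK]` string and the fixed mask positions are illustrative assumptions; real models pick mask positions at random and work on subword ids rather than word strings.

```python
def autoregressive_examples(tokens):
    """Each prefix predicts the next token (left-to-right context only)."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]

def masked_example(tokens, mask_positions, mask_token="[MASK]"):
    """Replace chosen positions with a mask token; labels are the original tokens."""
    masked = [mask_token if i in mask_positions else t
              for i, t in enumerate(tokens)]
    labels = {i: tokens[i] for i in sorted(mask_positions)}
    return masked, labels

def sentence_order_example(first, second, swap):
    """SOP-style pair: label 1 if the two consecutive sentences were swapped."""
    pair = (second, first) if swap else (first, second)
    return pair, int(swap)

tokens = ["the", "cat", "sat", "down"]
print(autoregressive_examples(tokens))
print(masked_example(tokens, {1, 3}))
print(sentence_order_example("He left.", "She stayed.", swap=True))
```

In every case the label comes from the text itself, which is what distinguishes SSL from supervised learning with human annotation.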

3 T-PTLM CORE CONCEPTS

3.1 Pretraining

Pretraining on large volumes of unlabeled text and then fine-tuning on small task-specific datasets has become a standard approach in modern natural language processing. In Computer Vision, large models [16]–[19] based on CNN are pretrained on large labeled datasets like ImageNet [20], [21], and then these models are used in similar target tasks by adding a few task-specific layers [22]. Here pretraining allows the model to learn common image features which are useful in many tasks. Inspired by the success of pretrained image models, NLP researchers developed models like BERT [2], RoBERTa [4], ELECTRA [5], XLNet [3], and T5 [6] by pretraining them on large volumes of unlabeled text using self-supervised learning. Some of the benefits of pretraining are

• It helps the model to learn universal language representations by leveraging large volumes of unlabeled text.

• Pretrained models can be adapted to downstream tasks by just adding one or two task-specific layers. Hence it avoids training the downstream model (except the task-specific layers) from scratch by providing a good initialization.

• It helps the model to perform better even with small datasets and hence reduces the requirement of a large number of labeled instances.

• Deep learning models, having a large number of parameters, tend to overfit on small datasets. As pretraining provides a good initialization, it avoids overfitting on small datasets, and hence pretraining can be viewed as a form of regularization [57].

3.1.1 Pretraining Steps

Pretraining a model involves the following five steps.

1. Prepare the pretraining corpus – The pretraining corpus is obtained from one or more sources of unlabeled text and then cleaned. The BERT [2] model is pretrained on English Wikipedia and BooksCorpus. Further research [3], [4], [6] showed that pretraining the model on a much larger text corpus obtained from multiple sources further improves the performance of the model. Moreover, Lee et al. [58] showed that there is a lot of redundancy in pretraining corpora in the form of near-duplicate sentences and long repetitive substrings. Further, Lee et al. [58] showed that pretraining the model on a deduplicated corpus requires fewer training steps to achieve similar performance.
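The idea of removing redundancy from the corpus can be illustrated with a deliberately simple sketch. It drops lines that are identical after lowercasing and whitespace normalization. This is an assumption-laden simplification and not the method of Lee et al. [58], which targets near-duplicates and long repeated substrings; the function names and the toy corpus are hypothetical.

```python
import re

def normalize(line):
    """Lowercase and collapse whitespace so trivially different copies match."""
    return re.sub(r"\s+", " ", line.strip().lower())

def deduplicate(lines):
    """Keep the first occurrence of each normalized line, preserving order."""
    seen = set()
    kept = []
    for line in lines:
        key = normalize(line)
        if key and key not in seen:  # also skips empty lines
            seen.add(key)
            kept.append(line)
    return kept

corpus = [
    "Transformers use self-attention.",
    "transformers   use self-attention.",
    "",
    "BERT is pretrained with MLM.",
]
print(deduplicate(corpus))
```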

2. Generate the vocabulary – Most transformer-based pretrained language models use tokenizers like WordPiece [59], Byte Pair Encoding (BPE) [60], Byte Level BPE (bBPE) [61], and SentencePiece [62] to generate the vocabulary. Usually, the vocabulary consists of all the unique characters and commonly used subwords and words. The vocabulary is generated by applying one of these tokenizers to the pretraining corpus. Different T-PTLMs use different tokenizers and generate vocabularies of different sizes. For example, BERT uses a WordPiece vocabulary of size around 30K, RoBERTa uses a bBPE vocabulary of size around 50K, XLM [63] uses a BPE vocabulary of size 95K, mBERT [2] uses a WordPiece vocabulary of size 110K, and XLM-R [64] and mBART [65] use a SentencePiece vocabulary of size 250K. The large vocabulary size in multilingual models like XLM, XLM-R, mBERT, and mBART makes sense as they have to represent multiple languages. However, the size of the pretrained model increases with an increase in vocabulary size. This step is optional in the case of character-based T-PTLMs like CharacterBERT [66] and tokenization-free T-PTLMs like CANINE [67], ByT5 [68], and Charformer [69].

Fig. 1: Pretraining corpus
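The vocabulary generation step can be sketched with a toy version of BPE merge learning: start from the unique characters and repeatedly merge the most frequent adjacent symbol pair. The function name, the alphabetical tie-breaking rule and the four-word corpus are assumptions for illustration. Production tokenizers add details such as end-of-word markers, byte-level fallbacks and special tokens that are omitted here.

```python
from collections import Counter

def learn_bpe(words, num_merges):
    """Learn BPE merges from a list of words; returns (merges, vocabulary)."""
    # Represent each word as a tuple of symbols, starting from characters.
    corpus = Counter(tuple(w) for w in words)
    vocab = {ch for symbols in corpus for ch in symbols}
    merges = []
    for _ in range(num_merges):
        # Count adjacent symbol pairs, weighted by word frequency.
        pair_counts = Counter()
        for symbols, freq in corpus.items():
            for pair in zip(symbols, symbols[1:]):
                pair_counts[pair] += freq
        if not pair_counts:
            break
        # Most frequent pair; ties are broken alphabetically for determinism.
        best = min(pair_counts, key=lambda p: (-pair_counts[p], p))
        merges.append(best)
        vocab.add(best[0] + best[1])
        # Rewrite every word with the chosen pair merged into one symbol.
        new_corpus = Counter()
        for symbols, freq in corpus.items():
            merged, i = [], 0
            while i < len(symbols):
                if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == best:
                    merged.append(symbols[i] + symbols[i + 1])
                    i += 2
                else:
                    merged.append(symbols[i])
                    i += 1
            new_corpus[tuple(merged)] += freq
        corpus = new_corpus
    return merges, vocab

merges, vocab = learn_bpe(["low", "low", "lower", "lowest"], num_merges=2)
print(merges)
print(sorted(vocab))
```

The resulting vocabulary contains the unique characters plus the learned subwords, so a larger number of merges yields a larger vocabulary and, in turn, a larger embedding table in the model.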

3. Design the pretraining tasks – During pretraining, the model learns language representations by minimizing losses based on one or more pretraining tasks. A pretraining task should

• be challenging enough to allow the model to learn semantics at word, phrase, sentence, or document level. For example, recent research works [4], [7] questioned the effectiveness of the NSP task, which resulted in new pretraining tasks for learning sentence-level semantics like sentence order prediction [7] and sentence structure prediction [70].

• provide more training signal so that the model learns more language information with less pretraining corpus. For example, RTD provides more training signal compared to MLM because RTD is defined over all the input tokens while MLM is defined over a subset of tokens only [5].

• be close to downstream tasks. For example, the span boundary pretraining task in SpanBERT [71] is close to the span extraction task and the gap sentence generation in PEGASUS [9] is close to the summarization task. Recent research works resulted in better versions of MLM like Swapped Language Modeling [56], which avoids the use of special mask tokens and hence reduces the discrepancy between pretraining and fine-tuning.
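The point about training signal can be made concrete by comparing how many positions each task supervises. In the sketch below, MLM yields labels only at masked positions, whereas RTD yields a binary label at every position. The function names and the example sentence are assumptions for illustration, and the corrupted sequence stands in for the output of a generator network such as the one used in ELECTRA.

```python
def mlm_labels(original, masked_positions):
    """MLM supervises only the masked positions."""
    return {i: original[i] for i in sorted(masked_positions)}

def rtd_labels(original, corrupted):
    """RTD supervises every position: 1 if the token was replaced, else 0."""
    return [int(o != c) for o, c in zip(original, corrupted)]

original = ["the", "chef", "cooked", "the", "meal"]
corrupted = ["the", "chef", "ate", "the", "meal"]  # hypothetical generator output

mlm = mlm_labels(original, {2})
rtd = rtd_labels(original, corrupted)
print(len(mlm), "supervised position(s) for MLM")
print(len(rtd), "supervised position(s) for RTD")
```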

4. Choose the pretraining method – Training a new model from scratch using SSL only is highly expensive and consumes a lot of pretraining time. Instead of training from scratch using SSL only, pretraining methods like KIPT [72], [73], which pretrain a model using both SSL and knowledge distillation (KD), can be used. In the case of adapting general models to specific domains, pretraining methods like continual pretraining with new vocabulary [74]–[77] or adapt and distill [78] can be used. To pretrain a domain-specific model with a limited domain-specific corpus, simultaneous pretraining, which leverages both general and in-domain corpora, can be used [79].

5. Choose the pretraining dynamics – The BERT model is pretrained on sentence pairs with static masking in small batch sizes. Liu et al. [4] showed that carefully designed pretraining choices like dynamic masking, large batch sizes, more pretraining steps, and long input sequences further enhance the performance of the model. Moreover, since large batch sizes may cause difficulty in optimization, it is recommended to a) linearly increase the learning rate in the early pretraining steps and b) use different learning rates in different layers, which can also help to speed up convergence [80].
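The two recommendations in step 5 can be sketched as follows. The first function implements a linear learning-rate warmup. The second assigns a different learning rate to each layer through a simple geometric decay, which is an assumed scheme chosen for illustration and is not claimed to be the layer-wise method of [80]. All names and numeric values are hypothetical, and holding the rate constant after warmup is a simplification.

```python
def warmup_lr(step, peak_lr, warmup_steps):
    """Linearly increase the learning rate to peak_lr, then hold it constant."""
    if step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps
    return peak_lr

def layerwise_lrs(base_lr, num_layers, decay):
    """One learning rate per layer; the top layer gets base_lr, lower layers less."""
    return [base_lr * decay ** (num_layers - 1 - i) for i in range(num_layers)]

# Learning rate over the first few steps with a 4-step warmup.
print([warmup_lr(s, peak_lr=1.0, warmup_steps=4) for s in range(6)])

# Per-layer learning rates for a toy 3-layer model.
print(layerwise_lrs(base_lr=1.0, num_layers=3, decay=0.5))
```

In practice the warmup phase is usually followed by a decay schedule rather than a constant rate.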

3.1.2 Pretraining Corpus

Self-supervised learning to pretrain T-PTLMs requires large volumes of pretraining data. As shown in Figure 1, the pretraining corpus can be classified into four types. The characteristics of the text differ from one type of corpus to another. For example, in the general domain, the text is less noisy and written formally by professionals. In social media, the text is mostly noisy and written colloquially by the general public. Moreover, many specific domains like Biomedical and Finance contain many domain-specific words which are not used in the general domain. In general, the performance of general domain models in domain-specific tasks is limited [45]. So, we have to choose the pretraining corpus depending on the target domain to
