
A 2016 arXiv paper on meta-learning.


One-shot Learning with Memory-Augmented Neural Networks

Adam Santoro ADAMSANTORO@GOOGLE.COM

Google DeepMind

Sergey Bartunov SBOS@SBOS.IN

Google DeepMind, National Research University Higher School of Economics (HSE)

Matthew Botvinick BOTVINICK@GOOGLE.COM

Daan Wierstra WIERSTRA@GOOGLE.COM

Timothy Lillicrap COUNTZERO@GOOGLE.COM

Google DeepMind

Abstract

Despite recent breakthroughs in the applications of deep neural networks, one setting that presents a persistent challenge is that of “one-shot learning.” Traditional gradient-based networks require a lot of data to learn, often through extensive iterative training. When new data is encountered, the models must inefficiently relearn their parameters to adequately incorporate the new information without catastrophic interference. Architectures with augmented memory capacities, such as Neural Turing Machines (NTMs), offer the ability to quickly encode and retrieve new information, and hence can potentially obviate the downsides of conventional models. Here, we demonstrate the ability of a memory-augmented neural network to rapidly assimilate new data, and leverage this data to make accurate predictions after only a few samples. We also introduce a new method for accessing an external memory that focuses on memory content, unlike previous methods that additionally use memory location-based focusing mechanisms.

1. Introduction

The current success of deep learning hinges on the ability to apply gradient-based optimization to high-capacity models. This approach has achieved impressive results on many large-scale supervised tasks with raw sensory input, such as image classification (He et al., 2015), speech recognition (Yu & Deng, 2012), and games (Mnih et al., 2015; Silver et al., 2016). Notably, performance in such tasks is typically evaluated after extensive, incremental training on large data sets. In contrast, many problems of interest require rapid inference from small quantities of data. In the limit of “one-shot learning,” single observations should result in abrupt shifts in behavior.

This kind of flexible adaptation is a celebrated aspect of human learning (Jankowski et al., 2011), manifesting in settings ranging from motor control (Braun et al., 2009) to the acquisition of abstract concepts (Lake et al., 2015). Generating novel behavior based on inference from a few scraps of information (e.g., inferring the full range of applicability for a new word, heard in only one or two contexts) is something that has remained stubbornly beyond the reach of contemporary machine intelligence. It appears to present a particularly daunting challenge for deep learning. In situations when only a few training examples are presented one-by-one, a straightforward gradient-based solution is to completely re-learn the parameters from the data available at the moment. Such a strategy is prone to poor learning and/or catastrophic interference. In view of these hazards, non-parametric methods are often considered to be better suited.

However, previous work does suggest one potential strategy for attaining rapid learning from sparse data, which hinges on the notion of meta-learning (Thrun, 1998; Vilalta & Drissi, 2002). Although the term has been used in numerous senses (Schmidhuber et al., 1997; Caruana, 1997; Schweighofer & Doya, 2003; Brazdil et al., 2003), meta-learning generally refers to a scenario in which an agent learns at two levels, each associated with different time scales. Rapid learning occurs within a task, for example, when learning to accurately classify within a particular dataset. This learning is guided by knowledge accrued more gradually across tasks, which captures the way in which task structure varies across target domains (Giraud-Carrier et al., 2004; Rendell et al., 1987; Thrun, 1998). Given its two-tiered organization, this form of meta-learning is often described as “learning to learn.”

arXiv:1605.06065v1 [cs.LG] 19 May 2016

It has been proposed that neural networks with memory capacities could prove quite capable of meta-learning (Hochreiter et al., 2001). These networks shift their bias through weight updates, but also modulate their output by learning to rapidly cache representations in memory stores (Hochreiter & Schmidhuber, 1997). For example, LSTMs trained to meta-learn can quickly learn never-before-seen quadratic functions with a low number of data samples (Hochreiter et al., 2001).

Neural networks with a memory capacity provide a promising approach to meta-learning in deep networks. However, the specific strategy of using the memory inherent in unstructured recurrent architectures is unlikely to extend to settings where each new task requires significant amounts of new information to be rapidly encoded. A scalable solution has a few necessary requirements. First, information must be stored in memory in a representation that is both stable (so that it can be reliably accessed when needed) and element-wise addressable (so that relevant pieces of information can be accessed selectively). Second, the number of parameters should not be tied to the size of the memory. These two characteristics do not arise naturally within standard memory architectures, such as LSTMs. However, recent architectures, such as Neural Turing Machines (NTMs) (Graves et al., 2014) and memory networks (Weston et al., 2014), meet the requisite criteria. And so, in this paper we revisit the meta-learning problem and setup from the perspective of a highly capable memory-augmented neural network (MANN) (note: from here on, the term MANN will refer to the class of external-memory equipped networks, and not other “internal” memory-based architectures, such as LSTMs).

We demonstrate that MANNs are capable of meta-learning in tasks that carry significant short- and long-term memory demands. This manifests as successful classification of never-before-seen Omniglot classes at human-like accuracy after only a few presentations, and principled function estimation based on a small number of samples. Additionally, we outline a memory access module that emphasizes memory access by content, rather than additionally by memory location as in original implementations of the NTM (Graves et al., 2014). Our approach combines the best of two worlds: the ability to slowly learn an abstract method for obtaining useful representations of raw data, via gradient descent, and the ability to rapidly bind never-before-seen information after a single presentation, via an external memory module. The combination supports robust meta-learning, extending the range of problems to which deep learning can be effectively applied.

2. Meta-Learning Task Methodology

Usually, we try to choose parameters $\theta$ to minimize a learning cost $\mathcal{L}$ across some dataset $D$. However, for meta-learning, we choose parameters to reduce the expected learning cost across a distribution of datasets $p(D)$:

$$\theta^{*} = \arg\min_{\theta} \; \mathbb{E}_{D \sim p(D)}\big[\mathcal{L}(D; \theta)\big]. \qquad (1)$$
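As a minimal sketch of what Eq. (1) asks for, the snippet below estimates the expectation over datasets by Monte Carlo sampling and then takes a crude grid-search argmin. Everything in it is an illustrative assumption rather than the paper's setup: the hypothetical distribution `sample_dataset` draws linear datasets $y = a x$ with a random slope $a \sim U(0, 2)$, and the toy predictor $\hat{y} = \theta x$ has no within-episode memory. It therefore only illustrates the outer expectation over $p(D)$, not a MANN.

```python
import random
from statistics import fmean


def sample_dataset(rng, T=10):
    """Hypothetical p(D): T points of y = a * x, with slope a ~ U(0, 2) drawn per dataset."""
    a = rng.uniform(0.0, 2.0)
    xs = [rng.uniform(-1.0, 1.0) for _ in range(T)]
    return [(x, a * x) for x in xs]


def dataset_loss(dataset, theta):
    """L(D; theta): mean squared error of the toy predictor y_hat = theta * x on one dataset."""
    return fmean((theta * x - y) ** 2 for x, y in dataset)


def expected_loss(theta, n_datasets=2000, seed=0):
    """Monte Carlo estimate of E_{D ~ p(D)}[L(D; theta)].

    A fixed seed means every theta is scored on the same sampled datasets.
    """
    rng = random.Random(seed)
    return fmean(dataset_loss(sample_dataset(rng), theta) for _ in range(n_datasets))


# Crude argmin over a grid of candidate parameters 0.0, 0.05, ..., 2.0.
grid = [i / 20 for i in range(41)]
theta_star = min(grid, key=expected_loss)
print(theta_star)
```

Under this assumed $p(D)$ the expected loss is smallest near $\theta = 1$, the mean slope, so the grid search should return a value close to 1.0; a real meta-learner would replace the grid search with gradient descent on $\theta$.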

To accomplish this, proper task setup is critical (Hochre-

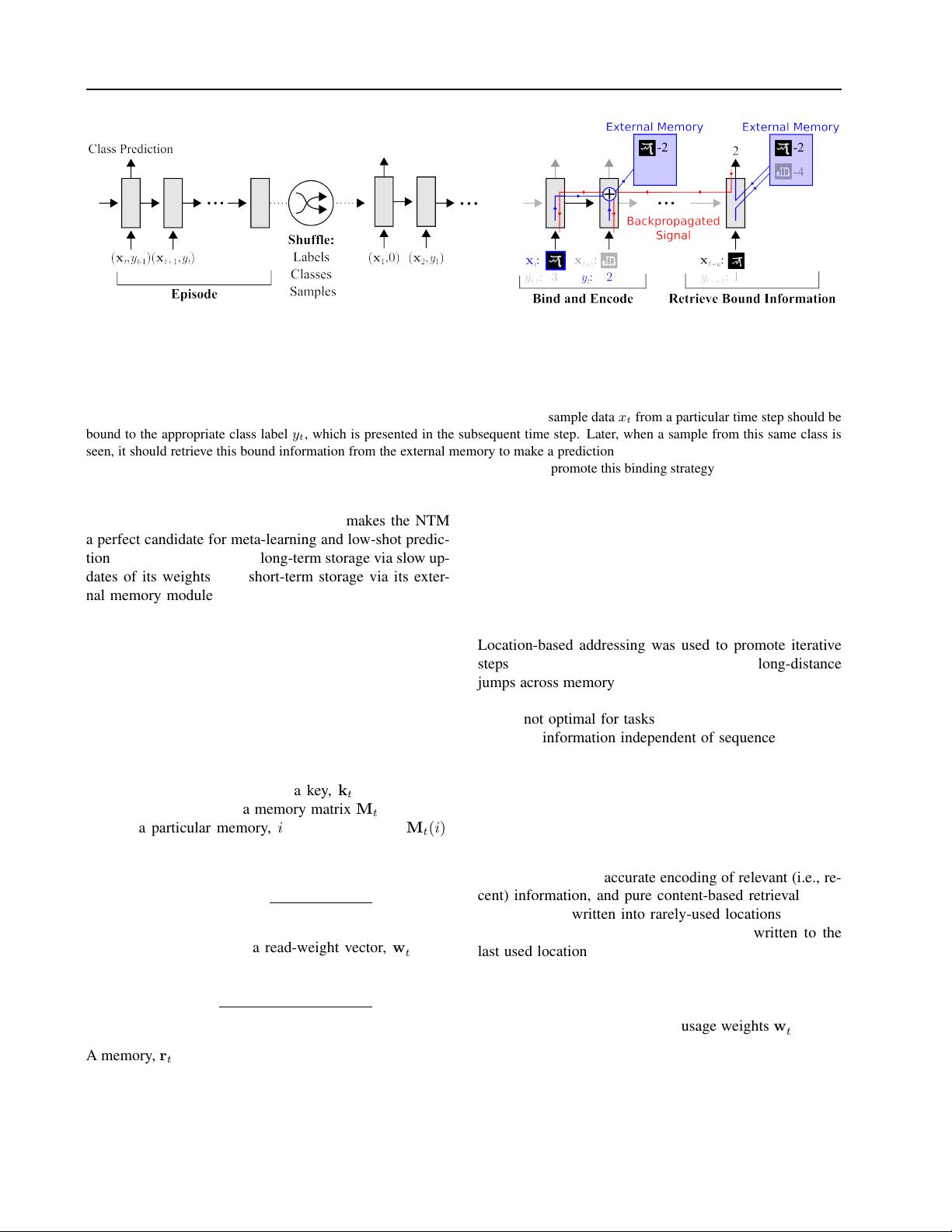

iter et al., 2001). In our setup, a task, or episode, in-

volves the presentation of some dataset D = {d

t

}

T

t=1

=

{(x

t

, y

t

)}

T

t=1

. For classification, y

t

is the class label for

an image x

t

, and for regression, y

t

is the value of a hid-

den function for a vector with real-valued elements x

t

, or

simply a real-valued number x

t

(here on, for consistency,

x

t

will be used). In this setup, y

t

is both a target, and

is presented as input along with x

t

, in a temporally off-

set manner; that is, the network sees the input sequence

(x

1

, null), (x

2

, y

1

), . . . , (x

T

, y

T −1

). And so, at time t the

correct label for the previous data sample (y

t−1

) is pro-

vided as input along with a new query x

t

(see Figure 1 (a)).
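The temporally offset input sequence can be sketched in a few lines. This is a minimal illustration, assuming samples are stand-in strings rather than images, labels are plain integers rather than one-hot vectors, and Python's `None` plays the role of the null label; `offset_inputs` is a hypothetical helper name.

```python
def offset_inputs(dataset):
    """Turn an episode D = [(x_1, y_1), ..., (x_T, y_T)] into inputs and targets.

    The input at step t is (x_t, y_{t-1}); the first step receives a null label (None).
    The target at step t is y_t, so each label is seen one step after its sample.
    """
    xs = [x for x, _ in dataset]
    ys = [y for _, y in dataset]
    prev_labels = [None] + ys[:-1]
    return list(zip(xs, prev_labels)), ys


episode = [("img_a", 0), ("img_b", 1), ("img_c", 0)]
inputs, targets = offset_inputs(episode)
print(inputs)   # [('img_a', None), ('img_b', 0), ('img_c', 1)]
print(targets)  # [0, 1, 0]
```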

The network is tasked to output the appropriate label for $x_t$ (i.e., $y_t$) at the given timestep. Importantly, labels are shuffled from dataset to dataset. This prevents the network from slowly learning sample-class bindings in its weights. Instead, it must learn to hold data samples in memory until the appropriate labels are presented at the next timestep, after which sample-class information can be bound and stored for later use (see Figure 1 (b)). Thus, for a given episode, ideal performance involves a random guess for the first presentation of a class (since the appropriate label cannot be inferred from previous episodes, due to label shuffling), and the use of memory to achieve perfect accuracy thereafter. Ultimately, the system aims at modelling the predictive distribution $p(y_t \mid x_t, D_{1:t-1}; \theta)$, inducing a corresponding loss at each time step.
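Per-episode label shuffling, and the ideal performance it implies, can be sketched as follows. This is a minimal illustration under stated assumptions: `sample_episode` and `ideal_expected_accuracy` are hypothetical helpers, samples are stand-in strings, and the idealized learner is assumed to recognize each sample's class, guess uniformly over all `n_classes` labels on a class's first presentation, and recall the bound label perfectly afterwards.

```python
import random


def sample_episode(samples_by_class, n_classes, length, rng):
    """Draw classes for one episode and bind them to freshly shuffled labels 0..n_classes-1."""
    classes = rng.sample(sorted(samples_by_class), n_classes)
    labels = list(range(n_classes))
    rng.shuffle(labels)  # a new class -> label binding for every episode
    class_to_label = dict(zip(classes, labels))
    class_seq = [rng.choice(classes) for _ in range(length)]
    episode = [(rng.choice(samples_by_class[c]), class_to_label[c]) for c in class_seq]
    return episode, class_seq


def ideal_expected_accuracy(class_seq, n_classes):
    """Expected per-step accuracy of the idealized learner described above."""
    seen, acc = set(), []
    for c in class_seq:
        # Chance on a class's first presentation, perfect recall on later ones.
        acc.append(1.0 if c in seen else 1.0 / n_classes)
        seen.add(c)  # its label arrives as input at the next step and can be stored
    return acc


rng = random.Random(0)
data = {"cat": ["cat_0", "cat_1"], "dog": ["dog_0", "dog_1"], "owl": ["owl_0", "owl_1"]}
episode, class_seq = sample_episode(data, n_classes=2, length=6, rng=rng)
print(episode)
print(ideal_expected_accuracy(class_seq, n_classes=2))
```

Because the binding is redrawn in every episode, the same class can carry different labels in different episodes, which is exactly what stops the weights from memorizing sample-class pairs.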

This task structure incorporates exploitable meta-knowledge: a model that meta-learns would learn to bind data representations to their appropriate labels regardless of the actual content of the data representation or label, and would employ a general scheme to map these bound representations to appropriate classes or function values for prediction.

3. Memory-Augmented Model

3.1. Neural Turing Machines

The Neural Turing Machine is a fully differentiable implementation of a MANN. It consists of a controller, such as a feed-forward network or LSTM, which interacts with an external memory module using a number of read and write heads (Graves et al., 2014). Memory encoding and retrieval in an NTM external memory module is rapid, with vector representations being placed into or taken out of memory potentially every time-step. This ability makes the NTM a perfect candidate for meta-learning and low-shot prediction, as it is capable of both long-term storage via slow updates of its weights, and short-term storage via its external memory module. Thus, if an NTM can learn a general strategy for the types of representations it should place into memory and how it should later use these representations for predictions, then it may be able to use its speed to make accurate predictions of data that it has only seen once.

(a) Task setup (b) Network strategy

Figure 1. Task structure. (a) Omniglot images (or x-values for regression), $x_t$, are presented with time-offset labels (or function values), $y_{t-1}$, to prevent the network from simply mapping the class labels to the output. From episode to episode, the classes to be presented in the episode, their associated labels, and the specific samples are all shuffled. (b) A successful strategy would involve the use of an external memory to store bound sample representation-class label information, which can then be retrieved at a later point for successful classification when a sample from an already-seen class is presented. Specifically, sample data $x_t$ from a particular time step should be bound to the appropriate class label $y_t$, which is presented in the subsequent time step. Later, when a sample from this same class is seen, the network should retrieve this bound information from the external memory to make a prediction. Backpropagated error signals from this prediction step will then shape the weight updates from the earlier steps in order to promote this binding strategy.

The controllers employed in our model are either LSTMs or feed-forward networks. The controller interacts with an external memory module using read and write heads, which act to retrieve representations from memory or place them into memory, respectively. Given some input, $x_t$, the controller produces a key, $k_t$, which is then either stored in a row of a memory matrix $M_t$, or used to retrieve a particular memory, $i$, from a row; i.e., $M_t(i)$.

When retrieving a memory, $M_t$ is addressed using the cosine similarity measure,

$$K\big(k_t, M_t(i)\big) = \frac{k_t \cdot M_t(i)}{\lVert k_t \rVert \, \lVert M_t(i) \rVert}, \qquad (2)$$

which is used to produce a read-weight vector, $w^r_t$, with elements computed according to a softmax:

$$w^r_t(i) \leftarrow \frac{\exp\big(K(k_t, M_t(i))\big)}{\sum_j \exp\big(K(k_t, M_t(j))\big)}. \qquad (3)$$

A memory, $r_t$, is retrieved using this weight vector:

$$r_t \leftarrow \sum_i w^r_t(i)\, M_t(i). \qquad (4)$$

This memory is used by the controller as the input to a classifier, such as a softmax output layer, and as an additional input for the next controller state.
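The content-based read of Eqs. (2)-(4) can be sketched with plain Python lists. This is a minimal, non-batched illustration, not the authors' implementation: the small `eps` in the denominator is an assumption added to avoid division by zero for all-zero rows, and subtracting the maximum similarity before exponentiating is a standard stability trick that leaves the softmax unchanged.

```python
import math


def cosine_similarity(u, v, eps=1e-8):
    """Eq. (2): K(k_t, M_t(i)) = k_t . M_t(i) / (||k_t|| ||M_t(i)||); eps is an assumption."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / (norm + eps)


def read_weights(key, memory):
    """Eq. (3): softmax over the cosine similarities between the key and every memory row."""
    sims = [cosine_similarity(key, row) for row in memory]
    m = max(sims)  # subtracting the max does not change the softmax
    exps = [math.exp(s - m) for s in sims]
    z = sum(exps)
    return [e / z for e in exps]


def read(key, memory):
    """Eq. (4): r_t = sum_i w_t^r(i) M_t(i), returned together with the read weights."""
    w = read_weights(key, memory)
    width = len(memory[0])
    r = [sum(w[i] * memory[i][j] for i in range(len(memory))) for j in range(width)]
    return r, w


memory = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
r, w = read([1.0, 0.0, 0.0], memory)
print(w)
print(r)
```

Because cosine similarities lie in $[-1, 1]$, the softmax in this toy example stays fairly soft: the exactly matching row receives only about $e/(e+2) \approx 0.58$ of the read weight.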

3.2. Least Recently Used Access

In previous instantiations of the NTM (Graves et al., 2014), memories were addressed by both content and location. Location-based addressing was used to promote iterative steps, akin to running along a tape, as well as long-distance jumps across memory. This method was advantageous for sequence-based prediction tasks. However, this type of access is not optimal for tasks that emphasize a conjunctive coding of information independent of sequence. As such, writing to memory in our model involves the use of a newly designed access module called the Least Recently Used Access (LRUA) module.

The LRUA module is a pure content-based memory writer that writes memories to either the least used memory location or the most recently used memory location. This module emphasizes accurate encoding of relevant (i.e., recent) information, and pure content-based retrieval. New information is written into rarely-used locations, preserving recently encoded information, or it is written to the last used location, which can function as an update of the memory with newer, possibly more relevant information.
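The choice between writing to the least-used slot and writing to the last-used slot can be sketched as a gated blend of two weightings. This is a minimal sketch under assumptions, not the module's exact definition: the scalar gate logit `alpha` passed through a sigmoid, the one-hot least-used weighting, and the additive `write` rule are all illustrative choices, and every function name here is hypothetical.

```python
import math


def least_used_weights(usage):
    """One-hot weighting on the memory slot with the smallest usage (ties go to the first)."""
    i_min = min(range(len(usage)), key=usage.__getitem__)
    return [1.0 if i == i_min else 0.0 for i in range(len(usage))]


def lrua_write_weights(prev_read_weights, usage, alpha):
    """Blend 'write where we last read' with 'write to the least-used slot'.

    alpha is an assumed scalar gate logit: sigmoid(alpha) near 1 favours the slots read
    at the previous step; sigmoid(alpha) near 0 favours the least-used slot.
    """
    g = 1.0 / (1.0 + math.exp(-alpha))
    lu = least_used_weights(usage)
    return [g * r + (1.0 - g) * u for r, u in zip(prev_read_weights, lu)]


def write(memory, write_weights, key):
    """Assumed additive write: add the key to each row in proportion to its write weight."""
    return [[m + w * k for m, k in zip(row, key)] for row, w in zip(memory, write_weights)]


prev_read = [0.0, 0.0, 1.0]  # slot 2 was read at the previous step
usage = [0.9, 0.1, 0.5]      # slot 1 is the least used
print(lrua_write_weights(prev_read, usage, alpha=-10.0))  # mostly slot 1 (least used)
print(lrua_write_weights(prev_read, usage, alpha=10.0))   # mostly slot 2 (last used)
```

A soft gate rather than a hard switch keeps the choice differentiable, so the controller can learn from the loss when to preserve old content and when to overwrite it.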

The distinction between these two options is accomplished with an interpolation between the previous read weights and weights scaled according to usage weights $w^u_t$. These usage weights are updated at each time-step by decaying the previous usage weights and adding the current read and
