没有合适的资源?快使用搜索试试~ 我知道了~

首页Megastore: Providing Scalable, Highly Available Storage for Interactive Services

Megastore: Providing Scalable, Highly Available Storage for Interactive Services Jason Baker, Chris Bond, James C. Corbett, JJ Furman, Andrey Khorlin, James Larson, Jean-Michel L ´eon, Yawei Li, Alexander Lloyd, Vadim Yushprakh Google, Inc. fjasonbaker,chrisbond,jcorbett,jfurman,akhorlin,jimlarson,jm,yaweili,alloyd,vadimyg@google.com

资源详情

资源评论

资源推荐

Megastore: Providing Scalable, Highly Available

Storage for Interactive Ser vices

Jason Baker, Chris Bond, James C. Corbett, JJ Furman, Andrey Khorlin, James Larson,

Jean-Michel L

´

eon, Yawei Li, Alexander Lloyd, Vadim Yushprakh

Google, Inc.

{jasonbaker,chrisbond,jcorbett,jfurman,akhorlin,jimlarson,jm,yaweili,alloyd,vadimy}@google.com

ABSTRACT

Megastore is a storage system developed to meet the re-

quirements of today’s interactive online services. Megas-

tore blends the scalability of a NoSQL datastore with the

convenience of a traditional RDBMS in a novel way, and

provides both strong consistency guarantees and high avail-

ability. We provide fully serializable ACID semantics within

fine-grained partitions of data. This partitioning allows us

to synchronously replicate each write across a wide area net-

work with reasonable latency and support seamless failover

b etween datacenters. This paper describ es Megastore’s se-

mantics and replication algorithm. It also describes our ex-

p erience supporting a wide range of Google production ser-

vices built with Megastore.

Categories and Subject Descriptors

C.2.4 [Distributed Systems]: Distributed databases; H.2.4

[Database Management]: Systems—concurrency, distrib-

uted databases

General Terms

Algorithms, Design, Performance, Reliability

Keywords

Large databases, Distributed transactions, Bigtable, Paxos

1. INTRODUCTION

Interactive online services are forcing the storage commu-

nity to meet new demands as desktop applications migrate

to the cloud. Services like email, collaborative documents,

and social networking have been growing exponentially and

are testing the limits of existing infrastructure. Meeting

these services’ storage demands is challenging due to a num-

b er of conflicting requirements.

First, the Internet brings a huge audience of potential

users, so the applications must be highly scalable. A service

This article is published under a Creative Commons Attribution License

(http://creativecommons.org/licenses/by/3.0/), which permits distribution

and reproduction in any medium as well allowing derivative works, pro-

vided that you attribute the original work to the author(s) and CIDR 2011.

5

th

Biennial Conference on Innovative Data Systems Research (CIDR ’11)

January 9-12, 2011, Asilomar, California, USA.

can be built rapidly using MySQL [10] as its datastore, but

scaling the service to millions of users requires a complete

redesign of its storage infrastructure. Second, services must

comp ete for users. This requires rapid development of fea-

tures and fast time-to-market. Third, the service must be

resp onsive; hence, the storage system must have low latency.

Fourth, the service should provide the user with a consistent

view of the data—the result of an update should be visible

immediately and durably. Seeing edits to a cloud-hosted

spreadsheet vanish, however briefly, is a poor user experi-

ence. Finally, users have come to expect Internet services to

b e up 24/7, so the service must be highly available. The ser-

vice must be resilient to many kinds of faults ranging from

the failure of individual disks, machines, or routers all the

way up to large-scale outages affecting entire datacenters.

These requirements are in conflict. Relational databases

provide a rich set of features for easily building applications,

but they are difficult to scale to hundreds of millions of

users. NoSQL datastores such as Google’s Bigtable [15],

Apache Hadoop’s HBase [1], or Facebook’s Cassandra [6]

are highly scalable, but their limited API and loose consis-

tency models complicate application development. Repli-

cating data across distant datacenters while providing low

latency is challenging, as is guaranteeing a consistent view

of replicated data, esp ecially during faults.

Megastore is a storage system developed to meet the stor-

age requirements of today’s interactive online services. It

is novel in that it blends the scalability of a NoSQL data-

store with the convenience of a traditional RDBMS. It uses

synchronous replication to achieve high availability and a

consistent view of the data. In brief, it provides fully serial-

izable ACID semantics over distant replicas with low enough

latencies to support interactive applications.

We accomplish this by taking a middle ground in the

RDBMS vs. NoSQL design space: we partition the data-

store and replicate each partition separately, providing full

ACID semantics within partitions, but only limited con-

sistency guarantees across them. We provide traditional

database features, such as secondary indexes, but only those

features that can scale within user-tolerable latency limits,

and only with the semantics that our partitioning scheme

can supp ort. We contend that the data for most Internet

services can be suitably partitioned (e.g., by user) to make

this approach viable, and that a small, but not spartan, set

of features can substantially ease the burden of developing

cloud applications.

Contrary to conventional wisdom [24, 28], we were able to

use Paxos [27] to build a highly available system that pro-

223

vides reasonable latencies for interactive applications while

synchronously replicating writes across geographically dis-

tributed datacenters. While many systems use Paxos solely

for locking, master election, or replication of metadata and

configurations, we believe that Megastore is the largest sys-

tem deployed that uses Paxos to replicate primary user data

across datacenters on every write.

Megastore has been widely deployed within Google for

several years [20]. It handles more than three billion write

and 20 billion read transactions daily and stores nearly a

p etabyte of primary data across many global datacenters.

The key contributions of this paper are:

1. the design of a data model and storage system that

allows rapid development of interactive applications

where high availability and scalability are built-in from

the start;

2. an implementation of the Paxos replication and con-

sensus algorithm optimized for low-latency operation

across geographically distributed datacenters to pro-

vide high availability for the system;

3. a report on our experience with a large-scale deploy-

ment of Megastore at Google.

The paper is organized as follows. Section 2 describes how

Megastore provides availability and scalability using parti-

tioning and also justifies the sufficiency of our design for

many interactive Internet applications. Section 3 provides

an overview of Megastore’s data model and features. Sec-

tion 4 explains the replication algorithms in detail and gives

some measurements on how they perform in practice. Sec-

tion 5 summarizes our experience developing the system.

We review related work in Section 6. Section 7 concludes.

2. TOWARD AVAILABILITY AND SCALE

In contrast to our need for a storage platform that is

global, reliable, and arbitrarily large in scale, our hardware

building blocks are geographically confined, failure-prone,

and suffer limited capacity. We must bind these compo-

nents into a unified ensemble offering greater throughput

and reliability.

To do so, we have taken a two-pronged approach:

• for availability, we implemented a synchronous, fault-

tolerant log replicator optimized for long distance-links;

• for scale, we partitioned data into a vast space of small

databases, each with its own replicated log stored in a

per-replica NoSQL datastore.

2.1 Replication

Replicating data across hosts within a single datacenter

improves availability by overcoming host-specific failures,

but with diminishing returns. We still must confront the

networks that connect them to the outside world and the

infrastructure that powers, cools, and houses them. Eco-

nomically constructed sites risk some level of facility-wide

outages [25] and are vulnerable to regional disasters. For

cloud storage to meet availability demands, service providers

must replicate data over a wide geographic area.

2.1.1 Strategies

We evaluated common strategies for wide-area replication:

Asynchronous Master/Slave A master node replicates

write-ahead log entries to at least one slave. Log ap-

pends are acknowledged at the master in parallel with

transmission to slaves. The master can support fast

ACID transactions but risks downtime or data loss

during failover to a slave. A consensus protocol is re-

quired to mediate mastership.

Synchronous Master/Slave A master waits for changes

to be mirrored to slaves before acknowledging them,

allowing failover without data loss. Master and slave

failures need timely detection by an external system.

Optimistic Replication Any member of a homogeneous

replica group can accept mutations [23], which are

asynchronously propagated through the group. Avail-

ability and latency are excellent. However, the global

mutation ordering is not known at commit time, so

transactions are impossible.

We avoided strategies which could lose data on failures,

which are common in large-scale systems. We also discarded

strategies that do not permit ACID transactions. Despite

the operational advantages of eventually consistent systems,

it is currently too difficult to give up the read-modify-write

idiom in rapid application development.

We also discarded options with a heavyweight master.

Failover requires a series of high-latency stages often caus-

ing a user-visible outage, and there is still a huge amount

of complexity. Why build a fault-tolerant system to arbi-

trate mastership and failover workflows if we could avoid

distinguished masters altogether?

2.1.2 Enter Paxos

We decided to use Paxos, a proven, optimal, fault-tolerant

consensus algorithm with no requirement for a distinguished

master [14, 27]. We replicate a write-ahead log over a group

of symmetric peers. Any node can initiate reads and writes.

Each log append blocks on acknowledgments from a ma-

jority of replicas, and replicas in the minority catch up as

they are able—the algorithm’s inherent fault tolerance elim-

inates the need for a distinguished “failed” state. A novel

extension to Paxos, detailed in Section 4.4.1, allows local

reads at any up-to-date replica. Another extension permits

single-roundtrip writes.

Even with fault tolerance from Paxos, there are limita-

tions to using a single log. With replicas spread over a

wide area, communication latencies limit overall through-

put. Moreover, progress is impeded when no replica is cur-

rent or a majority fail to acknowledge writes. In a traditional

SQL database hosting thousands or millions of users, us-

ing a synchronously replicated log would risk interruptions

of widespread impact [11]. So to improve availability and

throughput we use multiple replicated logs, each governing

its own partition of the data set.

2.2 Partitioning and Locality

To scale our replication scheme and maximize performance

of the underlying datastore, we give applications fine-grained

control over their data’s partitioning and locality.

2.2.1 Entity Groups

To scale throughput and localize outages, we partition our

data into a collection of entity groups [24], each indepen-

dently and synchronously replicated over a wide area. The

underlying data is stored in a scalable NoSQL datastore in

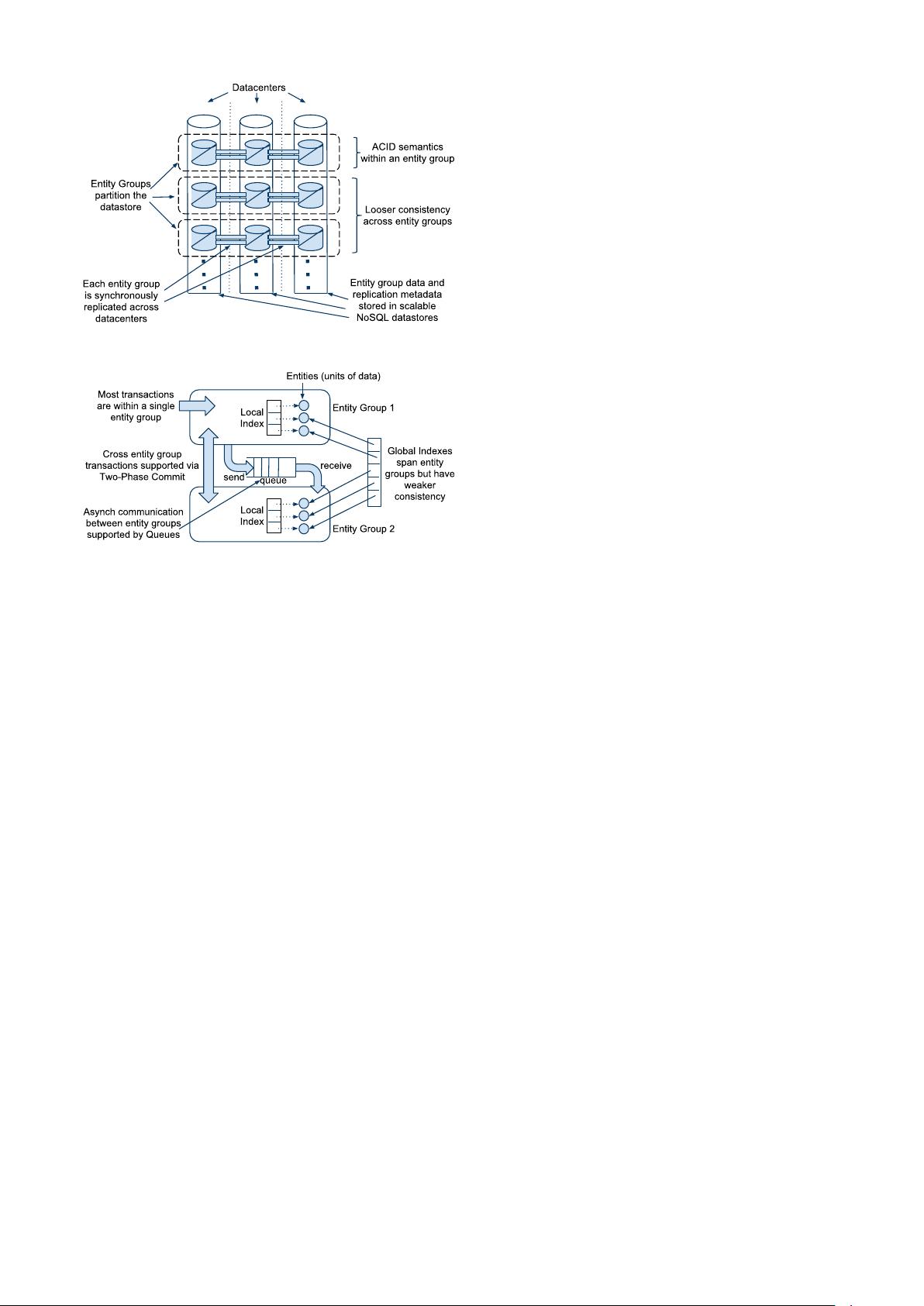

each datacenter (see Figure 1).

Entities within an entity group are mutated with single-

phase ACID transactions (for which the commit record is

224

Figure 1: Scalable Replication

Figure 2: Operations Across Entity Groups

replicated via Paxos). Operations across entity groups could

rely on expensive two-phase commits, but typically leverage

Megastore’s efficient asynchronous messaging. A transac-

tion in a sending entity group places one or more messages

in a queue; transactions in receiving entity groups atomically

consume those messages and apply ensuing mutations.

Note that we use asynchronous messaging between logi-

cally distant entity groups, not physically distant replicas.

All network traffic between datacenters is from replicated

op erations, which are synchronous and consistent.

Indexes local to an entity group obey ACID semantics;

those across entity groups have looser consistency. See Fig-

ure 2 for the various operations on and between entity groups.

2.2.2 Selecting Entity Group Boundaries

The entity group defines the a priori grouping of data

for fast op erations. Boundaries that are too fine-grained

force excessive cross-group operations, but placing too much

unrelated data in a single group serializes unrelated writes,

which degrades throughput.

The following examples show ways applications can work

within these constraints:

Email Each email account forms a natural entity group.

Operations within an account are transactional and

consistent: a user who sends or labels a message is

guaranteed to observe the change despite possible fail-

over to another replica. External mail routers handle

communication between accounts.

Blogs A blogging application would be modeled with mul-

tiple classes of entity groups. Each user has a profile,

which is naturally its own entity group. However, blogs

are collaborative and have no single permanent owner.

We create a second class of entity groups to hold the

p osts and metadata for each blog. A third class keys

off the unique name claimed by each blog. The appli-

cation relies on asynchronous messaging when a sin-

gle user operation affects both blogs and profiles. For

a lower-traffic operation like creating a new blog and

claiming its unique name, two-phase commit is more

convenient and performs adequately.

Maps Geographic data has no natural granularity of any

consistent or convenient size. A mapping application

can create entity groups by dividing the glob e into non-

overlapping patches. For mutations that span patches,

the application uses two-phase commit to make them

atomic. Patches must be large enough that two-phase

transactions are uncommon, but small enough that

each patch requires only a small write throughput.

Unlike the previous examples, the number of entity

groups does not grow with increased usage, so enough

patches must be created initially for sufficient aggre-

gate throughput at later scale.

Nearly all applications built on Megastore have found nat-

ural ways to draw entity group boundaries.

2.2.3 Physical Layout

We use Google’s Bigtable [15] for scalable fault-tolerant

storage within a single datacenter, allowing us to support

arbitrary read and write throughput by spreading operations

across multiple rows.

We minimize latency and maximize throughput by let-

ting applications control the placement of data: through the

selection of Bigtable instances and specification of locality

within an instance.

To minimize latency, applications try to keep data near

users and replicas near each other. They assign each entity

group to the region or continent from which it is accessed

most. Within that region they assign a triplet or quintuplet

of replicas to datacenters with isolated failure domains.

For low latency, cache efficiency, and throughput, the data

for an entity group are held in contiguous ranges of Bigtable

rows. Our schema language lets applications control the

placement of hierarchical data, storing data that is accessed

together in nearby rows or denormalized into the same row.

3. A TOUR OF MEGASTORE

Megastore maps this architecture onto a feature set care-

fully chosen to encourage rapid development of scalable ap-

plications. This section motivates the tradeoffs and de-

scrib es the developer-facing features that result.

3.1 API Design Philosophy

ACID transactions simplify reasoning about correctness,

but it is equally imp ortant to be able to reason about perfor-

mance. Megastore emphasizes cost-transparent APIs with

runtime costs that match application developers’ intuitions.

Normalized relational schemas rely on joins at query time

to service user operations. This is not the right model for

Megastore applications for several reasons:

• High-volume interactive workloads benefit more from

predictable performance than from an expressive query

language.

225

剩余11页未读,继续阅读

caizhanfei

- 粉丝: 324

- 资源: 9

上传资源 快速赚钱

我的内容管理

收起

我的内容管理

收起

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

会员权益专享

最新资源

- RTL8188FU-Linux-v5.7.4.2-36687.20200602.tar(20765).gz

- c++校园超市商品信息管理系统课程设计说明书(含源代码) (2).pdf

- 建筑供配电系统相关课件.pptx

- 企业管理规章制度及管理模式.doc

- vb打开摄像头.doc

- 云计算-可信计算中认证协议改进方案.pdf

- [详细完整版]单片机编程4.ppt

- c语言常用算法.pdf

- c++经典程序代码大全.pdf

- 单片机数字时钟资料.doc

- 11项目管理前沿1.0.pptx

- 基于ssm的“魅力”繁峙宣传网站的设计与实现论文.doc

- 智慧交通综合解决方案.pptx

- 建筑防潮设计-PowerPointPresentati.pptx

- SPC统计过程控制程序.pptx

- SPC统计方法基础知识.pptx

资源上传下载、课程学习等过程中有任何疑问或建议,欢迎提出宝贵意见哦~我们会及时处理!

点击此处反馈

安全验证

文档复制为VIP权益,开通VIP直接复制

信息提交成功

信息提交成功

评论3