and velocity. Three actions are available to the agent in each state: forward thrust, backward thrust, or no

thrust at all. The dynamics of the system are such that the car does not have enough thrust to simply drive

up the hill. Rather, the driver must learn to use momentum to his advantage to gain enough velocity to

successfully climb the hill. The reinforcement function is -1 for ALL state transitions except the transition

to the goal state, in which case a zero reinforcement is returned. Because the agent wishes to maximize

reinforcement, it learns to choose actions that minimize the time it takes to reach the goal state, and in so

doing learns the optimal strategy for driving the car up the hill.
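The link between this reinforcement function and minimum-time behavior can be illustrated with a minimal sketch. The function names below are hypothetical and the car's dynamics are deliberately left out; only the reinforcement scheme described above is modeled.

```python
def reinforcement(reached_goal: bool) -> int:
    """Return 0 for the transition into the goal state, -1 for every other transition."""
    return 0 if reached_goal else -1


def total_reinforcement(num_transitions: int) -> int:
    """Sum of reinforcements for an episode whose last transition reaches the goal."""
    if num_transitions < 1:
        raise ValueError("an episode needs at least one transition")
    return sum(
        reinforcement(step == num_transitions - 1)
        for step in range(num_transitions)
    )


# Two hypothetical episodes: one reaches the goal in 50 transitions, one in 120.
fast = total_reinforcement(50)
slow = total_reinforcement(120)
print(fast, slow)
```

Since every extra transition costs one unit of reinforcement, the shorter episode has the larger total, which is why maximizing reinforcement amounts to minimizing the time to reach the goal.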

Games

Thus far it has been assumed that the learning agent always attempts to maximize the reinforcement

function. This need not be the case. The learning agent could just as easily learn to minimize the

reinforcement function. This might be the case when the reinforcement is a function of limited resources

and the agent must learn to conserve these resources while achieving a goal (e.g., an airplane executing a

maneuver while conserving as much fuel as possible).

An alternative reinforcement function would be used in the context of a game environment, when there are

two or more players with opposing goals. In a game scenario, the RL system can learn to generate optimal

behavior for the players involved by finding the maximin, minimax, or saddlepoint of the reinforcement

function. For example, a missile might be given the goal of minimizing the distance to a given target (in

this case an airplane). The airplane would be given the opposing goal of maximizing the distance to the

missile. The agent would evaluate the state for each player and would choose an action independent of the

other player's action. These actions would then be executed in parallel.

Because the actions are chosen independently and executed simultaneously, the RL agent learns to choose

actions for each player that would generate the best outcome for the given player in a “worst case” scenario.

The agent will perform actions for the missile that will minimize the maximum distance to the airplane

assuming the airplane will choose the action that maximizes the same distance. The agent will perform

actions for the airplane that will maximize the minimum distance to the missile assuming the missile will

perform the action that will minimize the same distance. A more detailed discussion of this alternative can

be found in Harmon, Baird, and Klopf (1994) and Littman (1996).
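The worst-case reasoning described above can be sketched for a single decision. The distance table is invented purely for illustration (it is not taken from the cited papers), and the function names are hypothetical; in an RL system these numbers would come from the learned evaluation of the state rather than from a fixed table.

```python
# Hypothetical distances after one simultaneous move.
# Rows: missile actions. Columns: airplane actions.
DISTANCE = [
    [4.0, 7.0, 5.0],
    [3.0, 6.0, 8.0],
    [5.0, 5.5, 6.0],
]


def missile_minimax(distance):
    """Index of the missile action that minimizes the maximum distance."""
    worst_case = [max(row) for row in distance]
    return min(range(len(worst_case)), key=worst_case.__getitem__)


def airplane_maximin(distance):
    """Index of the airplane action that maximizes the minimum distance."""
    worst_case = [min(column) for column in zip(*distance)]
    return max(range(len(worst_case)), key=worst_case.__getitem__)


missile_action = missile_minimax(DISTANCE)
airplane_action = airplane_maximin(DISTANCE)
print(missile_action, airplane_action)
```

In this made-up table the missile's guaranteed worst case (6.0) differs from the airplane's (5.5), so there is no saddlepoint among these pure actions; when the two values coincide, the pair of actions is a saddlepoint.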

The Value Function

Previous sections discussed the environment and the reinforcement function. However, the issue of

how the agent learns to choose “good” actions, or even how we might measure the utility of an action, has not

been explained. First, two terms are defined. A policy determines which action should be performed in each

state; a policy is a mapping from states to actions. The value of a state is defined as the sum of the

reinforcements received when starting in that state and following some fixed policy to a terminal state. The

optimal policy would therefore be the mapping from states to actions that maximizes the sum of the

reinforcements when starting in an arbitrary state and performing actions until a terminal state is reached.

Under this definition the value of a state is dependent upon the policy. The value function is a mapping

from states to state values and can be approximated using any type of function approximator (e.g., a

multi-layered perceptron, memory-based system, radial basis functions, or look-up table).
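These two definitions can be written compactly. The notation here is an assumption made for illustration and is not taken from the text: s_t is the state at step t, π the policy, r_t the reinforcement received on the transition out of s_t, and T the step at which a terminal state is reached.

```latex
V^{\pi}(s_0) = \sum_{t=0}^{T-1} r_t
\quad \text{where } s_{t+1} \text{ results from performing } \pi(s_t) \text{ in } s_t ,
\qquad
\pi^{*} = \arg\max_{\pi} V^{\pi}(s) \ \text{ for every state } s .
```

When the policy or the environment is stochastic, the sum is replaced by its expected value.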

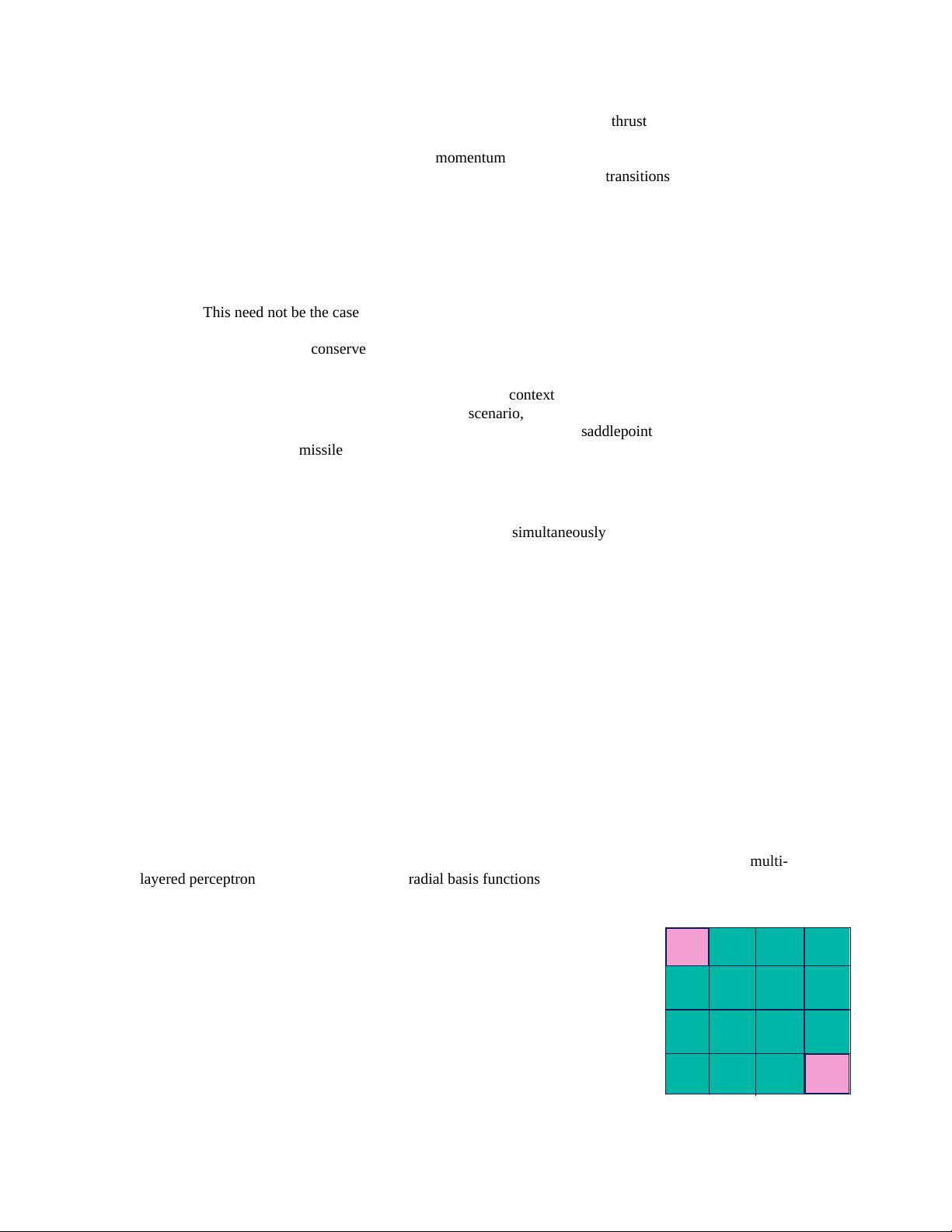

An example of a value function can be seen using a simple Markov decision process with 16 states. The

state space can be visualized using a 4x4 grid. Each square represents a state.

The reinforcement function is -1 everywhere (i.e., the agent receives a

reinforcement of -1 on each transition). There are 4 actions possible in each

state: north, south, east, west. The goal states are the upper left corner and the

lower right corner. The value function for the random policy is shown in Figure

1. For each state the random policy randomly chooses one of the four possible

actions. The numbers in the states represent the expected values of the states.

For example, when starting in the lower left corner and following a random

policy, on average there will be 22 transitions to other states before the terminal

state is reached.

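Figure 1: Value function for the random policy on the 4x4 grid. The two goal states (upper left and lower right corners) have value 0; the remaining states have values of -14, -18, -20, or -22, with -22 in the upper right and lower left corners.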
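The values for the random policy in this 4x4 example can be approximated numerically. The following is a minimal sketch using iterative policy evaluation, a technique not named in the text above. It assumes that an action which would move the agent off the grid leaves the state unchanged (the text does not specify this) and that reinforcements are summed without discounting. All names are hypothetical.

```python
N = 4
GOALS = {(0, 0), (N - 1, N - 1)}  # upper left and lower right corners
MOVES = [(-1, 0), (1, 0), (0, 1), (0, -1)]  # north, south, east, west


def step(state, move):
    """Next state; assumption: moving off the grid leaves the state unchanged."""
    row, col = state[0] + move[0], state[1] + move[1]
    if 0 <= row < N and 0 <= col < N:
        return (row, col)
    return state


def evaluate_random_policy(tolerance=1e-9):
    """Sweep the states until the value estimates stop changing."""
    values = {(r, c): 0.0 for r in range(N) for c in range(N)}
    while True:
        delta = 0.0
        for state in values:
            if state in GOALS:
                continue  # terminal states keep value 0
            # Each action has probability 1/4 and yields a reinforcement of -1.
            new_value = sum(0.25 * (-1.0 + values[step(state, m)]) for m in MOVES)
            delta = max(delta, abs(new_value - values[state]))
            values[state] = new_value
        if delta < tolerance:
            return values


values = evaluate_random_policy()
for r in range(N):
    print(" ".join(f"{values[(r, c)]:7.1f}" for c in range(N)))
```

Under these assumptions the estimate for the lower left corner converges to about -22, in agreement with the 22 expected transitions quoted above.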
