Published as a conference paper at ICLR 2020

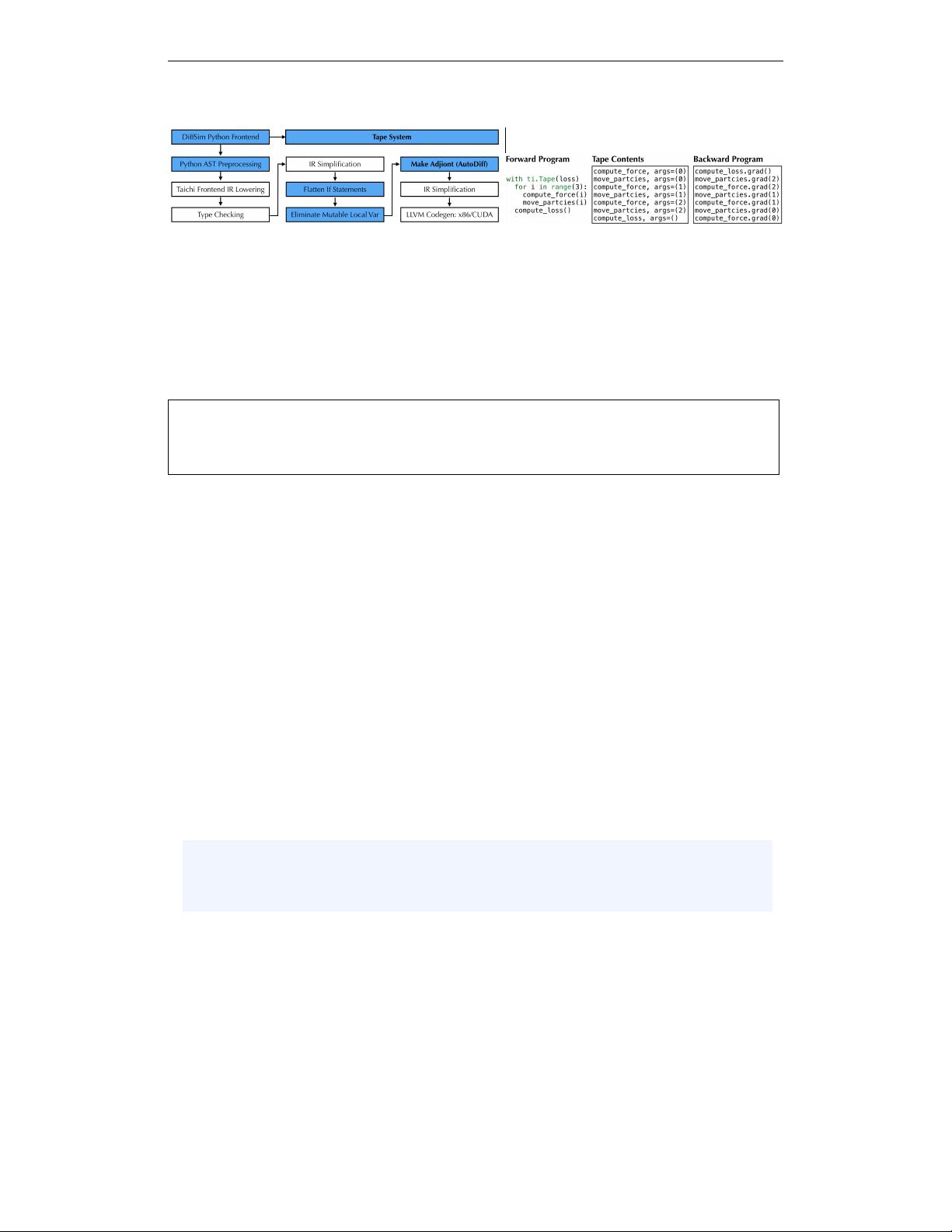

Figure 2: Left: The DiffTaichi system. We reuse some infrastructure (white boxes) from Taichi,

while the blue boxes are our extensions for differentiable programming. Right: The tape records

kernel launches and replays the gradient kernels in reverse order during backpropagation.

Assumption Unlike functional programming languages where immutable output buffers are gen-

erated, imperative programming allows programmers to freely modify global tensors. To make

automatic differentiation well-defined under this setting, we make the following assumption on im-

perative kernels:

Global Data Access Rules:

1) If a global tensor element is written more than once, then starting from the second write, the

write must come in the form of an atomic add (“accumulation”).

2) No read accesses happen to a global tensor element, until its accumulation is done.

In forward simulators, programmers may make subtle changes to satisfy the rules. For instance,

in the mass-spring simulation example, we record the whole history of x and v, instead of keep-

ing only the latest values. The memory consumption issues caused by this can be alleviated via

checkpointing, as discussed later in Appendix D.

With these assumptions, kernels will not overwrite the outputs of each other, and the goal of AD is

clear: given a primal kernel f that takes as input X

1

, X

2

, . . . , X

n

and outputs (or accumulates to)

Y

1

, Y

2

, . . . , Y

m

, the generated gradient (adjoint) kernel f

∗

should take as input X

1

, X

2

, . . . , X

n

and

Y

∗

1

, Y

∗

2

, . . . , Y

∗

m

and accumulate gradient contributions to X

∗

1

, X

∗

2

, . . . , X

∗

m

, where each X

∗

i

is an

adjoint of X

i

, i.e. ∂(loss)/∂X

i

.

Storage Control of Adjoint Tensors Users can specify the storage of adjoint tensors using the

Taichi data structure description language (Hu et al., 2019a), as if they are primal tensors. We also

provide ti.root.lazy_grad() to automatically place the adjoint tensors following the layout of their

primals.

3.1 LOCAL AD: DIFFERENTIATING TAICHI KERNELS USING SOURCE CODE TRANSFORMS

A typical Taichi kernel consists of multiple levels of for loops and a body block. To make later AD

easier, we introduce two basic code transforms to simplify the loop body, as detailed below.

int a = 0;

if (b > 0) { a = b;}

else { a = 2b;}

a = a + 1;

return a;

// flatten branching

int a = 0;

a = select(b > 0, b, 2b);

a = a + 1

return a;

// eliminate mutable var

ssa1 = select(b > 0, b, 2b);

ssa2 = ssa1 + 1

return ssa2;

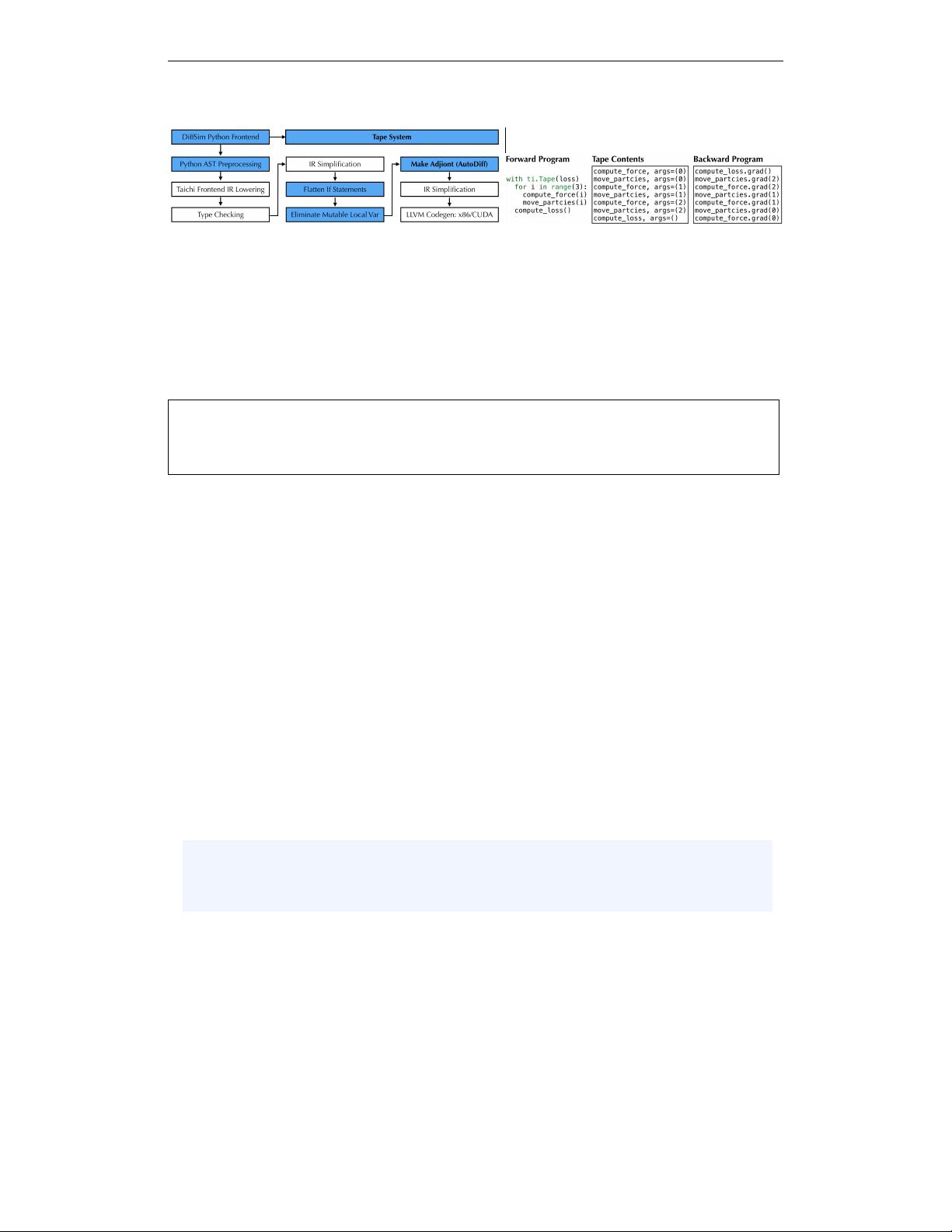

Figure 3: Simple IR preprocessing before running the AD source code transform (left to right).

Demonstrated in C++. The actual Taichi IR is often more complex. Containing loops are ignored.

Flatten Branching In physical simulation branches are common, e.g., when implementing bound-

ary conditions and collisions. To simplify the reverse-mode AD pass, we first replace “if” statements

with ternary operators select(cond, value_if_true, value_if_false), whose gradients are clearly de-

fined (Fig. 3, middle). This is a common transformation in program vectorization (e.g. Karrenberg

& Hack (2011); Pharr & Mark (2012)).

Eliminate Mutable Local Variables After removing branching, we end up with straight-line loop

bodies. To further simplify the IR and make the procedure truly single-assignment, we apply a

4